MINIF2F-DAFNY: LLM-Guided Mathematical Theorem Proving via Auto-Active Verification

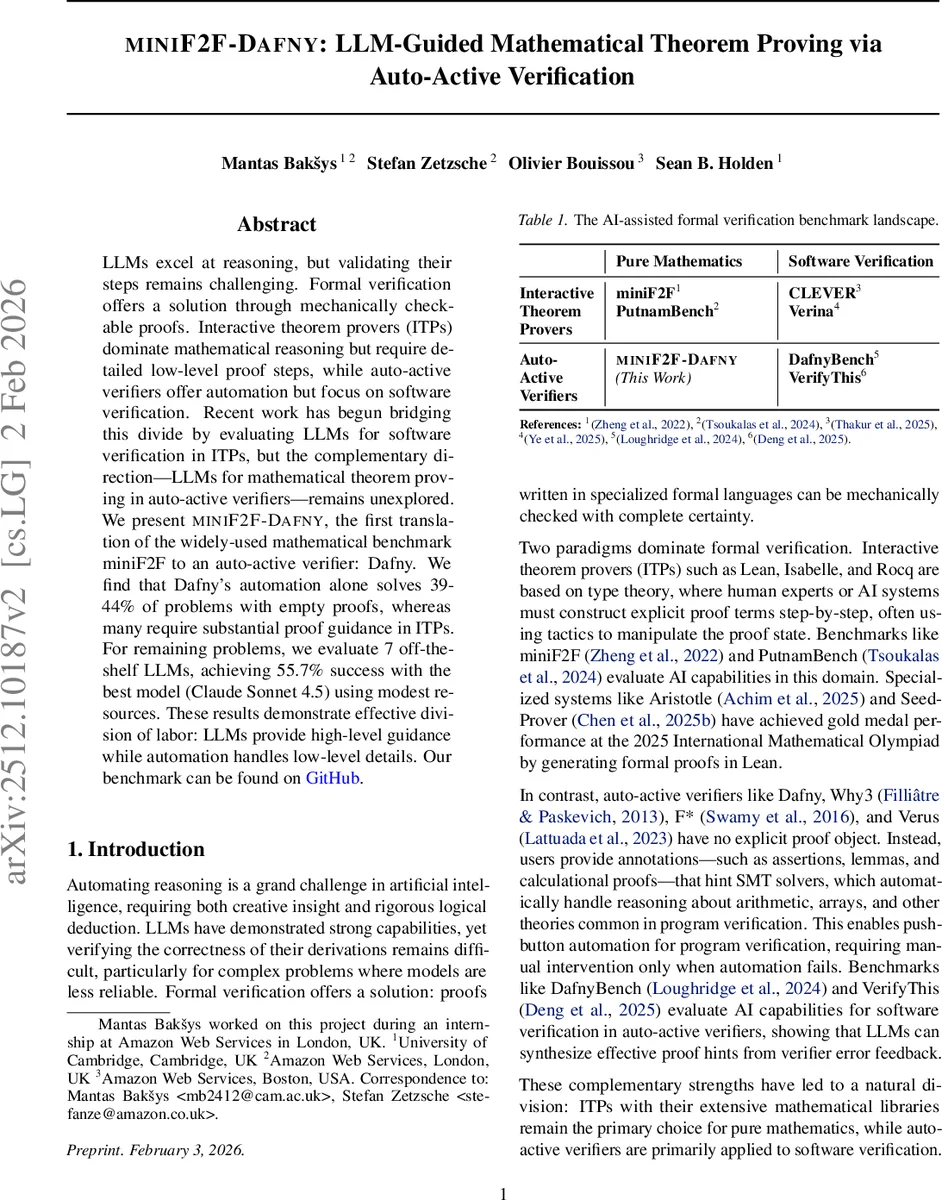

LLMs excel at reasoning, but validating their steps remains challenging. Formal verification offers a solution through mechanically checkable proofs. Interactive theorem provers (ITPs) dominate mathematical reasoning but require detailed low-level proof steps, while auto-active verifiers offer automation but focus on software verification. Recent work has begun bridging this divide by evaluating LLMs for software verification in ITPs, but the complementary direction–LLMs for mathematical theorem proving in auto-active verifiers–remains unexplored. We present MINIF2F-DAFNY, the first translation of the widely-used mathematical benchmark miniF2F to an auto-active verifier: Dafny. We find that Dafny’s automation alone solves 39-44% of problems with empty proofs, whereas many require substantial proof guidance in ITPs. For remaining problems, we evaluate 7 off-the-shelf LLMs, achieving 55.7% success with the best model (Claude Sonnet 4.5) using modest resources. These results demonstrate effective division of labor: LLMs provide high-level guidance while automation handles low-level details. Our benchmark can be found on GitHub at http://github.com/dafny-lang/miniF2F .

💡 Research Summary

This paper introduces MINIF2F‑DAFNY, the first translation of the widely used mathematical benchmark miniF2F into the auto‑active verifier Dafny. The authors begin by describing the two dominant paradigms in formal verification: interactive theorem provers (ITPs) such as Lean, Isabelle, and Coq, which require users to construct explicit proof terms step‑by‑step, and auto‑active verifiers like Dafny, Why3, and F*, which rely on annotations and SMT solvers to discharge low‑level reasoning automatically. While recent work has explored using large language models (LLMs) to assist software verification in ITPs, the opposite direction—employing LLMs to guide mathematical theorem proving in auto‑active verifiers—has not been investigated.

To fill this gap, the authors translate all 488 problems of miniF2F (244 test, 244 validation) into Dafny lemmas. Each lemma contains preconditions (requires) and postconditions (ensures) but an empty proof body. Supporting files definitions.dfy and library.dfy provide a compact axiomatization of integers, rationals, reals, and complex numbers, together with 174 standard lemmas (e.g., logarithm properties, number‑theoretic facts). The axioms are deliberately minimal to keep the SMT search space manageable while still expressive enough to state every miniF2F problem.

The first experimental phase evaluates Dafny’s built‑in automation alone. Dafny translates a lemma into Boogie, generates verification conditions (VCs), and hands them to the Z3 SMT solver. Without any user‑supplied hints, Dafny automatically verifies 95 of the 244 test problems (38.9 %) and 106 of the 244 validation problems (43.4 %). This outperforms Lean’s strongest SMT‑style tactic, grind, which solves only 79 of 244 (32.4 %). The result demonstrates that a substantial portion of miniF2F consists of routine arithmetic and logical steps that SMT solvers can handle without sophisticated proof insight.

For the remaining problems, the authors evaluate seven off‑the‑shelf LLMs (Claude Sonnet 4.5, GPT‑4‑Turbo, Gemini 1.5‑Flash, Claude Opus, Llama 2‑70B, Mistral‑Large, and Gemini 1.0‑Pro). The LLMs receive a prompt containing the problem statement, the current Dafny lemma, and a request to produce proof hints in the form of Dafny ghost code (assert, calc, auxiliary lemma calls). The generated hints are then fed back to Dafny, which attempts to complete the verification using its SMT backend. The best model, Claude Sonnet 4.5, achieves a 55.7 % success rate across the combined test and validation sets. While this is lower than state‑of‑the‑art ITP‑focused approaches that employ specialized fine‑tuned models and multi‑agent frameworks, those approaches also consume substantially more compute and rely on extensive mathematical libraries.

A qualitative analysis shows that LLM‑generated proofs are markedly shorter and more readable than typical ITP scripts. On average, the Dafny proofs with LLM hints consist of fewer than 30 lines, whereas Lean proofs for the same problems often exceed 100 lines of tactic invocations. This brevity stems from Dafny’s “no explicit proof object” design: the SMT solver implicitly carries out low‑level deductions, leaving the human‑or‑LLM to supply only high‑level structural guidance.

The paper’s contributions are fourfold: (1) the release of MINIF2F‑DAFNY, a new benchmark linking pure mathematics to an auto‑active verifier; (2) baseline measurements showing that Dafny’s automation alone solves roughly 40 % of miniF2F problems; (3) an empirical study of seven general‑purpose LLMs providing proof hints, with the top model reaching 55.7 % success; and (4) evidence that a division of labor—automation handling low‑level logical steps, LLMs providing high‑level strategies—produces shorter, more human‑friendly proofs.

The authors discuss several avenues for future work. First, a more rigorous soundness audit of the axiomatized libraries is needed; they currently rely on manual spot‑checking of a random subset of problems. Second, prompt engineering and few‑shot examples could be refined to improve LLM hint quality. Third, integrating multi‑step reasoning agents that can iteratively query Dafny’s error messages may raise success rates further. Finally, extending the benchmark to richer mathematical domains (measure theory, topology, etc.) would test the limits of auto‑active verification and guide the development of richer mathematical libraries for SMT‑based provers.

Overall, the study demonstrates that auto‑active verifiers like Dafny, when paired with modern LLMs, constitute a viable and efficient platform for automated mathematical theorem proving, offering a complementary alternative to the traditional ITP‑centric paradigm.

Comments & Academic Discussion

Loading comments...

Leave a Comment