The T12 System for AudioMOS Challenge 2025: Audio Aesthetics Score Prediction System Using KAN- and VERSA-based Models

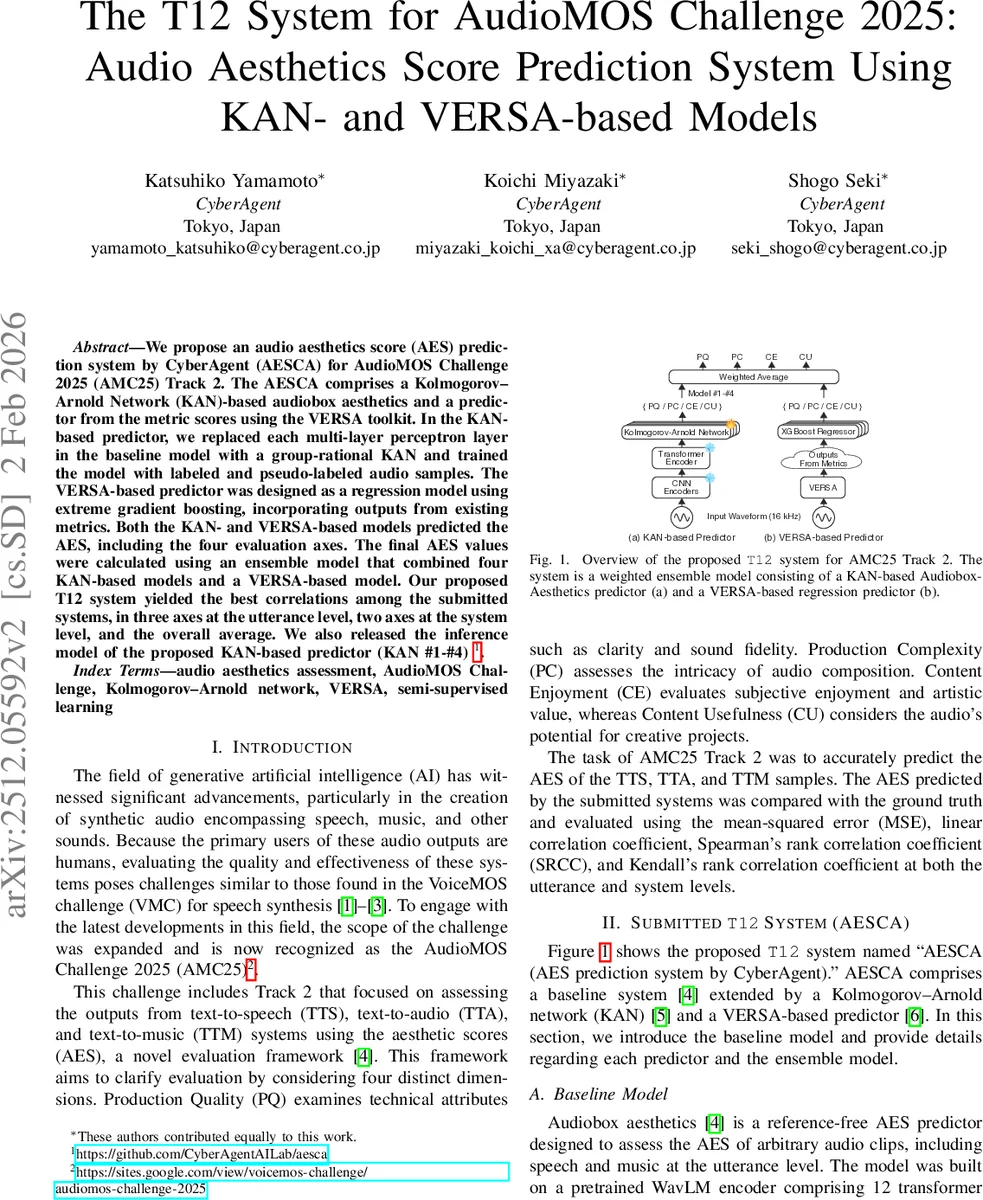

We propose an audio aesthetics score (AES) prediction system by CyberAgent (AESCA) for AudioMOS Challenge 2025 (AMC25) Track 2. The AESCA comprises a Kolmogorov–Arnold Network (KAN)-based audiobox aesthetics and a predictor from the metric scores using the VERSA toolkit. In the KAN-based predictor, we replaced each multi-layer perceptron layer in the baseline model with a group-rational KAN and trained the model with labeled and pseudo-labeled audio samples. The VERSA-based predictor was designed as a regression model using extreme gradient boosting, incorporating outputs from existing metrics. Both the KAN- and VERSA-based models predicted the AES, including the four evaluation axes. The final AES values were calculated using an ensemble model that combined four KAN-based models and a VERSA-based model. Our proposed T12 system yielded the best correlations among the submitted systems, in three axes at the utterance level, two axes at the system level, and the overall average. We also released the inference model of the proposed KAN-based predictor (KAN #1-#4).

💡 Research Summary

**

The paper presents a comprehensive solution for the AudioMOS Challenge 2025 (AMC25) Track 2, where the task is to predict Audio Aesthetics Scores (AES) for synthetic audio generated by text‑to‑speech (TTS), text‑to‑audio (TTA), and text‑to‑music (TTM) systems. AES consists of four orthogonal dimensions: Production Quality (PQ), Production Complexity (PC), Content Enjoyment (CE), and Content Usefulness (CU). The proposed system, named AESCA (AES prediction system by CyberAgent), combines two complementary predictors: a Kolmogorov‑Arnold Network (KAN)‑based Audiobox Aesthetics model and a VERSA‑based regression model.

Baseline Model

The starting point is the publicly released Audiobox Aesthetics model, which uses a pretrained WavLM encoder (12‑layer Transformer, hidden size 768) to extract frame‑level representations from 16 kHz waveforms. The encoder outputs are weighted across layers, aggregated over time, and fed into four independent multilayer perceptrons (MLPs), each regressing one AES dimension. The baseline was pretrained on ~500 h of audio (≈97 k samples) covering speech, music, and environmental sounds.

KAN‑Based Predictor

The authors replace each MLP in the baseline with a Group‑Rational KAN (GR‑KAN) layer. KAN augments neural networks by learning a rational‑function activation for every input‑output pair, dramatically increasing expressive power while preserving compatibility with pretrained weights. Consequently, the KAN‑based predictor inherits the knowledge encoded in the baseline’s weights but can model more complex nonlinear relationships. Four separate KAN heads predict PQ, PC, CE, and CU respectively.

VERSA‑Based Predictor

VERSA is a versatile evaluation toolkit that aggregates a wide range of reference‑free audio quality metrics, such as DNS‑MOS, NISQA, UTMOSv2, TORCHAUDIO‑SQIM, and the Prompted Audio‑Language Model (PAM). The authors extract 28 scalar metric outputs and feed them into an XGBoost regression model that jointly predicts the four AES dimensions. This predictor captures complementary information that may be missed by the purely neural KAN model, especially domain‑specific cues present in music or complex soundscapes.

Data and Pre‑processing

Training data comprise the AMC25 Track 2 set (2,700 labeled samples + 250 dev samples) augmented with the PAM dataset (900 train + 100 dev samples) and a large pool of unlabeled speech from the VoiceMOS Challenge 2022 (VMC22, 8,459 samples). The unlabeled set is used for semi‑supervised learning via pseudo‑labeling. All audio is resampled to 16 kHz, and missing samples are removed.

Training Strategies

Fine‑tuning (FT) – The KAN model is fine‑tuned on the labeled AMC25 + PAM data for 10 epochs (batch = 40, lr = 1e‑4, AdamW).

Iterative Pseudo‑Labeling (IPL) – An initial “teacher” model (the baseline) generates pseudo‑labels for the unlabeled VMC22 data. These pseudo‑labels are merged with the labeled sets, and a “student” KAN model is trained. If the student achieves lower dev loss, it becomes the new teacher. The process repeats up to five iterations, typically resulting in three updates before convergence.

The VERSA predictor is trained on the same labeled data using 10‑fold cross‑validation, with Optuna optimizing XGBoost hyper‑parameters to minimize MSE.

Ensemble Construction

Four KAN models are trained with different random seeds (#1‑#4). Their outputs and the VERSA prediction are combined via a weighted average (stacking). Grid search determines the optimal weights, yielding a final ensemble that balances the strengths of each component.

Experimental Results

-

Baseline vs KAN: KAN improves SRCC on all four axes (ΔSRCC ≈ 0.02–0.07). Adding PAM data further reduces MSE for PQ, CE, and CU, indicating that the extra music‑related samples enrich the model’s understanding of content dimensions.

-

VERSA: Shows competitive MSE and SRCC for CE and CU, but underperforms on PQ and PC, likely because those dimensions rely more on low‑level acoustic fidelity captured better by the neural encoder.

-

FT vs IPL: IPL yields lower MSE and slightly higher SRCC than FT, especially when the unlabeled VMC22 data are incorporated. The semi‑supervised approach stabilizes training and mitigates overfitting on the modest labeled set.

-

Final Ensemble (T12): At the utterance level, the ensemble achieves the highest SRCC on PQ, CE, and CU among all submitted systems; at the system level, it leads on CE and CU; overall average SRCC is also top‑ranked. MSE remains higher than some competitors due to the inverse transformation of target variables and outliers in TTM predictions, highlighting a remaining area for improvement.

Conclusion and Future Work

AESCA demonstrates that integrating a high‑capacity KAN backbone with a metric‑driven VERSA regressor, and training the former with iterative pseudo‑labeling, yields state‑of‑the‑art correlation performance across diverse audio domains. The work validates KAN’s suitability for audio quality regression and showcases the value of semi‑supervised learning when labeled data are scarce. Future directions include refining pseudo‑label quality (e.g., confidence‑weighted labeling), exploring automated metric selection for VERSA, applying target scaling or robust loss functions to reduce MSE, and developing lightweight versions of the KAN predictor for real‑time deployment.

Comments & Academic Discussion

Loading comments...

Leave a Comment