SciTextures: Collecting and Connecting Visual Patterns, Models, and Code Across Science and Art

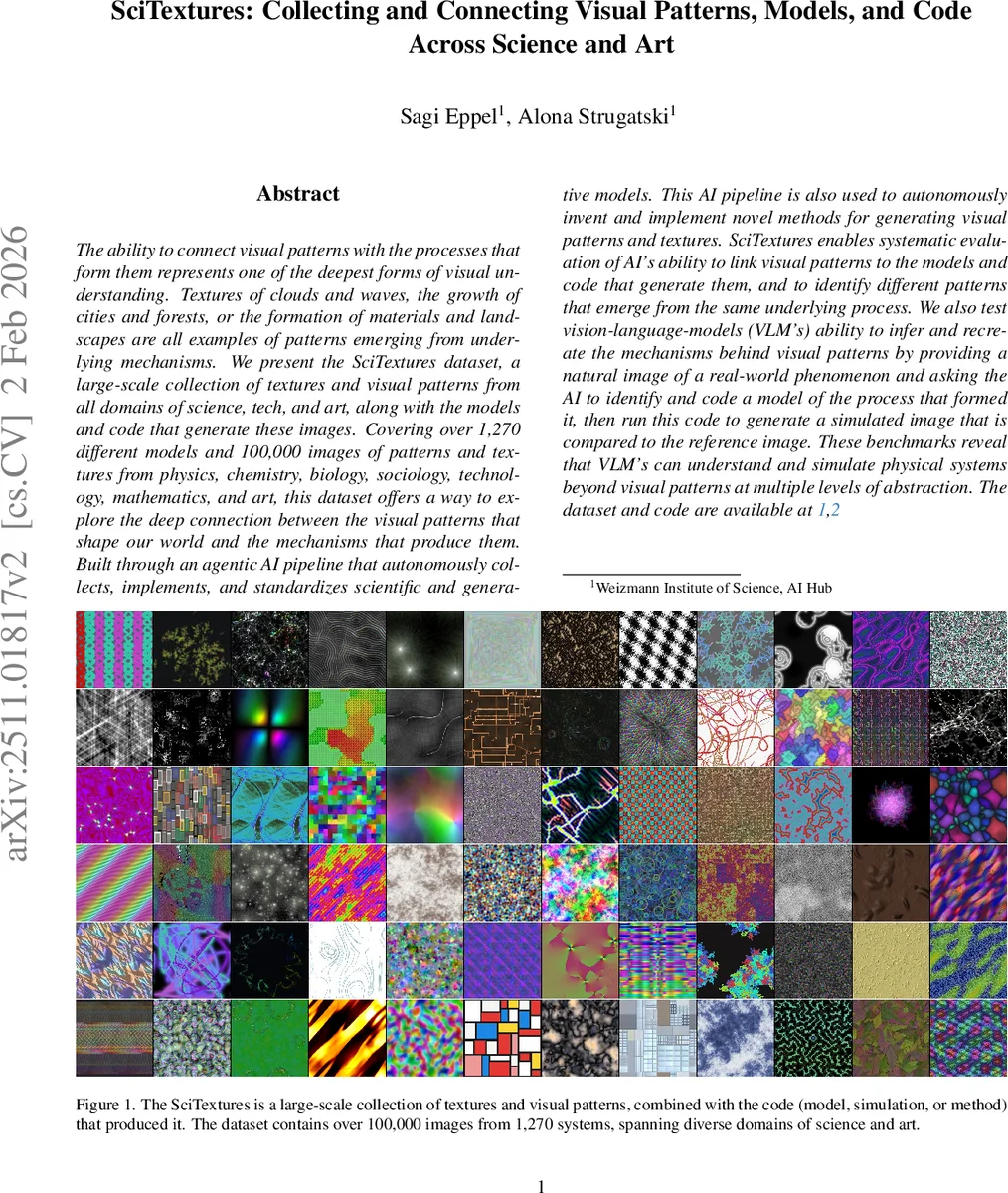

The ability to connect visual patterns with the processes that form them represents one of the deepest forms of visual understanding. Textures of clouds and waves, the growth of cities and forests, or the formation of materials and landscapes are all examples of patterns emerging from underlying mechanisms. We present the SciTextures dataset, a large-scale collection of textures and visual patterns from all domains of science, tech, and art, along with the models and code that generate these images. Covering over 1,270 different models and 100,000 images of patterns and textures from physics, chemistry, biology, sociology, technology, mathematics, and art, this dataset offers a way to explore the deep connection between the visual patterns that shape our world and the mechanisms that produce them. Built through an agentic AI pipeline that autonomously collects, implements, and standardizes scientific and generative models. This AI pipeline is also used to autonomously invent and implement novel methods for generating visual patterns and textures. SciTextures enables systematic evaluation of vision language models (VLM’s) ability to link visual patterns to the models and code that generate them, and to identify different patterns that emerge from the same underlying process. We also test VLMs ability to infer and recreate the mechanisms behind visual patterns by providing a natural image of a real-world phenomenon and asking the AI to identify and code a model of the process that formed it, then run this code to generate a simulated image that is compared to the reference image. These benchmarks reveal that VLM’s can understand and simulate physical systems beyond visual patterns at multiple levels of abstraction. The dataset and code are available at: https://zenodo.org/records/17485502

💡 Research Summary

SciTextures introduces a massive, cross‑disciplinary dataset that pairs visual textures and patterns with the underlying generative models and executable code that produce them. Covering over 1,270 distinct scientific, technological, and artistic models—from the Ising model and Conway’s Game of Life to city growth simulations, chemical reaction networks, and biological morphogenesis—the collection contains more than 100 000 images, each accompanied by standardized Python or shader code capable of generating arbitrary‑resolution outputs.

The dataset is built through an autonomous “agentic AI” pipeline. A GPT‑5‑based agent first proposes candidate models, drawing on published literature, open‑source repositories, or novel ideas inferred from natural images. It then implements each proposal as clean, reusable code, runs automated debugging, and validates the generated images both automatically (e.g., detecting uniform or noisy outputs) and manually (checking for diversity and tileability). Multiple large‑language models (Claude 4.5, DeepSeek R1, etc.) perform iterative inspections to catch conceptual errors. A small human audit of 50 sampled scripts confirmed the pipeline’s reliability.

To assess how well vision‑language models (VLMs) can link visual patterns to their generating processes, the authors define three benchmark tasks. Im2Code asks a VLM to select which image(s) were produced by a given piece of code (with or without comments). Im2Im requires the model to identify images generated by the same underlying process among a set of distractors. Im2Sim2Im is the most ambitious: given a real‑world photograph of a pattern, the VLM must infer the physical or biological mechanism, write executable code that simulates it, run the code, and then match the synthetic output to the original photograph.

Evaluation across ten state‑of‑the‑art VLMs (GPT‑5, GPT‑5‑mini, Gemini‑2.5‑flash/pro, Qwen2.5‑VL‑72B, Llama‑4 variants, Grok‑4, etc.) shows a clear hierarchy. GPT‑5 achieves the highest scores—58 % on Im2Desc (text‑to‑image matching), 50 % on Im2Code, and 44 % on Im2Sim2Im—while human evaluators reach 82 % on the same Im2Sim2Im matching task, indicating that current models still lag behind human intuition. Performance drops markedly when code comments are removed, highlighting that VLMs rely heavily on natural‑language cues rather than pure code structure. Other models hover around random‑guess levels (≈10 % baseline) for the most complex task, underscoring the difficulty of mechanistic inference from visual data.

Model fidelity within the dataset is categorized into five levels (Accurate 4 %, Good Approximation 42 %, Toy 40 %, Weak Approximation 1 %, Inspired 13 %). Most entries fall into the “Toy” category, meaning they capture qualitative behavior without strict quantitative accuracy. This design choice favors visual richness and tileability over precise physical realism, which in turn influences benchmark outcomes.

The paper’s contributions are threefold: (1) providing the first large‑scale, domain‑general texture‑pattern‑model dataset; (2) demonstrating an autonomous pipeline that can discover, implement, and validate thousands of generative models with minimal human oversight; (3) introducing novel VLM evaluation protocols that move beyond surface‑level pattern classification toward deeper mechanistic understanding and simulation. The authors argue that these resources open a new research frontier where AI systems are judged not only on recognizing patterns but also on reconstructing the underlying processes that generate them. Future work should aim to increase model fidelity, incorporate more rigorous physical simulations, and develop VLMs capable of reliable code synthesis and execution, thereby bridging the gap between visual perception and scientific reasoning.

Comments & Academic Discussion

Loading comments...

Leave a Comment