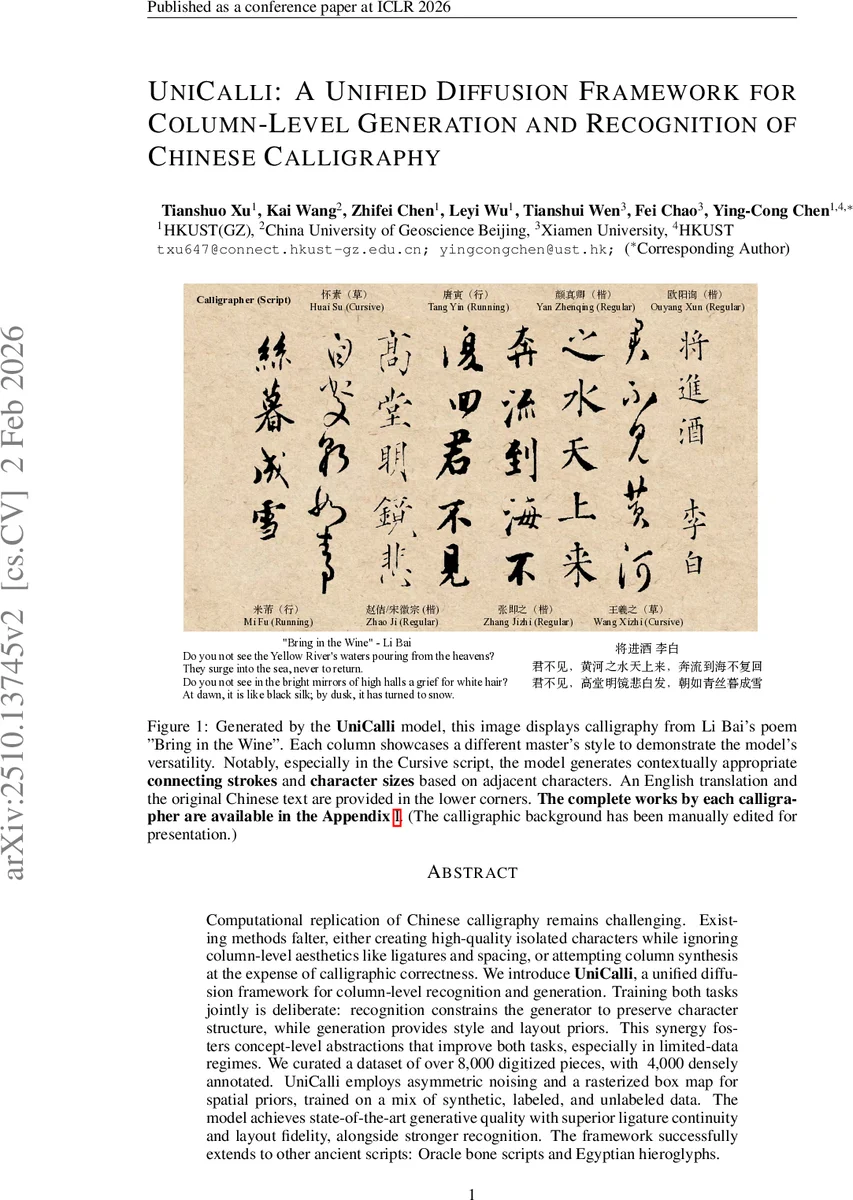

UniCalli: A Unified Diffusion Framework for Column-Level Generation and Recognition of Chinese Calligraphy

Computational replication of Chinese calligraphy remains challenging. Existing methods falter, either creating high-quality isolated characters while ignoring page-level aesthetics like ligatures and spacing, or attempting page synthesis at the expense of calligraphic correctness. We introduce \textbf{UniCalli}, a unified diffusion framework for column-level recognition and generation. Training both tasks jointly is deliberate: recognition constrains the generator to preserve character structure, while generation provides style and layout priors. This synergy fosters concept-level abstractions that improve both tasks, especially in limited-data regimes. We curated a dataset of over 8,000 digitized pieces, with ~4,000 densely annotated. UniCalli employs asymmetric noising and a rasterized box map for spatial priors, trained on a mix of synthetic, labeled, and unlabeled data. The model achieves state-of-the-art generative quality with superior ligature continuity and layout fidelity, alongside stronger recognition. The framework successfully extends to other ancient scripts, including Oracle bone inscriptions and Egyptian hieroglyphs. Code and data can be viewed in \href{https://github.com/EnVision-Research/UniCalli}{this URL}.

💡 Research Summary

**

The paper introduces UniCalli, a unified diffusion‑based framework that simultaneously tackles column‑level Chinese calligraphy generation and recognition. Existing approaches either excel at isolated‑character synthesis while neglecting page‑level aesthetics (ligatures, spacing, rhythm) or attempt full‑page generation at the cost of character correctness. UniCalli bridges this gap by jointly training a single model on both tasks, allowing each to regularize the other: the recognition objective forces the generator to preserve glyph structure, while the generative objective supplies strong style and layout priors that improve recognition robustness, especially under limited‑label conditions.

Dataset – The authors curate a new corpus of more than 8,000 digitized calligraphy works spanning 93 historical masters. Over 4,000 pieces are densely annotated with script type (regular, running, cursive), per‑character bounding boxes, and modern Chinese transcriptions. This dataset provides a rare combination of high‑quality visual data and fine‑grained spatial annotations, enabling column‑level modeling.

Methodology – UniCalli builds on the Multimodal Diffusion Transformer (MMDiT) backbone but departs from causal autoregressive generation. Instead, it employs asymmetric noising: a clean “standard‑font” latent (z_c) and a noisy calligraphy latent (z_i) are processed together. For generation, t_c = 0 (condition) and t_i is sampled, so the model denoises the image and a rasterized box‑map (z_m) conditioned on the clean text. For recognition, the roles are swapped (t_i = 0, t_c sampled), forcing the model to reconstruct the text latent from the image. This dual‑mode training yields a bidirectional mapping between content and visual form.

Spatial Priors – A rasterized bounding‑box map is introduced as an explicit spatial prior. The map encodes each character’s position and scale within the vertical column, guiding the model to learn realistic inter‑character spacing, size variation, and ligature formation.

Duplicate RoPE – To fuse spatial information across the three modalities (content, image, box‑map), the authors compute a Rotary Positional Embedding (RoPE) from the image latent, duplicate it for the other two latents, and add modality‑specific learnable modulation embeddings. This creates a shared coordinate system while preserving modality identity, enabling the transformer to attend jointly to “what”, “where”, and “how” each glyph should appear.

Training – The loss combines flow‑matching terms for image (L_img), box map (L_box), and content (L_cond). In generation mode the objective is L_img + L_box + λ L_cond; in recognition mode it is L_cond + λ(L_img + L_box), with λ = 0.02. Conditional dropout randomly replaces the content condition with pure noise, preventing over‑fitting to rare styles and encouraging the model to rely on visual cues for glyph fidelity. The training mix includes labeled, synthetic (font‑based), and unlabeled data, improving robustness to long‑tail style distributions.

Results – Quantitatively, UniCalli achieves state‑of‑the‑art generative quality: lower FID and LPIPS scores than CalliPaint, Moyun, and CalliffusionV2, and human evaluators rate its ligature continuity and column rhythm highest. For recognition, it attains a character error rate (CER) of 4.2 %, comparable to specialized recognizers such as OracleNet and CalliReader. Notably, in few‑shot experiments with only 10 % of the annotations, performance degrades minimally, demonstrating the benefit of shared concept‑level abstractions (radicals, strokes).

Generalization – The same architecture and training schedule are applied without modification to Oracle bone script and Egyptian hieroglyphs. The model successfully reproduces the distinct structural conventions of these ancient scripts, indicating that UniCalli learns script‑agnostic spatial‑visual representations.

Limitations & Future Work – Current work focuses on vertical columns; extending to horizontal layouts or mixed‑orientation pages will require additional layout modeling. The need for dense bounding‑box annotations poses a scalability bottleneck; the authors suggest active‑learning or semi‑automatic box‑map generation to alleviate this. Exploring multi‑scale RoPE and hierarchical diffusion steps could further improve fine‑grained stroke detail.

Contributions – (1) A large, richly annotated Chinese calligraphy dataset; (2) UniCalli, the first unified diffusion model for column‑level generation and recognition; (3) Demonstrated SOTA generative fidelity, competitive recognition, and cross‑script generalization. The work opens avenues for digital preservation, scholarly analysis of ancient scripts, and creative AI‑assisted calligraphy tools.

Comments & Academic Discussion

Loading comments...

Leave a Comment