Standard-to-Dialect Transfer Trends Differ across Text and Speech: A Case Study on Intent and Topic Classification in German Dialects

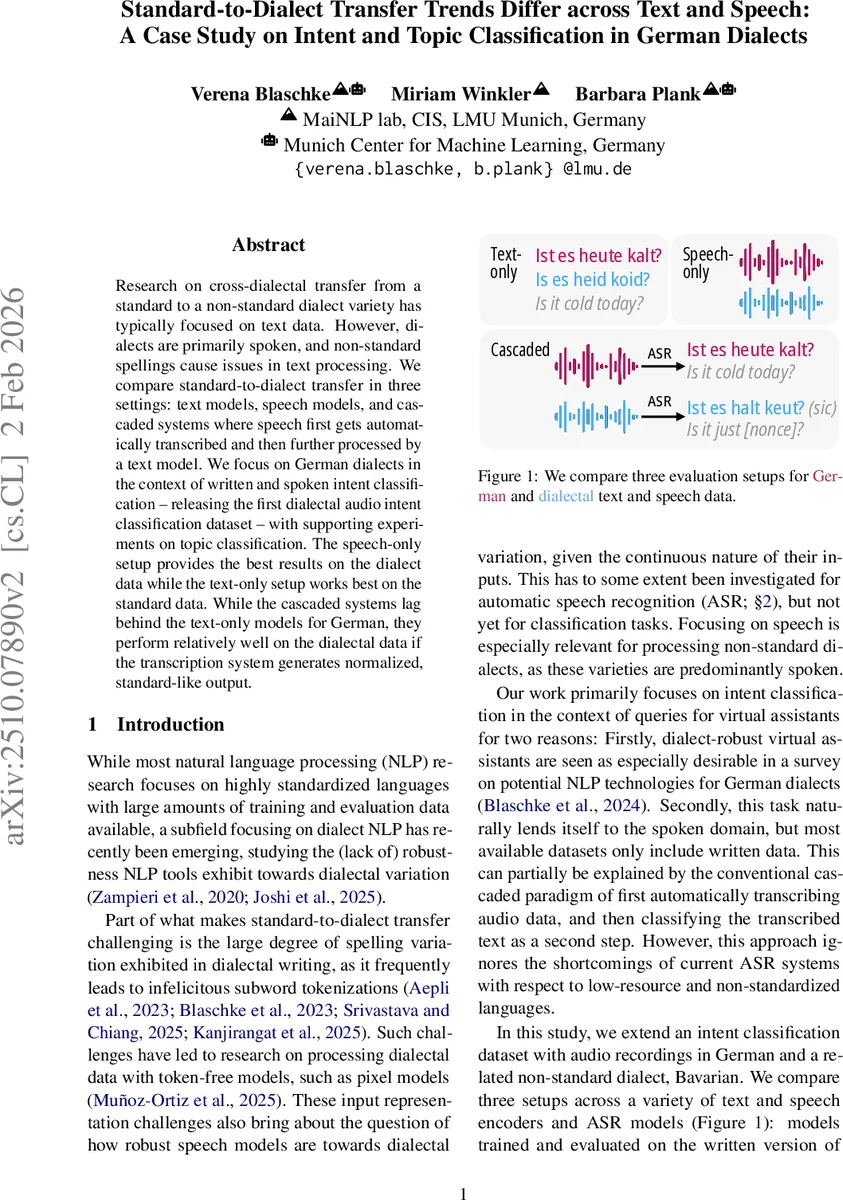

Research on cross-dialectal transfer from a standard to a non-standard dialect variety has typically focused on text data. However, dialects are primarily spoken, and non-standard spellings cause issues in text processing. We compare standard-to-dialect transfer in three settings: text models, speech models, and cascaded systems where speech first gets automatically transcribed and then further processed by a text model. We focus on German dialects in the context of written and spoken intent classification – releasing the first dialectal audio intent classification dataset – with supporting experiments on topic classification. The speech-only setup provides the best results on the dialect data while the text-only setup works best on the standard data. While the cascaded systems lag behind the text-only models for German, they perform relatively well on the dialectal data if the transcription system generates normalized, standard-like output.

💡 Research Summary

This paper investigates the often‑overlooked problem of transferring NLP models trained on a standard language to its non‑standard dialects, explicitly comparing three processing pipelines: (1) text‑only models that are fine‑tuned on written data, (2) speech‑only models that directly ingest audio, and (3) cascaded systems that first run automatic speech recognition (ASR) and then apply a text classifier. The authors focus on German and its closely related Bavarian dialect, evaluating both intent classification (the primary task) and a supporting topic‑classification experiment with Swiss German.

Data: The study builds on existing resources (MASSIVE and Speech‑MASSIVE) for standard German, and extends the xSID dataset by recording Bavarian utterances in‑house, thereby creating the first publicly released Bavarian audio intent‑classification corpus. For the supporting experiment, the SwissDial corpus provides parallel Standard German and eight Swiss‑German dialects, with both written and spoken forms for the dialects.

Models: A range of pretrained multilingual encoders are used for the text‑only condition (mBERT, XLM‑R base/large, mDeBERTa‑v3) and for the speech‑only condition (Whisper tiny, base, small, medium, large‑v3, large‑v3‑turbo, XLS‑R 300 M, XLS‑R fine‑tuned for German, MMS 300 M). All models contain German in their pre‑training data; only mBERT also sees Bavarian tokens. For the cascaded condition, the same text classifiers are fed with transcriptions generated by a suite of ASR models (the same speech encoders used as ASR, plus larger Whisper and XLS‑R 1 B variants). No additional fine‑tuning of ASR is performed; German is set as the target language.

Experiments: Three setups are evaluated on both the standard and dialect test splits. Accuracy is reported as the primary metric, averaged over three random seeds. The paper also measures word/character error rates for ASR and conducts a manual quality check of transcriptions (fluency, meaning preservation, keyword retention).

Key Findings:

- Text‑only superiority on standard German – Across all text encoders, accuracy on German reaches ~93 % (far above the 29 % majority‑class baseline), confirming that modern pretrained models excel when the input conforms to standard orthography.

- Severe degradation on Bavarian text – The same models drop to the mid‑50 % range, reflecting the impact of non‑standard spelling, sub‑word fragmentation, and lexical divergence.

- Speech‑only models excel on dialect – Even though speech‑only systems lag behind text‑only on German (≈70 % vs. 93 %), they achieve ~80 % accuracy on Bavarian, surpassing all text‑based approaches. This suggests that acoustic cues capture dialectal variation more robustly than noisy orthographic representations.

- Cascaded performance hinges on ASR normalization – When ASR outputs are close to standard German (e.g., Whisper large‑v3‑turbo, which tends to “normalize” dialectal speech), cascaded models can outperform text‑only on Bavarian (+5 % absolute). Conversely, ASR systems that preserve dialectal forms or produce many errors lead to cascaded accuracies comparable to or worse than speech‑only.

- Consistent trends across tasks – The supporting Swiss German topic‑classification experiment mirrors the intent results: text‑only dominates on Standard German, speech‑only dominates on dialect, and cascaded models only catch up when ASR normalizes well.

Implications: The study challenges the default pipeline of “ASR → text classifier” for dialectal speech. For dialects that are primarily spoken and lack standardized orthography, directly using speech encoders can be more effective. When a cascaded approach is required (e.g., for downstream text‑centric pipelines), selecting an ASR model that performs strong dialect‑to‑standard normalization is crucial. The authors also highlight that token‑free or pixel‑based models, which have shown promise for noisy orthography, could be explored further for dialectal text.

Contributions:

- Release of a Bavarian audio intent‑classification dataset (train/dev/test splits, 2.5 k/459/361 German instances and 412 Bavarian test instances).

- Systematic empirical comparison of text‑only, speech‑only, and cascaded pipelines across multiple model sizes and ASR systems.

- Demonstration that findings from standard‑language text transfer do not automatically extend to closely related dialects, especially in the spoken domain.

Overall, the paper provides a comprehensive benchmark and practical guidance for researchers and engineers aiming to build robust NLP systems that serve speakers of non‑standard dialects, emphasizing the need to reconsider the dominance of text‑centric pipelines in favor of speech‑centric or well‑normalized ASR solutions.

Comments & Academic Discussion

Loading comments...

Leave a Comment