How to Train Your Advisor: Steering Black-Box LLMs with Advisor Models

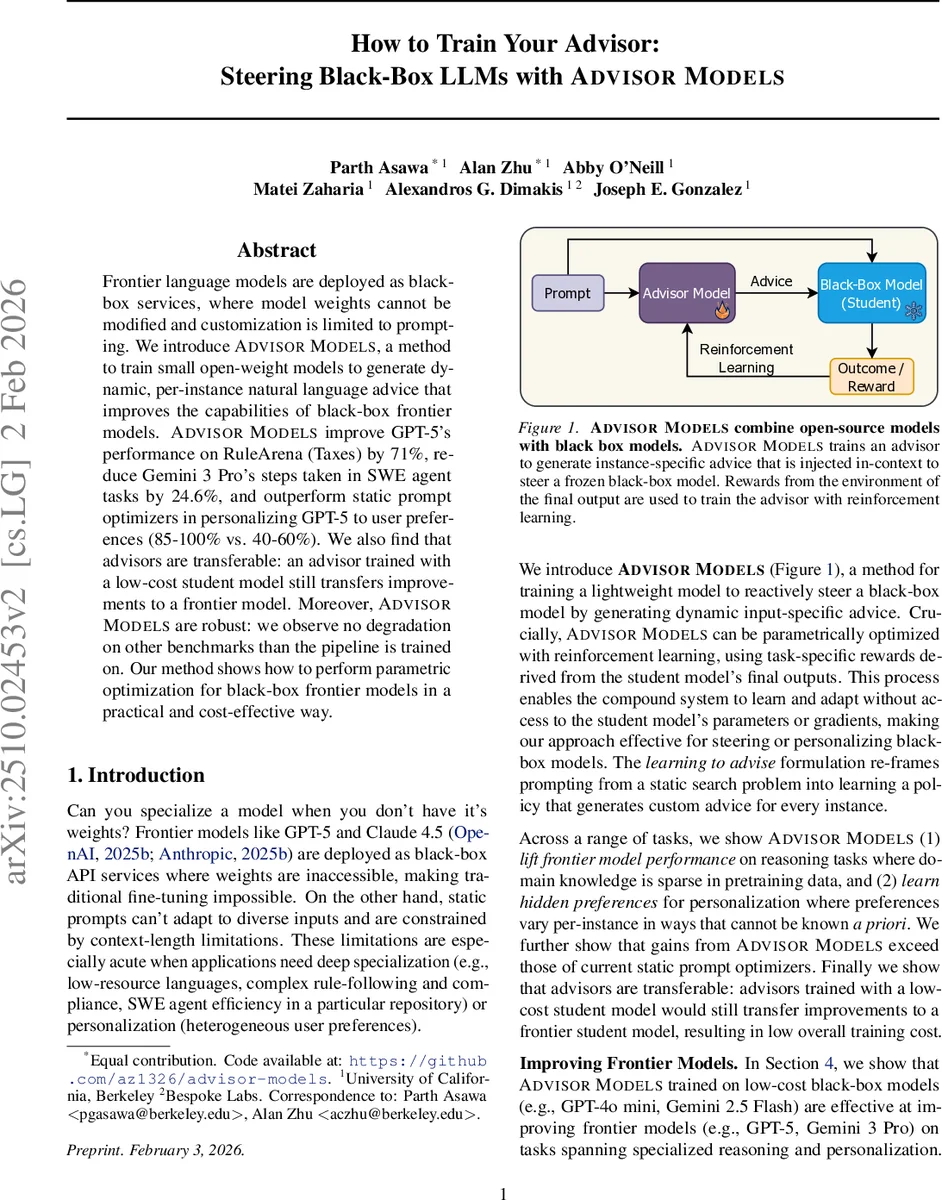

Frontier language models are deployed as black-box services, where model weights cannot be modified and customization is limited to prompting. We introduce Advisor Models, a method to train small open-weight models to generate dynamic, per-instance natural language advice that improves the capabilities of black-box frontier models. Advisor Models improve GPT-5’s performance on RuleArena (Taxes) by 71%, reduce Gemini 3 Pro’s steps taken in SWE agent tasks by 24.6%, and outperform static prompt optimizers in personalizing GPT-5 to user preferences (85-100% vs. 40-60%). We also find that advisors are transferable: an advisor trained with a low-cost student model still transfers improvements to a frontier model. Moreover, Advisor Models are robust: we observe no degradation on other benchmarks than the pipeline is trained on. Our method shows how to perform parametric optimization for black-box frontier models in a practical and cost-effective way.

💡 Research Summary

The paper introduces “Advisor Models,” a novel framework for steering large, black‑box language models (LLMs) such as GPT‑5 and Gemini 3 Pro without access to their weights. Traditional fine‑tuning is impossible for API‑only models, and static prompt engineering suffers from lack of adaptability, especially in multi‑turn or highly specialized tasks. Advisor Models address these limitations by training a lightweight, open‑weight model (the “advisor”) to generate per‑instance natural‑language advice that is injected directly into the prompt given to the frozen black‑box model.

The core methodology casts prompt generation as a reinforcement‑learning (RL) problem. The advisor observes the user input and, optionally, an initial response from the student model (a 3‑step variant). It then produces advice, which the black‑box model consumes to produce its final output. A task‑specific reward—e.g., binary correctness for tax calculations, a combination of correctness and step‑count for software‑engineering (SWE) agents, or chrF similarity for low‑resource translation—is computed from the final output. The advisor’s policy is updated using Group Relative Policy Optimization (GRPO), requiring only API calls to the black‑box model; no gradients or weight access are needed.

Three experimental domains showcase the approach:

- RuleArena Taxes – Advisors raise GPT‑5’s accuracy from 31.2 % to 53.6 % (a 71 % relative gain).

- SWE Agent Efficiency – Advisors reduce the average number of interaction steps taken by Gemini 3 Pro from 31.7 to 26.3 (‑24.6 %) while preserving success rates.

- Low‑Resource Translation (Kalamang) – Advisors improve chrF from 0.32 to 0.48.

Across all domains, Advisor Models outperform state‑of‑the‑art static prompt optimizers such as GEP‑A and Profile‑Augmented Generation, which either cannot adapt per instance or are not designed for multi‑turn settings.

A key contribution is transferability. Advisors are first trained using inexpensive student models (e.g., GPT‑4o mini, Gemini 2.5 Flash). The same trained advisor is then applied unchanged to much larger, more expensive frontier models (GPT‑5, Gemini 3 Pro), still delivering substantial performance lifts. Moreover, cross‑family transfer (GPT → Claude) is demonstrated, indicating that the advice language itself, rather than model‑specific embeddings, drives the gains.

Robustness is validated by showing that training advisors on one benchmark does not degrade performance on unrelated benchmarks, confirming that the approach does not cause catastrophic forgetting in the black‑box model. The 3‑step variant further mitigates risk of harmful advice by having the advisor act as a verifier of the student’s initial output.

The paper discusses limitations: advisors still contain hundreds of millions of parameters, which may be costly for ultra‑low‑budget scenarios; reward design is domain‑specific and may require careful engineering for new tasks; and the approach assumes the black‑box model can reliably follow natural‑language advice, which may not hold for all APIs.

In conclusion, Advisor Models provide a practical, cost‑effective paradigm for “parametric optimization” of black‑box LLMs. By learning a policy that emits instance‑specific natural‑language guidance, they enable dynamic specialization, personalization, and efficiency improvements without ever modifying the underlying frontier model. This opens avenues for deploying customized AI services at scale while preserving the safety and stability of the original black‑box systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment