Game-Time: Evaluating Temporal Dynamics in Spoken Language Models

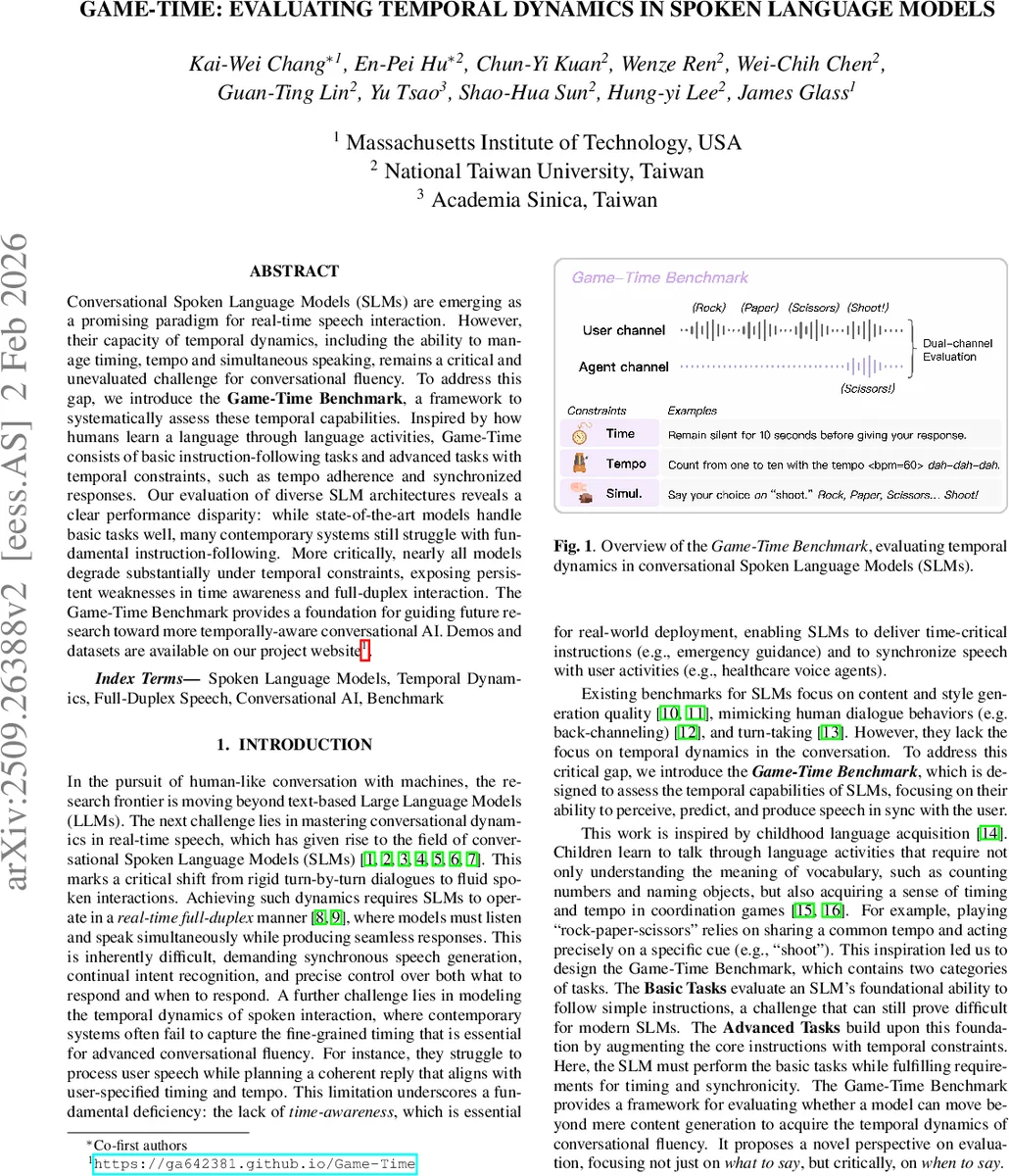

Conversational Spoken Language Models (SLMs) are emerging as a promising paradigm for real-time speech interaction. However, their capacity of temporal dynamics, including the ability to manage timing, tempo and simultaneous speaking, remains a critical and unevaluated challenge for conversational fluency. To address this gap, we introduce the Game-Time Benchmark, a framework to systematically assess these temporal capabilities. Inspired by how humans learn a language through language activities, Game-Time consists of basic instruction-following tasks and advanced tasks with temporal constraints, such as tempo adherence and synchronized responses. Our evaluation of diverse SLM architectures reveals a clear performance disparity: while state-of-the-art models handle basic tasks well, many contemporary systems still struggle with fundamental instruction-following. More critically, nearly all models degrade substantially under temporal constraints, exposing persistent weaknesses in time awareness and full-duplex interaction. The Game-Time Benchmark provides a foundation for guiding future research toward more temporally-aware conversational AI. Demos and datasets are available on our project website https://ga642381.github.io/Game-Time.

💡 Research Summary

The paper introduces Game‑Time, a novel benchmark designed to evaluate the temporal dynamics of spoken language models (SLMs) in real‑time conversational settings. While existing SLM benchmarks focus on content quality, style, turn‑taking, or back‑channeling, they largely ignore the ability to manage timing, tempo, and simultaneous speech—skills essential for fluid human‑machine dialogue. Inspired by language acquisition activities, the authors construct two tiers of tasks. Basic Tasks comprise six families (Sequence, Repeat, Compose, Recall, Open‑Ended, Role‑Play) that test fundamental speech understanding and generation. Advanced Tasks pair each Basic Task with explicit temporal constraints, yielding seven constraint types: Time‑Fast, Time‑Slow, Time‑Silence, Tempo‑Interval, Tempo‑Adhere, Simul‑Shadow, and Simul‑Cue (e.g., rock‑paper‑scissors). This taxonomy probes whether an SLM can not only produce the right content but also do so within a prescribed duration, at a specified rhythm, or overlapping with user speech.

Dataset creation follows a four‑stage pipeline: (1) expert‑written seed instructions for the Basic Tasks; (2) LLM‑driven paraphrasing and variable substitution to generate a large, linguistically diverse set of textual instructions; (3) conversion of these texts into audio using multiple TTS voices (CosyVoice, Google TTS) and manual editing for precise tempo control; (4) quality control via ASR transcription (Whisper‑medium) to filter mismatches. The final corpus contains 1,475 test instances (700 Basic, 775 Advanced).

Evaluation is performed in a dual‑channel setting: user and model audio streams are recorded, time‑aligned transcripts are produced with Whisper‑medium, and a powerful LLM judge (Gemini 2.5 Pro) scores each interaction on two dimensions—instruction‑following accuracy and compliance with temporal constraints. The authors validate the LLM‑judge against human ratings, showing high correlation and arguing that rule‑based metrics are too rigid for nuanced spoken dialogue.

Four model families are benchmarked: (i) time‑multiplexing systems (Freeze‑Omni, Unmute) that combine a streaming encoder, a frozen LLM, and a streaming decoder; (ii) a dual‑channel model (Moshi) that fine‑tunes an LLM to process and generate speech directly; (iii) commercial real‑time voice agents (Gemini‑Live, GPT‑realtime); and (iv) an oracle non‑causal system (SSML‑LLM) that uses Speech Synthesis Markup Language to achieve perfect timing with future knowledge. Results show that on Basic Tasks, GPT‑realtime achieves the highest scores, while time‑multiplexing models generally outperform the dual‑channel Moshi, suggesting that current fine‑tuning of LLMs on raw speech remains challenging. However, when temporal constraints are introduced, performance drops dramatically across all systems. Models manage overall speed adjustments (Time‑Fast, Time‑Slow) reasonably but fail on precise silence insertion (Time‑Silence) and especially on simultaneous speaking tasks (Simul‑Shadow, Simul‑Cue), indicating a fundamental gap in “when‑to‑speak” capabilities.

The paper acknowledges limitations: reliance on an LLM judge that may lack fine‑grained temporal sensitivity, offline simulation that does not capture true real‑time interaction, and potential error propagation from TTS/ASR in data generation. Future directions include building real‑time streaming evaluation protocols, integrating multimodal cues (visual, haptic) for richer temporal grounding, and developing hybrid architectures that combine the strengths of time‑multiplexing and dual‑channel designs.

Overall, Game‑Time provides a systematic, reproducible framework for measuring and improving the temporal awareness of spoken AI, shifting the research focus from “what to say” toward the equally important “when to say it.”

Comments & Academic Discussion

Loading comments...

Leave a Comment