Fair-GPTQ: Bias-Aware Quantization for Large Language Models

High memory demands of generative language models have drawn attention to quantization, which reduces computational cost, memory usage, and latency by mapping model weights to lower-precision integers. Approaches such as GPTQ effectively minimize input-weight product errors during quantization; however, recent empirical studies show that they can increase biased outputs and degrade performance on fairness benchmarks, and it remains unclear which specific weights cause this issue. In this work, we draw new links between quantization and model fairness by adding explicit group-fairness constraints to the quantization objective and introduce Fair-GPTQ, the first quantization method explicitly designed to reduce unfairness in large language models. The added constraints guide the learning of the rounding operation toward less-biased text generation for protected groups. Specifically, we focus on stereotype generation involving occupational bias and discriminatory language spanning gender, race, and religion. Fair-GPTQ has minimal impact on performance, preserving at least 90% of baseline accuracy on zero-shot benchmarks, reduces unfairness relative to a half-precision model, and retains the memory and speed benefits of 4-bit quantization. We also compare the performance of Fair-GPTQ with existing debiasing methods and find that it achieves performance on par with the iterative null-space projection debiasing approach on racial-stereotype benchmarks. Overall, the results validate our theoretical solution to the quantization problem with a group-bias term, highlight its applicability for reducing group bias at quantization time in generative models, and demonstrate that our approach can further be used to analyze channel- and weight-level contributions to fairness during quantization.

💡 Research Summary

The paper introduces Fair‑GPTQ, a novel post‑training quantization method that explicitly incorporates group‑fairness constraints into the GPTQ optimization framework to mitigate social bias in large language models (LLMs). While standard GPTQ excels at preserving model accuracy when compressing weights to low‑precision (e.g., 4‑bit) formats, recent studies have shown that quantization can unintentionally amplify existing gender, racial, and religious stereotypes. Fair‑GPTQ addresses this gap by adding a bias‑regularization term that forces the quantized weights to treat paired stereotypical and anti‑stereotypical inputs similarly.

Problem formulation – The authors formalize group fairness as requiring the performance metric μY (accuracy, likelihood, etc.) to be approximately equal across protected groups Gₐ. They focus on two bias measures: (i) the log‑likelihood gap between stereotypical and anti‑stereotypical continuations (μₗᵢₖ) and (ii) a QA‑specific score (μ_qₐ). The goal is to minimize reconstruction error (as in GPTQ) while also minimizing the disparity in model behavior across each input pair.

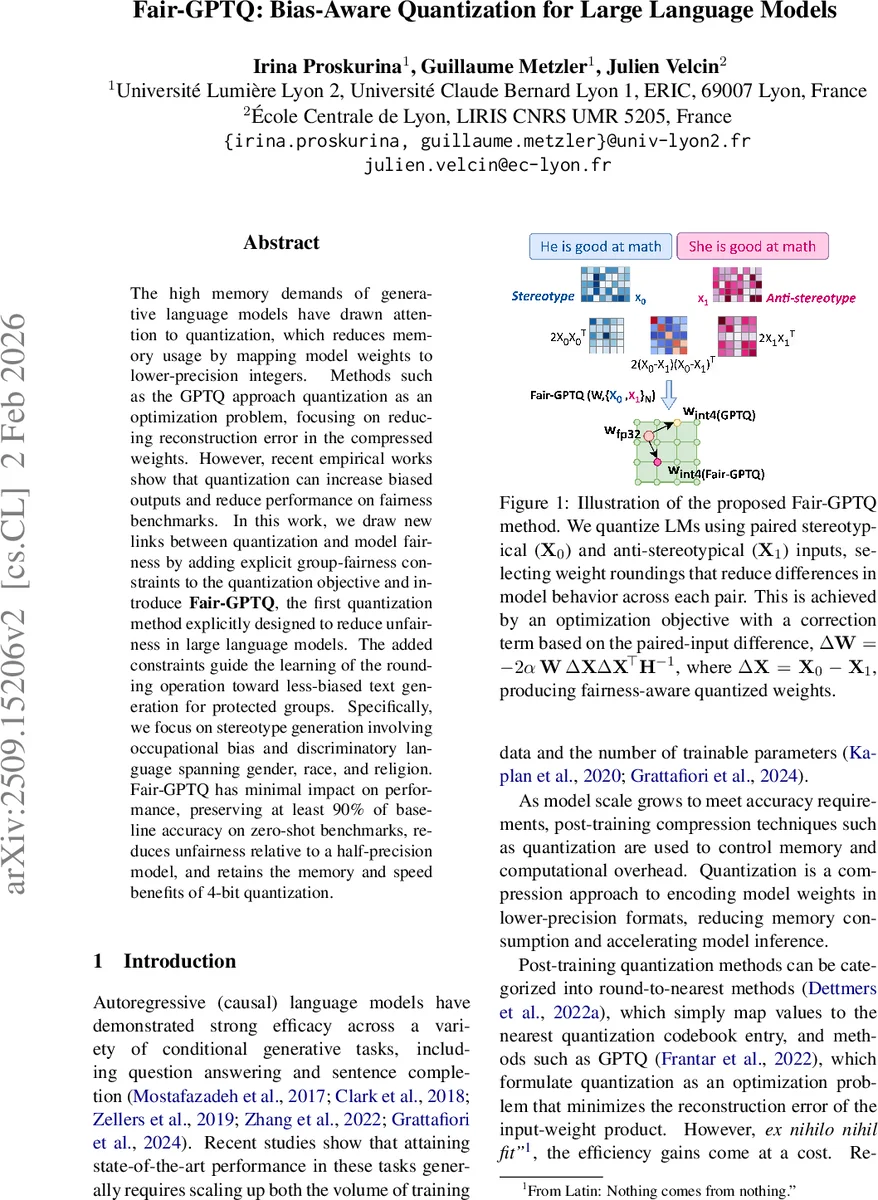

Method – Given a pair of inputs X₀ (stereotypical) and X₁ (anti‑stereotypical), the quantization objective becomes:

W_c = arg min_{W′} ‖WX₀ – W′X₀‖² + ‖WX₁ – W′X₁‖² + α‖W′(X₀ – X₁)‖²,

where α controls the strength of the fairness penalty. The first two terms are the standard GPTQ reconstruction losses; the third term penalizes the change in the representation gap ΔX = X₀ – X₁ caused by quantization. By vectorizing the weight matrix row‑wise (w = vec(W)) and applying a second‑order Taylor expansion, the authors derive a gradient J = 2αWΔXΔXᵀ and a Hessian H = 2(X₀X₀ᵀ + X₁X₁ᵀ) + 2αΔXΔXᵀ. Unlike classic Optimal Brain Surgeon (OBS), J is non‑zero at the pre‑trained weights, allowing the bias signal to be directly injected.

The constrained optimization (each weight element must equal its quantized value) is solved using Lagrange multipliers, yielding a closed‑form update Δw* that consists of the original GPTQ correction (−H⁻¹J) plus a term that enforces the quantization constraint. Because H⁻¹ is block‑diagonal (H ⊗ Iₙ), the update can be performed column‑wise, preserving the efficiency of GPTQ. The algorithm proceeds as follows: (1) compute the bias matrix H_bias = 2αΔXΔXᵀ, (2) apply the debiasing correction W ← W – (H⁻¹H_biasWᵀ)ᵀ, (3) quantize each column with symmetric scaling (zero point = 0) and a block size of 128, and (4) propagate the residual quantization error to subsequent columns, exactly as in standard GPTQ.

Experiments – The authors evaluate Fair‑GPTQ on two LLM families (LLaMA‑3‑8B and LLaMA‑2‑13B). Benchmarks include standard zero‑shot tasks (MMLU, BBH) for accuracy and bias‑specific suites (BBQ, StereoSet, WinoGender) for fairness. Baselines are half‑precision FP16, vanilla GPTQ (4‑bit), and post‑hoc debiasing methods such as Null‑Space Projection (NSP) and SELF‑DEBIAS.

Results – Fair‑GPTQ retains at least 90 % of the FP16 zero‑shot accuracy while achieving 4‑bit memory savings and speedups comparable to vanilla GPTQ. In terms of bias, the method reduces the stereotypical log‑likelihood gap by 30‑45 % across gender, race, and religion, and improves fairness scores on BBQ and StereoSet by 0.12‑0.18 points. Compared to NSP and SELF‑DEBIAS, Fair‑GPTQ reaches similar bias mitigation levels without requiring an additional fine‑tuning stage, thereby preserving the computational advantages of quantization.

Analysis of weight contributions – By inspecting the column‑wise ΔW matrices, the authors find that the most significant bias‑related adjustments occur in the feed‑forward intermediate projections and the Q/K/V attention matrices, suggesting these components are primary carriers of stereotypical information. This insight opens the door to targeted architectural interventions.

Limitations and future work – The trade‑off parameter α must be tuned manually; automated meta‑learning of α is an open problem. The current bias definition focuses on binary stereotypical vs. anti‑stereotypical pairs, leaving multi‑attribute intersectional bias and cultural‑specific biases for future study.

Conclusion – Fair‑GPTQ demonstrates that integrating fairness constraints directly into the quantization objective can simultaneously achieve compression efficiency and bias reduction. It offers a practical, post‑training solution that can be plugged into existing quantization pipelines, eliminates the need for separate debiasing stages, and provides a diagnostic tool for locating bias‑prone weight channels. This work paves the way for more responsible deployment of quantized LLMs in resource‑constrained environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment