MaiBERT: A Pre-training Corpus and Language Model for Low-Resourced Maithili Language

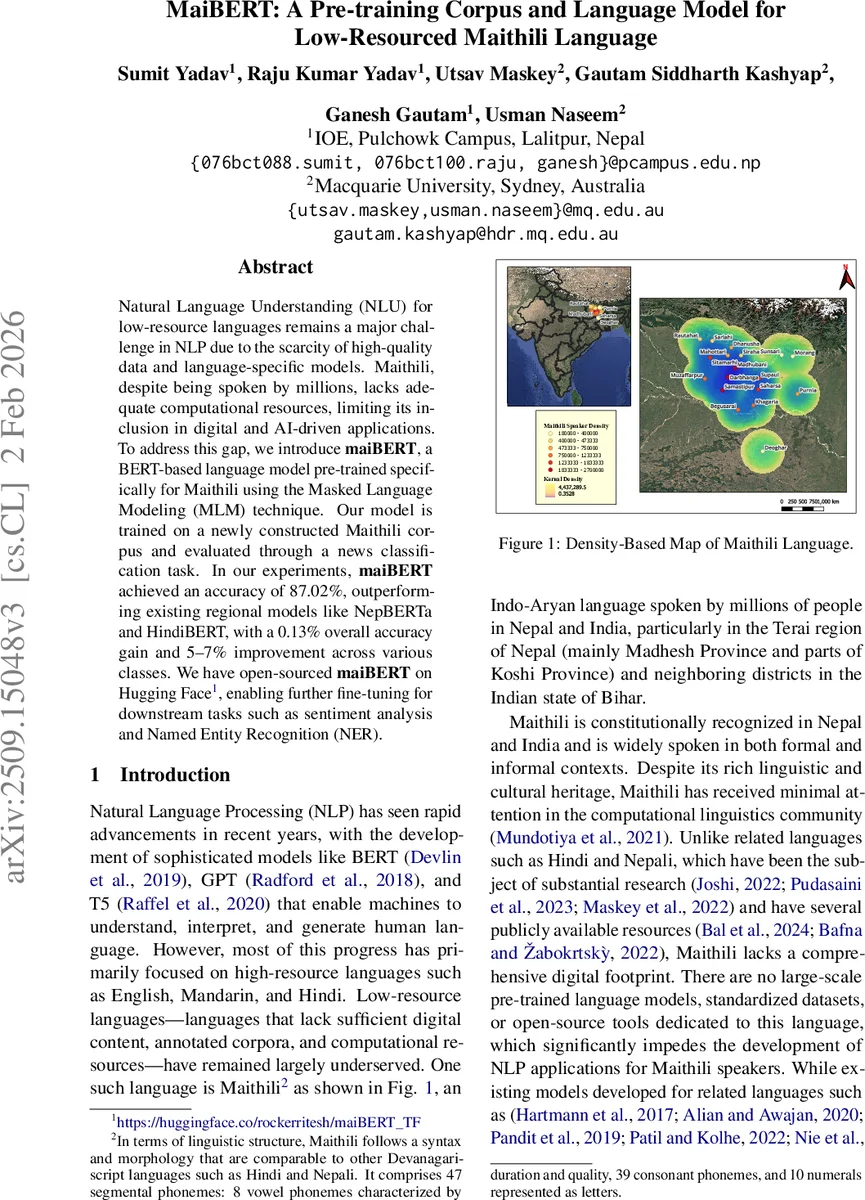

Natural Language Understanding (NLU) for low-resource languages remains a major challenge in NLP due to the scarcity of high-quality data and language-specific models. Maithili, despite being spoken by millions, lacks adequate computational resources, limiting its inclusion in digital and AI-driven applications. To address this gap, we introducemaiBERT, a BERT-based language model pre-trained specifically for Maithili using the Masked Language Modeling (MLM) technique. Our model is trained on a newly constructed Maithili corpus and evaluated through a news classification task. In our experiments, maiBERT achieved an accuracy of 87.02%, outperforming existing regional models like NepBERTa and HindiBERT, with a 0.13% overall accuracy gain and 5-7% improvement across various classes. We have open-sourced maiBERT on Hugging Face enabling further fine-tuning for downstream tasks such as sentiment analysis and Named Entity Recognition (NER).

💡 Research Summary

The paper introduces maiBERT, a BERT‑based language model specifically pre‑trained for the low‑resource Maithili language. Recognizing the scarcity of computational resources for Maithili despite its millions of speakers in Nepal and India, the authors first construct a sizable, high‑quality Maithili corpus. The corpus aggregates three primary sources: Maithili Wikipedia (≈ 880 K words), digitized books obtained via OCR from 418 scanned documents (≈ 10.4 M words), and raw newspaper articles from local outlets (≈ 6.6 M words). After de‑duplication and extensive preprocessing—including Unicode NFC normalization, Devanagari‑only filtering, and custom tokenization—the final dataset contains 1,028,017 sentences, about 18 million words, and roughly 450 MB of cleaned text. Vocabulary analysis reveals 1,357,081 unique tokens and a notable frequency of the Maithili‑specific punctuation marker “।”.

For tokenization, the authors employ SentencePiece with a Byte‑Pair Encoding (BPE) sub‑word model, fixing the vocabulary size at 30,000 to capture rare and compound forms. The pre‑training follows the standard Masked Language Modeling (MLM) objective: 15 % of tokens are masked per BERT’s scheme (80 % replaced by

Comments & Academic Discussion

Loading comments...

Leave a Comment