RDDM: Practicing RAW Domain Diffusion Model for Real-world Image Restoration

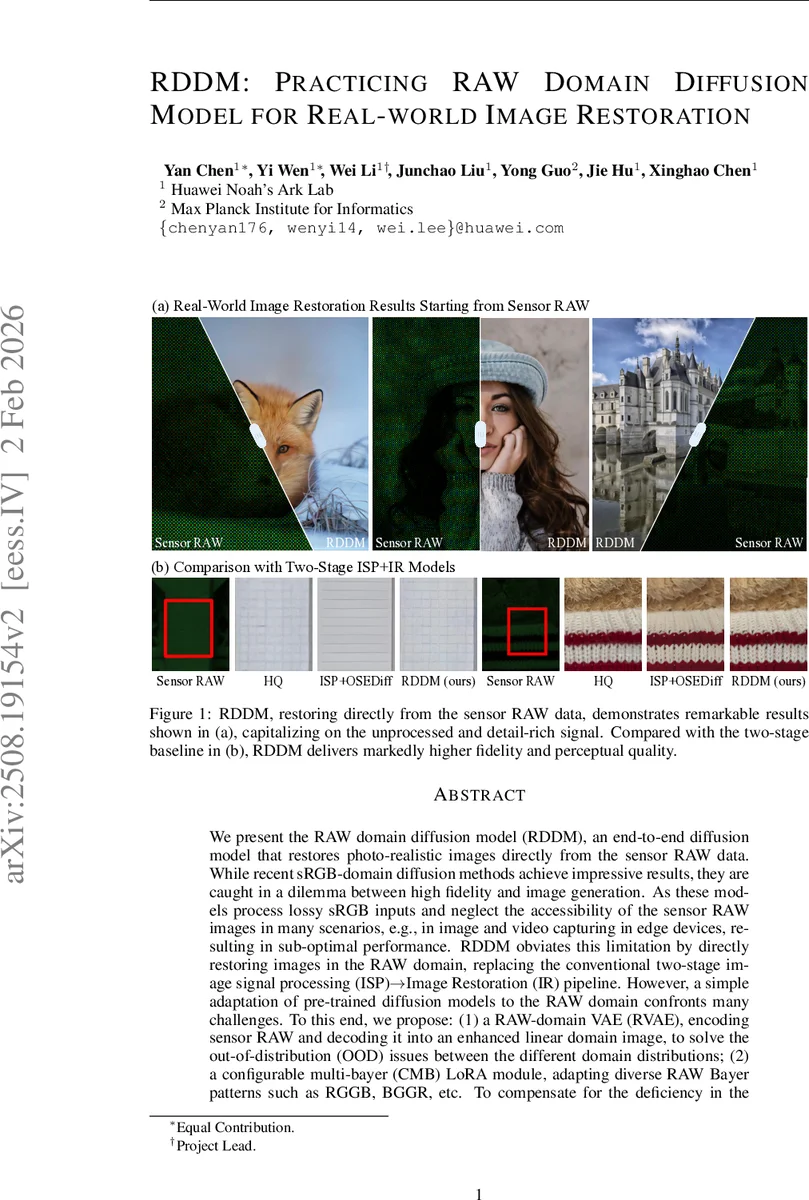

We present the RAW domain diffusion model (RDDM), an end-to-end diffusion model that restores photo-realistic images directly from sensor RAW data. While recent sRGB-domain diffusion methods achieve impressive results, they are caught in a dilemma between high fidelity and image generation. These models process lossy sRGB inputs and neglect the accessibility of sensor RAW images in many scenarios, e.g., image and video capture on edge devices, resulting in sub-optimal performance. RDDM removes this limitation by restoring images directly in the RAW domain, replacing the conventional two-stage image signal processing (ISP) → image restoration (IR) pipeline. However, a simple adaptation of pre-trained diffusion models to the RAW domain confronts many challenges. To this end, we propose: (1) a RAW-domain VAE (RVAE), which encodes sensor RAW and decodes it into an enhanced linear-domain image, to solve the out-of-distribution (OOD) issues between the different domain distributions; (2) a configurable multi-bayer (CMB) LoRA module, which adapts to diverse RAW Bayer patterns such as RGGB, BGGR, etc. To compensate for the shortage of datasets, we develop a scalable data synthesis pipeline that synthesizes RAW LQ-HQ pairs from existing sRGB datasets for large-scale training. Extensive experiments demonstrate RDDM’s superiority over state-of-the-art sRGB diffusion methods, yielding higher-fidelity results with fewer artifacts. Code will be publicly available at https://github.com/YanCHEN-fr/RDDM.

💡 Research Summary

The paper introduces RDDM, a raw‑domain diffusion model that restores high‑quality images directly from sensor RAW data, bypassing the traditional ISP→Image‑Restoration pipeline. The authors identify two major obstacles when adapting existing sRGB diffusion models to RAW: (1) a severe distribution gap caused by different luminance ranges and Bayer mosaic patterns, and (2) limited generalization across sensors with diverse Bayer arrangements and noise characteristics. To overcome these, they propose three key innovations.

First, a Raw‑Domain Variational Auto‑Encoder (RVAE) is trained in two stages. The initial stage learns to reconstruct linear‑domain images from clean data using L1, LPIPS and GAN losses, while normalizing the latent space to a standard Gaussian. In the second stage the encoder is frozen, LoRA adapters are added, and the model learns to encode noisy mosaicked RAW inputs, effectively bridging the RAW‑sRGB domain gap.

Second, Configurable Multi‑Bayer LoRA (CMB‑LoRA) introduces pattern‑specific LoRA branches that can be switched at inference time according to the sensor’s Bayer layout, enabling efficient cross‑sensor adaptation without full fine‑tuning.

Third, a scalable RAW data synthesis pipeline converts large‑scale sRGB datasets into RAW‑LQ/HQ pairs by inverse tone‑mapping (IPTP) to linear space followed by a Mosaic Noise Synthesizer (MNS) that injects realistic sensor noise and Bayer mosaicking. This synthetic data supplies the massive training corpus needed for diffusion models.

The overall architecture couples the RVAE with a pre‑trained Stable Diffusion backbone (ϵθ) and incorporates a text prompt extractor (DAPE) to provide semantic guidance from rendered sRGB images. Training losses combine VSD, LPIPS, and MSE in both RAW and sRGB domains, ensuring fidelity in the final linear output and perceptual quality after post‑tone‑mapping (PTP).
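
The mosaicking and noise-injection step of the synthesis pipeline can be illustrated with a minimal NumPy sketch. This is not the authors' Mosaic Noise Synthesizer: the function names, the shot/read noise model, and the default noise parameters (`shot_gain`, `read_std`) are assumptions chosen for illustration, and the input is assumed to be a linear-domain RGB image scaled to [0, 1].

```python
import numpy as np

# Channel index (0=R, 1=G, 2=B) sampled at each position of the 2x2 Bayer
# tile, in row-major order: (0,0), (0,1), (1,0), (1,1).
BAYER_LAYOUTS = {
    "RGGB": (0, 1, 1, 2),
    "BGGR": (2, 1, 1, 0),
    "GRBG": (1, 0, 2, 1),
    "GBRG": (1, 2, 0, 1),
}


def mosaic(linear_rgb, pattern="RGGB"):
    """Sample a single-channel Bayer mosaic from an (H, W, 3) linear image."""
    h, w, _ = linear_rgb.shape
    if h % 2 or w % 2:
        raise ValueError("height and width must be even")
    raw = np.empty((h, w), dtype=linear_rgb.dtype)
    offsets = ((0, 0), (0, 1), (1, 0), (1, 1))
    for (dy, dx), channel in zip(offsets, BAYER_LAYOUTS[pattern]):
        raw[dy::2, dx::2] = linear_rgb[dy::2, dx::2, channel]
    return raw


def add_sensor_noise(raw, shot_gain=0.01, read_std=0.002, rng=None):
    """Add signal-dependent Gaussian noise (assumed model, hypothetical defaults).

    The per-pixel variance is shot_gain * signal + read_std**2, a common
    approximation of shot plus read noise.
    """
    rng = np.random.default_rng() if rng is None else rng
    variance = shot_gain * np.clip(raw, 0.0, None) + read_std ** 2
    noisy = raw + rng.normal(size=raw.shape) * np.sqrt(variance)
    return np.clip(noisy, 0.0, 1.0)


# Usage: build one low-quality RAW sample from a stand-in linear image.
rng = np.random.default_rng(0)
hq_linear = rng.random((4, 4, 3))
lq_raw = add_sensor_noise(mosaic(hq_linear, "RGGB"), rng=rng)
```

Keeping the Bayer layout as a lookup table means the same routine can generate training pairs for every pattern, which is the kind of variety a multi-pattern adapter would need to see.
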

Extensive experiments on synthetic benchmarks (DIV2K‑Val, RealSR, DRealSR) and real‑world RAW datasets (DND, RealCapture, SIDD) demonstrate that RDDM outperforms state‑of‑the‑art sRGB diffusion methods and GAN‑based restorers across a suite of metrics (PSNR, SSIM, LPIPS, DISTS, FID, NIQE, MUSIQ, CLIPIQA). Notably, even with a single diffusion step (s1) RDDM achieves higher PSNR (23.74 dB) and lower LPIPS (0.254) than multi‑step baselines, highlighting its efficiency. Qualitative results show reduced color shifts, fewer artifacts, and better preservation of fine textures.

In summary, RDDM establishes a practical framework for raw‑domain generative image restoration by (i) learning a RAW‑aware latent space via RVAE, (ii) enabling sensor‑agnostic adaptation through CMB‑LoRA, and (iii) supplying abundant training data via a novel synthesis pipeline. The work opens new avenues for leveraging the rich information present in RAW sensor data for high‑fidelity restoration, with future directions including real‑time ISP integration, support for non‑standard sensors, and broader deployment on edge devices.
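
The idea of switching pattern-specific LoRA branches at inference time can be sketched on a single linear layer. This is only a rough illustration, not the authors' CMB‑LoRA module: the class `MultiBayerLoRALinear`, its method `set_pattern`, and the `rank` and `alpha` defaults are hypothetical, and the summary does not say which layers carry the branches.

```python
import numpy as np


class MultiBayerLoRALinear:
    """Frozen linear layer with one low-rank (LoRA) branch per Bayer pattern."""

    def __init__(self, in_dim, out_dim,
                 patterns=("RGGB", "BGGR", "GRBG", "GBRG"),
                 rank=4, alpha=4.0, seed=0):
        rng = np.random.default_rng(seed)
        # Base weight stands in for a pre-trained, frozen layer.
        self.weight = rng.normal(scale=0.02, size=(out_dim, in_dim))
        self.scale = alpha / rank
        # Usual LoRA initialization: A is small and random, B is zero,
        # so every branch starts out as a no-op on top of the base layer.
        self.lora_a = {p: rng.normal(scale=0.01, size=(rank, in_dim))
                       for p in patterns}
        self.lora_b = {p: np.zeros((out_dim, rank)) for p in patterns}
        self.active = patterns[0]

    def set_pattern(self, pattern):
        """Select which branch is applied, e.g. from the sensor's metadata."""
        if pattern not in self.lora_a:
            raise KeyError(f"no LoRA branch for Bayer pattern {pattern!r}")
        self.active = pattern

    def __call__(self, x):
        a, b = self.lora_a[self.active], self.lora_b[self.active]
        # Base projection plus the scaled low-rank update of the active branch.
        return x @ self.weight.T + self.scale * (x @ a.T) @ b.T


# Usage: the same layer serves two sensors by switching branches.
layer = MultiBayerLoRALinear(in_dim=8, out_dim=6)
features = np.ones((2, 8))
layer.set_pattern("BGGR")
out_bggr = layer(features)
```

Only the small `lora_a` and `lora_b` matrices differ between patterns, so supporting a new Bayer layout in this sketch means adding one more pair of low-rank matrices rather than another copy of the base weights.
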
