Hospital-Specific Bias in Patch-Based Pathology Models

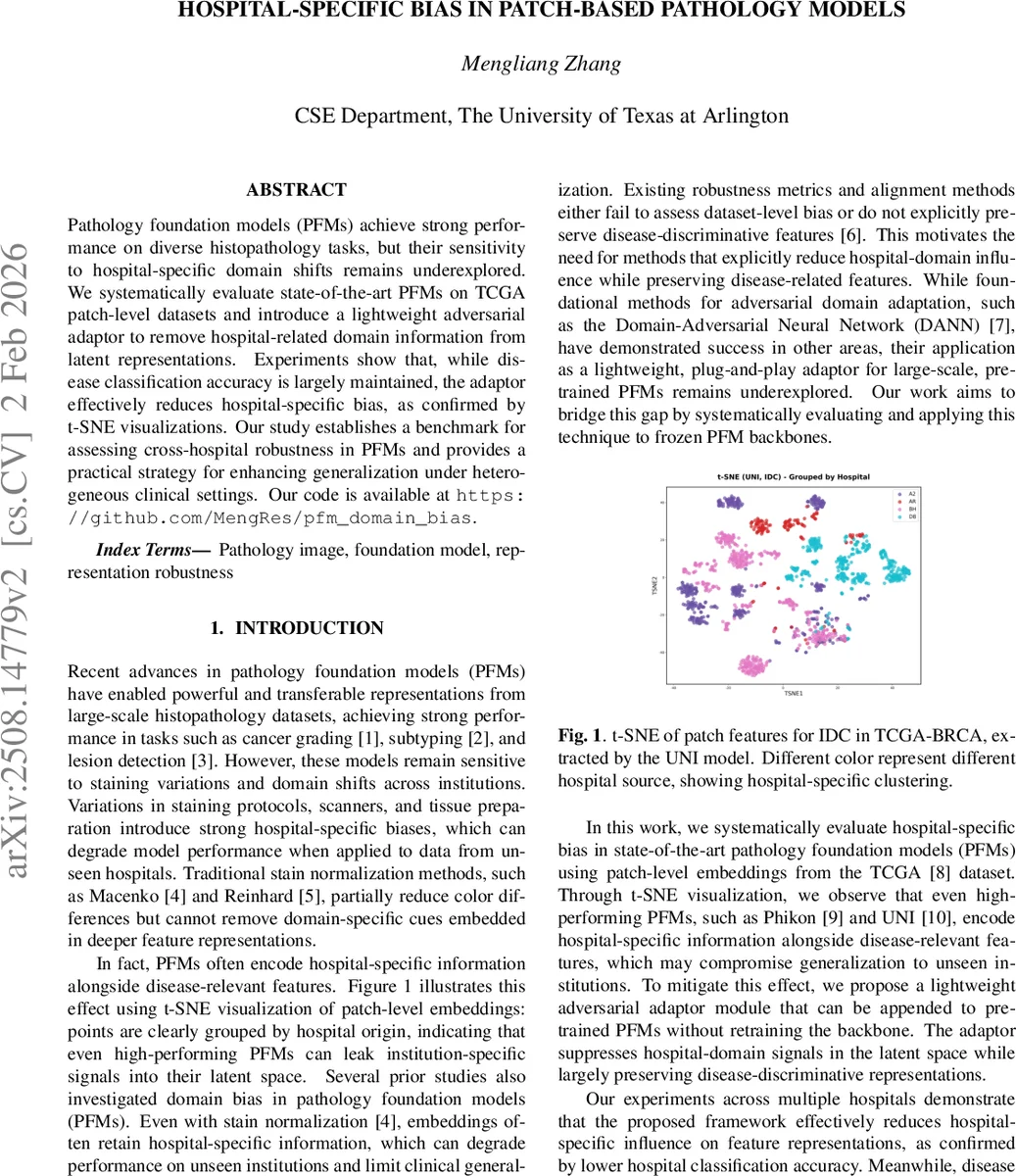

Pathology foundation models (PFMs) achieve strong performance on diverse histopathology tasks, but their sensitivity to hospital-specific domain shifts remains underexplored. We systematically evaluate state-of-the-art PFMs on TCGA patch-level datasets and introduce a lightweight adversarial adaptor to remove hospital-related domain information from latent representations. Experiments show that, while disease classification accuracy is largely maintained, the adaptor effectively reduces hospital-specific bias, as confirmed by t-SNE visualizations. Our study establishes a benchmark for assessing cross-hospital robustness in PFMs and provides a practical strategy for enhancing generalization under heterogeneous clinical settings. Our code is available at https://github.com/MengRes/pfm_domain_bias.

💡 Research Summary

This paper investigates the often‑overlooked problem of hospital‑specific domain bias in state‑of‑the‑art pathology foundation models (PFMs). Using patch‑level data from the TCGA‑BRCA cohort, the authors first construct a balanced multi‑hospital dataset: four major contributing hospitals each provide 20 whole‑slide images (WSIs), from which 500 256 × 256 patches at 40× magnification are sampled. To reduce label noise, patches are filtered with the CONCH model, retaining only those whose top‑1 prediction matches the WSI label with confidence > 0.8, resulting in 4,029 high‑confidence patches covering invasive ductal carcinoma (IDC) and invasive lobular carcinoma (ILC).

Eleven representative PFMs—including ResNet‑50, Giga‑Path, UNI, UNI2‑H, CONCH, TITAN, MUSK, H‑Optimus‑0, Phikon, Phikon‑v2, and Virchow—are used as frozen feature extractors. For each model, two independent multilayer perceptrons (MLPs) are trained: one predicts disease labels, the other predicts the originating hospital. High hospital‑prediction accuracy indicates that the latent space encodes institution‑specific cues, which could jeopardize generalization to unseen sites.

To mitigate this, the authors adapt the Domain‑Adversarial Neural Network (DANN) concept into a lightweight “adversarial adaptor” that can be appended to any frozen PFM without retraining its backbone. The adaptor consists of a trainable projection head (512‑dimensional), a disease classifier, a hospital classifier, and a Gradient Reversal Layer (GRL). During training, the GRL flips the gradient from the hospital classifier, encouraging the projected features to be discriminative for disease while being invariant to hospital origin. The total loss is L_total = L_disease + λ L_hospital, with λ = 0.5 chosen via validation. Only the projection head and the two classifiers are updated; the encoder remains frozen. At inference, the GRL and hospital classifier are discarded, and disease predictions are made directly from the adapted features.

Experiments are conducted with a batch size of 64 over 30 epochs, using five‑fold cross‑validation that ensures patches from the same WSI never appear in both training and validation folds. Results show a consistent reduction in hospital‑source accuracy (ACC) and AUC across all PFMs when the adversarial adaptor is applied. For example, UNI’s hospital AUC drops from 0.96 ± 0.02 to 0.51 ± 0.05, while disease AUC remains at 0.98 ± 0.01. Similar patterns are observed for UNI2‑H, Virchow, Phikon‑v2, and others. Importantly, disease classification performance is largely preserved; most models retain disease ACC above 0.90 and AUC above 0.95, with only marginal changes.

t‑SNE visualizations corroborate the quantitative findings. In the original UNI embeddings, patches cluster tightly by hospital, revealing strong domain leakage. After adversarial adaptation, the same embeddings become intermingled, indicating successful suppression of hospital‑specific signals while retaining disease‑related structure.

The paper concludes that (1) PFMs inherently capture institution‑specific artifacts that can impair cross‑hospital deployment, (2) a simple, plug‑and‑play adversarial adaptor can effectively remove such bias without sacrificing diagnostic accuracy, and (3) this approach provides a practical pathway toward more robust, fair, and clinically deployable AI systems in pathology. The authors suggest future work on extending the method to multi‑domain scenarios (e.g., different countries, scanner models, patient demographics) and integrating multimodal clinical data to further enhance generalization.

Comments & Academic Discussion

Loading comments...

Leave a Comment