CUS-QA: Local-Knowledge-Oriented Open-Ended Question Answering Dataset

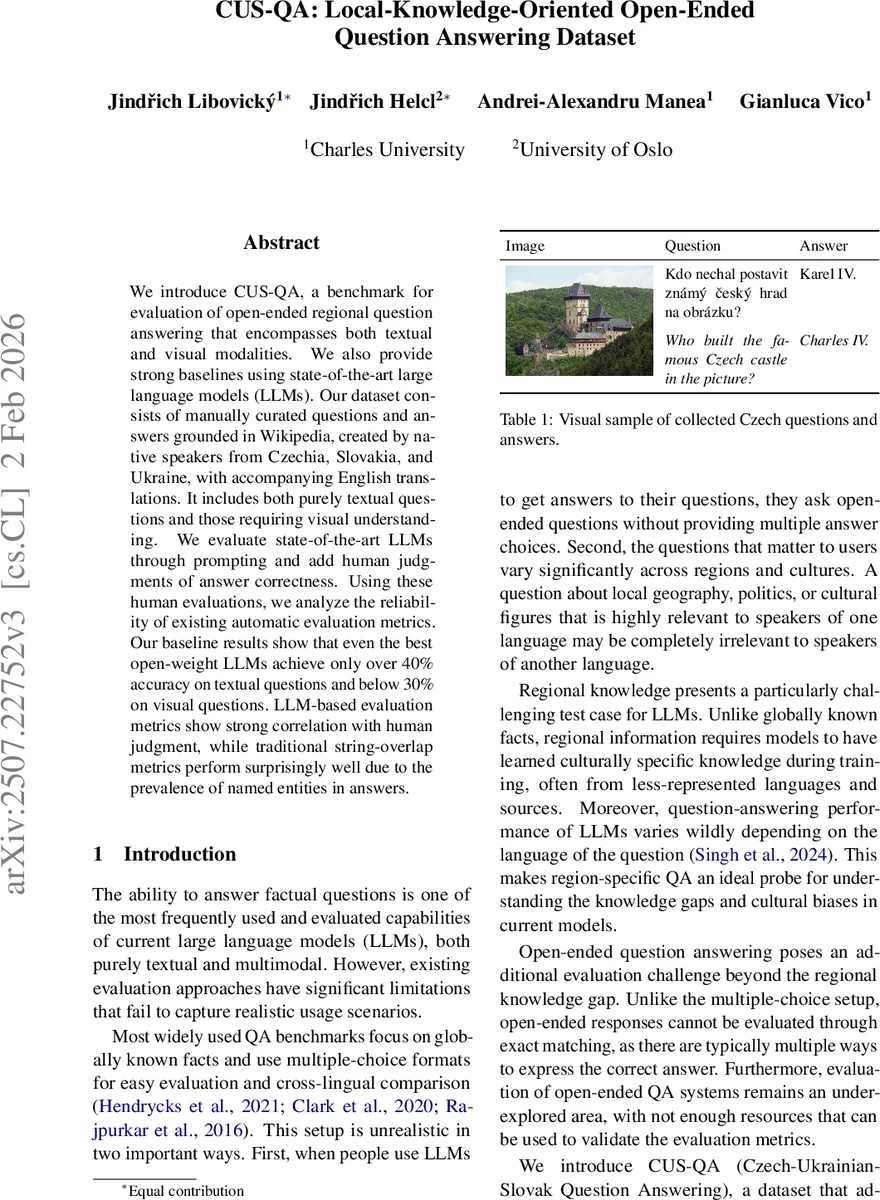

We introduce CUS-QA, a benchmark for evaluation of open-ended regional question answering that encompasses both textual and visual modalities. We also provide strong baselines using state-of-the-art large language models (LLMs). Our dataset consists of manually curated questions and answers grounded in Wikipedia, created by native speakers from Czechia, Slovakia, and Ukraine, with accompanying English translations. It includes both purely textual questions and those requiring visual understanding. We evaluate state-of-the-art LLMs through prompting and add human judgments of answer correctness. Using these human evaluations, we analyze the reliability of existing automatic evaluation metrics. Our baseline results show that even the best open-weight LLMs achieve only over 40% accuracy on textual questions and below 30% on visual questions. LLM-based evaluation metrics show strong correlation with human judgment, while traditional string-overlap metrics perform surprisingly well due to the prevalence of named entities in answers.

💡 Research Summary

The paper introduces CUS‑QA, a novel benchmark designed to evaluate open‑ended question answering (QA) that requires both textual and visual understanding of region‑specific knowledge. The dataset focuses on three Central/Eastern European countries—Czechia, Slovakia, and Ukraine—covering three Slavic languages with varying resource levels. Questions and answers were created by native speakers who were instructed to select facts that are well‑known within their country (roughly “known by at least ten thousand people”) but largely unknown outside it. Annotators browsed a pre‑selected pool of Wikipedia pages (both location‑based entities and language‑exclusive pages), wrote a question and answer in the native language, and provided an English translation. If the page contained a photograph, a parallel visual question‑answer pair was also generated. After quality control (removing 22 % of items and correcting minor errors), the final dataset comprises 1,536 Czech, 1,210 Slovak, and 1,158 Ukrainian textual QA pairs, plus 226 + 118 + 204 visual pairs. Each entry includes the source Wikipedia URL, the question and answer in the local language, the English translation, and, for visual items, the associated image.

Statistical analysis shows that questions average about 60 characters, while answers are short (≈20 characters for Czech/Slovak, ≈30 characters for Ukrainian) and are predominantly noun phrases. Named‑entity tagging reveals a heavy concentration of location entities (especially city names) and person entities (politicians dominate). Visual items are categorized into seven groups (portraits, buildings, cityscapes, nature, statues, food, technology, other) using LLaMA‑Vision, with roughly 40 % of visual questions involving a person’s face.

The authors evaluate several state‑of‑the‑art open‑weight large language models (LLMs) in a zero‑shot setting. Text‑only models include LLaMA 3.1 8B, LLaMA 3.3 70B, Mistral v0.3 7B, and EuroLLM 7B. Multimodal models for visual QA include mBLIP, LLaMA 3.2 Vision, LLaMA 4 Vision, Maya, Gemma 3, and idefics. All models receive a uniform English system prompt that instructs them to answer in the language of the input question. Decoding uses nucleus sampling (p = 0.9) with a single sample per query.

Performance is modest. The best text‑only model (LLaMA 3.3 70B) achieves just under 40 % accuracy on textual questions when judged by human annotators, while visual QA accuracy stays below 30 % for the strongest multimodal models. Notably, models often perform better when the same question is asked in English rather than the native language, highlighting the impact of language‑specific data scarcity and cultural bias in training corpora.

Human evaluation was conducted using a binary rubric covering four dimensions: correctness (does the answer match the reference, allowing minor hallucinations), truthfulness (is the content objectively true), relevance (does the answer stay on‑topic and specific), and coherence (grammaticality and language correctness). Each answer was judged independently by two annotators; a criterion was considered satisfied only when both agreed. This dual‑annotator approach yields a reliable gold standard for assessing automatic metrics.

Automatic evaluation metrics examined include traditional string‑overlap scores (BLEU, ROUGE, BERTScore) and LLM‑based evaluators (GPT‑4o, Claude 3.5). Correlation analysis shows that LLM‑based metrics align strongly with human judgments (Pearson r > 0.8), while surprisingly, string‑overlap metrics also exhibit high correlation in this dataset because many correct answers are short named entities where exact token overlap is informative. This finding suggests that, for region‑specific QA with concise factual answers, simple overlap measures may still be useful, though they may fail for longer, more nuanced responses.

Cross‑lingual consistency was probed by translating each question into the other two local languages using Claude 3.5 and feeding the translations to the models. The resulting answers displayed substantial variance, confirming that current multilingual LLMs lack robust knowledge transfer across languages, especially for low‑resource Slavic languages.

The paper’s contributions are threefold: (1) the creation of CUS‑QA, a high‑quality, multilingual, multimodal open‑ended QA benchmark that reflects real‑world user needs; (2) a thorough human evaluation framework and an empirical study of how well existing automatic metrics capture answer quality in this setting; (3) an empirical demonstration that even the most powerful open‑weight LLMs struggle with regional knowledge and visual reasoning, and that language‑specific gaps remain pronounced.

Future work suggested includes expanding the benchmark to additional languages and cultural regions, designing more sophisticated multimodal prompts that better exploit visual context, and developing new evaluation metrics that combine human‑aligned scoring with model‑based judgments to better capture answer diversity and nuance. The authors release the dataset on the Hugging Face Hub and provide the annotation interface code on GitHub, encouraging the community to adopt CUS‑QA for more realistic assessment of LLMs’ regional and multimodal capabilities.

Comments & Academic Discussion

Loading comments...

Leave a Comment