Improving the Distributional Alignment of LLMs using Supervision

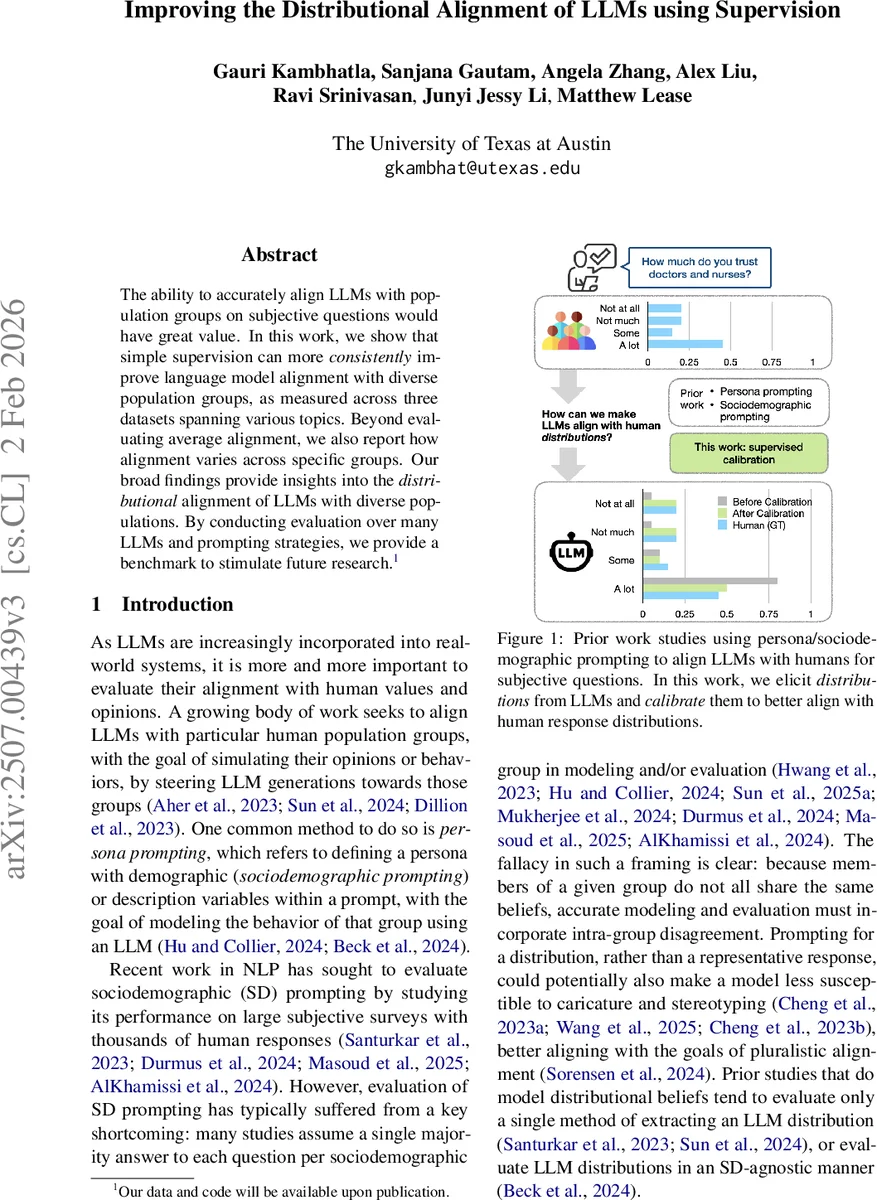

The ability to accurately align LLMs with population groups on subjective questions would have great value. In this work, we show that simple supervision can more consistently improve language model alignment with diverse population groups, as measured across three datasets spanning various topics. Beyond evaluating average alignment, we also report how alignment varies across specific groups. Our broad findings provide insights into the distributional alignment of LLMs with diverse populations. By conducting evaluation over many LLMs and prompting strategies, we provide a benchmark to stimulate future research.

💡 Research Summary

This paper investigates how to improve the alignment between large language models (LLMs) and human opinion distributions across diverse demographic groups. While prior work has largely focused on “sociodemographic prompting” – inserting demographic descriptors into prompts to steer a model toward a single representative answer – the authors argue that such approaches ignore intra‑group variability and risk ecological fallacies. Instead, they propose a distributional alignment framework that compares the full probability distribution over answer choices generated by an LLM with the empirical distribution of human responses for each demographic slice.

Three public survey datasets are used: Welcome Global Monitor (2018), OpinionQA, and the World Values Survey, together providing 92 questions and roughly 4,500 human response distributions across multiple demographic axes (e.g., age, gender, education). Fifteen LLMs spanning open‑source, open‑weight, and API‑only families (Claude‑3.5‑v2, Llama‑3.2‑90B, Mistral‑large, OLMo‑2‑7B‑I, Qwen‑2.5‑72B, etc.) are evaluated.

The authors explore two prompt families: a “Base” prompt that asks the question without demographic context, and a “Sociodemographic (SD)” prompt that prefixes the question with a demographic description (e.g., “Imagine you are a 30‑year‑old female”). Each family is further varied with standard, few‑shot, and chain‑of‑thought (CoT) formulations. To extract probability distributions from the models, three elicitation methods are tested:

- Verbalized – the model is asked to output a probability vector directly (e.g., “

Comments & Academic Discussion

Loading comments...

Leave a Comment