RePPL: Recalibrating Perplexity by Uncertainty in Semantic Propagation and Language Generation for Explainable QA Hallucination Detection

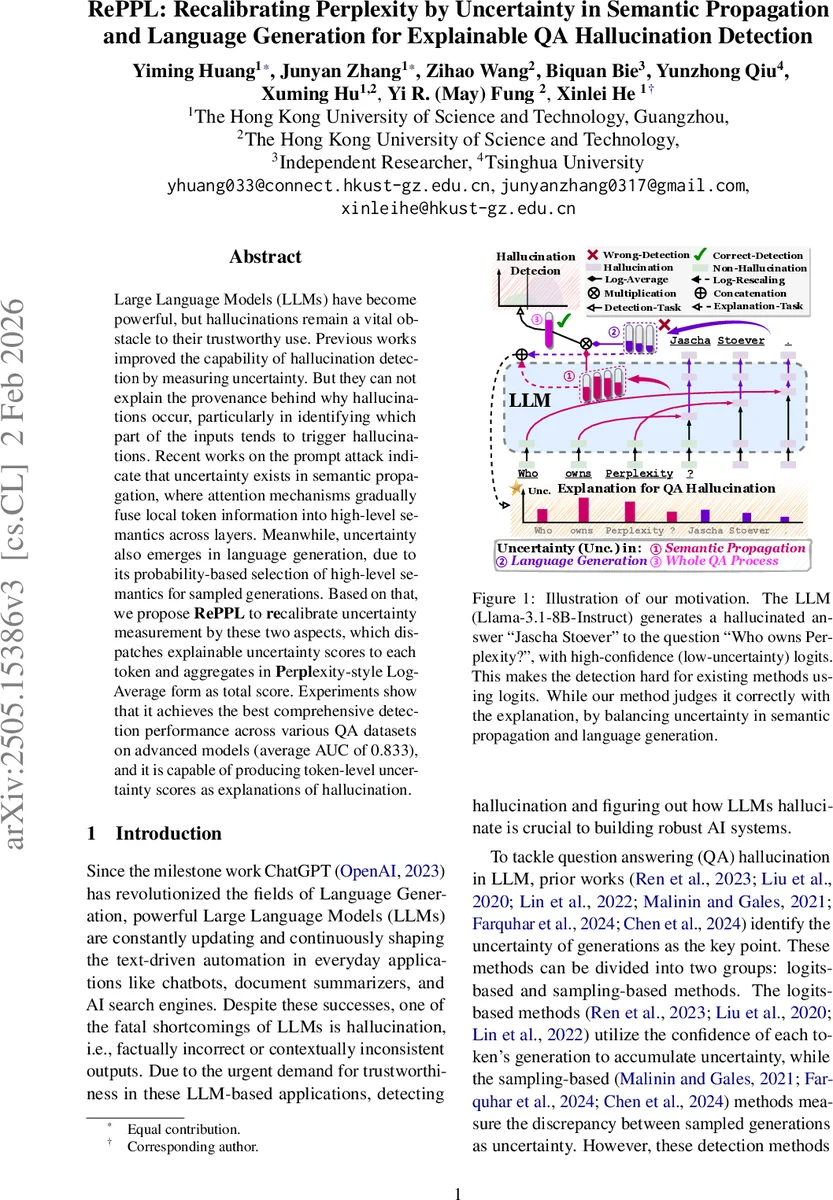

Large Language Models (LLMs) have become powerful, but hallucinations remain a vital obstacle to their trustworthy use. Previous works improved the capability of hallucination detection by measuring uncertainty. But they can not explain the provenance behind why hallucinations occur, particularly in identifying which part of the inputs tends to trigger hallucinations. Recent works on the prompt attack indicate that uncertainty exists in semantic propagation, where attention mechanisms gradually fuse local token information into high-level semantics across layers. Meanwhile, uncertainty also emerges in language generation, due to its probability-based selection of high-level semantics for sampled generations. Based on that, we propose RePPL to recalibrate uncertainty measurement by these two aspects, which dispatches explainable uncertainty scores to each token and aggregates in Perplexity-style Log-Average form as a total score. Experiments show that it achieves the best comprehensive detection performance across various QA datasets on advanced models (average AUC of 0.833), and it is capable of producing token-level uncertainty scores as explanations of hallucination.

💡 Research Summary

The paper introduces RePPL, a novel hallucination detection metric for large language models (LLMs) that explicitly accounts for two distinct sources of uncertainty: (1) uncertainty arising during semantic propagation (the way input tokens are transformed into high‑level representations across transformer layers) and (2) uncertainty inherent in the language generation process (the stochastic selection of tokens based on probability distributions). Existing detection methods either rely on log‑likelihood‑based perplexity or on sampling‑based disagreement, but they treat the output as a black box and cannot pinpoint which parts of the prompt or generated text cause hallucinations.

To capture semantic‑propagation uncertainty, the authors extract attention matrices from each layer and head of the LLM during generation. They apply three simple pooling strategies—maximum, average, and Rollout—to obtain a token‑to‑token attribution matrix R. For N sampled generations (N=10 in experiments), they collect N attribution matrices {R₁,…,R_N}. The region of interest (ROI) consists of columns corresponding to input tokens and rows corresponding to output tokens, reflecting how each input token contributes to each generated token. By averaging across rows (tokens) and then computing the coefficient of variation (standard deviation divided by mean) across the N samples, they obtain a per‑input‑token variability vector r. This vector is transformed into a pseudo‑confidence ˆp_i = 1/(1 + r_i^α) (α is a scaling hyper‑parameter). A log‑rescaling and length‑normalization yields the InnerPPL score: InnerPPL = – (1/T₀) Σ_i log(ˆp_i), where T₀ is the number of input tokens.

For generation‑side uncertainty, they compute token‑level confidence p_g from the model’s logits for each token in the greedy decoding path and normalize by the average length ¯S of the N sampled generations: OuterPPL = – (1/¯S) Σ_g log(p_g). The divisor ¯S penalizes cases where the greedy output is unusually short (a sign of high confidence) and rewards cases where it is comparable to sampled outputs (a sign of uncertainty).

The final RePPL score multiplies the two components with a small bias ε to avoid division by zero: RePPL = – (InnerPPL + ε) × OuterPPL. Multiplication ensures that a high score is only produced when both propagation and generation uncertainties are high, providing a hierarchical distinction that improves detection robustness.

The method also outputs token‑level uncertainty scores (both from the attribution variance and from generation confidence), which serve as explanations: they highlight which input tokens most destabilize the semantic flow and which generated tokens are most likely hallucinated.

Experiments were conducted on three open‑source instruction‑tuned LLMs (Llama‑3.1‑8B‑Instruct, Qwen2.5‑7B‑Instruct, Qwen2.5‑14B‑Instruct) across four QA benchmarks: TriviaQA, Natural Questions (closed‑book), CoQA, and SQuAD‑v2.0 (open‑book). The authors compare RePPL against a suite of baselines: traditional Perplexity, Verbalize (P(True)), Semantic Entropy, EigenScore, and several probe‑based classifiers. Using the same sampling settings as prior work (N=10, temperature = 1.0, top‑k = 50, top‑p = 0.99), RePPL achieves an average AUC of 0.833, outperforming all baselines on every dataset and model. Qualitative case studies (e.g., the question “Who owns Perplexity?”) demonstrate that RePPL correctly flags a hallucinated answer (“Jascha Stoever”) even when the logits suggest high confidence, because the attribution variance reveals that the prompt token “Perplexity” induces unstable semantic propagation.

Ablation studies examine the impact of the pooling method, the α scaling factor, and the bias ε. Results show modest sensitivity: MaxPool, AvgPool, and Rollout all yield similar performance, and RePPL remains stable across a reasonable range of α (0.5–2.0) and ε (10⁻⁵–10⁻³). The authors also test robustness to different numbers of samples (N = 5, 15) and find performance degrades gracefully.

In summary, RePPL advances hallucination detection by (1) jointly modeling uncertainty in both internal representation flow and external token selection, (2) providing token‑level explanations that identify the provenance of hallucinations, and (3) delivering state‑of‑the‑art detection accuracy across diverse QA tasks and modern LLMs. The work opens avenues for integrating explainable uncertainty metrics into real‑time LLM applications and for extending the approach to multimodal or instruction‑following scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment