Learning-Augmented Power System Operations: A Unified Optimization View

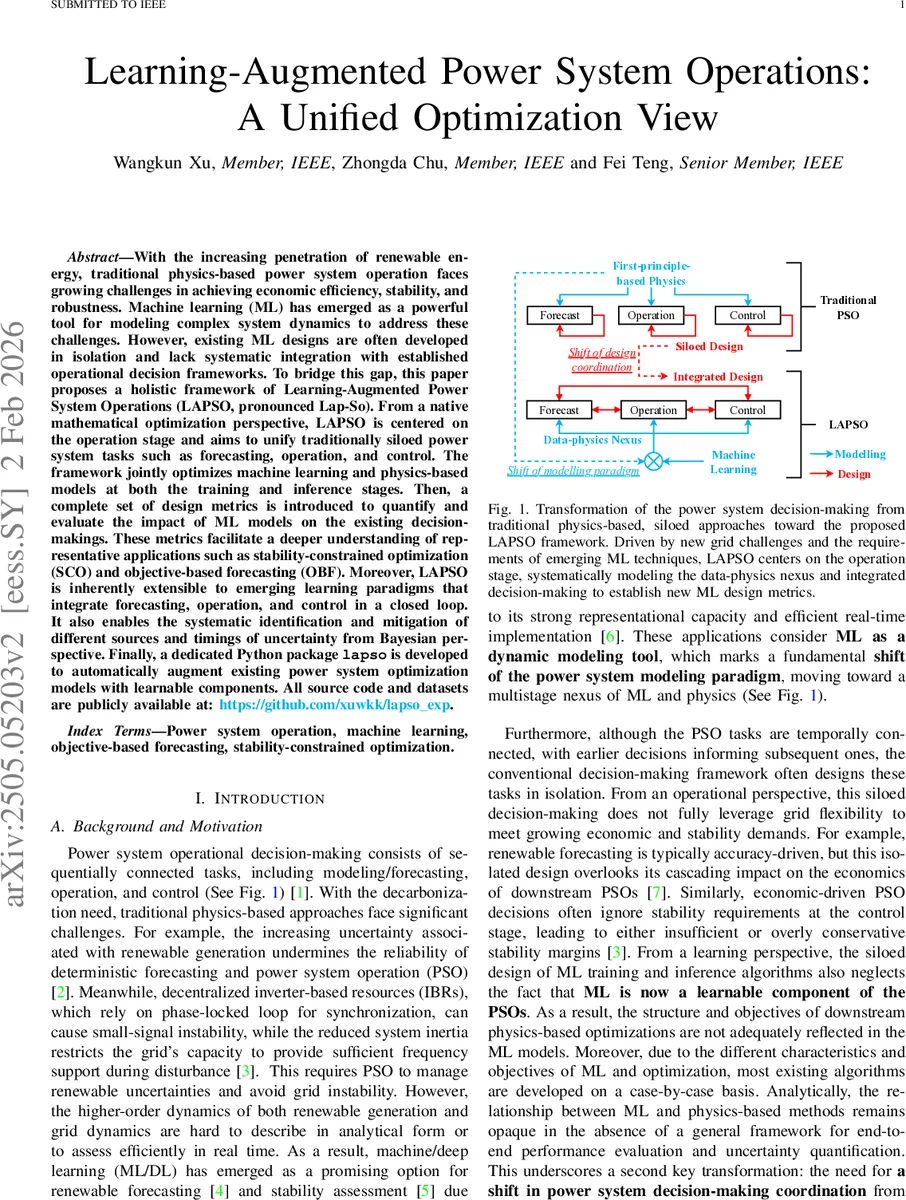

With the increasing penetration of renewable energy, traditional physics-based power system operation faces growing challenges in achieving economic efficiency, stability, and robustness. Machine learning (ML) has emerged as a powerful tool for modeling complex system dynamics to address these challenges. However, existing ML designs are often developed in isolation and lack systematic integration with established operational decision frameworks. To bridge this gap, this paper proposes a holistic framework of Learning-Augmented Power System Operations (LAPSO, pronounced Lap-So). From a native mathematical optimization perspective, LAPSO is centered on the operation stage and aims to unify traditionally siloed power system tasks such as forecasting, operation, and control. The framework jointly optimizes machine learning and physics-based models at both the training and inference stages. A complete set of design metrics is then introduced to quantify and evaluate the impact of ML models on existing decision-making. These metrics facilitate a deeper understanding of representative applications such as stability-constrained optimization (SCO) and objective-based forecasting (OBF). Moreover, LAPSO is inherently extensible to emerging learning paradigms that integrate forecasting, operation, and control in a closed loop. It also enables the systematic identification and mitigation of different sources and timings of uncertainty from a Bayesian perspective. Finally, a dedicated Python package `lapso` is developed to automatically augment existing power system optimization models with learnable components. All source code and datasets are publicly available at: https://github.com/xuwkk/lapso_exp.

💡 Research Summary

The paper addresses the growing challenges in power system operation caused by high renewable penetration and inverter‑based resources (IBRs). Traditional physics‑based approaches struggle to simultaneously guarantee economic efficiency, stability, and robustness under increasing uncertainty. To bridge the gap between data‑driven machine learning (ML) and physics‑based optimization, the authors propose a unified framework called Learning‑Augmented Power System Operations (LAPSO).

LAPSO is centered on the operation stage and treats the sequential tasks of forecasting, operation, and control as a single optimization problem. Starting from a generic operation model $P_{\text{basic}}: \min_z f(z; y) \ \text{s.t.}\ g(z; y) \le 0$, the framework partitions the parameters $y$ into an unpredictable part $y_1$ and a predictable part $y_2$. A parametric ML model $v(\cdot; \theta^*)$ is trained on supervised data $\{(x, z, y_1), y_2\}$ and used to predict $\hat y_2$ inside the optimization. The learned prediction becomes a decision variable, constrained by an auxiliary function $g_v(\cdot) \le \tau$ and possibly contributing an extra term $f_v(\cdot)$ to the objective. In compact form, the learning‑augmented problem is written as $P_{\text{lapso}}: \min_z \tilde f(z; y_1, x, \theta^*) \ \text{s.t.}\ \tilde g(z; y_1, x, \theta^*) \le 0$.
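
One way to read the compact form is to write the embedded model out explicitly. The expansion below is a sketch assembled from the description above, not the paper's exact statement; in particular, the argument lists of $v$, $f_v$, and $g_v$ are assumptions.

$$
\begin{aligned}
P_{\text{lapso}}:\quad \min_{z,\,\hat y_2}\quad & f(z; y_1, \hat y_2) + f_v(\hat y_2) \\
\text{s.t.}\quad & g(z; y_1, \hat y_2) \le 0, \\
& \hat y_2 = v(x, z, y_1; \theta^*), \\
& g_v(\hat y_2) \le \tau .
\end{aligned}
$$

Eliminating $\hat y_2$ through the equality constraint leaves a problem in $z$ alone, which is what $\tilde f$ and $\tilde g$ abbreviate.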

A central contribution is the introduction of a “design triangle” that adds a third dimension—impact on the underlying optimization—to the conventional ML design criteria of accuracy and generalization. The authors identify three key trade‑offs: (1) computational efficiency versus model expressiveness, (2) optimality guarantees versus conservatism, and (3) robustness to uncertainty versus predictive performance. These trade‑offs are quantified through metrics that evaluate how the ML component affects solution time, cost optimality, and stability margins.

The framework is shown to subsume two representative applications: Stability‑Constrained Optimization (SCO) and Objective‑Based Forecasting (OBF). In SCO, ML‑based stability assessments (e.g., small‑signal or transient stability indices) are encoded as constraints, allowing the economic dispatch or unit commitment to respect stability limits without solving costly differential equations. In OBF, the downstream optimization problem is treated as an implicit loss function; gradients of the optimal decision with respect to forecast parameters are obtained via differentiable optimization, enabling end‑to‑end training that aligns forecast quality with operational cost. Both cases illustrate how LAPSO provides a common mathematical language for otherwise disparate ML‑optimization hybrids.
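
To make the SCO idea concrete, here is a minimal sketch of a two-generator dispatch in which a learned linear stability index is added as a constraint. Everything in it is hypothetical: the function name, the costs, the weights `w` and offset `b` of the surrogate, and the threshold `tau` are illustrative values, not taken from the paper or from the `lapso` package. Because the surrogate is linear, the augmented problem stays a linear program, which here reduces to choosing an endpoint of an interval.

```python
def dispatch_with_stability(demand, cost, p_max, w, b, tau):
    """Two-generator economic dispatch with a learned linear stability constraint.

    Minimizes cost[0]*p1 + cost[1]*p2 subject to
        p1 + p2 = demand, 0 <= p_i <= p_max[i],
        w[0]*p1 + w[1]*p2 + b >= tau   (hypothetical ML stability surrogate).
    Returns (p1, p2, total_cost), or None if the problem is infeasible.
    """
    c1, c2 = cost
    w1, w2 = w
    # Substitute p2 = demand - p1, so only p1 remains; start from generator limits.
    lo = max(0.0, demand - p_max[1])
    hi = min(p_max[0], demand)
    # Stability constraint becomes (w1 - w2) * p1 >= tau - b - w2 * demand.
    slope = w1 - w2
    rhs = tau - b - w2 * demand
    if slope > 0:
        lo = max(lo, rhs / slope)
    elif slope < 0:
        hi = min(hi, rhs / slope)
    elif rhs > 0:
        return None  # constraint does not depend on p1 and is violated
    if lo > hi + 1e-9:
        return None  # empty feasible interval
    # Cost is linear in p1, so the optimum sits at an endpoint of [lo, hi].
    p1 = hi if c1 < c2 else lo
    p2 = demand - p1
    return p1, p2, c1 * p1 + c2 * p2


# Illustrative data: generator 1 is cheap but lowers the (assumed) stability
# index, generator 2 is expensive but raises it.
args = dict(demand=100.0, cost=(10.0, 30.0), p_max=(80.0, 80.0),
            w=(-0.01, 0.02), b=0.5)
loose = dispatch_with_stability(tau=0.0, **args)   # constraint inactive
tight = dispatch_with_stability(tau=0.8, **args)   # constraint binding
print("loose threshold:", loose)
print("tight threshold:", tight)
```

With the tight threshold the dispatch shifts toward the more expensive generator until the surrogate index reaches `tau`, which is the cost-versus-stability trade-off that SCO exposes.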

Uncertainty is treated from a Bayesian perspective. The authors distinguish sources (ML model parameters, input features, unknown grid parameters) and realization times (pre‑operational forecasting vs. real‑time control). Using automatic differentiation and the implicit function theorem, they derive sensitivity formulas that propagate parameter uncertainty through the entire learning‑augmented pipeline. To hedge against “wait‑and‑see” uncertainties, a robust multi‑stage, multi‑level training algorithm is proposed. The algorithm iteratively refines the ML model while solving a master problem that generates worst‑case scenarios; the resulting subproblems are efficiently tackled with a column‑and‑constraint generation (C&CG) scheme.
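
The implicit-function-theorem step can be illustrated on a scalar toy problem. The sketch below assumes a hypothetical operation objective $f(z; y) = \tfrac{1}{2} a z^2 + e^z - y z$ (chosen only because its optimum has no closed form), solves the stationarity condition with Newton's method, and compares the implicit sensitivity $dz^*/dy$ with a finite-difference estimate. None of the function names come from the paper or its packages.

```python
import math


def solve_operation(y, a=2.0, tol=1e-12, max_iter=100):
    """Return z* = argmin_z 0.5*a*z**2 + exp(z) - y*z via Newton's method.

    The stationarity condition is F(z, y) = a*z + exp(z) - y = 0.
    """
    z = 0.0
    for _ in range(max_iter):
        residual = a * z + math.exp(z) - y
        if abs(residual) < tol:
            break
        z -= residual / (a + math.exp(z))  # Newton step, dF/dz = a + exp(z)
    return z


def sensitivity_ift(y, a=2.0):
    """dz*/dy from the implicit function theorem: -(dF/dy) / (dF/dz)."""
    z = solve_operation(y, a)
    dF_dz = a + math.exp(z)
    dF_dy = -1.0
    return -dF_dy / dF_dz


def sensitivity_fd(y, a=2.0, h=1e-5):
    """Central finite-difference estimate of dz*/dy, used as a check."""
    return (solve_operation(y + h, a) - solve_operation(y - h, a)) / (2 * h)


y_hat = 3.0  # hypothetical forecast value fed into the operation problem
print("implicit:", sensitivity_ift(y_hat))
print("finite difference:", sensitivity_fd(y_hat))
```

The implicit formula needs one solve plus a derivative evaluation at the optimum, whereas finite differences need a re-solve per perturbed parameter; this is the reason implicit differentiation is preferred when gradients must pass through an optimization layer.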

To facilitate adoption, two open‑source Python packages are released. The `pso` package automates the creation of large‑scale testbeds and spatiotemporal datasets for training ML models. The `lapso` package provides a flexible interface to embed learnable components into existing power system operation (PSO) formulations, handling model insertion, constraint transformation, and gradient computation. All code and datasets are publicly available on GitHub.

Experimental validation is performed on IEEE 14‑, 39‑, 57‑, 118‑, and 300‑bus systems, covering a variety of ML architectures (linear regression, decision trees, feed‑forward NNs, convolutional NNs) and optimization problems (unit commitment, economic dispatch, AC‑OPF). Results demonstrate that LAPSO‑based SCO reduces operational cost by 3–7% while improving stability margins by 10–15% compared with traditional methods. OBF integrated via LAPSO yields forecast models that directly minimize expected dispatch cost, achieving comparable or better economic performance than cost‑agnostic forecasters. Computationally, the inclusion of learnable components does not change the problem class (e.g., linear or convex) and adds only modest overhead (1–2×) in the training phase, while real‑time solution times remain within practical limits. Robust training further limits worst‑case cost escalation to under 20% under severe uncertainty.

In conclusion, LAPSO offers a principled, optimization‑centric paradigm for co‑designing ML models and power system operations. By treating ML as an integral, learnable component rather than a peripheral predictor, the framework aligns predictive accuracy with operational objectives, quantifies and mitigates uncertainty, and preserves the tractability of established optimization solvers. Future work will explore real‑time scalability to very large networks, integration with reinforcement learning for adaptive control, and coupling with market mechanisms to capture economic incentives.
