CASE -- Condition-Aware Sentence Embeddings for Conditional Semantic Textual Similarity Measurement

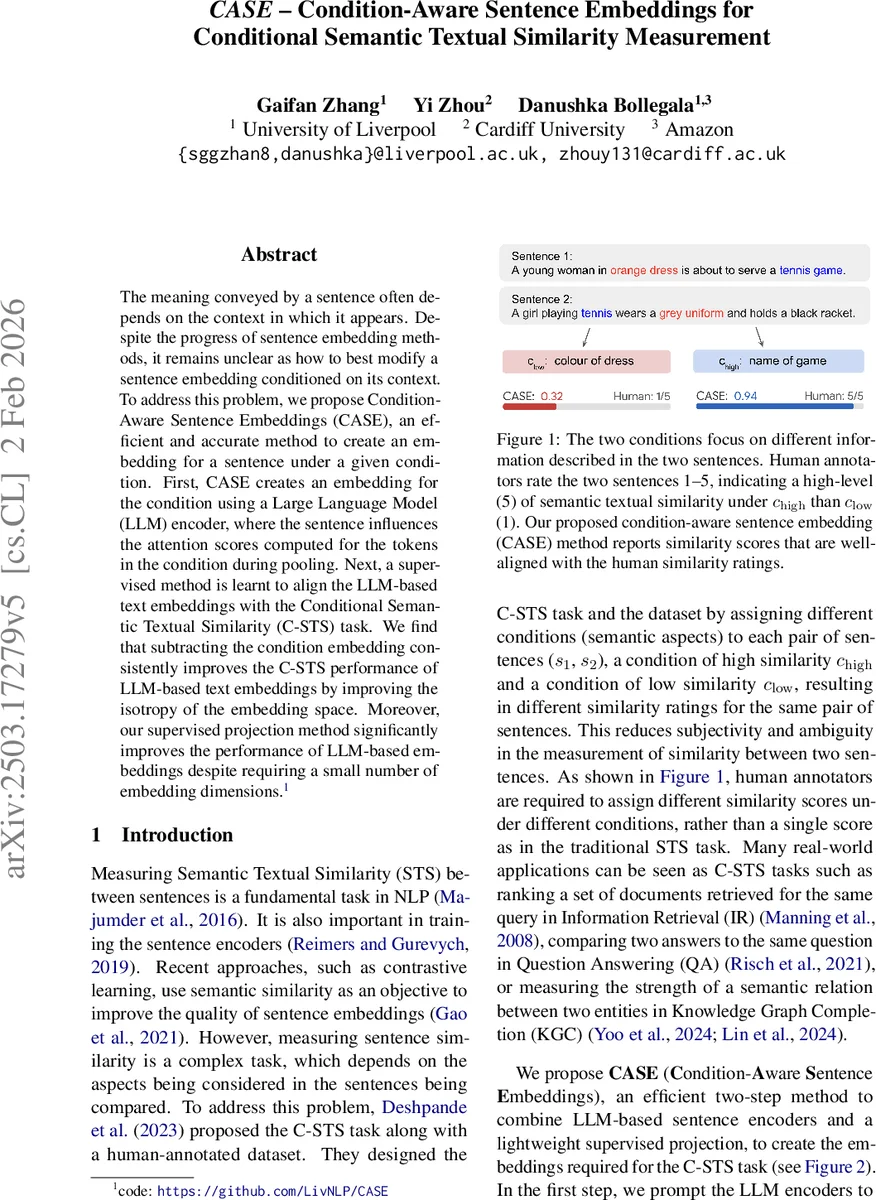

The meaning conveyed by a sentence often depends on the context in which it appears. Despite the progress of sentence embedding methods, it remains unclear how best to modify a sentence embedding conditioned on its context. To address this problem, we propose Condition-Aware Sentence Embeddings (CASE), an efficient and accurate method to create an embedding for a sentence under a given condition. First, CASE creates an embedding for the condition using a Large Language Model (LLM) encoder, where the sentence influences the attention scores computed for the tokens in the condition during pooling. Next, a supervised method is learnt to align the LLM-based text embeddings with the Conditional Semantic Textual Similarity (C-STS) task. We find that subtracting the condition embedding consistently improves the C-STS performance of LLM-based text embeddings by improving the isotropy of the embedding space. Moreover, our supervised projection method significantly improves the performance of LLM-based embeddings while requiring only a small number of embedding dimensions.
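The subtraction step described in the abstract can be illustrated with a minimal sketch. It assumes the condition-aware embeddings of the two sentences and the embedding of the condition alone are already available as NumPy vectors; the function names, the toy vectors, and the optional `projection` matrix are hypothetical stand-ins, not the authors' implementation, and the abstract does not specify how the projection is trained.

```python
import numpy as np


def cosine(u, v):
    """Cosine similarity between two 1-D vectors."""
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))


def conditional_similarity(e1, e2, cond, projection=None):
    """Similarity of two condition-aware embeddings after removing the
    shared condition embedding (assumed form of the subtraction step).

    e1, e2     : embeddings computed with sentence 1 / sentence 2 in context
    cond       : embedding of the condition on its own
    projection : optional (k, d) matrix standing in for a learnt
                 low-dimensional projection (hypothetical placeholder)
    """
    d1 = e1 - cond
    d2 = e2 - cond
    if projection is not None:
        d1 = projection @ d1
        d2 = projection @ d2
    return cosine(d1, d2)


# Toy vectors: both embeddings share a large condition component.
cond = np.array([1.0, 1.0, 0.0, 0.0])
e1 = cond + np.array([0.0, 0.0, 1.0, 0.0])
e2 = cond + np.array([0.0, 0.0, 0.0, 1.0])

raw = cosine(e1, e2)                             # dominated by the shared component
adjusted = conditional_similarity(e1, e2, cond)  # shared component removed

# A made-up 1 x 4 projection, only to show the optional argument.
W = np.array([[0.0, 0.0, 1.0, 1.0]])
projected = conditional_similarity(e1, e2, cond, projection=W)
```

In this toy case the raw cosine is high only because both vectors contain the same condition component; once it is subtracted, the remaining sentence-specific directions are orthogonal. This is a simplified picture of why removing a common component can spread embeddings more evenly across the space.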

💡 Research Summary

The paper introduces CASE (Condition‑Aware Sentence Embeddings), a two‑step framework designed to produce sentence embeddings that are explicitly conditioned on a given textual context, thereby enabling accurate measurement of Conditional Semantic Textual Similarity (C‑STS). The authors first observe that traditional sentence embeddings are largely context‑independent, which limits their applicability when similarity judgments depend on external conditions (e.g., “color of the dress”). To address this, CASE leverages large language model (LLM) encoders with a carefully crafted prompt: “Retrieve
