CAARMA: Class Augmentation with Adversarial Mixup Regularization

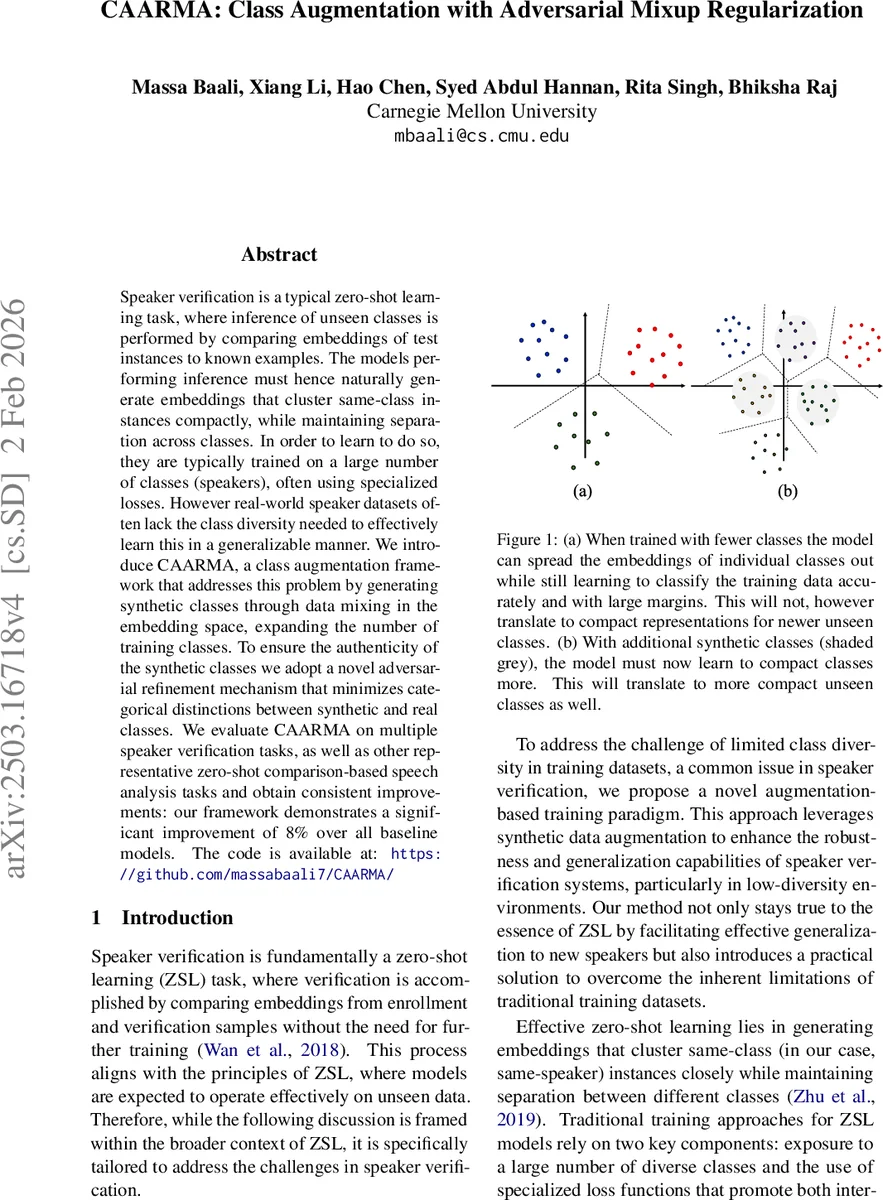

Speaker verification is a typical zero-shot learning task, where inference of unseen classes is performed by comparing embeddings of test instances to known examples. The models performing inference must hence naturally generate embeddings that cluster same-class instances compactly, while maintaining separation across classes. In order to learn to do so, they are typically trained on a large number of classes (speakers), often using specialized losses. However real-world speaker datasets often lack the class diversity needed to effectively learn this in a generalizable manner. We introduce CAARMA, a class augmentation framework that addresses this problem by generating synthetic classes through data mixing in the embedding space, expanding the number of training classes. To ensure the authenticity of the synthetic classes we adopt a novel adversarial refinement mechanism that minimizes categorical distinctions between synthetic and real classes. We evaluate CAARMA on multiple speaker verification tasks, as well as other representative zero-shot comparison-based speech analysis tasks and obtain consistent improvements: our framework demonstrates a significant improvement of 8% over all baseline models. The code is available at: https://github.com/massabaali7/CAARMA/

💡 Research Summary

The paper tackles speaker verification as a zero‑shot learning problem, emphasizing that effective verification requires embeddings that are tightly clustered for the same speaker while remaining well separated across different speakers. Conventional training relies on exposing the model to a large number of speaker classes and using specialized loss functions (e.g., AM‑Softmax, triplet loss). However, many real‑world speaker datasets lack sufficient class diversity, limiting generalization to unseen speakers.

CAARMA (Class Augmentation with Adversarial Mixup Regularization) addresses this limitation by artificially expanding the number of training classes. The core idea is to perform mixup not in the raw audio domain—where mixing two speech signals would simply produce a garbled sound—but in the learned embedding space. Within each mini‑batch, embeddings of two different speakers are linearly combined with a fixed weight of 0.5, producing a synthetic embedding that lies midway between the two original clusters. A new synthetic label (ID_syn) is assigned to each mixed embedding, effectively creating a “virtual speaker” class.

To ensure that these synthetic embeddings are indistinguishable from real ones, the authors introduce an adversarial discriminator built from a pretrained self‑supervised learning (SSL) model. The discriminator tries to classify embeddings as real (R) or synthetic (S), while the encoder (the speaker embedding network) is simultaneously trained to fool the discriminator. This adversarial game yields two additional loss terms: a discriminator loss L_D that maximizes the separation between R and S, and a generator loss L_gen that minimizes it. The encoder also continues to optimize the standard AM‑Softmax loss on both real (L_real) and synthetic (L_syn) embeddings, with L_syn scaled by the inverse of the number of classes (λ) to prevent the synthetic data from dominating training.

The overall training objective combines these components:

L_total = L_real + (1/λ)·L_syn + α·L_gen – β·L_D,

where α and β balance the adversarial contributions. After training, the discriminator is discarded, and the encoder alone is used for inference.

Experiments on multiple public speaker verification benchmarks (VoxCeleb1/2, LibriSpeech, etc.) demonstrate that CAARMA consistently improves performance over strong baselines, achieving an average 8 % reduction in Equal Error Rate (EER). The gains are especially pronounced when the original dataset contains a limited number of speakers, confirming that synthetic class augmentation directly mitigates the class‑diversity bottleneck. Additional evaluations on other zero‑shot speech comparison tasks (e.g., emotion recognition, speaker diarization) show similar benefits, indicating the method’s broader applicability.

In summary, CAARMA introduces a novel embedding‑space mixup strategy that creates synthetic speaker identities, and couples it with an adversarial refinement mechanism to preserve statistical authenticity. This combination effectively enlarges the training class set without requiring external data generation, leading to more compact intra‑class embeddings and better separation across classes, thereby enhancing zero‑shot speaker verification and related speech tasks. Future work may explore more sophisticated mixing schemes (non‑linear or multi‑sample mixup) and richer discriminator architectures to further boost the realism of synthetic classes.

Comments & Academic Discussion

Loading comments...

Leave a Comment