HI-SLAM2: Geometry-Aware Gaussian SLAM for Fast Monocular Scene Reconstruction

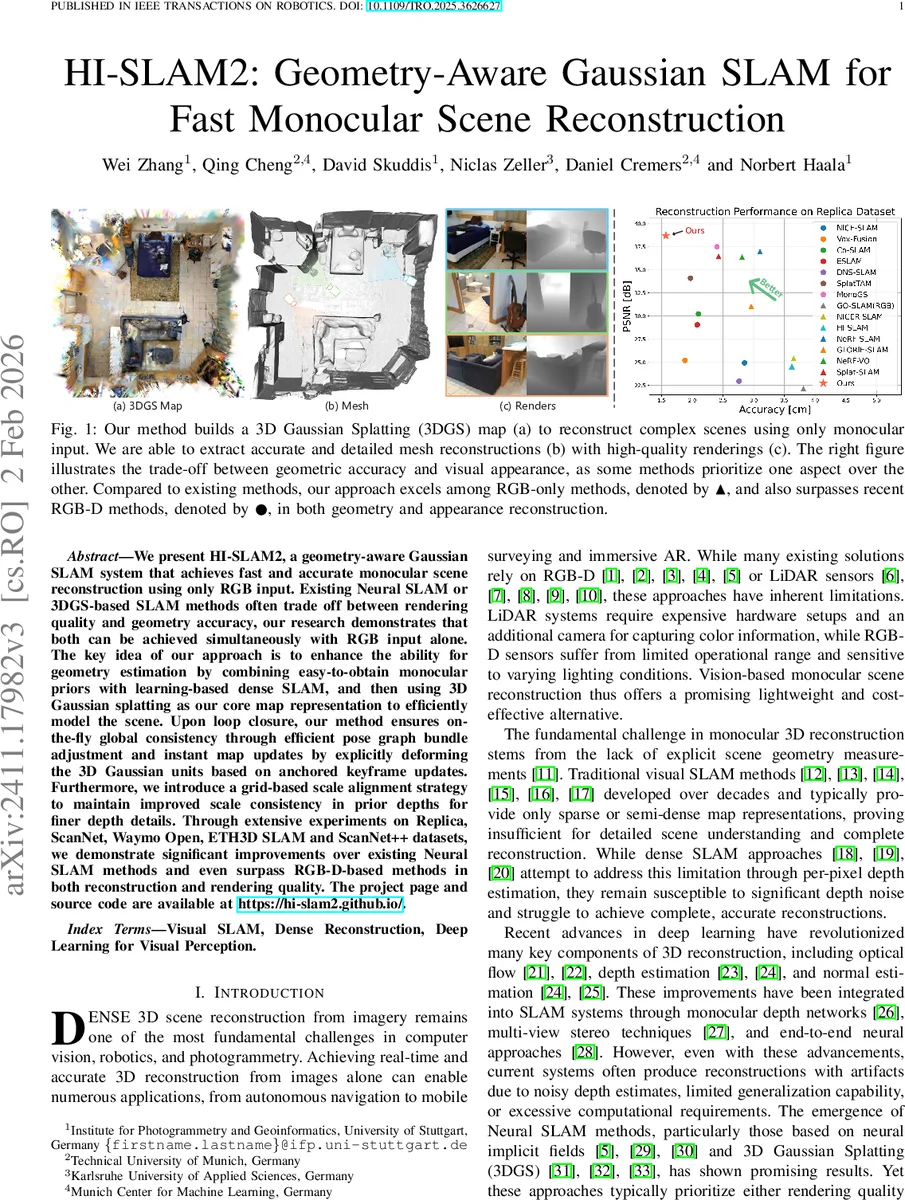

We present HI-SLAM2, a geometry-aware Gaussian SLAM system that achieves fast and accurate monocular scene reconstruction using only RGB input. Existing Neural SLAM or 3DGS-based SLAM methods often trade off between rendering quality and geometry accuracy, our research demonstrates that both can be achieved simultaneously with RGB input alone. The key idea of our approach is to enhance the ability for geometry estimation by combining easy-to-obtain monocular priors with learning-based dense SLAM, and then using 3D Gaussian splatting as our core map representation to efficiently model the scene. Upon loop closure, our method ensures on-the-fly global consistency through efficient pose graph bundle adjustment and instant map updates by explicitly deforming the 3D Gaussian units based on anchored keyframe updates. Furthermore, we introduce a grid-based scale alignment strategy to maintain improved scale consistency in prior depths for finer depth details. Through extensive experiments on Replica, ScanNet, and ScanNet++, we demonstrate significant improvements over existing Neural SLAM methods and even surpass RGB-D-based methods in both reconstruction and rendering quality. The project page and source code will be made available at https://hi-slam2.github.io/.

💡 Research Summary

HI‑SLAM2 introduces a geometry‑aware dense monocular SLAM system that leverages recent advances in 3‑D Gaussian Splatting (3DGS) and monocular depth/normal priors to achieve both high‑quality rendering and accurate geometry using only RGB images. The authors first identify the long‑standing trade‑off in Neural SLAM: methods based on neural implicit fields (e.g., NeRF) produce photorealistic views but suffer from poor geometric fidelity, while 3DGS‑based SLAM improves geometry at the cost of rendering speed or vice‑versa. Their solution is two‑fold.

-

Monocular Geometry Priors with Scale‑Grid Alignment – A pretrained monocular depth network supplies per‑pixel depth estimates, and a normal estimator provides surface orientation cues. Because monocular depth lacks an absolute scale and often exhibits spatially varying scale drift, the authors propose a Joint Depth and Scale Alignment (JDSA) strategy. Instead of a single global scale factor, they partition the image plane into a 2‑D grid, assign a scale variable to each cell, and interpolate bilinearly across cells. This grid‑based scale map is optimized jointly with camera poses using a Schur‑complement‑based bundle adjustment, preserving computational efficiency while correcting non‑linear scale distortions.

-

Explicit 3DGS Map Representation – The scene is represented as a collection of Gaussian primitives (position, covariance, color, opacity). Compared with the implicit field used in the predecessor HI‑SLAM, 3DGS enables real‑time rasterization, direct parameter updates, and incremental map growth without a predefined scene boundary. When a new keyframe is added, the system inserts fresh Gaussians and deforms existing ones according to the updated keyframe pose, achieving “instant map updates” that take only a few milliseconds—orders of magnitude faster than re‑training a neural field.

The overall pipeline consists of four stages:

-

Online Tracking – A recurrent optical‑flow network predicts dense correspondences; a keyframe graph encodes co‑visibility. Depth and normal priors are extracted for each selected keyframe (based on flow magnitude). The JDSA module aligns depth scales, and a local bundle adjustment refines poses and depths within a sliding window.

-

Online Loop Closing – Loop detection triggers a Sim(3)‑based pose‑graph bundle adjustment (PGBA). This simultaneously corrects drift in rotation, translation, and scale, feeding the corrected scale back to the JDSA module.

-

Continuous Mapping – The 3DGS map is updated online. Gaussian parameters are directly optimized with respect to the current poses, and a deformation field anchored to keyframes propagates pose updates to the map instantly.

-

Offline Refinement – After the sequence finishes, a full bundle adjustment over all keyframes is performed, followed by joint optimization of all Gaussian parameters and poses. The final dense surface is extracted via TSDF fusion of rendered depth maps.

Extensive experiments on Replica, ScanNet, ScanNet++, Waymo Open, and ETH3D SLAM demonstrate that HI‑SLAM2 outperforms prior Neural SLAM approaches (e.g., MonoGS, Splat‑SLAM) and even surpasses state‑of‑the‑art RGB‑D SLAM systems (e.g., ElasticFusion) in both geometry and appearance metrics. Quantitatively, the method reduces Absolute Trajectory Error by ~29 % relative to pure online tracking, improves depth RMSE on Replica by 1.54 cm, and raises PSNR/SSIM by 1–2 dB and 0.02 respectively. Real‑time performance is maintained at >30 fps, with tracking at ~12 ms/frame and map updates at ~8 ms/frame.

Ablation studies confirm the importance of the grid‑based scale alignment (removing it degrades depth accuracy by ~12 %) and of the Gaussian‑based map (reverting to an implicit field increases runtime by >5× and lowers rendering quality). The system also proves robust in low‑texture and low‑light scenarios, where the normal priors help stabilize surface reconstruction.

In summary, HI‑SLAM2 delivers a practical, RGB‑only SLAM solution that does not compromise between visual fidelity and geometric precision. Its two main contributions—spatially adaptive scale alignment and an explicit 3DGS map with instant deformation—open the door for deploying dense monocular SLAM on resource‑constrained platforms such as mobile robots, AR headsets, and autonomous drones where depth sensors are impractical. Future work may explore multi‑scale priors, learned regularization of Gaussian parameters, or integration with semantic segmentation to further enrich the reconstructed scene.

Comments & Academic Discussion

Loading comments...

Leave a Comment