Inferring Scientific Cross-Document Coreference and Hierarchy with Definition-Augmented Relational Reasoning

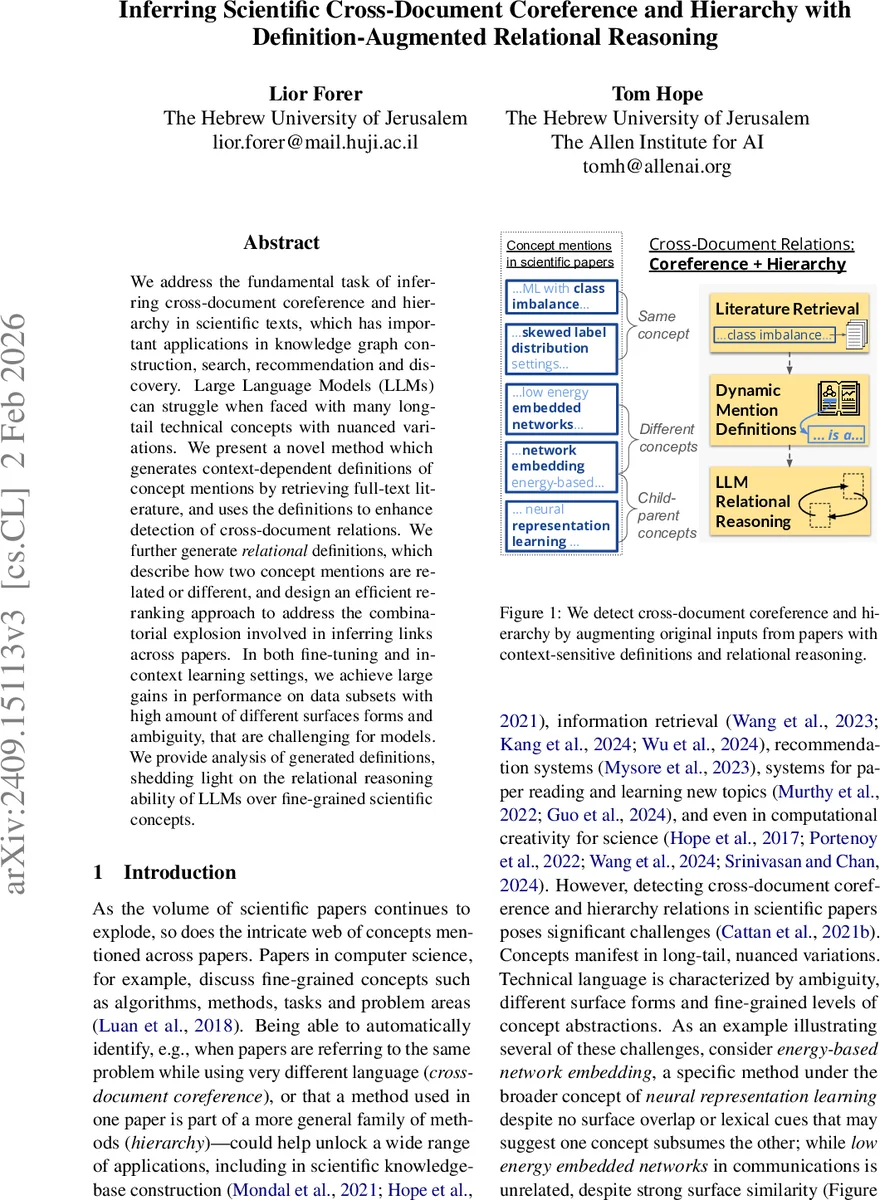

We address the fundamental task of inferring cross-document coreference and hierarchy in scientific texts, which has important applications in knowledge graph construction, search, recommendation and discovery. Large Language Models (LLMs) can struggle when faced with many long-tail technical concepts with nuanced variations. We present a novel method which generates context-dependent definitions of concept mentions by retrieving full-text literature, and uses the definitions to enhance detection of cross-document relations. We further generate relational definitions, which describe how two concept mentions are related or different, and design an efficient re-ranking approach to address the combinatorial explosion involved in inferring links across papers. In both fine-tuning and in-context learning settings, we achieve large gains in performance on data subsets with high amount of different surfaces forms and ambiguity, that are challenging for models. We provide analysis of generated definitions, shedding light on the relational reasoning ability of LLMs over fine-grained scientific concepts.

💡 Research Summary

The paper tackles the challenging problem of hierarchical cross‑document coreference resolution (H‑CDCR) in scientific literature, where the goal is to both cluster mentions that refer to the same concept across many papers and to infer a parent‑child hierarchy among those clusters. Existing approaches rely heavily on surface‑form similarity or simple contextual cues, which fail in the scientific domain because concepts often appear with long‑tail, ambiguous, or highly varied terminology. To overcome this, the authors introduce a definition‑augmented reasoning framework that enriches each mention with dynamically generated, context‑dependent definitions.

The core idea consists of two types of definitions. Singleton definitions are generated for each individual mention by retrieving relevant passages from a large corpus of full‑text arXiv papers, re‑ranking those passages, and prompting a large language model (LLM) to synthesize a concise definition that reflects both the local sentence context and the broader literature. This Retrieval‑Augmented Generation (RAG) pipeline yields high‑quality definitions that can be pre‑computed and stored offline.

Relational definitions go a step further by explicitly describing how two mentions are related (e.g., “A is a sub‑concept of B” or “A and B are unrelated”). Since generating relational definitions for every possible pair would be computationally prohibitive (the SCICO test set contains over half a million pairs), the authors devise a two‑stage candidate re‑ranking strategy. First, a model trained with singleton definitions scores all mention pairs and discards those predicted as “none”. Then, only the top‑k (≈25 % of the remaining pairs) are passed to the relational definition generator. This cascade mirrors classic retrieve‑and‑rerank pipelines in information retrieval and dramatically reduces the number of expensive LLM calls.

For model training, the authors adopt the standard four‑class classification formulation used in prior H‑CDCR work: (1) coreferent, (2) A → B (A is a child of B), (3) B → A, and (4) none. Definitions are injected into the input stream using special tokens (

Empirical results on the SCICO benchmark demonstrate substantial gains. With singleton definitions alone, fine‑tuned models improve overall CoNLL‑F1 by 3–5 percentage points over the non‑augmented baselines. On the hardest 10 % subset (Hard‑10), which contains the most ambiguous and surface‑form‑diverse mentions, the improvement rises to 7–9 pp. Adding relational definitions yields an extra 4 pp boost on hierarchy detection, confirming that explicit pairwise reasoning helps the model distinguish parent‑child from unrelated pairs. In the ICL regime, GPT‑4o‑mini’s performance jumps markedly when definition augmentation is enabled, narrowing the gap to fully fine‑tuned systems.

The authors also analyze definition quality. Human annotators rate the generated definitions for factual correctness and relevance; automatic ROUGE/BLEU scores correlate with downstream performance, indicating that better definitions lead to better coreference and hierarchy predictions. Error analysis reveals that occasional hallucinations in LLM‑generated definitions can mislead the classifier, especially when the retrieved literature is noisy. The paper discusses limitations such as the computational cost of definition generation and the dependence on the underlying retrieval corpus.

Future directions suggested include (1) automated verification and refinement of generated definitions, (2) incorporation of multimodal evidence (figures, tables) to enrich definitions, and (3) structuring definitions as graph edges to directly populate scientific knowledge graphs. Overall, the work presents a compelling paradigm: by grounding LLM reasoning in dynamically retrieved, definition‑level knowledge, we can substantially improve fine‑grained scientific concept understanding and relational inference, opening new avenues for knowledge‑graph construction, literature search, and recommendation in the research ecosystem.

Comments & Academic Discussion

Loading comments...

Leave a Comment