Unified ROI-based Image Compression Paradigm with Generalized Gaussian Model

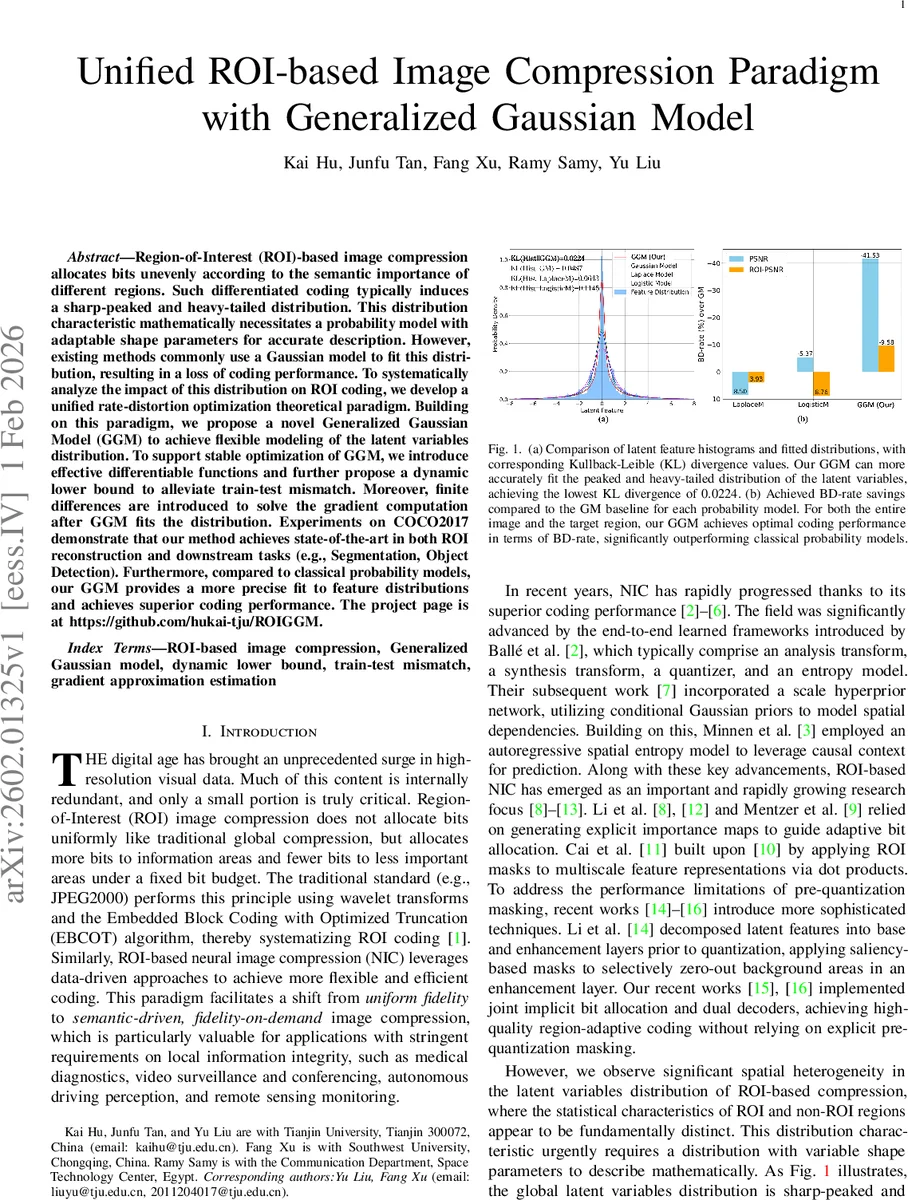

Region-of-Interest (ROI)-based image compression allocates bits unevenly according to the semantic importance of different regions. Such differentiated coding typically induces a sharp-peaked, heavy-tailed distribution of the latent variables, which can only be described accurately by a probability model with an adaptable shape parameter. However, existing methods commonly fit this distribution with a Gaussian model, which costs coding performance. To systematically analyze the impact of this distribution on ROI coding, we develop a unified theoretical paradigm for rate-distortion optimization. Building on this paradigm, we propose a novel Generalized Gaussian Model (GGM) that flexibly models the latent variable distribution. To support stable optimization of the GGM, we introduce effective differentiable functions and further propose a dynamic lower bound to alleviate train-test mismatch. Moreover, finite differences are used to compute the gradients that arise once the GGM is fitted to the distribution. Experiments on COCO2017 demonstrate that our method achieves state-of-the-art performance in both ROI reconstruction and downstream tasks (e.g., segmentation and object detection). Furthermore, compared to classical probability models, our GGM fits feature distributions more precisely and achieves superior coding performance. The project page is at https://github.com/hukai-tju/ROIGGM.

💡 Research Summary

This paper addresses a fundamental limitation in region‑of‑interest (ROI) based image compression: the latent feature distribution becomes sharply peaked with heavy tails due to the unequal bit allocation between important and background regions. Existing neural image compression (NIC) methods typically assume a single Gaussian prior for the latent variables, which cannot capture this heterogeneity and leads to sub‑optimal entropy coding.

The authors first develop a unified rate‑distortion optimization (RDO) framework that explicitly incorporates ROI weighting, a Lagrange multiplier λ, and a probabilistic prior. Within this framework they introduce a Generalized Gaussian Model (GGM) as the prior. GGM is parameterized by a scale α and a shape β, allowing independent control of the distribution’s width and tail heaviness. By learning α and β for each latent channel, the model can accurately fit the observed sharp‑peak/long‑tail distribution.
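As a rough illustration of the scale/shape parameterization described above, the sketch below evaluates the standard generalized Gaussian density, f(x) = β / (2αΓ(1/β)) · exp(−(|x − μ|/α)^β). The function names and the location parameter `mu` are assumptions made for this example and are not taken from the paper's code. It also shows the two familiar special cases: β = 2 recovers a Gaussian with σ = α/√2, and β = 1 recovers a Laplacian with scale α.

```python
import math

def ggm_pdf(x, mu=0.0, alpha=1.0, beta=2.0):
    """Density of a generalized Gaussian with location mu, scale alpha, shape beta."""
    if alpha <= 0 or beta <= 0:
        raise ValueError("alpha and beta must be positive")
    # Normalization constant: beta / (2 * alpha * Gamma(1 / beta)).
    norm = beta / (2.0 * alpha * math.gamma(1.0 / beta))
    return norm * math.exp(-((abs(x - mu) / alpha) ** beta))

def gaussian_pdf(x, mu=0.0, sigma=1.0):
    """Reference Gaussian density, used only to check the beta = 2 case."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))

def laplace_pdf(x, mu=0.0, b=1.0):
    """Reference Laplacian density, used only to check the beta = 1 case."""
    return math.exp(-abs(x - mu) / b) / (2.0 * b)
```

Values of β below 1 give a sharper peak and heavier tails than the Laplacian, which is the regime the summary associates with ROI-driven latents.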

To ensure stable training, the paper proposes two differentiable activation functions: a Softplus mapping for β to keep it positive and smoothly varying, and a Huber‑like function for α that prevents excessively small scale values. Small α values are known to cause a train‑test mismatch because training adds uniform noise while testing uses hard rounding; the authors mitigate this by imposing a dynamic lower bound on α that gradually relaxes during training.
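The two ideas in this paragraph can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: `softplus` is the standard function, but the linear schedule in `dynamic_lower_bound`, its `start` and `end` values, and the hard `max` clamp in `bounded_scale` are all hypothetical. The summary does not give the form of the Huber‑like function or of the bound, so neither is reproduced here.

```python
import math

def softplus(x):
    """Numerically stable log(1 + exp(x)); positive up to floating-point underflow."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def dynamic_lower_bound(step, total_steps, start=0.5, end=0.05):
    """Hypothetical schedule: relax the bound linearly from `start` to `end`.

    Assumes total_steps > 0. The values 0.5 and 0.05 are placeholders.
    """
    t = min(max(step / total_steps, 0.0), 1.0)
    return start + t * (end - start)

def bounded_scale(raw_alpha, step, total_steps):
    """Clamp the scale from below by the current bound (assumed form)."""
    return max(raw_alpha, dynamic_lower_bound(step, total_steps))

def shape_from_raw(raw_beta):
    """Map an unconstrained network output to a positive shape parameter."""
    return softplus(raw_beta)
```

In an autodiff framework the clamp would need a gradient-friendly form, which is presumably where the paper's smooth Huber‑like mapping comes in.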

A major technical hurdle is the gradient of the regularized lower incomplete gamma function P(a,b), which appears in the GGM’s cumulative distribution function and therefore in the likelihood assigned to each quantized latent. Its derivative with respect to the first argument has no simple closed form, so the authors approximate it with a central finite‑difference scheme, which keeps back‑propagation accurate and efficient.
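The finite‑difference idea can be sketched in plain Python. The code below is an illustrative assumption rather than the authors' implementation: it writes the function as P(a, x), evaluates it with a textbook power series (adequate for moderate x), and differentiates with respect to a using a central difference. The step size `h` and all function names are hypothetical. The last function shows where P enters the generalized Gaussian CDF, F(x) = 1/2 + sign(x − μ)/2 · P(1/β, (|x − μ|/α)^β).

```python
import math

def reg_lower_gamma(a, x, max_terms=500, tol=1e-15):
    """Regularized lower incomplete gamma P(a, x) via its power series."""
    if a <= 0 or x < 0:
        raise ValueError("requires a > 0 and x >= 0")
    if x == 0:
        return 0.0
    term = 1.0
    total = 1.0
    for n in range(1, max_terms):
        term *= x / (a + n)          # x^n / ((a+1)(a+2)...(a+n))
        total += term
        if term < tol * total:
            break
    # Prefactor x^a * exp(-x) / Gamma(a + 1), computed in log space.
    return total * math.exp(a * math.log(x) - x - math.lgamma(a + 1.0))

def dP_da(a, x, h=1e-4):
    """Central finite-difference estimate of dP/da (assumes a > h)."""
    return (reg_lower_gamma(a + h, x) - reg_lower_gamma(a - h, x)) / (2.0 * h)

def ggm_cdf(x, mu=0.0, alpha=1.0, beta=2.0):
    """CDF of the generalized Gaussian, expressed through P(1/beta, z**beta)."""
    z = abs(x - mu) / alpha
    p = reg_lower_gamma(1.0 / beta, z ** beta)
    return 0.5 + 0.5 * math.copysign(1.0, x - mu) * p
```

The central difference has O(h²) truncation error, so a moderately small `h` gives a good estimate at the cost of two extra evaluations of P per gradient.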

Experiments are conducted on the COCO2017 dataset with object masks serving as ROI maps. The proposed GGM is compared against Gaussian, Laplacian, Logistic, Gaussian Mixture Model (GMM), and Gaussian‑Laplacian‑Logistic Mixture Model (GLLMM) priors. Results show that KL divergence falls by more than half (0.0487 → 0.0224), with BD‑rate savings of roughly 12‑18 % for both whole images and ROI regions. Moreover, when compressed images are fed to downstream vision tasks, object detection (YOLOv5) improves by ~1.9 % in mAP and segmentation (Mask R‑CNN) by ~2.2 % in mIoU, which suggests that the more accurate entropy model preserves semantic information.

In summary, the paper makes four key contributions: (1) a unified RDO paradigm that ties together ROI weighting, distortion metrics, and probabilistic modeling; (2) a novel, learnable GGM tailored for ROI‑driven latent distributions; (3) specialized activation functions and a dynamic lower‑bound strategy that stabilize GGM training; and (4) an efficient finite‑difference gradient estimator for the incomplete gamma function. The proposed method sets a new state‑of‑the‑art for ROI‑based image compression, delivering superior compression efficiency and better performance on downstream tasks. The code and pretrained models are publicly released.
