PolySAE: Modeling Feature Interactions in Sparse Autoencoders via Polynomial Decoding

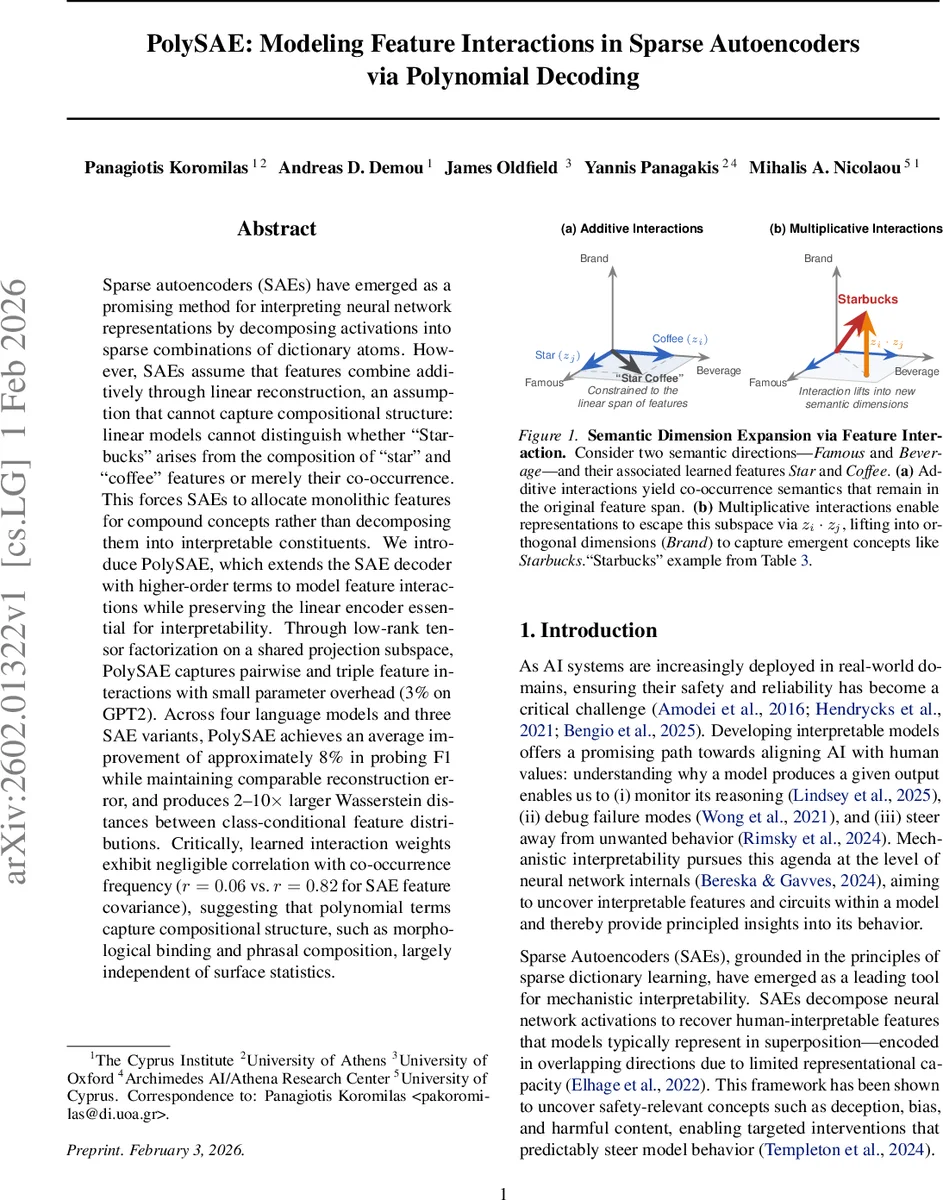

Sparse autoencoders (SAEs) have emerged as a promising method for interpreting neural network representations by decomposing activations into sparse combinations of dictionary atoms. However, SAEs assume that features combine additively through linear reconstruction, an assumption that cannot capture compositional structure: linear models cannot distinguish whether “Starbucks” arises from the composition of “star” and “coffee” features or merely their co-occurrence. This forces SAEs to allocate monolithic features for compound concepts rather than decomposing them into interpretable constituents. We introduce PolySAE, which extends the SAE decoder with higher-order terms to model feature interactions while preserving the linear encoder essential for interpretability. Through low-rank tensor factorization on a shared projection subspace, PolySAE captures pairwise and triple feature interactions with small parameter overhead (3% on GPT2). Across four language models and three SAE variants, PolySAE achieves an average improvement of approximately 8% in probing F1 while maintaining comparable reconstruction error, and produces 2-10$\times$ larger Wasserstein distances between class-conditional feature distributions. Critically, learned interaction weights exhibit negligible correlation with co-occurrence frequency ($r = 0.06$ vs. $r = 0.82$ for SAE feature covariance), suggesting that polynomial terms capture compositional structure, such as morphological binding and phrasal composition, largely independent of surface statistics.

💡 Research Summary

PolySAE introduces a polynomial decoder to the traditional sparse autoencoder (SAE) framework, addressing a core limitation of existing SAEs: the assumption that latent features combine additively in a linear reconstruction. While SAEs have become a standard tool for mechanistic interpretability—decomposing neural activations into sparse, human‑readable directions—their linear decoder cannot distinguish true compositional semantics from mere co‑occurrence. For example, the concept “Starbucks” could be represented either by a dedicated monolithic feature or by the separate “star” and “coffee” features, but a linear model cannot tell whether the two words are being composed or simply appear together.
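The additivity limitation can be checked numerically. The sketch below is a toy illustration, not the paper's code: the sizes, the random decoder `W_dec`, the bias `b_dec`, and the choice of features 0 and 1 as stand-ins for "star" and "coffee" are all assumptions made for the example. It shows that under a linear decoder, the reconstruction with both features active is exactly the sum of the two single-feature reconstructions, so no part of the output is specific to their joint activation.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, n_features = 8, 4                       # toy sizes (assumed)
W_dec = rng.normal(size=(n_features, d_model))   # one dictionary atom per row
b_dec = rng.normal(size=d_model)                 # decoder bias


def linear_decode(z):
    """Standard SAE decoder: a weighted sum of dictionary atoms plus a bias."""
    return z @ W_dec + b_dec


# Hypothetical stand-ins: feature 0 plays "star", feature 1 plays "coffee".
z_star = np.array([1.0, 0.0, 0.0, 0.0])
z_coffee = np.array([0.0, 1.0, 0.0, 0.0])

joint = linear_decode(z_star + z_coffee)
# Sum of the single-feature reconstructions, with the doubly counted bias removed.
summed = linear_decode(z_star) + linear_decode(z_coffee) - b_dec

# Residual that only the joint activation could explain; zero for a linear decoder.
interaction = joint - summed
additive = np.allclose(interaction, 0.0)
```

Because `interaction` is identically zero here, a linear decoder can only represent a compound concept by giving it a separate dictionary atom of its own.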

PolySAE preserves the linear encoder of standard SAEs, ensuring that each sparse code coefficient $z_i$ remains a direct linear projection of the input activation. This satisfies the interpretability requirement that each latent dimension corresponds to a specific direction in activation space, which can be visualized, clustered, and intervened upon. The novelty lies in extending the decoder to a third-order polynomial expansion, which adds pairwise and triple feature-interaction terms to the linear reconstruction.
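To make the idea of a low-rank third-order decoder concrete, here is a minimal sketch. It is an assumed parameterization, not necessarily the one used by PolySAE: the names `U`, `C2`, `C3`, the `rank`, and the elementwise-power form of the interaction terms are all hypothetical choices. The sparse code is projected into a small shared subspace, and the quadratic and cubic terms are built from elementwise powers of that projection, which corresponds to a CP-style low-rank factorization of the interaction tensors.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, n_features, rank = 8, 4, 3              # toy sizes (assumed)
W_dec = rng.normal(size=(n_features, d_model))   # linear dictionary atoms
b_dec = rng.normal(size=d_model)                 # decoder bias
U = rng.normal(size=(n_features, rank))          # shared projection (hypothetical)
C2 = rng.normal(size=(rank, d_model))            # output map for the 2nd-order term
C3 = rng.normal(size=(rank, d_model))            # output map for the 3rd-order term


def poly_decode(z, C2=C2, C3=C3):
    """Bias + linear term + low-rank quadratic and cubic interaction terms."""
    u = z @ U                                    # project codes into the subspace
    return b_dec + z @ W_dec + (u * u) @ C2 + (u * u * u) @ C3


z_a = np.array([1.0, 0.0, 0.0, 0.0])
z_b = np.array([0.0, 1.0, 0.0, 0.0])

x_hat = poly_decode(z_a + z_b)
# Part of the joint reconstruction not explained by the two features alone.
interaction = x_hat - poly_decode(z_a) - poly_decode(z_b) + b_dec
has_interaction = not np.allclose(interaction, 0.0)

# With the higher-order maps zeroed out, the decoder reduces to a linear SAE decoder.
linear_only = poly_decode(z_a + z_b, C2=np.zeros_like(C2), C3=np.zeros_like(C3))
```

In this parameterization the extra parameters scale with `rank` rather than with the square or cube of the dictionary size, which is one way the overhead could stay small. Note that elementwise powers of `u` also include self-interaction terms such as $z_i^2$; whether the actual model keeps or removes those is not stated in the text above.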
