ReDiStory: Region-Disentangled Diffusion for Consistent Visual Story Generation

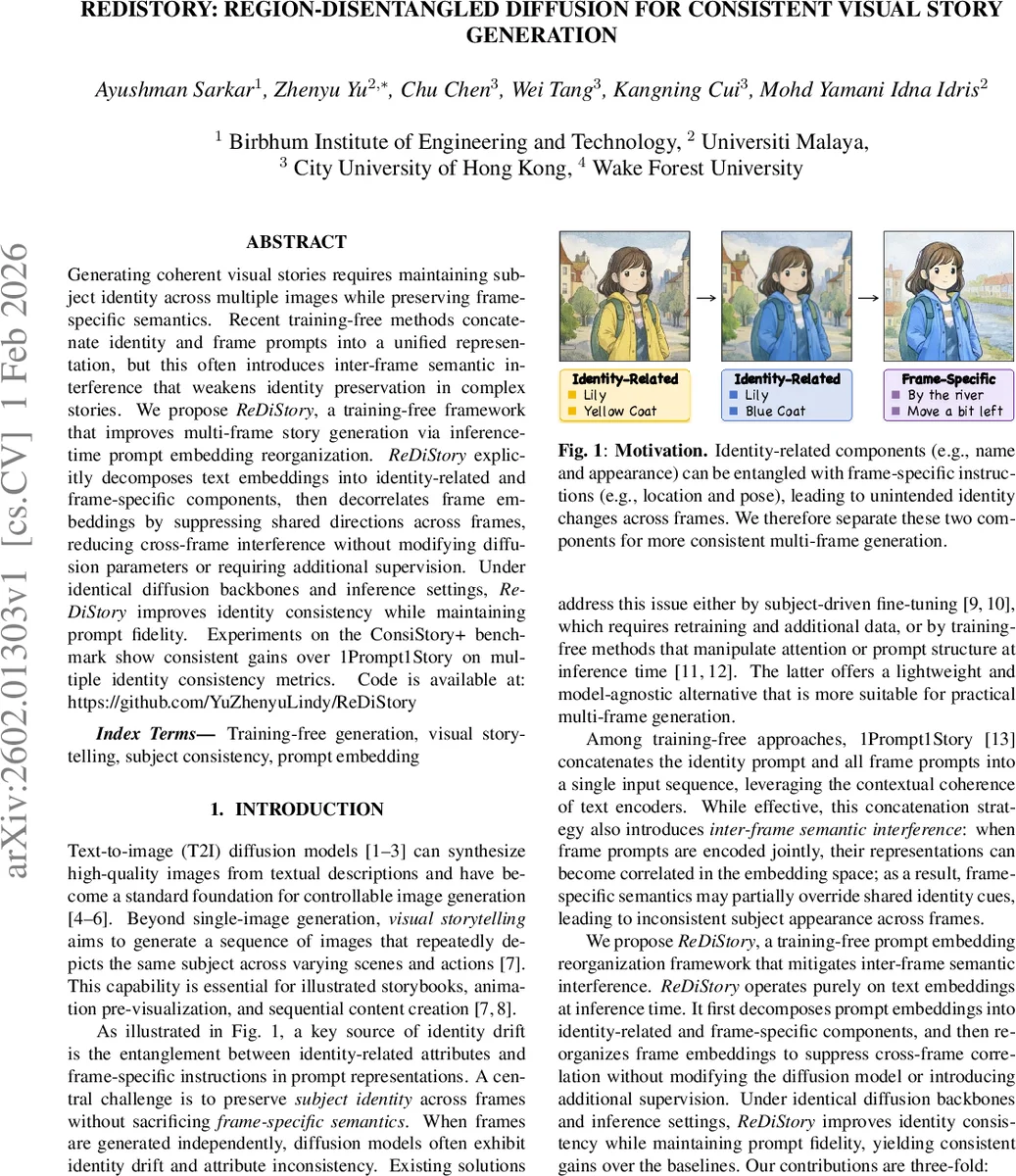

Generating coherent visual stories requires maintaining subject identity across multiple images while preserving frame-specific semantics. Recent training-free methods concatenate identity and frame prompts into a unified representation, but this often introduces inter-frame semantic interference that weakens identity preservation in complex stories. We propose ReDiStory, a training-free framework that improves multi-frame story generation via inference-time prompt embedding reorganization. ReDiStory explicitly decomposes text embeddings into identity-related and frame-specific components, then decorrelates frame embeddings by suppressing shared directions across frames. This reduces cross-frame interference without modifying diffusion parameters or requiring additional supervision. Under identical diffusion backbones and inference settings, ReDiStory improves identity consistency while maintaining prompt fidelity. Experiments on the ConsiStory+ benchmark show consistent gains over 1Prompt1Story on multiple identity consistency metrics. Code is available at: https://github.com/YuZhenyuLindy/ReDiStory

💡 Research Summary

ReDiStory tackles the persistent problem of identity drift in multi‑frame visual storytelling without any model fine‑tuning. The authors observe that existing training‑free approaches such as 1Prompt1Story concatenate an identity prompt with all frame prompts and feed the combined text to a frozen text‑to‑image diffusion model. While this leverages the contextual coherence of large language encoders, it also creates inter‑frame semantic interference: the embeddings of different frames become correlated, allowing frame‑specific instructions to overwrite shared identity cues, which leads to inconsistent appearances across the generated story.

The proposed framework operates entirely at inference time on the text embeddings. First, each concatenated prompt (identity ⊕ frame n) is encoded with the model’s text encoder, producing a token‑level embedding matrix Eₙ. This matrix is explicitly split into two parts: E_id, containing the rows that correspond to the identity tokens (identical for every frame), and E_fⁿ, containing the rows for the frame‑specific tokens. This decomposition makes it possible to treat the shared identity information and the per‑frame information asymmetrically.

The second step decorrelates the frame‑specific embeddings across the story. For each frame n, the method computes a corrected embedding ˜E_fⁿ by subtracting the average projection of E_fⁿ onto the subspaces spanned by all other frame embeddings E_f^m (m ≠ n). Formally: ˜E_fⁿ = E_fⁿ − (1/(N‑1)) ∑{m≠n} Proj{E_f^m}(E_fⁿ). This operation removes directions that are common to multiple frames, thereby suppressing the shared semantic components that cause interference while preserving the unique content of each frame. The final prompt embedding for generation is then ˜Eₙ =

Comments & Academic Discussion

Loading comments...

Leave a Comment