Interaction-Consistent Object Removal via MLLM-Based Reasoning

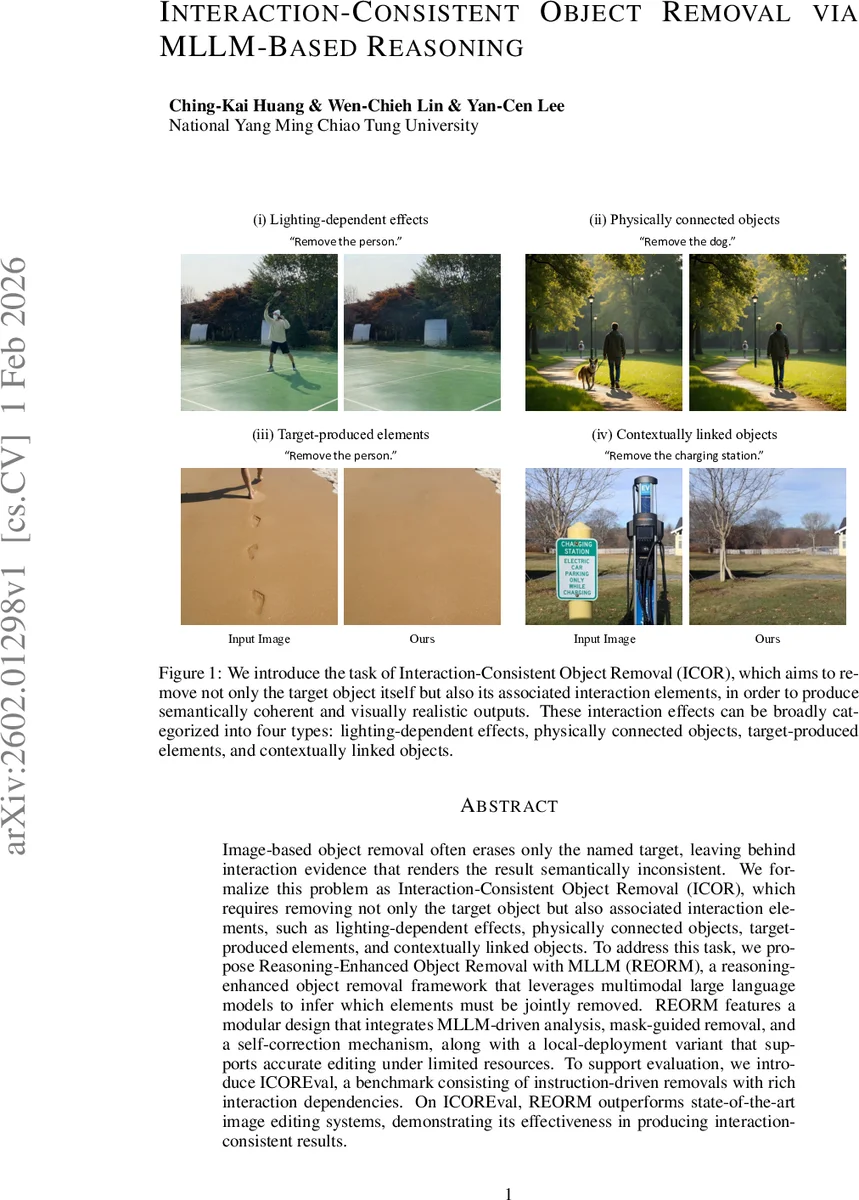

Image-based object removal often erases only the named target, leaving behind interaction evidence that renders the result semantically inconsistent. We formalize this problem as Interaction-Consistent Object Removal (ICOR), which requires removing not only the target object but also associated interaction elements, such as lighting-dependent effects, physically connected objects, targetproduced elements, and contextually linked objects. To address this task, we propose Reasoning-Enhanced Object Removal with MLLM (REORM), a reasoningenhanced object removal framework that leverages multimodal large language models to infer which elements must be jointly removed. REORM features a modular design that integrates MLLM-driven analysis, mask-guided removal, and a self-correction mechanism, along with a local-deployment variant that supports accurate editing under limited resources. To support evaluation, we introduce ICOREval, a benchmark consisting of instruction-driven removals with rich interaction dependencies. On ICOREval, REORM outperforms state-of-the-art image editing systems, demonstrating its effectiveness in producing interactionconsistent results.

💡 Research Summary

The paper tackles a fundamental shortcoming of current image‑based object removal methods: they typically erase only the explicitly named target, leaving behind shadows, reflections, attached accessories, footprints, signs, or other contextual cues that make the edited image semantically incoherent. To address this, the authors formalize a new task called Interaction‑Consistent Object Removal (ICOR). ICOR requires that, when an instruction such as “remove the dog” is given, the system must also eliminate any elements that would become illogical or visually odd after the dog disappears. The authors categorize these interaction elements into four groups: (i) lighting‑dependent effects (shadows, reflections), (ii) physically connected objects (leash, bag), (iii) target‑produced elements (footprints, sand depressions), and (iv) contextually linked objects (signs, displays that refer to the target).

To solve ICOR, the paper introduces REORM (Reasoning‑Enhanced Object Removal with Multimodal Large Language Models). REORM is a modular pipeline that leverages the commonsense reasoning capabilities of a multimodal LLM (specifically GPT‑4o) to infer which additional elements must be removed. The pipeline consists of three main stages:

-

MLLM‑Driven Analysis – The instruction is fed to the MLLM with a chain‑of‑thought prompt. The model first identifies the target and then reasons about all dependent elements, outputting a textual list. This reasoning step is crucial because it goes beyond simple object detection; it explicitly models cause‑effect relationships in the scene.

-

Open‑Vocabulary Segmentation & Mask‑Guided Removal – The list of elements is passed to Grounded‑SAM, an open‑vocabulary segmentation model, which produces binary masks for each item. These masks are then supplied to a diffusion‑based mask‑guided removal model (ObjectClear). By constraining the inpainting to the exact regions identified by the MLLM, the method preserves the surrounding background and avoids unintended alterations.

-

MLLM‑Controlled Self‑Correction – After the first removal pass, a second MLLM (also GPT‑4o) simulates the expected post‑removal scene in textual form and compares it with the edited image. Any residual inconsistencies are compiled into a correction list, re‑segmented, and removed with a second inpainting model (Attentive Eraser). This verification loop compensates for artifacts or hallucinations that diffusion models may introduce, especially when weaker inpainting back‑ends are used.

Recognizing the need for on‑device deployment, the authors also propose a lightweight variant. In this version, a smaller open‑source MLLM replaces GPT‑4o, and the reasoning process is split into multiple sub‑steps via prompt chaining. Additionally, a hybrid MLLM‑LLM collaboration is employed, where a compact LLM handles simpler sub‑tasks while the MLLM focuses on the more nuanced reasoning. This design enables the entire pipeline to run on a single GPU with modest memory, making it suitable for privacy‑sensitive or offline applications.

To evaluate the approach, the authors construct ICOREval, a benchmark consisting of paired images, natural‑language removal instructions, and ground‑truth edited images that respect all four interaction categories. ICOREval contains over a thousand diverse scenes, ranging from indoor settings with attached accessories to outdoor environments with shadows and footprints. Experiments show that REORM outperforms state‑of‑the‑art object removal systems (e.g., Jiang et al., 2025; Sun et al., 2025) and recent MLLM‑augmented editors on standard image quality metrics (PSNR, SSIM, LPIPS) as well as on a human‑rated “interaction consistency” score. Notably, REORM achieves >95 % precision in removing lighting‑dependent effects and physically connected objects, and its local deployment variant incurs less than a 10 % performance drop compared to the cloud‑based full model.

The paper’s contributions are fourfold: (1) defining the ICOR task and a taxonomy of interaction effects, (2) proposing a reasoning‑centric pipeline that integrates MLLM inference with open‑vocabulary segmentation and mask‑guided diffusion, (3) introducing a self‑correction loop that leverages the same MLLM for verification, and (4) releasing the ICOREval benchmark to spur further research.

Limitations include the heavy reliance on the MLLM’s reasoning accuracy; mis‑identified dependencies can propagate errors through the pipeline. Moreover, the current system handles only static 2‑D images and may struggle with complex physical phenomena such as multiple reflections or translucency. Future work could explore interactive human‑in‑the‑loop correction, domain‑specific multimodal pre‑training to strengthen commonsense knowledge, and extensions to 3‑D or video contexts where temporal cues aid interaction modeling.

In summary, REORM demonstrates that embedding high‑level commonsense reasoning into the object removal workflow enables semantically coherent edits that respect the broader scene context, opening a new direction for intelligent, interaction‑aware image manipulation.

Comments & Academic Discussion

Loading comments...

Leave a Comment