BicKD: Bilateral Contrastive Knowledge Distillation

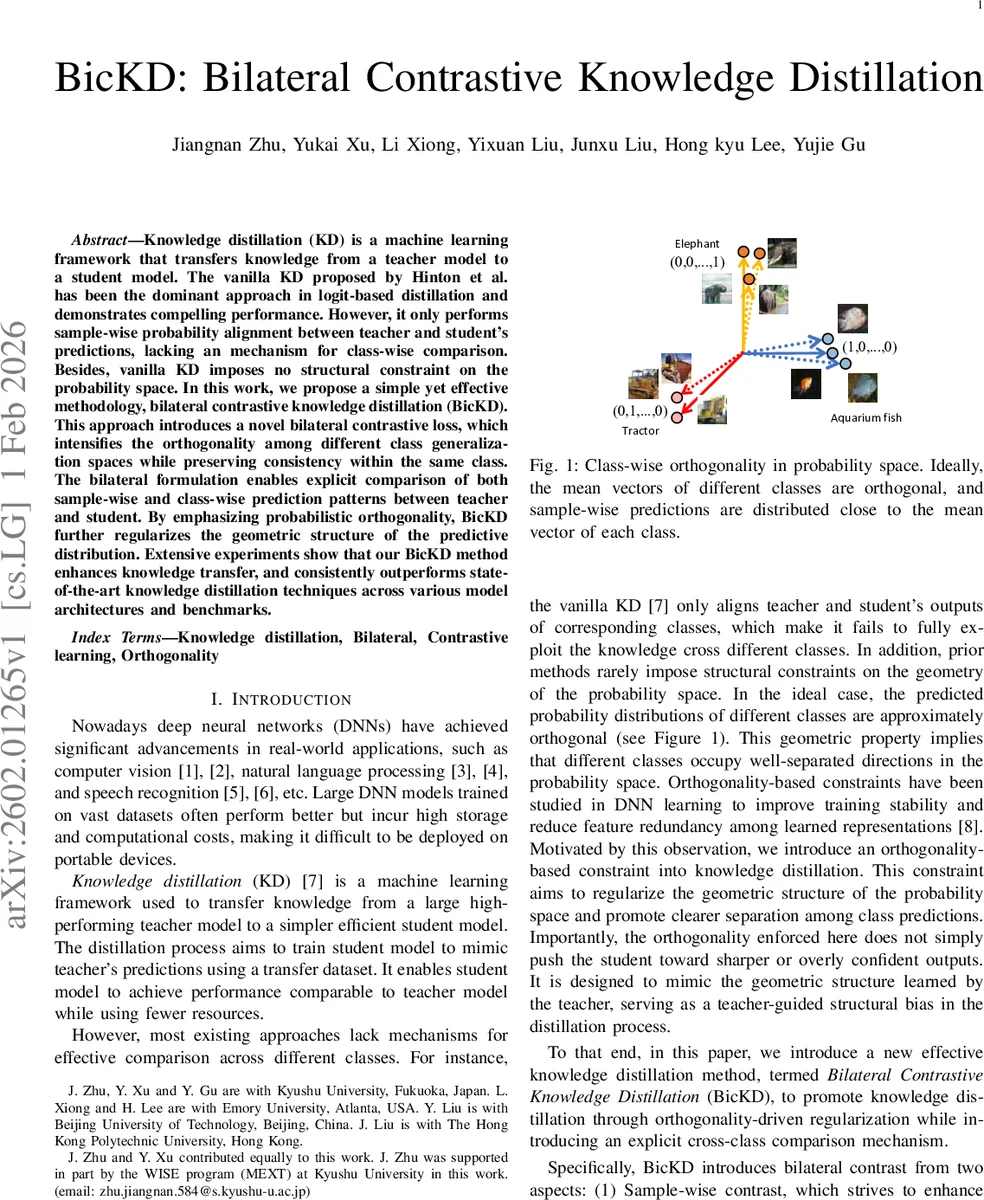

Knowledge distillation (KD) is a machine learning framework that transfers knowledge from a teacher model to a student model. The vanilla KD proposed by Hinton et al. has been the dominant approach in logit-based distillation and demonstrates compelling performance. However, it only performs sample-wise probability alignment between teacher and student’s predictions, lacking an mechanism for class-wise comparison. Besides, vanilla KD imposes no structural constraint on the probability space. In this work, we propose a simple yet effective methodology, bilateral contrastive knowledge distillation (BicKD). This approach introduces a novel bilateral contrastive loss, which intensifies the orthogonality among different class generalization spaces while preserving consistency within the same class. The bilateral formulation enables explicit comparison of both sample-wise and class-wise prediction patterns between teacher and student. By emphasizing probabilistic orthogonality, BicKD further regularizes the geometric structure of the predictive distribution. Extensive experiments show that our BicKD method enhances knowledge transfer, and consistently outperforms state-of-the-art knowledge distillation techniques across various model architectures and benchmarks.

💡 Research Summary

The paper introduces Bilateral Contrastive Knowledge Distillation (BicKD), a novel framework that augments traditional logit‑based knowledge distillation with both sample‑wise and class‑wise contrastive objectives. Conventional KD, exemplified by Hinton et al.’s vanilla KD, aligns teacher and student predictions only on a per‑sample basis using Kullback‑Leibler (KL) divergence, ignoring any structural relationship among different classes and providing no geometric regularization of the probability simplex. BicKD addresses these gaps by explicitly encouraging orthogonality between class probability vectors while preserving consistency within the same class.

The method defines two complementary contrastive components. First, sample‑wise contrast consists of (i) a standard KL alignment term that forces the student’s prediction for a given sample to match the teacher’s, and (ii) a “sample‑wise orthogonality amplification” (SOA) loss that maximizes the cosine distance between the student’s prediction on sample i and the teacher’s prediction on any sample j whose ground‑truth label differs from i. This pushes predictions of different classes farther apart in the probability space. Second, class‑wise contrast introduces (i) a “class‑wise orthogonality amplification” (COA) loss that maximizes the cosine distance between the student’s column vector for class j and the teacher’s column vector for any other class k ≠ j, and (ii) a class‑wise alignment (CA) loss based on L1 distance that pulls together the student’s and teacher’s column vectors for the same class.

Both orthogonality terms use the cosine distance D(u,v)=1‑cos(u,v), which attains its maximum value of 1 when the vectors are orthogonal. By maximizing D, BicKD explicitly enforces the geometric property that class probability vectors occupy near‑orthogonal directions, a pattern observed in well‑trained high‑capacity teachers that produce low‑entropy, near‑one‑hot outputs. The total training objective combines cross‑entropy, KL, SOA, COA, and CA terms with tunable weights, allowing the practitioner to balance discriminative alignment against structural regularization.

Algorithm 1 outlines the training loop: for each mini‑batch the teacher and student forward passes generate logits; the four loss components are computed; they are summed according to the hyper‑parameters; and the student parameters are updated by stochastic gradient descent (or Adam). Because all contrastive operations are performed directly on the logits (B × C matrices), BicKD incurs negligible additional memory overhead compared with vanilla KD and avoids the costly feature‑level storage required by methods such as CRD.

Extensive experiments were conducted on CIFAR‑10/100, Tiny‑ImageNet, and ImageNet‑1k using a variety of teacher‑student pairs (e.g., ResNet‑110 → ResNet‑20, MobileNetV2 → MobileNetV1, ViT‑Base → ViT‑Small). BicKD consistently outperformed strong baselines—including vanilla KD, DIST, RLD, WKD‑L, and CRD—by 0.5–2.3 percentage points in top‑1 accuracy. The gains were especially pronounced when the teacher‑student capacity gap was large. Additional evaluations under noisy labels and reduced data regimes demonstrated that the orthogonality regularization improves robustness and generalization.

Ablation studies confirmed that each component (SOA, COA, CA) contributes positively; removing any of them leads to measurable performance drops. Substituting cosine distance with Euclidean distance weakened the orthogonality effect, highlighting the importance of the angular metric. Sensitivity analysis showed that reasonable ranges for temperature τ (≈2–4) and loss weights (α, β, γ) yield stable results, though careful tuning is still advisable.

Potential limitations include the O(C²) cost of computing all pairwise class‑wise distances when the number of classes C is very large (e.g., language models with thousands of tokens). The authors suggest possible mitigations such as sampling or block‑wise contrast. Moreover, overly aggressive orthogonality could push the student toward over‑confident predictions, but the L1 alignment term counteracts this tendency.

In summary, BicKD offers a principled and lightweight extension to logit‑based knowledge distillation by jointly aligning predictions at the sample level and shaping the global class geometry through bilateral contrast. It delivers consistent empirical improvements without extra memory burden, making it a practical tool for model compression and deployment. Future work may explore scaling the class‑wise contrast to massive label spaces and extending the bilateral paradigm to intermediate feature representations for even richer teacher‑student interactions.

Comments & Academic Discussion

Loading comments...

Leave a Comment