Latent Reasoning VLA: Latent Thinking and Prediction for Vision-Language-Action Models

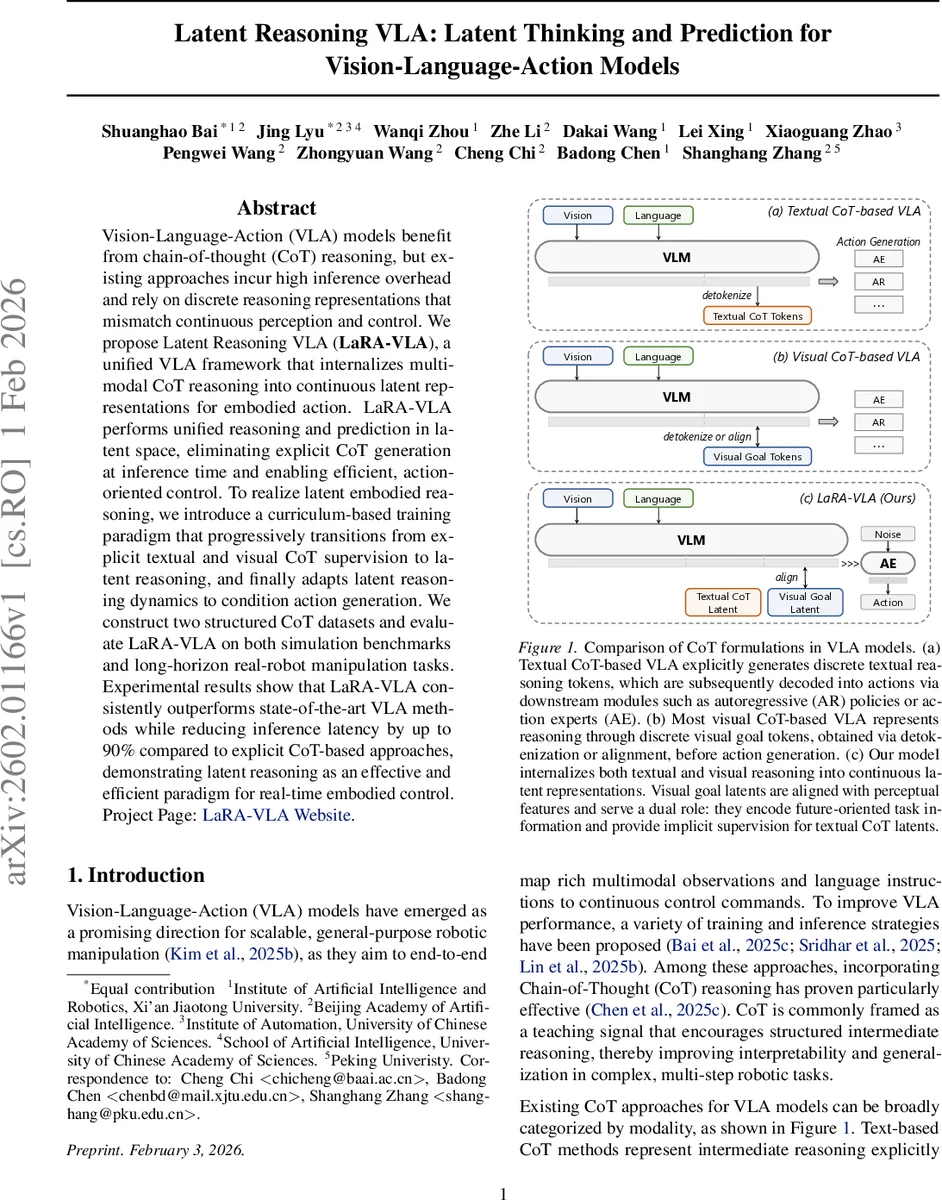

Vision-Language-Action (VLA) models benefit from chain-of-thought (CoT) reasoning, but existing approaches incur high inference overhead and rely on discrete reasoning representations that mismatch continuous perception and control. We propose Latent Reasoning VLA (\textbf{LaRA-VLA}), a unified VLA framework that internalizes multi-modal CoT reasoning into continuous latent representations for embodied action. LaRA-VLA performs unified reasoning and prediction in latent space, eliminating explicit CoT generation at inference time and enabling efficient, action-oriented control. To realize latent embodied reasoning, we introduce a curriculum-based training paradigm that progressively transitions from explicit textual and visual CoT supervision to latent reasoning, and finally adapts latent reasoning dynamics to condition action generation. We construct two structured CoT datasets and evaluate LaRA-VLA on both simulation benchmarks and long-horizon real-robot manipulation tasks. Experimental results show that LaRA-VLA consistently outperforms state-of-the-art VLA methods while reducing inference latency by up to 90% compared to explicit CoT-based approaches, demonstrating latent reasoning as an effective and efficient paradigm for real-time embodied control. Project Page: \href{https://loveju1y.github.io/Latent-Reasoning-VLA/}{LaRA-VLA Website}.

💡 Research Summary

The paper introduces LaRA‑VLA (Latent Reasoning Vision‑Language‑Action), a novel framework that internalizes chain‑of‑thought (CoT) reasoning into continuous latent representations, eliminating the need for explicit textual or visual token generation at inference time. Existing VLA approaches that incorporate CoT suffer from two major drawbacks: (1) textual CoT requires long autoregressive generation, leading to high memory consumption, KV‑cache overload, and inference latencies that drop control frequencies to 1–5 Hz, which is unsuitable for real‑time robotics; (2) both textual and visual CoT are expressed as discrete tokens (language tokens or VQ‑based visual tokens), creating a representational mismatch with the inherently continuous perception and action spaces of embodied agents.

LaRA‑VLA addresses these issues by adopting a latent‑reasoning paradigm. During training, the model first learns explicit multimodal CoT (textual reasoning steps and future visual predictions) using a large vision‑language model backbone (Qwen3‑VL). A three‑stage curriculum then gradually replaces discrete textual CoT with compact continuous latent vectors while keeping visual reasoning aligned with the same visual encoder’s latent space. An exponential moving average (EMA) encoder serves as a stable target network for visual latent prediction, preventing representation collapse. In the final stage, the learned latent “thought steps” directly condition a dedicated action expert—a 16‑layer diffusion transformer—that outputs continuous robot control commands, removing any token‑level action generation.

To support this paradigm, the authors build an automated annotation pipeline that extracts semantic anchors (using Qwen3‑VL) and temporal anchors (based on gripper state changes) from demonstration videos. GroundingDINO and SAM3 provide object bounding boxes, while motion reasoning is derived from end‑effector trajectories. This pipeline yields two structured CoT datasets, LIBERO‑LaRA and Bridge‑LaRA, covering simulated environments, and a real‑world long‑horizon manipulation dataset collected on physical hardware.

Experimental evaluation shows that LaRA‑VLA consistently outperforms state‑of‑the‑art CoT‑based VLA methods (e.g., ECoT, DreamVLA, ThinkAct) on both simulation benchmarks and real‑robot tasks. Notably, inference latency drops by up to 90 % (from ~0.9 s to ~0.09 s per step), enabling control frequencies above 10 Hz. Token length is reduced to less than 30 % of that required by explicit CoT, and GPU memory usage falls by roughly 40 %. Success rates improve by 7–12 % across diverse manipulation scenarios, demonstrating that latent reasoning aligns more naturally with continuous perception and action.

In summary, LaRA‑VLA establishes latent reasoning as an effective and efficient paradigm for Vision‑Language‑Action models, offering a scalable path toward real‑time, long‑horizon robotic control. Future work may explore broader multimodal datasets, generalization across robot platforms, and methods for interpreting the latent reasoning dynamics.

Comments & Academic Discussion

Loading comments...

Leave a Comment