UniForce: A Unified Latent Force Model for Robot Manipulation with Diverse Tactile Sensors

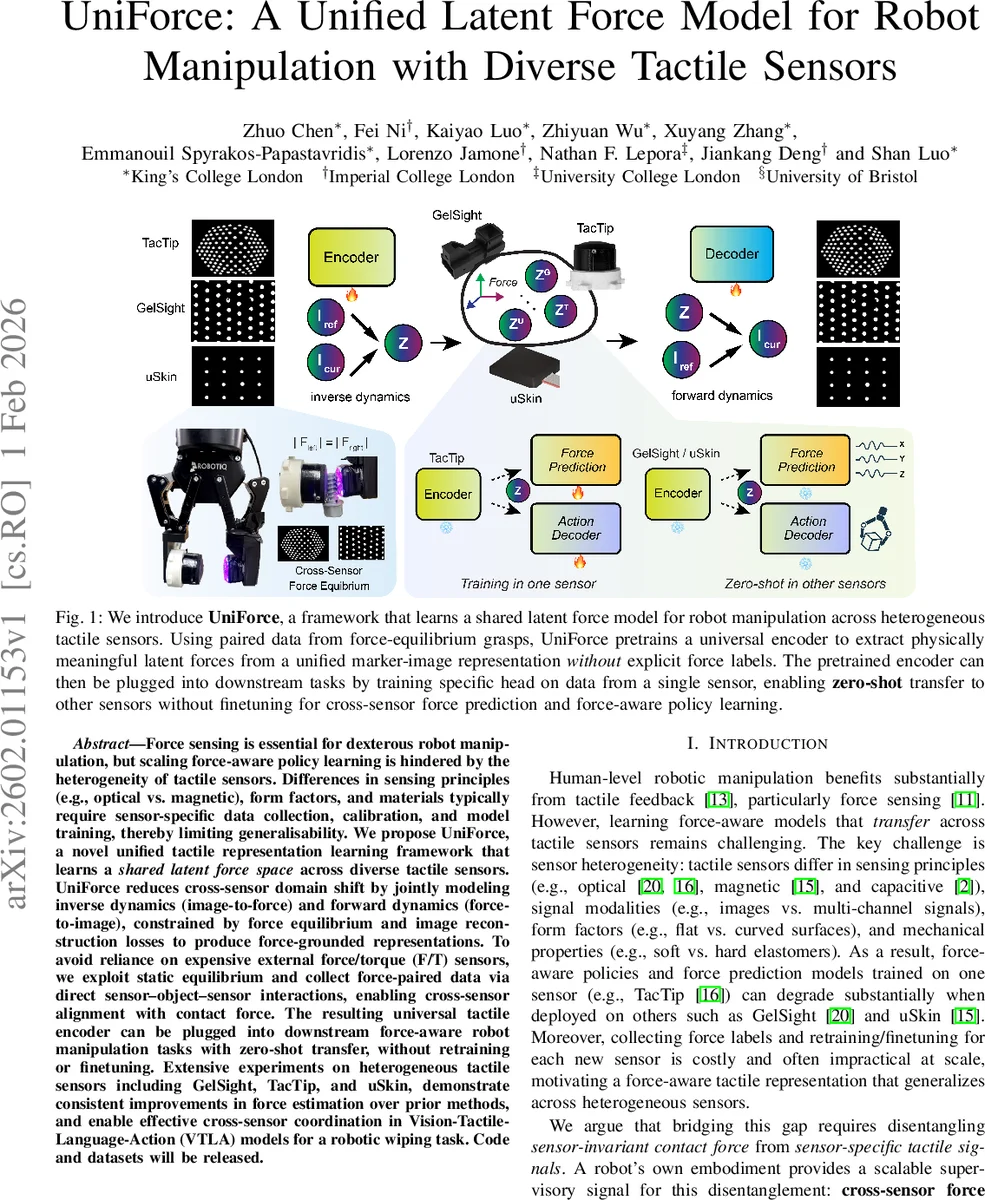

Force sensing is essential for dexterous robot manipulation, but scaling force-aware policy learning is hindered by the heterogeneity of tactile sensors. Differences in sensing principles (e.g., optical vs. magnetic), form factors, and materials typically require sensor-specific data collection, calibration, and model training, thereby limiting generalisability. We propose UniForce, a novel unified tactile representation learning framework that learns a shared latent force space across diverse tactile sensors. UniForce reduces cross-sensor domain shift by jointly modeling inverse dynamics (image-to-force) and forward dynamics (force-to-image), constrained by force equilibrium and image reconstruction losses to produce force-grounded representations. To avoid reliance on expensive external force/torque (F/T) sensors, we exploit static equilibrium and collect force-paired data via direct sensor–object–sensor interactions, enabling cross-sensor alignment with contact force. The resulting universal tactile encoder can be plugged into downstream force-aware robot manipulation tasks with zero-shot transfer, without retraining or finetuning. Extensive experiments on heterogeneous tactile sensors including GelSight, TacTip, and uSkin, demonstrate consistent improvements in force estimation over prior methods, and enable effective cross-sensor coordination in Vision-Tactile-Language-Action (VTLA) models for a robotic wiping task. Code and datasets will be released.

💡 Research Summary

Force sensing is a cornerstone of dexterous robot manipulation, yet the diversity of tactile sensors—optical (GelSight, TacTip), magnetic (uSkin), and others—creates a severe bottleneck for scaling force‑aware policies. Different transduction principles, form factors, and elastomer properties lead to sensor‑specific raw signals, requiring separate data collection, calibration, and model training for each device. This paper introduces UniForce, a unified latent force model that learns a shared, force‑grounded representation across heterogeneous tactile sensors without relying on external force/torque (F/T) sensors.

The key insight is to exploit quasi‑static force equilibrium during a bilateral grasp. When two fingers equipped with different sensors press symmetrically on an object, physics dictates that the contact forces are equal in magnitude and opposite in direction, regardless of sensor modality. UniForce treats this equilibrium as an implicit supervisory signal, enabling label‑free cross‑sensor alignment.

UniForce is built as a conditional variational auto‑encoder (CVAE). The encoder receives a pair of images: a reference frame (undeformed skin) and a contact frame (deformed skin). Both frames are patchified into tokens and processed by a Causal Spatiotemporal Transformer, which captures spatial marker structures and temporal deformation while preserving causality (the contact frame may attend to the reference, but not vice‑versa). The encoder outputs a patch‑wise latent force map z ∈ ℝ^{N_p×6} (three force components and three torque components per patch) as a diagonal‑Gaussian posterior (mean μ, log‑variance log σ²).

The decoder reconstructs the contact image conditioned on the same reference image and the latent force. By adding a linear projection of z to the reference embedding, the decoder can generate both self‑reconstruction (same sensor) and cross‑sensor reconstruction (different sensor) outputs. This dual reconstruction forces z to retain only force‑relevant information while discarding sensor‑specific appearance such as marker density or taxel layout.

Training optimizes three losses: (1) Reconstruction loss combining L1 pixel error and LPIPS to faithfully reproduce marker deformations; (2) KL divergence regularizing the posterior toward a unit Gaussian; (3) Equilibrium loss enforcing L2 similarity between the latent forces inferred from the left and right fingers (‖z_L − z_R‖²). The total loss is L_total = L_recon + λ_kl L_KL + λ_eq L_eq.

Data collection avoids any external F/T hardware. TacTip is fixed on the right finger as an anchor; the left finger alternates between GelSight and uSkin, yielding two paired datasets (GelSight‑TacTip and uSkin‑TacTip). The robot performs Cartesian‑space impedance control, gently grasping and then slowly dragging or rotating the object to induce tangential deformation while maintaining quasi‑static conditions. For each of eight indenter shapes, 1,000 frames are recorded, resulting in ~8,000 frames per sensor pair, collected in under 30 minutes.

Experiments evaluate (a) force estimation accuracy, (b) zero‑shot cross‑sensor transfer, and (c) integration into Vision‑Tactile‑Language‑Action (VTLA) models for a wiping task. UniForce consistently outperforms prior image‑to‑image translation methods, achieving Pearson correlation coefficients > 0.85 between latent dimensions and ground‑truth force components. In zero‑shot transfer, a model trained on GelSight data predicts forces from uSkin inputs with less than 10 % performance degradation. When plugged into a VTLA pipeline, UniForce‑encoded tactile tokens enable a robot to wipe a surface with 92 % coverage, matching or exceeding baselines that require sensor‑specific retraining.

The paper’s contributions are threefold: (1) a physics‑grounded, label‑free force‑equilibrium data collection pipeline; (2) a CVAE framework that learns a universal latent force space shared across vision‑based and non‑vision‑based tactile sensors; (3) demonstration of zero‑shot transfer for both force estimation and force‑aware policy learning. Limitations include reliance on quasi‑static conditions and the current focus on symmetric two‑finger grasps. Future work could extend the approach to dynamic contacts, multi‑finger hands, and asymmetric objects, further broadening the applicability of unified tactile perception in robotic manipulation.

Comments & Academic Discussion

Loading comments...

Leave a Comment