LightCity: An Urban Dataset for Outdoor Inverse Rendering and Reconstruction under Multi-illumination Conditions

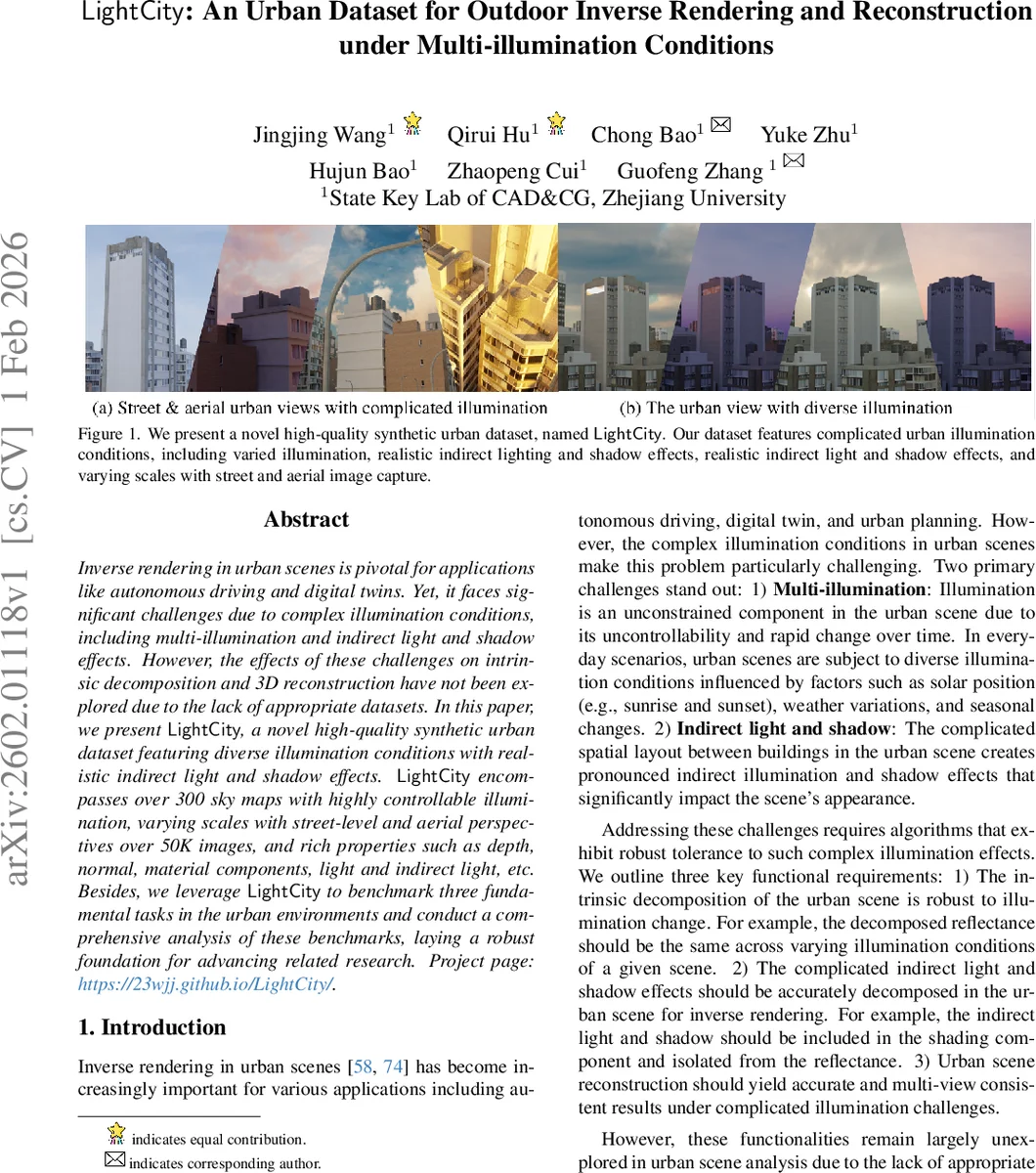

Inverse rendering in urban scenes is pivotal for applications such as autonomous driving and digital twins, yet it faces significant challenges from complex illumination conditions, including multi-illumination and indirect light and shadow effects. The impact of these challenges on intrinsic decomposition and 3D reconstruction has not been explored, owing to the lack of appropriate datasets. In this paper, we present LightCity, a novel high-quality synthetic urban dataset featuring diverse illumination conditions with realistic indirect light and shadow effects. LightCity encompasses over 300 sky maps with highly controllable illumination, more than 50K images at varying scales from street-level and aerial perspectives, and rich properties such as depth, normals, material components, light, and indirect light. We also use LightCity to benchmark three fundamental tasks in urban environments and conduct a comprehensive analysis of these benchmarks, laying a robust foundation for advancing related research.

💡 Research Summary

LightCity is a newly introduced synthetic urban dataset designed to address the lack of comprehensive benchmarks for outdoor inverse rendering and 3D reconstruction under complex illumination conditions. The authors identify two major challenges in urban scenes: (1) multi‑illumination, where lighting varies dramatically with time of day, weather, and season, and (2) indirect light and shadow, which arise from dense building layouts and cause view‑dependent shading effects. Existing datasets focus on single objects or small garden scenes, or provide only rudimentary illumination control, which makes them unsuitable for evaluating intrinsic decomposition, multi‑view inverse rendering, or reconstruction in realistic city environments.

To fill this gap, LightCity comprises over 300 HDRI sky maps covering the full diurnal cycle, allowing precise manipulation of sky texture, rotation, and intensity. The dataset contains more than 50 000 high‑resolution (1024 × 768) images captured from both street‑level and aerial viewpoints, organized into hierarchical city blocks (levels A–F) with 450 object categories and 80 material properties. Each image is accompanied by a rich set of ground‑truth channels generated via Blender Cycles’ physically‑based rendering pipeline: albedo, shading, roughness, metallic, specular, normal, depth, semantic masks, and separate direct/indirect lighting passes. Camera trajectories are sampled uniformly (circular and grid patterns) and adaptively (combining street and aerial views), with COLMAP‑based pre‑reconstruction used to ensure sufficient overlap and to filter out low‑information frames.
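The uniform circular and grid camera patterns mentioned above can be illustrated with a minimal sketch. This is not the authors' sampling code: the function names `circular_trajectory` and `grid_trajectory`, their parameters, and the choice to point circular cameras at the circle's center are all assumptions made for illustration.

```python
import math


def circular_trajectory(center, radius, height, n_views):
    """Hypothetical helper: n_views poses evenly spaced on a horizontal circle.

    Each pose is (x, y, z, yaw), where yaw (radians) points the camera
    back toward the circle's center.
    """
    cx, cy = center
    poses = []
    for i in range(n_views):
        theta = 2.0 * math.pi * i / n_views
        x = cx + radius * math.cos(theta)
        y = cy + radius * math.sin(theta)
        yaw = math.atan2(cy - y, cx - x)  # look at the center
        poses.append((x, y, height, yaw))
    return poses


def grid_trajectory(x_range, y_range, height, nx, ny):
    """Hypothetical helper: nx * ny camera positions on a regular grid.

    Positions are (x, y, z) at a fixed height, e.g. for aerial views.
    """
    def linspace(lo, hi, n):
        # A single sample falls at the midpoint of the range.
        if n == 1:
            return [0.5 * (lo + hi)]
        return [lo + (hi - lo) * i / (n - 1) for i in range(n)]

    xs = linspace(x_range[0], x_range[1], nx)
    ys = linspace(y_range[0], y_range[1], ny)
    return [(x, y, height) for y in ys for x in xs]


# Street-level ring around a block, plus an aerial grid above it.
street_views = circular_trajectory(center=(0.0, 0.0), radius=30.0, height=1.7, n_views=12)
aerial_views = grid_trajectory((-50.0, 50.0), (-50.0, 50.0), height=120.0, nx=5, ny=4)
```

The adaptive sampling and COLMAP-based filtering described in the summary are not covered here; this sketch only shows the uniform patterns.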

The authors benchmark three fundamental tasks using LightCity. For intrinsic image decomposition, they show that state‑of‑the‑art models trained on existing datasets fail to maintain albedo consistency across varying illumination, whereas fine‑tuning on LightCity improves illumination‑invariant albedo prediction and yields more accurate shading. In multi‑view inverse rendering, they evaluate methods that assume a single unknown illumination per scene; results reveal substantial material estimation errors and difficulty disentangling view‑dependent indirect lighting and shadows. Notably, 3D Gaussian Splatting (3DGS) achieves better geometric consistency than Neural Radiance Fields (NeRF) but suffers from color artifacts under multiple lighting conditions. Finally, for urban reconstruction, they demonstrate that varying illumination degrades depth and normal accuracy and reduces multi‑view consistency, highlighting the need for illumination‑robust algorithms.
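To make "albedo consistency across varying illumination" concrete, the sketch below assumes the common diffuse intrinsic model, in which each pixel is the product of albedo and shading. The summary does not state the model or the evaluation metric, so both the `reconstruct` helper and the variance-based `albedo_inconsistency` score are illustrative assumptions, not the paper's protocol.

```python
def reconstruct(albedo, shading):
    """Assumed diffuse model: image = albedo * shading, per pixel."""
    return [a * s for a, s in zip(albedo, shading)]


def albedo_inconsistency(albedo_predictions):
    """Hypothetical score: mean per-pixel variance of predicted albedo.

    albedo_predictions holds one flat list of pixel values per
    illumination condition, all for the same view. A perfectly
    illumination-invariant predictor scores 0.0.
    """
    n_cond = len(albedo_predictions)
    n_pix = len(albedo_predictions[0])
    total = 0.0
    for p in range(n_pix):
        values = [pred[p] for pred in albedo_predictions]
        mean = sum(values) / n_cond
        total += sum((v - mean) ** 2 for v in values) / n_cond
    return total / n_pix


# Same two-pixel view predicted under two sky maps.
stable = [[0.5, 0.2], [0.5, 0.2]]    # albedo unchanged by lighting
drifting = [[0.4, 0.2], [0.6, 0.2]]  # first pixel leaks illumination
print(albedo_inconsistency(stable), albedo_inconsistency(drifting))
```

Under this assumed score, a model whose albedo prediction changes when only the sky map changes is penalized, which is the failure mode the summary attributes to models trained on existing datasets.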

Through extensive quantitative and qualitative analysis, the paper establishes that diverse illumination and indirect lighting are critical factors that current algorithms do not adequately handle in urban contexts. LightCity’s controllable lighting, multi‑scale viewpoints, and comprehensive annotations provide a unified benchmark for advancing physically‑based rendering, illumination estimation, and digital‑twin creation. The authors suggest future work on domain adaptation to bridge the synthetic‑real gap, lightweight real‑time rendering models, and extending the dataset with dynamic weather and moving objects to further challenge and guide research in outdoor inverse rendering.
