Transforming Vehicle Diagnostics: A Multimodal Approach to Error Patterns Prediction

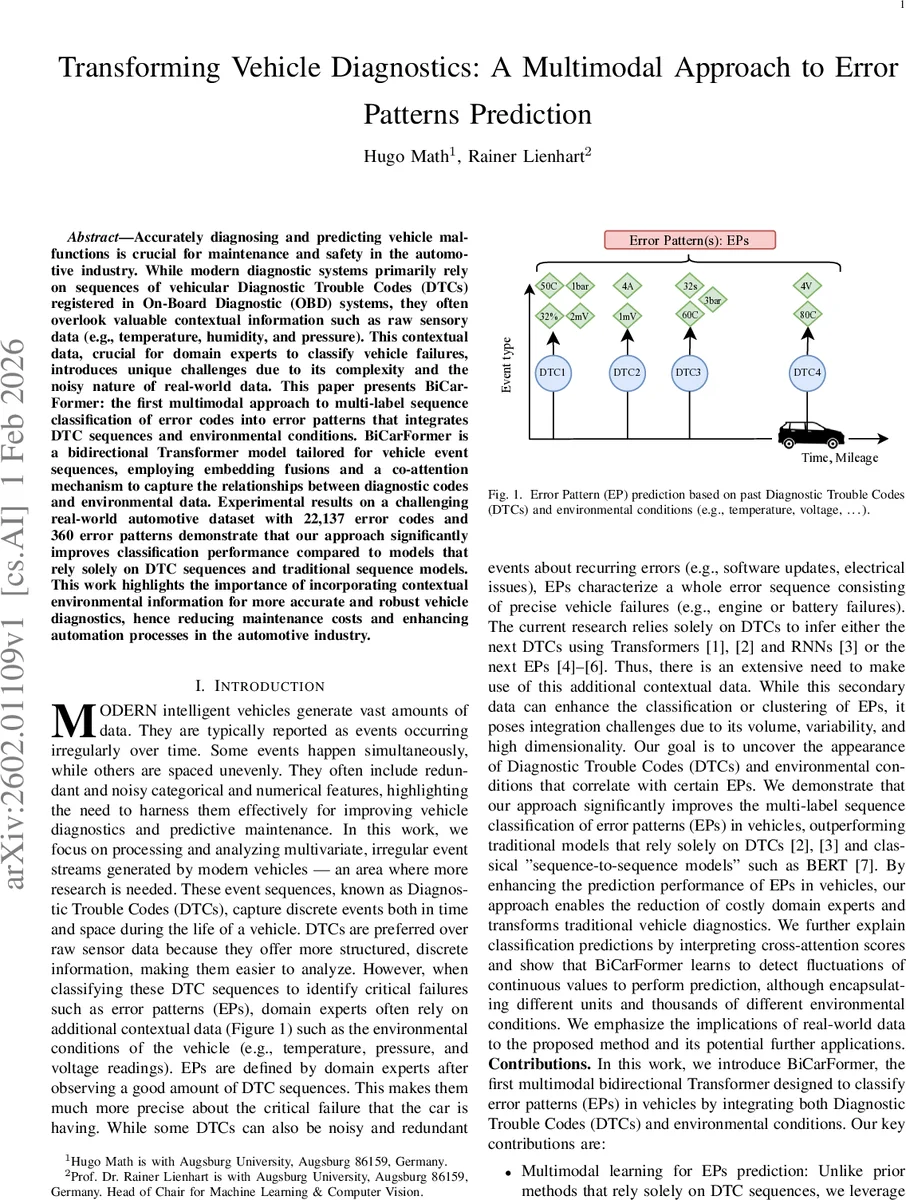

Accurately diagnosing and predicting vehicle malfunctions is crucial for maintenance and safety in the automotive industry. While modern diagnostic systems primarily rely on sequences of vehicular Diagnostic Trouble Codes (DTCs) registered in On-Board Diagnostic (OBD) systems, they often overlook valuable contextual information such as raw sensory data (e.g., temperature, humidity, and pressure). This contextual data, crucial for domain experts to classify vehicle failures, introduces unique challenges due to its complexity and the noisy nature of real-world data. This paper presents BiCarFormer: the first multimodal approach to multi-label sequence classification of error codes into error patterns that integrates DTC sequences and environmental conditions. BiCarFormer is a bidirectional Transformer model tailored for vehicle event sequences, employing embedding fusions and a co-attention mechanism to capture the relationships between diagnostic codes and environmental data. Experimental results on a challenging real-world automotive dataset with 22,137 error codes and 360 error patterns demonstrate that our approach significantly improves classification performance compared to models that rely solely on DTC sequences and traditional sequence models. This work highlights the importance of incorporating contextual environmental information for more accurate and robust vehicle diagnostics, hence reducing maintenance costs and enhancing automation processes in the automotive industry.

💡 Research Summary

This paper introduces BiCarFormer, the first multimodal bidirectional Transformer designed to classify vehicle error patterns (EPs) by jointly processing Diagnostic Trouble Code (DTC) sequences and contextual environmental sensor data (temperature, pressure, voltage, etc.). Traditional vehicle diagnostic systems rely solely on DTC sequences, ignoring the rich contextual information that domain experts routinely use to pinpoint failures. The authors argue that integrating this auxiliary data is essential for accurate, real‑world diagnostics, yet it poses challenges due to high cardinality, variable presence, and large volume of environmental measurements.

The dataset comprises five million anonymized vehicle histories, each containing on average 150 ± 90 DTCs and a parallel environmental condition stream of roughly 2,275 ± 2,310 entries per vehicle. Every DTC event includes an ECU identifier, a base error code, a fault byte, a timestamp, and mileage. In addition, each event is associated with a variable‑length list of environmental triples (description, value, unit). The target labels consist of 360 EPs, modeled as a multi‑label classification problem.
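To make the record layout concrete, here is a minimal sketch of one DTC event with its environmental triples, plus a multi-hot target over the 360 EPs. The class names, the `token()` join format, the timestamp and mileage encodings, and all sample values are illustrative assumptions, not the authors' schema or data.

```python
from dataclasses import dataclass, field
from typing import List, Union

NUM_ERROR_PATTERNS = 360  # number of EP labels reported in the summary


@dataclass
class EnvCondition:
    """One environmental triple attached to a DTC event."""
    description: str           # e.g. "coolant temperature" (made-up example)
    value: Union[float, str]   # values are heterogeneous in type
    unit: str                  # e.g. "°C", "bar"


@dataclass
class DTCEvent:
    """One diagnostic trouble code event with its context."""
    ecu_id: str
    base_code: str
    fault_byte: str
    timestamp: float           # assumed encoding: seconds since an epoch
    mileage_km: float          # assumed unit
    env: List[EnvCondition] = field(default_factory=list)

    def token(self) -> str:
        # Hypothetical tokenization: join the three code components.
        return f"{self.ecu_id}|{self.base_code}|{self.fault_byte}"


def multi_hot(active: List[int], num_labels: int = NUM_ERROR_PATTERNS) -> List[int]:
    """Encode the set of active error patterns as a 0/1 vector."""
    target = [0] * num_labels
    for idx in active:
        if not 0 <= idx < num_labels:
            raise ValueError(f"label index {idx} out of range")
        target[idx] = 1
    return target


# Made-up sample values, for illustration only.
event = DTCEvent(
    ecu_id="ECU_07", base_code="P0301", fault_byte="0x2F",
    timestamp=1_700_000_000.0, mileage_km=84_250.0,
    env=[EnvCondition("coolant temperature", 96.5, "°C"),
         EnvCondition("rail pressure", 3.1, "bar")],
)
labels = multi_hot([12, 305])  # a vehicle can carry several EPs at once
```

Because the task is multi-label, the target is a vector with any number of ones rather than a single class index.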

BiCarFormer’s architecture proceeds as follows. First, DTC tokens are embedded with a learned token embedding and positional encoding. Environmental conditions are separately embedded: the textual description (high‑cardinality categorical), the numeric value (heterogeneous types), and the unit (e.g., °C, bar). These embeddings are concatenated rather than summed, preserving distinct feature dimensions. The concatenated multimodal token sequence is fed into a bidirectional Transformer encoder. Crucially, a co‑attention (cross‑attention) layer is inserted between the DTC and environmental streams, allowing each modality to attend to the other in both directions. This mechanism captures interactions such as “rising temperature coinciding with a specific fault byte” that are otherwise invisible to a single‑modality model.
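The two ideas in this paragraph, fusion by concatenation and attention across streams, can be illustrated with a small pure-Python sketch. It is not the BiCarFormer implementation: there are no learned projections, the vectors are random, and the sizes (3 DTC tokens, 5 environmental entries, width 6) are arbitrary assumptions chosen to keep the example readable.

```python
import math
import random
from typing import List

Vector = List[float]
Matrix = List[Vector]


def softmax(xs: Vector) -> Vector:
    top = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - top) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def concat_embed(desc: Vector, value: Vector, unit: Vector) -> Vector:
    """Fuse by concatenation: output width is the sum of the input widths."""
    return desc + value + unit


def cross_attention(queries: Matrix, keys: Matrix, values: Matrix) -> Matrix:
    """Scaled dot-product attention in which queries come from one stream
    and keys/values come from the other stream."""
    scale = 1.0 / math.sqrt(len(keys[0]))
    out = []
    for q in queries:
        weights = softmax([dot(q, k) * scale for k in keys])
        out.append([sum(w * v[j] for w, v in zip(weights, values))
                    for j in range(len(values[0]))])
    return out


rng = random.Random(0)


def rand_vec(n: int) -> Vector:
    return [rng.uniform(-1.0, 1.0) for _ in range(n)]


# Toy streams: description (3) + value (1) + unit (2) gives width 6.
dtc_stream = [rand_vec(6) for _ in range(3)]
env_stream = [concat_embed(rand_vec(3), rand_vec(1), rand_vec(2))
              for _ in range(5)]

# Co-attention: each stream queries the other.
dtc_attends_env = cross_attention(dtc_stream, env_stream, env_stream)
env_attends_dtc = cross_attention(env_stream, dtc_stream, dtc_stream)
```

Concatenation keeps each field in its own slice of the vector, whereas summation would mix description, value, and unit into the same dimensions.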

Because the environmental stream can be an order of magnitude longer than the DTC stream, the authors adopt two efficiency techniques to reduce the quadratic cost of vanilla self‑attention: a Linformer‑style low‑rank projection of keys and values, and Longformer‑style sparse attention patterns. The final hidden representation of the
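The low‑rank projection mentioned above can be sketched as follows. This is a generic Linformer‑style illustration, not the authors' code: the projection matrix is random here (in Linformer it is learned), and the rank of 64 used in the size comparison is an assumed value.

```python
import math
import random
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols]
            for row in a]


def softmax_rows(m: Matrix) -> Matrix:
    out = []
    for row in m:
        top = max(row)
        exps = [math.exp(x - top) for x in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out


def linformer_attention(q: Matrix, k: Matrix, v: Matrix, proj: Matrix) -> Matrix:
    """Attention with keys/values compressed along the sequence axis.

    q, k, v are (n, d); proj is (r, n) with r much smaller than n.
    The score matrix is (n, r) instead of (n, n).
    """
    d = len(q[0])
    k_low = matmul(proj, k)                        # (r, d)
    v_low = matmul(proj, v)                        # (r, d)
    k_low_t = [list(col) for col in zip(*k_low)]   # (d, r)
    scores = [[s / math.sqrt(d) for s in row]
              for row in matmul(q, k_low_t)]       # (n, r)
    return matmul(softmax_rows(scores), v_low)     # (n, d)


def score_entries(n: int, r: int) -> Tuple[int, int]:
    """Number of attention scores: full (n * n) versus low-rank (n * r)."""
    return n * n, n * r


rng = random.Random(0)
n, d, r = 8, 4, 2  # toy sizes
x = [[rng.uniform(-1.0, 1.0) for _ in range(d)] for _ in range(n)]
proj = [[rng.gauss(0.0, 1.0 / math.sqrt(r)) for _ in range(n)] for _ in range(r)]
out = linformer_attention(x, x, x, proj)

# Average environmental stream length from the summary, assumed rank 64.
full, low = score_entries(2275, 64)  # 5_175_625 vs 145_600 scores
```

At the average stream length of roughly 2,275 entries, the score matrix shrinks by a factor of about 36 under this assumed rank.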
