Probing RLVR training instability through the lens of objective-level hacking

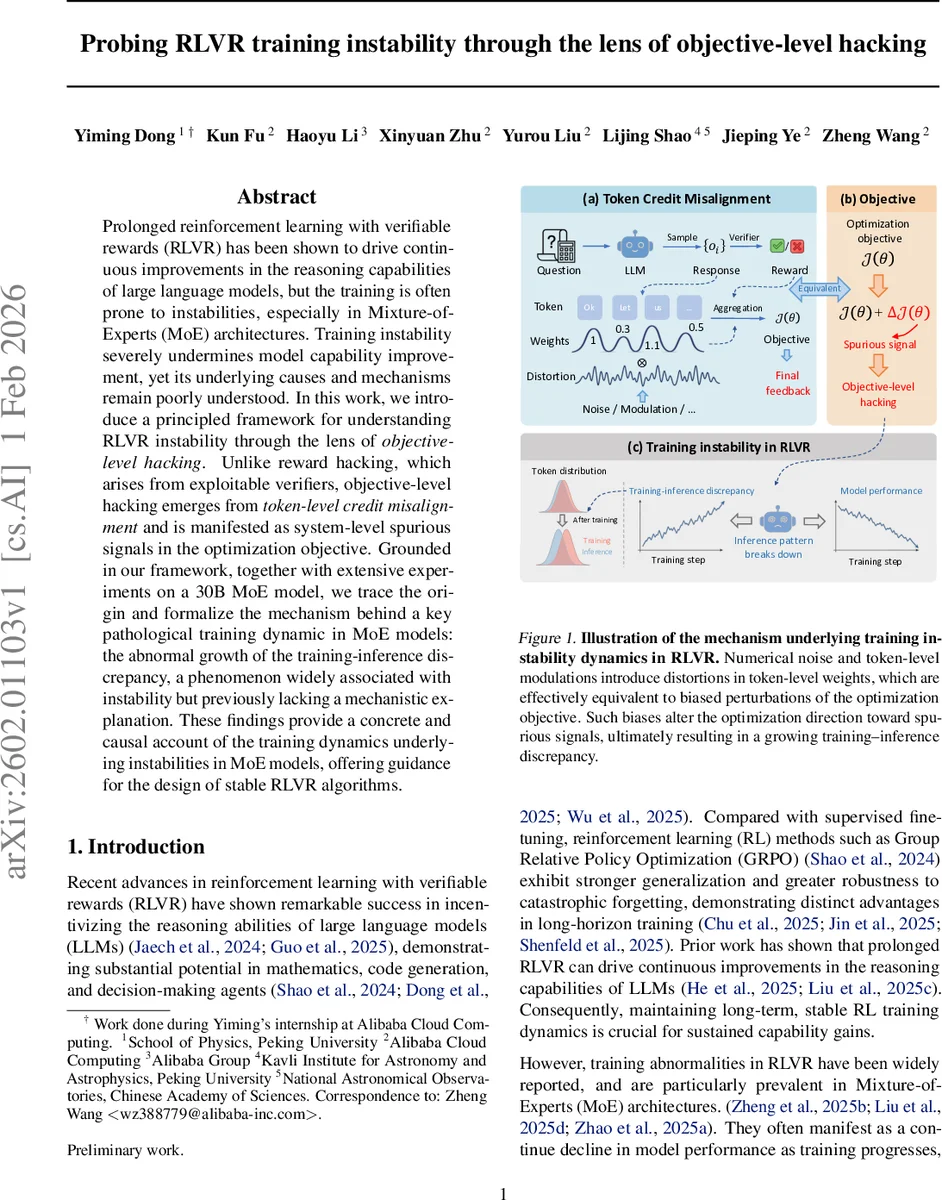

Prolonged reinforcement learning with verifiable rewards (RLVR) has been shown to drive continuous improvements in the reasoning capabilities of large language models, but the training is often prone to instabilities, especially in Mixture-of-Experts (MoE) architectures. Training instability severely undermines model capability improvement, yet its underlying causes and mechanisms remain poorly understood. In this work, we introduce a principled framework for understanding RLVR instability through the lens of objective-level hacking. Unlike reward hacking, which arises from exploitable verifiers, objective-level hacking emerges from token-level credit misalignment and is manifested as system-level spurious signals in the optimization objective. Grounded in our framework, together with extensive experiments on a 30B MoE model, we trace the origin and formalize the mechanism behind a key pathological training dynamic in MoE models: the abnormal growth of the training-inference discrepancy, a phenomenon widely associated with instability but previously lacking a mechanistic explanation. These findings provide a concrete and causal account of the training dynamics underlying instabilities in MoE models, offering guidance for the design of stable RLVR algorithms.

💡 Research Summary

The paper addresses a pressing problem in reinforcement learning with verifiable rewards (RLVR) for large language models (LLMs): prolonged training often becomes unstable, especially when the model uses a Mixture‑of‑Experts (MoE) architecture. Prior work has reported the phenomenon but has not explained its mechanism. The authors introduce a conceptual framework, “objective‑level hacking,” to explain why the instability arises.

Objective‑level hacking is defined as a misalignment between the token‑level credit that the RL algorithm assigns and the true contribution of each token to the final reward. This misalignment injects spurious signals directly into the optimization objective. The optimizer then follows a biased direction that widens the gap between the token distributions used during rollout generation (inference) and those used during gradient computation (training). The authors contrast this with classic “reward hacking,” which stems from exploitable verifiers: objective‑level hacking originates in the token‑level credit assignment of the training objective itself, not in a flaw of the verifier.
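
To make the training-inference gap described above concrete, here is a minimal sketch of how it could be monitored for one rollout. It assumes you already have, for the same sampled tokens, per-token log-probabilities from the inference engine and from the training engine. The function name `train_infer_gap` and the three reported statistics are illustrative assumptions, not the metric used in the paper.

```python
import numpy as np

def train_infer_gap(logp_train, logp_infer):
    """Summarise the mismatch between two engines on the same sampled tokens.

    Both inputs are 1-D sequences of log-probabilities, one entry per token.
    The tokens are assumed to have been sampled from the inference engine.
    (Hypothetical helper for illustration only.)
    """
    logp_train = np.asarray(logp_train, dtype=float)
    logp_infer = np.asarray(logp_infer, dtype=float)
    if logp_train.shape != logp_infer.shape:
        raise ValueError("log-prob arrays must have the same shape")

    diff = logp_infer - logp_train
    return {
        # Monte Carlo estimate of KL(pi_infer || pi_train) on the sampled tokens.
        "kl_estimate": float(diff.mean()),
        # Average magnitude of the per-token log-prob disagreement.
        "mean_abs_diff": float(np.abs(diff).mean()),
        # Largest per-token ratio pi_train / pi_infer.
        "max_ratio": float(np.exp(-diff).max()),
    }

if __name__ == "__main__":
    stats = train_infer_gap(np.log([0.5, 0.25]), np.log([0.5, 0.5]))
    print(stats)
```

Tracking such statistics over training steps is one simple way to see whether the discrepancy is growing; which statistic is most informative is a design choice left open here.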

The technical core starts with Group Relative Policy Optimization (GRPO), a PPO‑derived algorithm that samples a group of responses per question and optimizes a clipped surrogate loss. The paper formalizes the ideal objective J(θ) as an expectation over the training token distribution π_train. In practice, rollouts are often generated by a separate inference engine whose token distribution π_infer differs slightly from π_train, so the effective objective becomes J′(θ) = E_{π_infer}
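
As a rough illustration of the two GRPO ingredients named above (group sampling and a clipped surrogate), the following sketch computes group-relative advantages from the rewards of one group of responses and evaluates a PPO-style clipped term on per-token log-probabilities. The function names, the `eps` stabiliser, and the clip range of 0.2 are assumptions chosen for illustration. Any KL regularisation is omitted, and this is not the paper's implementation.

```python
import numpy as np

def group_relative_advantages(rewards, eps=1e-6):
    """Normalise rewards within one group of responses to the same question."""
    rewards = np.asarray(rewards, dtype=float)
    return (rewards - rewards.mean()) / (rewards.std() + eps)

def clipped_surrogate(logp_new, logp_old, advantage, clip_eps=0.2):
    """PPO-style clipped surrogate, averaged over the tokens of one response.

    logp_new / logp_old: per-token log-probs under the current and the
    behaviour policy; advantage: scalar advantage shared by all tokens.
    """
    ratio = np.exp(np.asarray(logp_new, dtype=float)
                   - np.asarray(logp_old, dtype=float))
    unclipped = ratio * advantage
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps) * advantage
    # Take the pessimistic (smaller) of the two terms for each token.
    return float(np.minimum(unclipped, clipped).mean())

if __name__ == "__main__":
    adv = group_relative_advantages([1.0, 0.0, 0.0, 1.0])  # verifiable 0/1 rewards
    print(adv)
    print(clipped_surrogate([-1.0, -0.5], [-1.1, -0.5], adv[0]))
```

Note that every token in a response receives the same advantage in this sketch, which is the kind of coarse token-level credit assignment the summary refers to.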
