MedAD-R1: Eliciting Consistent Reasoning in Interpretible Medical Anomaly Detection via Consistency-Reinforced Policy Optimization

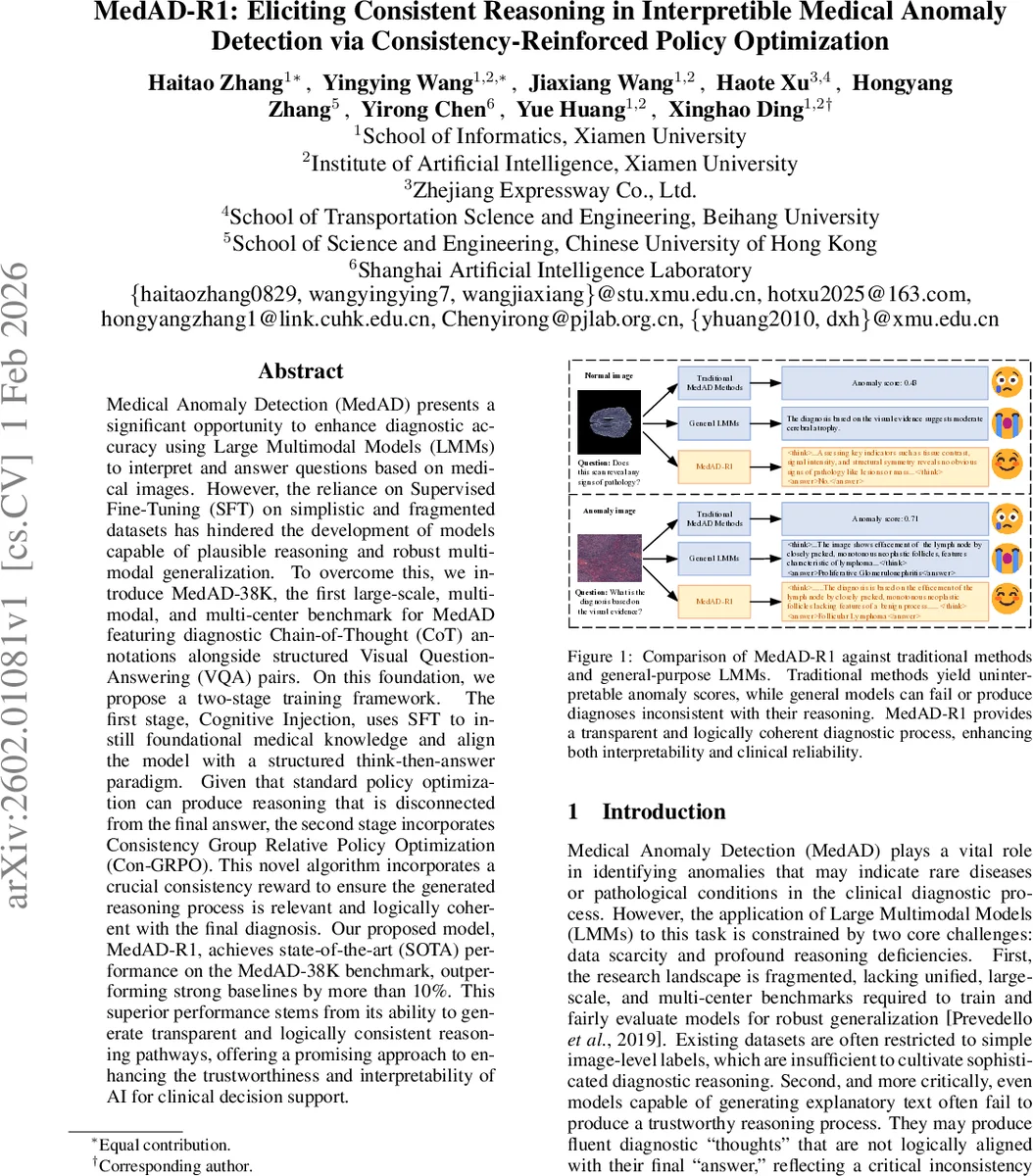

Medical Anomaly Detection (MedAD) presents a significant opportunity to enhance diagnostic accuracy using Large Multimodal Models (LMMs) to interpret and answer questions based on medical images. However, the reliance on Supervised Fine-Tuning (SFT) on simplistic and fragmented datasets has hindered the development of models capable of plausible reasoning and robust multimodal generalization. To overcome this, we introduce MedAD-38K, the first large-scale, multi-modal, and multi-center benchmark for MedAD featuring diagnostic Chain-of-Thought (CoT) annotations alongside structured Visual Question-Answering (VQA) pairs. On this foundation, we propose a two-stage training framework. The first stage, Cognitive Injection, uses SFT to instill foundational medical knowledge and align the model with a structured think-then-answer paradigm. Given that standard policy optimization can produce reasoning that is disconnected from the final answer, the second stage incorporates Consistency Group Relative Policy Optimization (Con-GRPO). This novel algorithm incorporates a crucial consistency reward to ensure the generated reasoning process is relevant and logically coherent with the final diagnosis. Our proposed model, MedAD-R1, achieves state-of-the-art (SOTA) performance on the MedAD-38K benchmark, outperforming strong baselines by more than 10%. This superior performance stems from its ability to generate transparent and logically consistent reasoning pathways, offering a promising approach to enhancing the trustworthiness and interpretability of AI for clinical decision support.

💡 Research Summary

Medical anomaly detection (MedAD) has the potential to improve diagnostic accuracy by leveraging large multimodal models (LMMs) that can interpret medical images and answer clinical questions. However, current approaches rely heavily on supervised fine‑tuning (SFT) on fragmented, label‑only datasets, which limits both the models’ reasoning capabilities and their ability to generalize across modalities and clinical sites. To address these gaps, the authors introduce two major contributions.

First, they construct MedAD‑38K, the first large‑scale, multi‑modal, multi‑center benchmark specifically designed for MedAD. MedAD‑38K aggregates 38,000 images from 15 institutions covering ten imaging modalities (MRI, CT, OCT, ultrasound, dermoscopy, CE‑MRI, endoscopy, fundus, X‑ray, microscopy) and ten anatomical regions (brain, liver, retina, breast, skin, lung, thyroid, alimentary tract, chest, lymph node). For each image, the dataset provides structured visual‑question‑answer (VQA) pairs across five diagnostic axes—anatomy identification, modality classification, anomaly detection, pathology characterization, and lesion localization—each phrased in ten synonymous ways and presented as four‑option multiple‑choice questions. Crucially, every image is also annotated with a high‑quality chain‑of‑thought (CoT) reasoning trace, generated by a two‑step pipeline (automatic generation with MedGemma and Gemini 2.5 Pro followed by rigorous manual verification). These CoT annotations supply explicit step‑by‑step supervision for models to learn how to think before answering.

Second, the paper proposes a two‑stage training framework culminating in the MedADR1 model. In the Cognitive Injection stage, a standard SFT is performed on MedAD‑38K to inject foundational medical knowledge and to align the model’s output format with the desired

To bridge this disconnect, the authors introduce Consistency‑Group Relative Policy Optimization (Con‑GRPO), a reinforcement‑learning algorithm that augments the usual answer‑correctness reward with a consistency reward. The consistency reward measures the logical alignment between the generated CoT and the final answer, using either cosine similarity of embeddings or a natural‑language‑inference (NLI) model to assess entailment. Moreover, Con‑GRPO samples multiple question paraphrases for the same image and enforces relative consistency across the group, penalizing samples whose reasoning deviates from the group average. The final policy gradient combines the answer reward (R_acc) and the weighted consistency reward (λ·R_con), encouraging the model to produce reasoning that directly supports its diagnosis.

Experiments on the held‑out portion of MedAD‑38K demonstrate that MedADR1 (3 B parameters) achieves a diagnostic accuracy of 78.4 %, surpassing strong baselines such as LLaVA‑Med, HuatuoGPT‑Vision, and Med‑Flamingo by more than 10 percentage points. CoT quality metrics (BLEU‑4, ROUGE‑L) improve by roughly 12 % relative to baselines, and a dedicated logical‑consistency score rises from 0.71 to 0.84. Importantly, the model maintains robust performance on unseen modalities (e.g., ultrasound) and on data from institutions not present in training, with less than a 3 % drop in accuracy, highlighting its generalization capability. Despite its modest size, MedADR1 runs efficiently on a single 24 GB GPU, making real‑time clinical decision support feasible.

The paper’s contributions are threefold: (1) the release of MedAD‑38K, a benchmark that enables training and evaluation of reasoning‑centric MedAD systems; (2) the novel Con‑GRPO algorithm that explicitly enforces reasoning‑answer consistency via a group‑relative reinforcement signal; and (3) the MedADR1 model, which demonstrates that a lightweight LMM can achieve state‑of‑the‑art performance while providing transparent, verifiable diagnostic pathways.

Limitations include the high cost of manual CoT verification, the sensitivity of reinforcement learning to reward weighting, and the current focus on five diagnostic axes, which may not capture more complex multi‑lesion scenarios. Future work is suggested to automate consistency evaluation, expand the benchmark to cover richer clinical narratives, and integrate MedADR1 into real‑world hospital workflows for prospective validation.

Overall, the study offers a compelling blueprint for building trustworthy, interpretable AI systems in high‑stakes medical imaging, moving beyond superficial pattern matching toward genuine, consistent clinical reasoning.

Comments & Academic Discussion

Loading comments...

Leave a Comment