Bias in the Ear of the Listener: Assessing Sensitivity in Audio Language Models Across Linguistic, Demographic, and Positional Variations

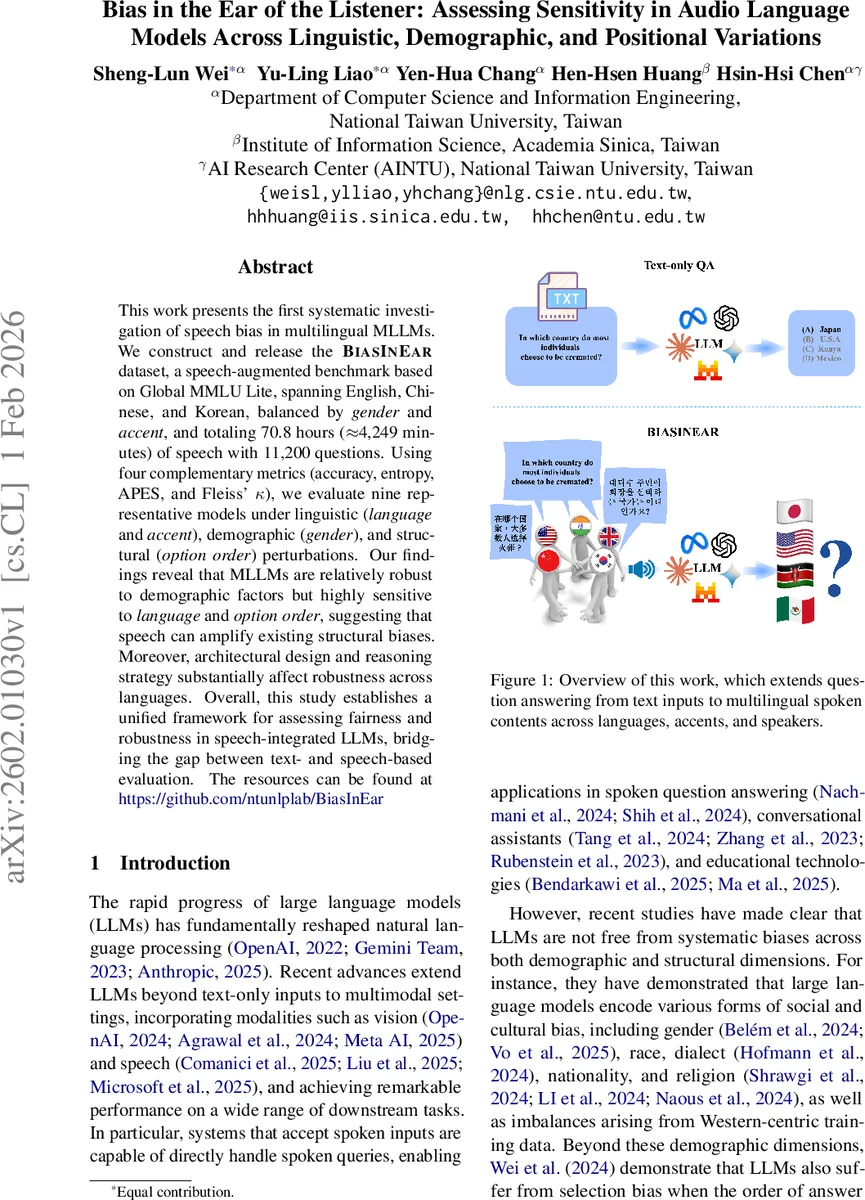

This work presents the first systematic investigation of speech bias in multilingual MLLMs. We construct and release the BiasInEar dataset, a speech-augmented benchmark based on Global MMLU Lite, spanning English, Chinese, and Korean, balanced by gender and accent, and totaling 70.8 hours ($\approx$4,249 minutes) of speech with 11,200 questions. Using four complementary metrics (accuracy, entropy, APES, and Fleiss’ $κ$), we evaluate nine representative models under linguistic (language and accent), demographic (gender), and structural (option order) perturbations. Our findings reveal that MLLMs are relatively robust to demographic factors but highly sensitive to language and option order, suggesting that speech can amplify existing structural biases. Moreover, architectural design and reasoning strategy substantially affect robustness across languages. Overall, this study establishes a unified framework for assessing fairness and robustness in speech-integrated LLMs, bridging the gap between text- and speech-based evaluation. The resources can be found at https://github.com/ntunlplab/BiasInEar.

💡 Research Summary

This paper presents the first systematic study of speech‑driven bias in multilingual multimodal large language models (MLLMs). Building on the Global MMLU Lite benchmark, the authors create and release the BiasInEar dataset, a spoken‑question answering corpus that covers English, Chinese, and Korean. The dataset is carefully balanced across three axes: language (three languages), accent (American, British, Indian for English; Beijing and Northeastern for Chinese; Seoul and Jeolla for Korean), and gender (male “Orus” and female “Zephyr” synthetic voices). In total it contains 70.8 hours of high‑quality speech (≈4,249 minutes) and 11,200 multiple‑choice questions, each paired with both text and audio versions of the question stem and four answer options.

To generate the audio, the authors first rewrite each original question and its options using GPT‑OSS 120B so that mathematical symbols, chemical formulas, and other non‑verbal tokens become naturally readable. The rewritten text is then fed to the Gemini 2.5 Flash Preview TTS system with explicit language and accent prompts, guaranteeing consistent pronunciation across the three languages. Quality control proceeds in two stages: (1) automatic screening by transcribing each clip with Whisper Large v3 and Omnilingual ASR, computing a minimum word‑error‑rate (WER) and discarding clips with WER > 0.6; (2) human verification of a stratified sample from each non‑zero WER bin, rating clips as “Correct”, “Acceptable”, or “Incorrect”. Over 90 % of sampled clips receive a “Correct” rating, confirming that the majority of the audio is faithful to the rewritten text.

The experimental protocol evaluates nine representative MLLMs—including Gemini 2.5 Flash, Gemini 2.5 Flash Lite, Gemini 2.0 Flash, Gemini 2.0 Flash Lite, Gemma 3n E2B, Gemma 3n E4B, Voxtral Small, Voxtral Mini, and Phi‑4—under four controlled perturbations: (i) language change, (ii) accent change, (iii) speaker gender change, and (iv) answer‑option order reversal (original A‑B‑C‑D versus reversed D‑C‑B‑A). Each question can thus appear in up to 28 distinct audio configurations, allowing the authors to probe not only overall accuracy but also the stability of model predictions across conditions.

Four complementary metrics are employed: (1) Accuracy (raw correct‑answer rate); (2) Entropy, the normalized Shannon entropy of the model’s probability distribution over the four options, measuring uncertainty; (3) APES (Average Pairwise Entropy Shift), which quantifies how much entropy changes when moving between levels of a given variable (e.g., male vs. female voice); and (4) Fleiss’ κ, a chance‑corrected agreement statistic that captures consistency of the final chosen answer across perturbations. Higher entropy and APES indicate greater variability, while higher κ indicates stronger consistency.

Key findings:

- Gender robustness: Across all models, swapping male and female synthetic voices produces negligible differences in accuracy (<0.5 % gap) and minimal changes in entropy, APES, and κ. This suggests that current TTS and ASR pipelines already mitigate gender‑related acoustic variation.

- Language and accent sensitivity: Switching languages or accents consistently raises entropy (by 0.02–0.04) and lowers κ (by 0.03–0.08). Korean, a medium‑resource language in the set, exhibits the largest entropy increase, implying that language‑specific phonetic and prosodic characteristics affect downstream reasoning. Accent changes (e.g., American vs. Indian English, Beijing vs. Northeastern Mandarin) show similar trends, confirming that acoustic diversity propagates into the LLM’s decision‑making.

- Option‑order bias: Reversing the answer‑option order dramatically reduces Fleiss’ κ (average drop ≈0.15) and, for several models, pushes κ close to zero, evidencing a strong selection bias that persists in the speech modality. This mirrors prior text‑only findings but demonstrates that speech input can amplify the effect.

- Model‑specific behavior: Gemini and Gemma families display higher entropy and lower κ, indicating more unstable predictions under perturbations. Voxtral models, especially the larger variants, achieve lower entropy and higher κ, suggesting more confident and consistent outputs. Phi‑4 shows a wide inter‑quartile range, reflecting heterogeneous behavior across questions. Applying chain‑of‑thought (CoT) prompting modestly reduces entropy and improves κ, hinting that explicit reasoning steps can mitigate some instability.

- Cultural vs. neutral questions: When separating culturally sensitive (CS) from culturally agnostic (CA) items, CS questions yield slightly higher entropy and lower κ across models, indicating that cultural context adds another layer of difficulty that speech input can exacerbate.

The authors conclude that while current MLLMs are relatively robust to demographic (gender) variation, they remain vulnerable to linguistic (language, accent) and structural (option order) factors. Speech therefore does not merely inherit textual biases but can amplify them, especially when the acoustic front‑end introduces language‑specific errors. The paper recommends expanding training data to include a broader spectrum of low‑resource languages and dialects, integrating error‑aware multi‑task learning that jointly optimizes ASR, TTS, and LLM components, and exploring architectural or prompting strategies (e.g., CoT) that reduce reliance on superficial input ordering.

Overall, the work contributes (1) a publicly released, high‑quality multilingual spoken QA benchmark (BiasInEar), (2) a unified evaluation framework that combines accuracy, uncertainty, and agreement metrics, and (3) a detailed empirical portrait of how speech‑enabled LLMs behave across linguistic, demographic, and positional dimensions. These resources lay the groundwork for future research on fairness, robustness, and bias mitigation in multimodal language technologies.

Comments & Academic Discussion

Loading comments...

Leave a Comment