SRVAU-R1: Enhancing Video Anomaly Understanding via Reflection-Aware Learning

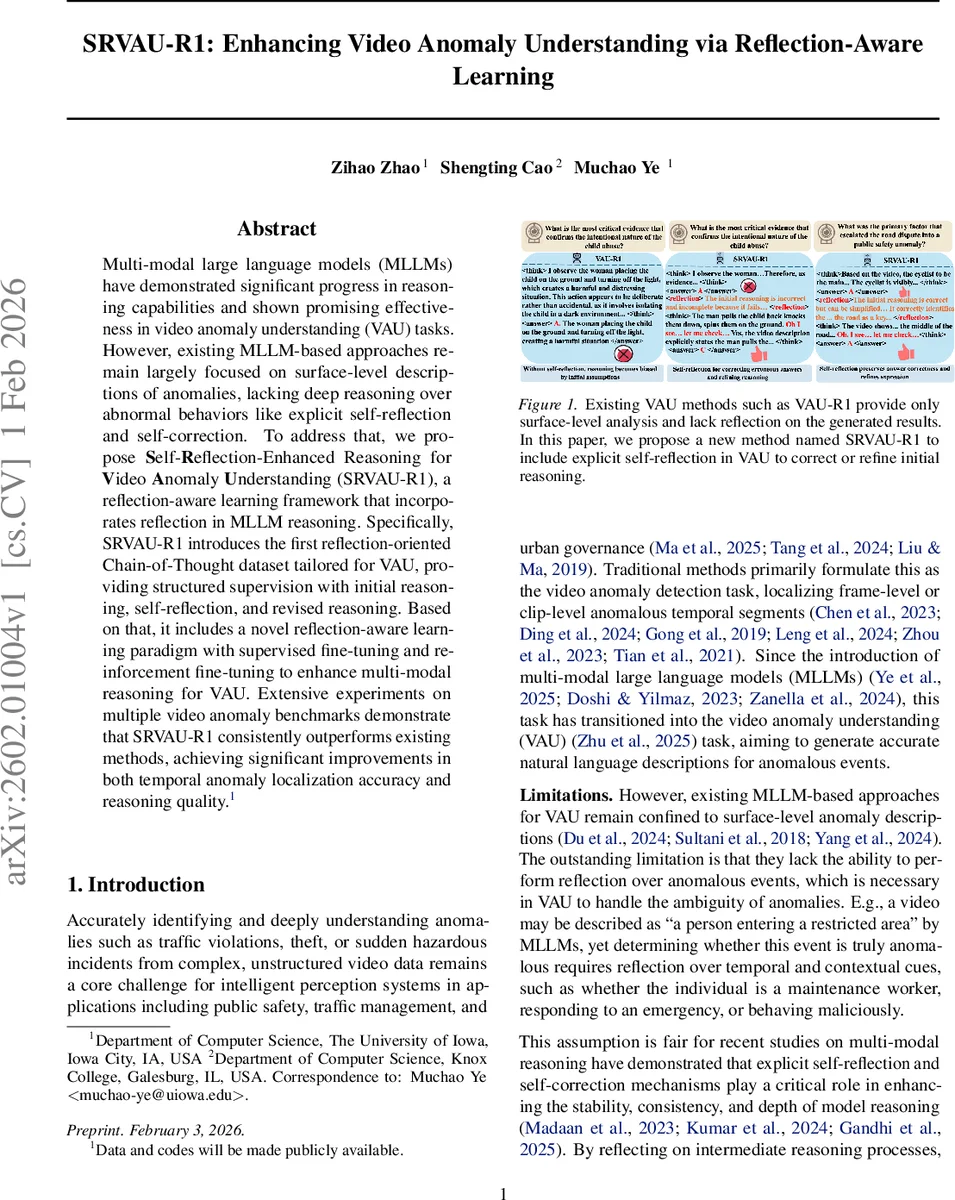

Multi-modal large language models (MLLMs) have demonstrated significant progress in reasoning capabilities and shown promising effectiveness in video anomaly understanding (VAU) tasks. However, existing MLLM-based approaches remain largely focused on surface-level descriptions of anomalies, lacking deep reasoning over abnormal behaviors like explicit self-reflection and self-correction. To address that, we propose Self-Reflection-Enhanced Reasoning for Video Anomaly Understanding (SRVAU-R1), a reflection-aware learning framework that incorporates reflection in MLLM reasoning. Specifically, SRVAU-R1 introduces the first reflection-oriented Chain-of-Thought dataset tailored for VAU, providing structured supervision with initial reasoning, self-reflection, and revised reasoning. Based on that, it includes a novel reflection-aware learning paradigm with supervised fine-tuning and reinforcement fine-tuning to enhance multi-modal reasoning for VAU. Extensive experiments on multiple video anomaly benchmarks demonstrate that SRVAU-R1 consistently outperforms existing methods, achieving significant improvements in both temporal anomaly localization accuracy and reasoning quality.

💡 Research Summary

The paper introduces SRVAU‑R1, a novel framework that equips multi‑modal large language models (MLLMs) with explicit self‑reflection and self‑correction capabilities for the task of Video Anomaly Understanding (VAU). While recent MLLM‑based VAU methods have succeeded in generating surface‑level descriptions of anomalous events, they lack the ability to reason deeply about temporal context, causal relationships, and ambiguous visual cues. SRVAU‑R1 addresses this gap through three intertwined contributions: a reflection‑oriented Chain‑of‑Thought (CoT) dataset, a two‑stage training pipeline (supervised fine‑tuning followed by reinforcement fine‑tuning), and a composite reward design that jointly optimizes task correctness, reflection quality, and temporal localization accuracy.

Reflection‑Oriented Data Construction

The authors first generate initial reasoning (a₁) for each video‑question pair using a base multimodal model (Qwen2.5‑VL‑3B). These initial outputs, together with ground‑truth annotations, are fed to a higher‑capacity teacher model (Qwen3‑VL‑30B) that produces two complementary signals: (1) an explicit self‑reflection text (r) that pinpoints errors such as mis‑interpreted evidence, temporal mis‑alignment, or missing causal links, and (2) a revised reasoning (a₂) that corrects the identified flaws. Each training sample is thus structured as (video, question, a₁, r, a₂) and includes explicit markup tags (e.g.,

Two‑Stage Learning Paradigm

Stage 1 – Supervised Fine‑Tuning (SFT): Using the reflection‑augmented dataset, the policy model is trained with a standard negative log‑likelihood loss, conditioning on the reflection segment. This “cold‑start” phase injects basic self‑assessment abilities before any reinforcement learning, stabilizing later policy updates and preventing degenerate reflection behaviors.

Stage 2 – Reflection‑Aware Reinforcement Fine‑Tuning (RFT): The authors adopt Group Relative Policy Optimization (GRPO) as the backbone RL algorithm. For each input query, a group of candidate responses is sampled; each candidate receives a composite reward:

- Task Reward (R_task): combines format consistency (0.5) and answer accuracy (0.5).

- Reflection Reward (R_reflection): evaluates correct use of the

tag (I_ref = 0.25), the effectiveness of the reflection in improving the final answer (I_eff, ranging from –0.25 to +0.5), and a brevity regularizer f_len that penalizes overly long reflections. - Temporal IoU Reward (R_tIoU): measures the Intersection‑over‑Union between the model‑predicted anomalous segment and the ground‑truth temporal interval, directly encouraging precise localization.

The total reward is R_total = α·R_task + β·R_reflection + γ·R_tIoU, with α, β, γ balancing the three objectives. This design forces the model to generate concise, well‑structured reasoning, to self‑evaluate and correct its own mistakes, and to align its temporal predictions with the true anomaly windows.

Empirical Evaluation

SRVAU‑R1 is evaluated on several public VAU benchmarks (e.g., ShanghaiTech, UCF‑Crime, XD‑Violence). Across all datasets, the proposed method outperforms prior state‑of‑the‑art approaches (including VAU‑R1 and VAD‑R1) by 4.2 %–7.8 % in anomaly detection accuracy. Language‑generation metrics (BLEU‑4, ROUGE‑L) also show significant gains, indicating higher quality explanations. Ablation studies confirm that each component—reflection‑oriented data, the SFT cold‑start, and the reflection‑aware reward—contributes positively; removing the reflection reward, for instance, drops temporal IoU by ~6 %. Qualitative examples illustrate the model’s ability to reconsider an initial claim (“a person enters a restricted area”) by reflecting on contextual cues (e.g., uniform, emergency response) and revising its verdict, thereby demonstrating genuine meta‑cognitive behavior.

Limitations and Future Work

The dataset construction relies heavily on a powerful teacher model; any biases present in the teacher may propagate to the training data. The weighting of the composite reward (α, β, γ) is manually tuned and may need adaptation for different domains. Moreover, the current framework focuses on single‑event anomalies; extending it to multi‑event, long‑term causal chains remains an open challenge.

Conclusion

SRVAU‑R1 pioneers the integration of self‑reflection into multimodal reasoning for video anomaly understanding. By explicitly teaching models how to critique and amend their own reasoning, and by reinforcing these abilities through a carefully crafted RL reward, the system achieves both higher detection accuracy and more trustworthy, interpretable explanations. This advancement opens the door to more reliable AI surveillance and safety systems that can not only spot anomalies but also articulate why they are anomalous, mirroring human‑like reflective reasoning.

Comments & Academic Discussion

Loading comments...

Leave a Comment