Error Taxonomy-Guided Prompt Optimization

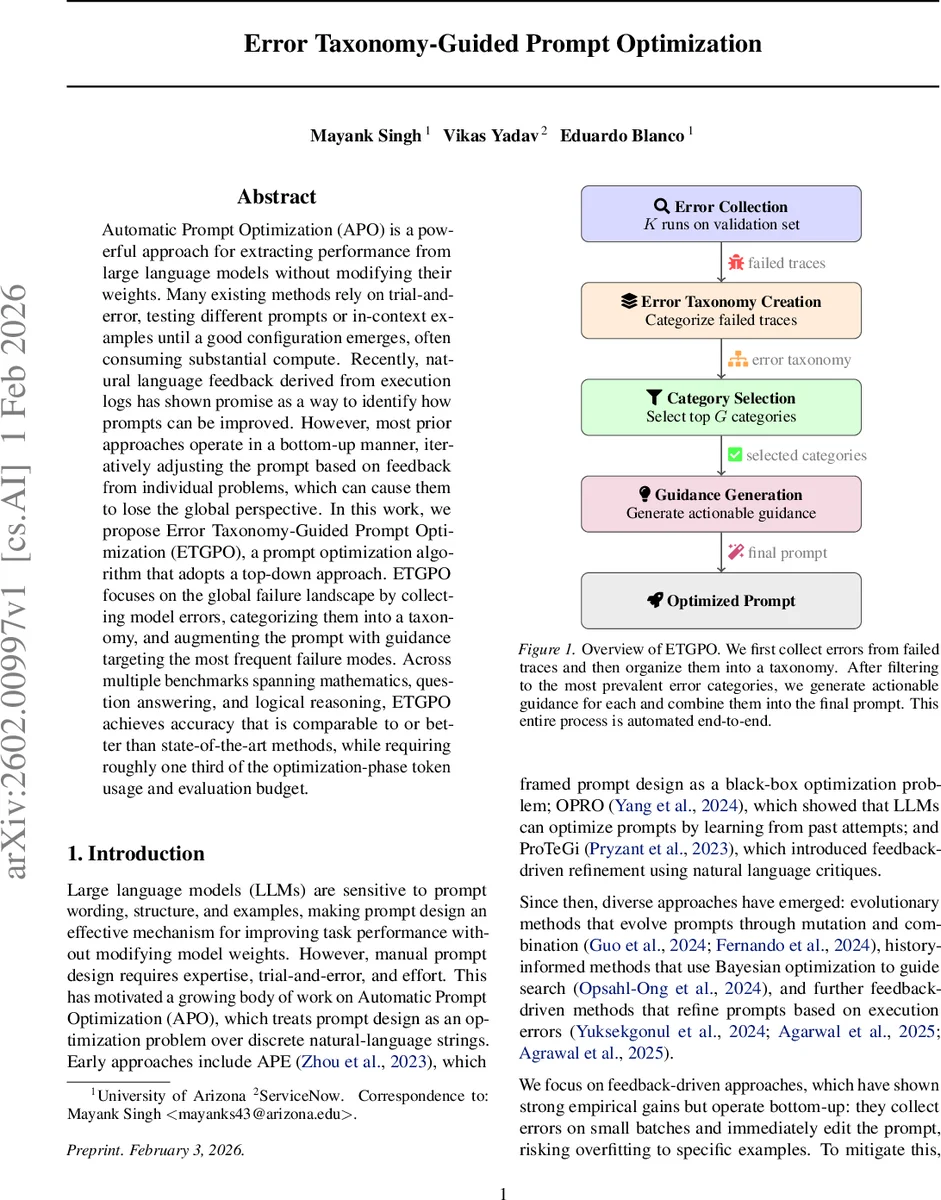

Automatic Prompt Optimization (APO) is a powerful approach for extracting performance from large language models without modifying their weights. Many existing methods rely on trial-and-error, testing different prompts or in-context examples until a good configuration emerges, often consuming substantial compute. Recently, natural language feedback derived from execution logs has shown promise as a way to identify how prompts can be improved. However, most prior approaches operate in a bottom-up manner, iteratively adjusting the prompt based on feedback from individual problems, which can cause them to lose the global perspective. In this work, we propose Error Taxonomy-Guided Prompt Optimization (ETGPO), a prompt optimization algorithm that adopts a top-down approach. ETGPO focuses on the global failure landscape by collecting model errors, categorizing them into a taxonomy, and augmenting the prompt with guidance targeting the most frequent failure modes. Across multiple benchmarks spanning mathematics, question answering, and logical reasoning, ETGPO achieves accuracy that is comparable to or better than state-of-the-art methods, while requiring roughly one third of the optimization-phase token usage and evaluation budget.

💡 Research Summary

Automatic Prompt Optimization (APO) seeks to improve the performance of large language models (LLMs) by refining the textual prompt rather than altering model weights. Existing APO techniques fall into three broad families: feedback‑driven methods that iteratively edit prompts based on errors observed on small batches, evolutionary approaches that maintain a population of candidate prompts and evolve them through mutation and recombination, and history‑informed methods that use past prompt‑score trajectories (e.g., Bayesian optimization) to propose new candidates. While feedback‑driven methods have shown strong empirical gains, they typically operate in a bottom‑up fashion: they collect errors on a few examples, immediately edit the prompt, and re‑evaluate. This can lead to over‑fitting to idiosyncratic failures and requires repeated validation, which is computationally expensive.

The paper introduces Error Taxonomy‑Guided Prompt Optimization (ETGPO), a top‑down APO algorithm that first builds a global view of the model’s failure modes before making any prompt edits. ETGPO proceeds through four stages:

-

Error Collection – The backbone LLM is run on the validation set K times to capture stochastic variations. All failed reasoning traces, together with problem statements, correct answers, and model predictions, are stored in a set F.

-

Error Taxonomy Creation – An optimizer LLM (a more capable model) processes the failed traces in batches of size B. For each trace it identifies the point where reasoning first goes wrong, classifies the nature of the error (e.g., algebraic sign mistake, incorrect heuristic generalization), and explains why the error leads to a wrong answer. The optimizer groups traces into categories, producing for each category a self‑contained description, a representative example, an error type label, and a short rationale. This yields a taxonomy C of error categories with associated prevalence statistics.

-

Error Category Selection – Categories that appear in only a single problem are filtered out to avoid over‑specialization. The remaining categories are sorted by failure count, and the top G (default 10) most frequent categories are retained as the target error modes for guidance generation.

-

Guidance Generation – The optimizer LLM is prompted with the selected categories and asked to produce actionable guidance for each. Each guidance block follows a template: (i) a concise description of the error, (ii) concrete “wrong” and “correct” examples, and (iii) step‑by‑step advice to avoid the pitfall. A short preamble introducing the guidance is also generated. All guidance blocks are concatenated and appended to the original system prompt, yielding the optimized prompt P*.

Key innovations of ETGPO include:

- Global error modeling: By aggregating errors across the entire validation set, ETGPO captures systematic weaknesses rather than isolated outliers.

- Taxonomy‑driven editing: Prompt edits are driven by high‑impact error categories, reducing the number of required iterations.

- Token efficiency: The taxonomy and guidance are produced in a small number of LLM calls, dramatically lowering the total input‑plus‑output token count during optimization.

The authors evaluate ETGPO using GPT‑4.1‑mini as the backbone model and GPT‑4.1 as the optimizer. Seven benchmarks spanning mathematics (AIME, HMMT), general knowledge (MMLU‑Pro), music (Musique), and logical reasoning (AR‑LSA, FOLIO) are used. Results (mean accuracy ± confidence interval) show that ETGPO matches or exceeds state‑of‑the‑art methods such as MIPR Ov2 and GEP A on all but one benchmark, achieving the highest average accuracy of 69.08 %. Notably, on logical reasoning tasks ETGPO reaches 69.08 % compared to 67.71 % (GEP A) and 67.20 % (MIPR Ov2).

In terms of computational cost, ETGPO’s optimization phase consumes the fewest tokens across all datasets. For example, on the AIME benchmark ETGPO uses roughly 10 K tokens, whereas MIPR Ov2 consumes about 46 K tokens (≈ 4.6× more) and GEP A about 24 K tokens (≈ 2.5× more). This reduction stems from the single‑shot taxonomy creation and guidance generation, as opposed to the many candidate evaluations required by evolutionary or history‑informed approaches.

The paper acknowledges several limitations. The quality of the error taxonomy depends heavily on the optimizer LLM’s ability to correctly analyze and categorize failures; misclassifications could propagate into ineffective guidance. The fixed G parameter may omit less frequent but still important error modes, especially in highly heterogeneous domains. Moreover, the current experiments focus on a single backbone model; extending ETGPO to multi‑model ensembles or to tasks with multimodal inputs remains open.

Future work suggested includes:

- Adaptive selection of G based on entropy or coverage metrics, allowing the method to scale with dataset complexity.

- Ensembling multiple optimizer LLMs to improve robustness of taxonomy creation.

- Meta‑learning of error categories so that once a taxonomy is built for a domain, it can be reused or fine‑tuned for related tasks, reducing the need for repeated error collection.

- Online updating where the optimized prompt is periodically re‑evaluated on new data, and emerging error categories are incorporated without restarting the whole pipeline.

In conclusion, ETGPO demonstrates that a top‑down, taxonomy‑guided approach to prompt optimization can achieve state‑of‑the‑art performance while using a fraction of the computational budget required by existing methods. By turning the “error log” into a structured knowledge base and translating the most common failure patterns into concise, actionable guidance, ETGPO offers a practical, scalable solution for improving LLM performance across diverse tasks without any model fine‑tuning.

Comments & Academic Discussion

Loading comments...

Leave a Comment