DeALOG: Decentralized Multi-Agents Log-Mediated Reasoning Framework

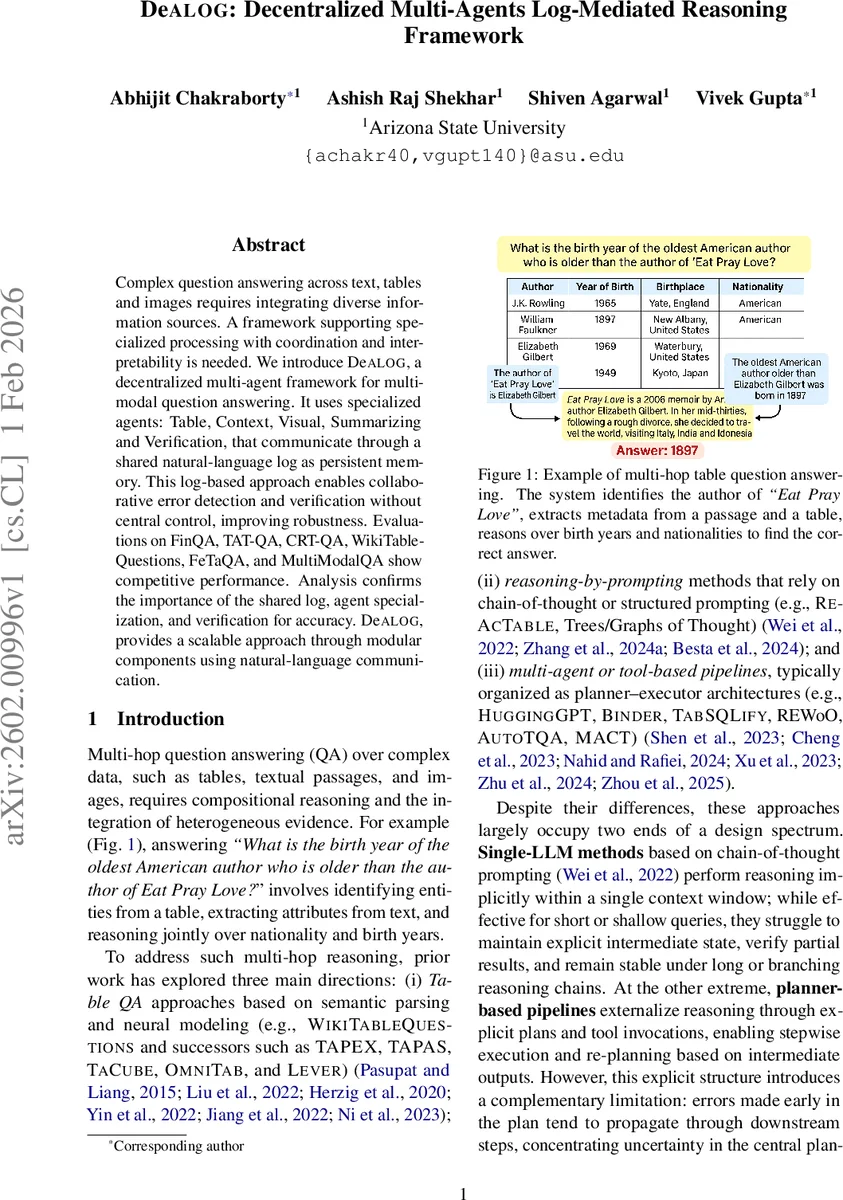

Complex question answering across text, tables and images requires integrating diverse information sources. A framework supporting specialized processing with coordination and interpretability is needed. We introduce DeALOG, a decentralized multi-agent framework for multimodal question answering. It uses specialized agents: Table, Context, Visual, Summarizing and Verification, that communicate through a shared natural-language log as persistent memory. This log-based approach enables collaborative error detection and verification without central control, improving robustness. Evaluations on FinQA, TAT-QA, CRT-QA, WikiTableQuestions, FeTaQA, and MultiModalQA show competitive performance. Analysis confirms the importance of the shared log, agent specialization, and verification for accuracy. DeALOG, provides a scalable approach through modular components using natural-language communication.

💡 Research Summary

DeALOG (Decentralized Multi‑Agents Log‑Mediated Reasoning Framework) tackles the challenge of answering complex, multi‑hop questions that require integrating heterogeneous evidence from tables, text passages, and images. Instead of relying on a single large language model (LLM) that must keep all intermediate reasoning in its own context window, or on a planner‑based pipeline that orchestrates a fixed sequence of tool calls, DeALOG distributes the work among five specialized agents: TableAgent, ContextAgent, VisualAgent, SummarizingAgent, and VerificationAgent.

All agents share a persistent, append‑only natural‑language log that records entries as (Agent, Type, Content, meta). Types include LOOKUP (table cell values), QUOTE (text spans), VISUAL (OCR or caption descriptions), SUMMARY (progress reports), ANSWER (final answer), and FLAG/OK (verification outcomes). The meta field stores step indices, timestamps, and provenance information (e.g., table row/column identifiers, document offsets, image IDs). Because every agent can read the entire log, they can make informed decisions about when to contribute, using a lightweight “should_act” heuristic.

The workflow proceeds in rounds managed by a simple scheduler. In each round Table, Context, and Visual agents are offered a turn; if an agent deems the current log relevant, it appends new evidence. When new evidence appears—or after a patience threshold without updates—the SummarizingAgent reads the log, synthesizes a concise summary, and may emit an ANSWER. The VerificationAgent then checks the proposed answer against the evidence in the log, recomputing arithmetic, confirming unit consistency, and ensuring that quoted spans actually support the claim. If verification succeeds, an OK token terminates the process; if a discrepancy is found, a FLAG token triggers a “second‑chance” re‑engagement where the relevant agents are asked to fill the missing or incorrect piece. This loop enables distributed error detection and correction without a central planner, reducing error propagation and improving robustness.

To keep the log within the LLM’s context window, older entries are compressed into SUMMARY stubs while preserving citations. A learned gating policy—implemented as a logistic regression classifier trained on prior runs—examines log‑derived features (presence of images, summarizer confidence, number of new entries, verification flags) to decide whether another round is worthwhile, thereby limiting unnecessary LLM calls.

Experiments were conducted on six multimodal QA benchmarks: FinQA, TAT‑QA, CRT‑QA, WikiTableQuestions, FeTaQA, and MultiModalQA. All methods used the same backbone models (LLaMA‑3 8B, Mistral 7B, Qwen‑3 8B) and the same BM25 + MiniLM retriever for input filtering, ensuring a fair comparison. Accuracy (exact match) and catastrophic‑error rates were reported with 95 % bootstrap confidence intervals.

DeALOG achieved the highest or near‑highest scores on most datasets, notably reaching 80 % EM on both FeTaQA and FinQA with the LLaMA‑3 8B backbone—outperforming strong baselines such as Chain‑of‑Thought prompting, AutoTQA, ReAct‑able, and even specialized table‑QA models like TableCritic. On FinQA, DeALOG matched the performance of a Program‑of‑Thought system that required an external calculator, demonstrating that the collaborative multi‑agent approach can compensate for numerical reasoning weaknesses inherent in LLMs.

Ablation studies confirmed the critical role of the shared log (removing it dropped performance by ~6 pp), the VerificationAgent (removing it caused a ~5 pp drop), and agent specialization (replacing the three modality agents with a single generic agent reduced accuracy by ~8 pp). These results underscore that transparent, auditable intermediate evidence and peer verification are the main drivers of DeALOG’s robustness.

Limitations are acknowledged: visual‑heavy questions depend heavily on OCR and caption quality; the current implementation executes agents sequentially due to API constraints, so true parallelism and speed gains await a thread‑safe, asynchronous logging infrastructure; and the natural‑language log, while human‑readable, can introduce parsing noise compared to structured graph or triple representations.

Future work will explore richer structured logs (e.g., knowledge graphs), tighter integration with external tools such as calculators or databases, and fully asynchronous multi‑agent execution to improve latency.

In summary, DeALOG presents a novel, planner‑free architecture where decentralized agents collaborate through a shared natural‑language log, achieving state‑of‑the‑art performance on diverse multimodal QA tasks while offering interpretability, auditability, and resilience to intermediate errors—an important step toward more modular, trustworthy AI reasoning systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment