HERMES: A Holistic End-to-End Risk-Aware Multimodal Embodied System with Vision-Language Models for Long-Tail Autonomous Driving

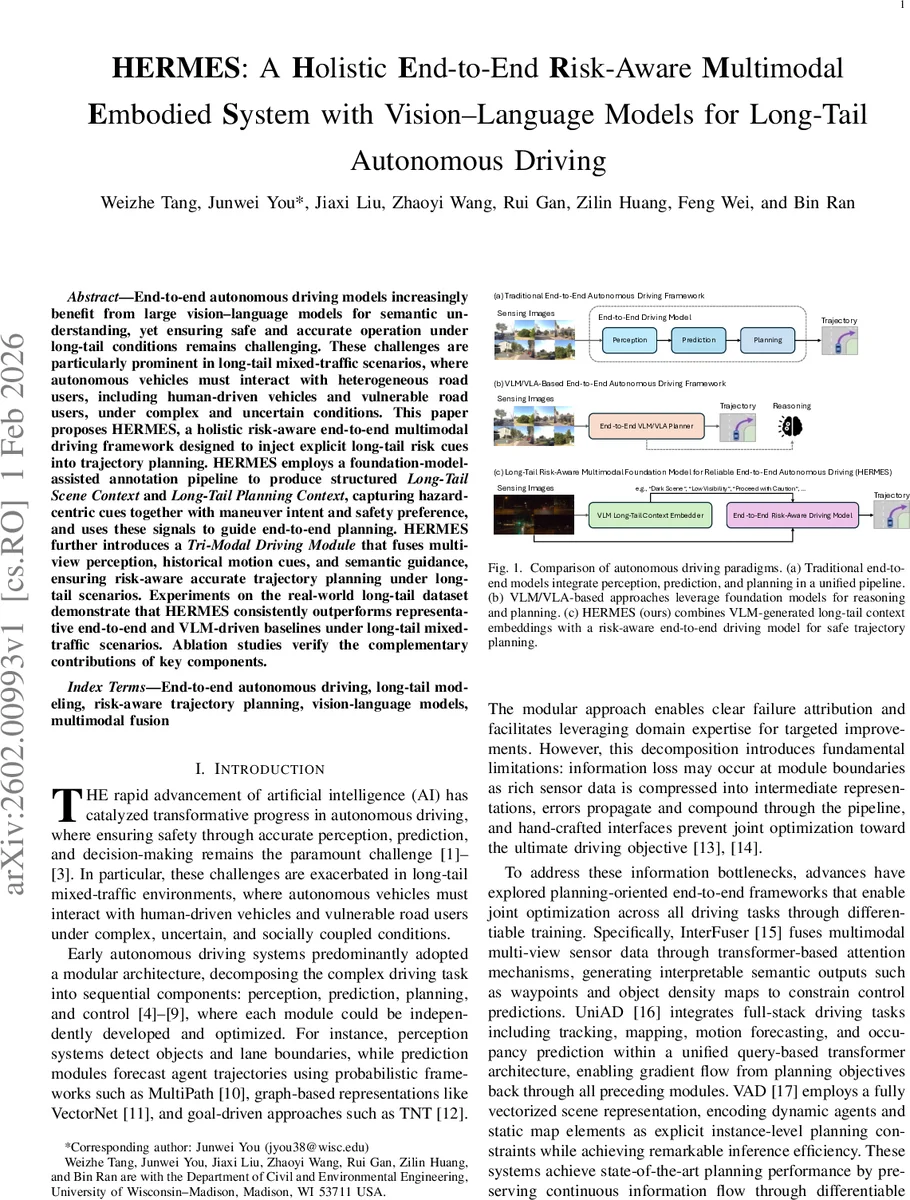

End-to-end autonomous driving models increasingly benefit from large vision–language models for semantic understanding, yet ensuring safe and accurate operation under long-tail conditions remains challenging. These challenges are particularly prominent in long-tail mixed-traffic scenarios, where autonomous vehicles must interact with heterogeneous road users, including human-driven vehicles and vulnerable road users, under complex and uncertain conditions. This paper proposes HERMES, a holistic risk-aware end-to-end multimodal driving framework designed to inject explicit long-tail risk cues into trajectory planning. HERMES employs a foundation-model-assisted annotation pipeline to produce structured Long-Tail Scene Context and Long-Tail Planning Context, capturing hazard-centric cues together with maneuver intent and safety preference, and uses these signals to guide end-to-end planning. HERMES further introduces a Tri-Modal Driving Module that fuses multi-view perception, historical motion cues, and semantic guidance, ensuring risk-aware accurate trajectory planning under long-tail scenarios. Experiments on the real-world long-tail dataset demonstrate that HERMES consistently outperforms representative end-to-end and VLM-driven baselines under long-tail mixed-traffic scenarios. Ablation studies verify the complementary contributions of key components.

💡 Research Summary

HERMES is a holistic, risk‑aware, end‑to‑end autonomous driving framework that explicitly incorporates long‑tail safety cues generated by large vision‑language models (VLMs) into trajectory planning. The authors first construct a teacher–student pipeline. In the teacher stage, a cloud‑based VLM (e.g., Qwen‑VL‑Flash) processes eight synchronized camera views together with a short history of vehicle states. Carefully crafted prompts ask the model to produce two structured textual annotations: (1) Long‑Tail Scene Context, which describes rare or hazardous elements such as occlusions, abnormal interactions, and unusual objects from multiple viewpoints; and (2) Long‑Tail Planning Context, which encodes a risk level, high‑level driving intent (straight, left, right, unknown), and explicit planning directives (e.g., “slow down”, “change lane”) together with a rationale. These annotations are pre‑computed for the training set, effectively augmenting the original WOD‑E2E long‑tail dataset with rich semantic guidance.

The student component, called the Tri‑Modal Driving Module, fuses three modalities at inference time: (i) multi‑view visual features extracted by a vision transformer, (ii) temporal embeddings of past ego‑motion (position, velocity, acceleration over the last 16 frames), and (iii) the embeddings of the two VLM‑generated contexts. Visual features are first cross‑attended with the Scene Context embedding, which highlights safety‑critical regions. The temporal encoder summarizes motion dynamics, and the resulting visual‑temporal representation is merged with the high‑level intent vector. Finally, a planning‑context fusion layer incorporates the Planning Context embedding, and a risk‑aware loss function applies higher penalties for deviations in high‑risk scenarios. The output is a sequence of trajectory waypoints generated in real time (≈30 fps).

Key contributions include: (1) a novel VLM‑driven annotation pipeline that automatically generates structured, risk‑centric natural‑language labels for long‑tail events, reducing manual labeling effort; (2) a three‑branch fusion architecture that preserves fine‑grained visual information while explicitly injecting VLM‑derived safety signals; and (3) a risk‑weighted planning objective that aligns the learned trajectory with the risk level indicated by the VLM. Experiments on the extended WOD‑E2E dataset show that HERMES reduces average displacement error (ADE) by 12.4 % and final displacement error (FDE) by 15.1 % compared with strong baselines such as InterFuser, UniAD, VAD, GPT‑Driver, and DriveVLM. In high‑risk situations, collision rates drop from 3.2 % to 2.1 % (≈35 % reduction) and speed‑violation rates decrease from 18 % to 11 %.

Ablation studies confirm the importance of each component: removing the textual contexts degrades ADE to 0.55 m and raises collisions to 4.5 %; collapsing the tri‑modal fusion to a single visual stream increases ADE to 0.51 m and raises high‑risk collisions by ~20 %; and training without the risk‑weighted loss leads to a 30 % increase in speed violations under hazardous conditions. The authors discuss limitations, noting that VLM inference is currently performed offline; on‑board deployment would require a lightweight distilled model or efficient on‑device VLM. Moreover, the risk assessment relies on the VLM’s pre‑trained knowledge, suggesting that domain‑specific fine‑tuning could further improve reliability.

In summary, HERMES demonstrates a practical pathway to embed large‑scale semantic reasoning into real‑time autonomous driving, bridging the gap between high‑level language understanding and low‑level control. By systematically integrating VLM‑generated long‑tail risk cues into a tri‑modal fusion planner, the framework achieves superior safety and accuracy in rare, high‑impact scenarios, marking a significant step forward for risk‑aware end‑to‑end autonomous vehicles.

Comments & Academic Discussion

Loading comments...

Leave a Comment