One-Shot Real-World Demonstration Synthesis for Scalable Bimanual Manipulation

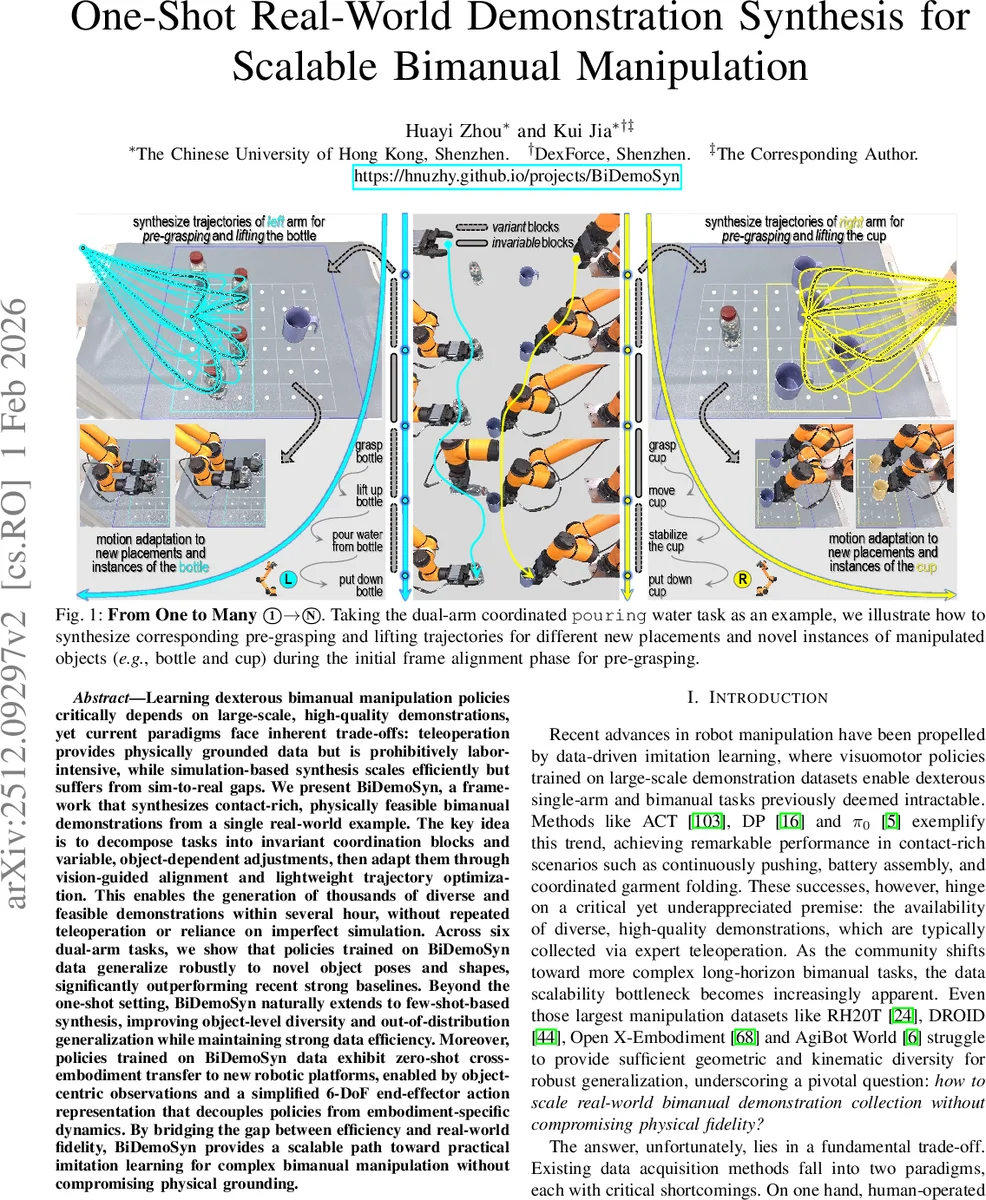

Learning dexterous bimanual manipulation policies critically depends on large-scale, high-quality demonstrations, yet current paradigms face inherent trade-offs: teleoperation provides physically grounded data but is prohibitively labor-intensive, while simulation-based synthesis scales efficiently but suffers from sim-to-real gaps. We present BiDemoSyn, a framework that synthesizes contact-rich, physically feasible bimanual demonstrations from a single real-world example. The key idea is to decompose tasks into invariant coordination blocks and variable, object-dependent adjustments, then adapt them through vision-guided alignment and lightweight trajectory optimization. This enables the generation of thousands of diverse and feasible demonstrations within several hours, without repeated teleoperation or reliance on imperfect simulation. Across six dual-arm tasks, we show that policies trained on BiDemoSyn data generalize robustly to novel object poses and shapes, significantly outperforming recent strong baselines. Beyond the one-shot setting, BiDemoSyn naturally extends to few-shot-based synthesis, improving object-level diversity and out-of-distribution generalization while maintaining strong data efficiency. Moreover, policies trained on BiDemoSyn data exhibit zero-shot cross-embodiment transfer to new robotic platforms, enabled by object-centric observations and a simplified 6-DoF end-effector action representation that decouples policies from embodiment-specific dynamics. By bridging the gap between efficiency and real-world fidelity, BiDemoSyn provides a scalable path toward practical imitation learning for complex bimanual manipulation without compromising physical grounding.

💡 Research Summary

This paper introduces “BiDemoSyn,” a novel framework designed to overcome the critical bottleneck in imitation learning for complex bimanual manipulation: the scarcity of large-scale, high-quality demonstration data. The core problem is a fundamental trade-off: teleoperation provides physically accurate but labor-intensive data, while simulation-based synthesis scales well but suffers from sim-to-real gaps due to unrealistic dynamics and visuals.

BiDemoSyn addresses this by synthesizing thousands of diverse, physically feasible bimanual demonstrations from just a single real-world example. The key innovation is a three-stage process that algorithmically amplifies task semantics. First, the Deconstruction stage analyzes the provided demonstration trajectory and decomposes it into semantically meaningful blocks. These are categorized into invariant coordination blocks (task-specific primitives like screwing or lifting that remain structurally consistent) and variable, object-dependent blocks (like pre-grasp approaches that must adapt to object geometry and pose). These blocks are further refined into minimal “Atomic Execution Primitives (AEPs)” for modular adaptation.
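As a rough illustration of the block decomposition described above, a demonstration can be held as an ordered list of tagged segments. The sketch below is not the authors' data structure: the names `Block`, `BlockKind`, and `split_into_primitives` are hypothetical, and fixed-length chunking is only a stand-in for however the paper actually derives AEPs.

```python
from dataclasses import dataclass
from enum import Enum

import numpy as np


class BlockKind(Enum):
    INVARIANT = "invariant"  # coordination primitive reused as-is (e.g. screwing)
    VARIABLE = "variable"    # object-dependent motion (e.g. pre-grasp approach)


@dataclass
class Block:
    name: str
    kind: BlockKind
    waypoints: np.ndarray  # (T, 3) end-effector positions; layout is an assumption


def split_into_primitives(block: Block, max_len: int) -> list[Block]:
    """Chop a block into consecutive chunks of at most max_len waypoints.

    Fixed-length chunking is a placeholder for the paper's AEP refinement.
    """
    starts = range(0, len(block.waypoints), max_len)
    return [
        Block(f"{block.name}[{i}]", block.kind, block.waypoints[s:s + max_len])
        for i, s in enumerate(starts)
    ]
```

Each chunk keeps its parent's tag, so a later adaptation step could select only the `VARIABLE` pieces.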

Second, the Vision-based Initial Frame Alignment stage enables generalization to novel scenes. For a new object placement or instance, an open-vocabulary detector segments the target object. Instead of relying on heavy CAD models, a lightweight geometric method using image moments and PCA estimates the object’s 6D pose from its mask and depth data. A rigid transformation is then computed to align the original demonstration’s object frame with this new pose, automatically adapting the start of variable blocks like grasp positions.
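The alignment step can be sketched with plain NumPy, assuming the object mask and depth image have already been back-projected into an (N, 3) point cloud. The function names below are hypothetical and this is not the paper's implementation. PCA axes are only defined up to sign, so a real system would need an extra disambiguation rule that this sketch omits.

```python
import numpy as np


def estimate_pose_pca(points: np.ndarray) -> np.ndarray:
    """Return a 4x4 pose: origin at the centroid, axes along principal directions."""
    centroid = points.mean(axis=0)
    centered = points - centroid
    cov = centered.T @ centered / len(points)
    _, eigvecs = np.linalg.eigh(cov)   # eigenvalues come back in ascending order
    axes = eigvecs[:, ::-1].copy()     # reorder so the largest-variance axis is first
    if np.linalg.det(axes) < 0:        # enforce a right-handed frame
        axes[:, 2] *= -1
    pose = np.eye(4)
    pose[:3, :3] = axes
    pose[:3, 3] = centroid
    return pose


def alignment_transform(demo_pose: np.ndarray, new_pose: np.ndarray) -> np.ndarray:
    """Rigid transform that maps the demonstration object frame onto the new one."""
    return new_pose @ np.linalg.inv(demo_pose)


def apply_transform(transform: np.ndarray, points: np.ndarray) -> np.ndarray:
    """Apply a 4x4 rigid transform to an (N, 3) array of points."""
    return points @ transform[:3, :3].T + transform[:3, 3]
```

Waypoints recorded relative to the demonstration object (for example a grasp position) can then be passed through `apply_transform` to obtain their counterparts in the new scene.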

Third, the Trajectory Modulation and Optimization stage ensures physical admissibility. The aligned trajectory is processed through a lightweight, physics-aware trajectory optimizer. This optimizer enforces real-world constraints such as joint limits, self-collision avoidance, and dual-arm coordination, ensuring every synthesized trajectory is executable on the real robot. This entire pipeline operates in the physical domain, avoiding any simulator and its associated realism gaps.
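To give a feel for what a lightweight optimizer of this kind might do, here is a minimal sketch that smooths a joint-space trajectory by projected gradient descent while keeping it inside joint limits. It is an assumption-laden stand-in, not the authors' optimizer: it handles only smoothness and box joint limits, and leaves out self-collision avoidance and dual-arm coordination. The names `smoothness_cost` and `optimize_trajectory` are hypothetical.

```python
import numpy as np


def smoothness_cost(traj: np.ndarray) -> float:
    """Sum of squared second differences (a discrete acceleration penalty)."""
    acc = traj[2:] - 2.0 * traj[1:-1] + traj[:-2]
    return float(np.sum(acc ** 2))


def optimize_trajectory(traj, lower, upper, iters=200, step=0.02):
    """Smooth a (T, J) joint trajectory while respecting per-joint limits.

    Endpoints are held fixed (after clipping) so the start and goal
    configurations are preserved.
    """
    q = np.clip(np.asarray(traj, dtype=float), lower, upper)
    for _ in range(iters):
        acc = q[2:] - 2.0 * q[1:-1] + q[:-2]
        grad = np.zeros_like(q)
        grad[:-2] += 2.0 * acc      # d(cost)/dq[i]
        grad[1:-1] += -4.0 * acc    # d(cost)/dq[i+1]
        grad[2:] += 2.0 * acc       # d(cost)/dq[i+2]
        grad[0] = 0.0               # keep the first waypoint fixed
        grad[-1] = 0.0              # keep the last waypoint fixed
        # Gradient step followed by projection onto the joint-limit box.
        q = np.clip(q - step * grad, lower, upper)
    return q
```

The step size is kept small enough that the smoothness cost does not increase from one iteration to the next; additional constraints would enter either as extra cost terms or as further projection steps.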

The authors validate BiDemoSyn across six contact-rich dual-arm tasks (e.g., bottle cap insertion, coordinated pouring). Policies trained using a diffusion model on data synthesized from a single demo significantly outperform strong baselines (including using only the original demo or simulation-synthesized data) in generalizing to novel object poses and shapes. The framework naturally extends to a few-shot setting, further improving object-level diversity and out-of-distribution generalization. A notable additional finding is that policies trained on BiDemoSyn data exhibit zero-shot cross-embodiment transfer to a different robotic platform. This is enabled by the use of object-centric observations (e.g., point clouds) and a simplified 6-DoF end-effector action space, which decouples the policy from the specific dynamics of the training robot.

In summary, BiDemoSyn bridges the gap between data scalability and real-world fidelity. By providing a simulator-free method to generate massive, physically grounded demonstration datasets from minimal human input, it offers a scalable and practical path forward for imitation learning in complex bimanual manipulation.
