Zero-Shot Video Deraining with Video Diffusion Models

Existing video deraining methods are often trained on paired datasets, either synthetic, which limits their ability to generalize to real-world rain, or captured by static cameras, which restricts their effectiveness in dynamic scenes with background and camera motion. Furthermore, recent works in fine-tuning diffusion models have shown promising results, but the fine-tuning tends to weaken the generative prior, limiting generalization to unseen cases. In this paper, we introduce the first zero-shot video deraining method for complex dynamic scenes that does not require synthetic data nor model fine-tuning, by leveraging a pretrained text-to-video diffusion model that demonstrates strong generalization capabilities. By inverting an input video into the latent space of diffusion models, its reconstruction process can be intervened and pushed away from the model’s concept of rain using negative prompting. At the core of our approach is an attention switching mechanism that we found is crucial for maintaining dynamic backgrounds as well as structural consistency between the input and the derained video, mitigating artifacts introduced by naive negative prompting. Our approach is validated through extensive experiments on real-world rain datasets, demonstrating substantial improvements over prior methods and showcasing robust generalization without the need for supervised training.

💡 Research Summary

The paper tackles the long‑standing challenge of video rain removal without relying on synthetic paired data or costly fine‑tuning of large diffusion models. Instead, it leverages a pretrained text‑to‑video diffusion model (specifically a transformer‑based MM‑DiT architecture such as CogVideoX) in a completely training‑free, zero‑shot manner. The method proceeds in three stages: (1) video inversion, (2) negative‑prompt guided reconstruction, and (3) attention‑switching.

In the inversion stage the authors adopt DDPM‑based inversion rather than DDIM, because DDPM faithfully reconstructs high‑frequency details and large objects, achieving PSNR around 30 dB on real videos. The inversion yields a sequence of noisy latents (x_t) and the corresponding noise maps (z_t) that can be used to reconstruct the original video by stepping backward from a high‑noise timestep (T) to (t=0).

During reconstruction the model is run twice for each timestep: once with a null (no‑condition) prompt, producing a “clean” latent trajectory, and once with a rain‑related textual condition (e.g., “rain”, “heavy rain”). The difference between the two score estimates (\epsilon_{\text{null}} - \epsilon_{\text{rain}}) is amplified by a scalar (\lambda) and added to the original score, exactly mirroring classifier‑free guidance. This negative‑prompt guidance pushes the diffusion trajectory away from the rain concept while preserving the underlying scene. A skip‑timestep (t_s) is introduced so that early diffusion steps follow the original reconstruction path, preventing excessive deviation and loss of fidelity.

A naive application of the above guidance, however, introduces background distortions and temporal flickering because the diffusion model’s attention layers jointly process text and visual tokens. To solve this, the authors propose an attention‑switching mechanism. In selected MM‑DiT blocks (typically 3–5), the key and value matrices derived from the null text embedding ((K_{\text{text}}^0, V_{\text{text}}^0)) replace those derived from the rain text embedding ((K_{\text{text}}^c, V_{\text{text}}^c)). This selective swapping disables the rain‑conditioned cross‑attention while leaving the self‑attention on visual tokens untouched, thereby preserving spatial structure and motion consistency.

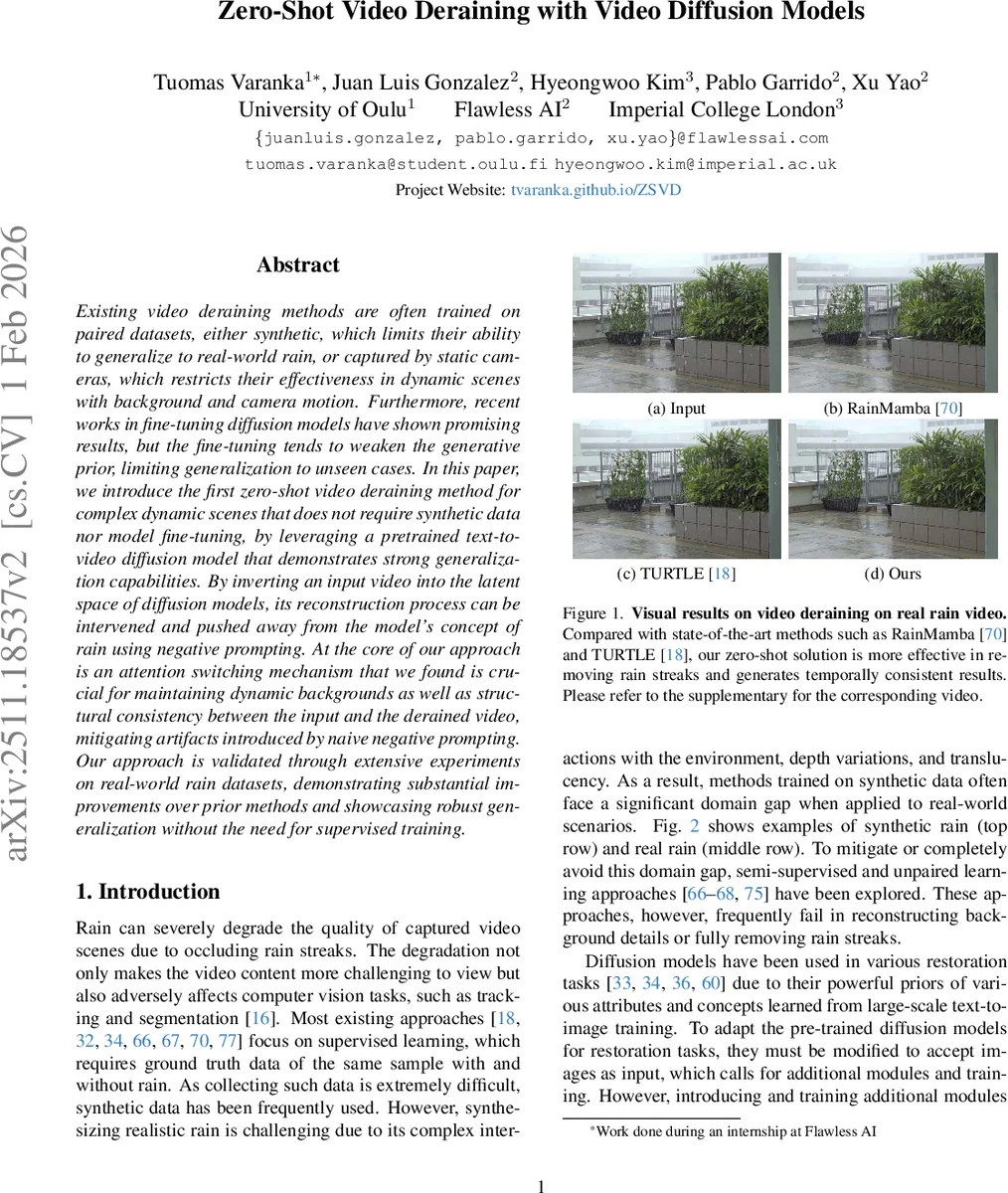

Extensive experiments on several real‑world rain datasets (including NTURain, RealRain, and a curated set of 1 K rainy video crops) demonstrate that the proposed zero‑shot method outperforms state‑of‑the‑art supervised and unsupervised baselines such as RainMamba, TUR‑TLE, and WeatherWeaver. Quantitatively, it achieves higher PSNR/SSIM and lower LPIPS, and qualitatively it produces temporally stable videos with minimal artifacts. Ablation studies confirm that both negative prompting and attention switching are essential: removing either component degrades performance significantly.

The contributions are threefold: (1) the first training‑free diffusion‑based video restoration framework that eliminates the need for synthetic data; (2) a novel attention‑switching technique for joint‑attention transformer diffusion models that balances background preservation with degradation removal; and (3) a systematic analysis of prompt design, showing that disentangling the rain concept via negative prompts is crucial for high‑quality deraining. The authors suggest that the same paradigm could be extended to other adverse weather effects (e.g., fog, snow) and to multimodal conditioning (depth, optical flow), opening a new direction for zero‑shot video restoration using large generative priors.

Comments & Academic Discussion

Loading comments...

Leave a Comment