Compute as Teacher: Turning Inference Compute Into Reference-Free Supervision

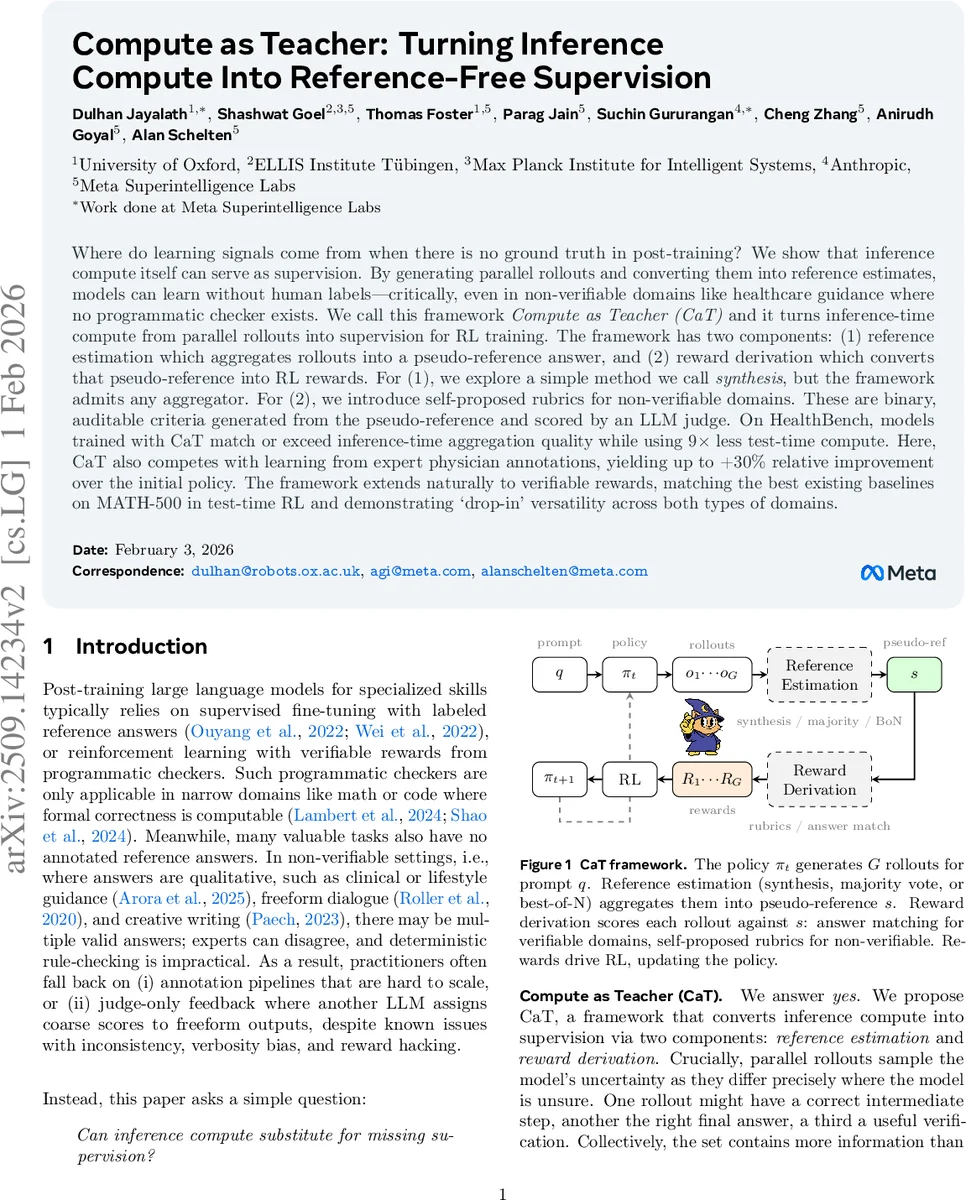

Where do learning signals come from when there is no ground truth in post-training? We show that inference compute itself can serve as supervision. By generating parallel rollouts and converting them into reference estimates, models can learn without human labels-critically, even in non-verifiable domains like healthcare guidance where no programmatic checker exists. We call this framework Compute as Teacher (CaT) and it turns inference-time compute from parallel rollouts into supervision for RL training. The framework has two components: (1) reference estimation which aggregates rollouts into a pseudo-reference answer, and (2) reward derivation which converts that pseudo-reference into RL rewards. For (1), we explore a simple method we call synthesis, but the framework admits any aggregator. For (2), we introduce self-proposed rubrics for non-verifiable domains. These are binary, auditable criteria generated from the pseudo-reference and scored by an LLM judge. On HealthBench, models trained with CaT match or exceed inference-time aggregation quality while using 9x less test-time compute. Here, CaT also competes with learning from expert physician annotations, yielding up to +30% relative improvement over the initial policy. The framework extends naturally to verifiable rewards, matching the best existing baselines on MATH-500 in test-time RL and demonstrating ‘drop-in’ versatility across both types of domains.

💡 Research Summary

The paper introduces Compute as Teacher (CaT), a general framework for post‑training large language models (LLMs) that have no human‑annotated reference answers. CaT converts the compute spent during inference into supervision by generating multiple parallel rollouts for the same prompt, aggregating them into a pseudo‑reference, and then deriving reinforcement‑learning (RL) rewards from that pseudo‑reference. The framework consists of two interchangeable components: (1) reference estimation, which can be a simple majority‑vote or best‑of‑N selector, or a generative “synthesis” step where a frozen anchor model (the initial policy) conditions on the whole rollout set and produces a new response that reconciles information across rollouts; (2) reward derivation, which differs for verifiable and non‑verifiable domains. In verifiable settings (e.g., math, code) the reward is a binary match between a rollout’s answer and the pseudo‑reference. In non‑verifiable settings (e.g., medical or lifestyle advice) the paper proposes self‑proposed rubrics: the anchor model extracts a set of binary criteria from the pseudo‑reference, and an independent judge LLM evaluates each rollout against each criterion. The reward is the fraction of criteria satisfied. This decomposition yields more reliable, auditable, and less surface‑form‑biased feedback than direct LLM‑as‑judge scoring. Experiments are conducted on three model families (Gemma‑3‑4B, Qwen‑3‑4B, Llama‑3.1‑8B) across two benchmarks: HealthBench (non‑verifiable) and MATH‑500 (verifiable). On HealthBench, CaT‑trained models achieve 12‑18 % higher ROUGE/Likelihood scores than self‑consistency or best‑of‑N baselines, and match or surpass expert physician‑annotated fine‑tuning while reducing test‑time compute by up to 9×. On MATH‑500, CaT matches or slightly exceeds state‑of‑the‑art RLHF methods that rely on programmatic reward functions, again amortizing the parallel‑rollout compute into model weights. Ablation studies show (i) separating the anchor from the current policy improves learning efficiency, (ii) synthesis can produce answers that differ from the majority yet are more correct, and (iii) the number of rubric criteria balances reward signal stability and noise. Limitations include dependence on the quality of the anchor model for rubric generation and the inherent subjectivity of “correctness” in non‑verifiable domains, suggesting the need for human oversight in high‑risk applications. Future work may involve human‑in‑the‑loop rubric refinement, multimodal extensions, and broader domain testing. Overall, CaT demonstrates that inference‑time compute can be repurposed as a teacher, enabling reference‑free RL that works across both verifiable and non‑verifiable tasks while dramatically cutting inference cost.

Comments & Academic Discussion

Loading comments...

Leave a Comment