ReasonVQA: A Multi-hop Reasoning Benchmark with Structural Knowledge for Visual Question Answering

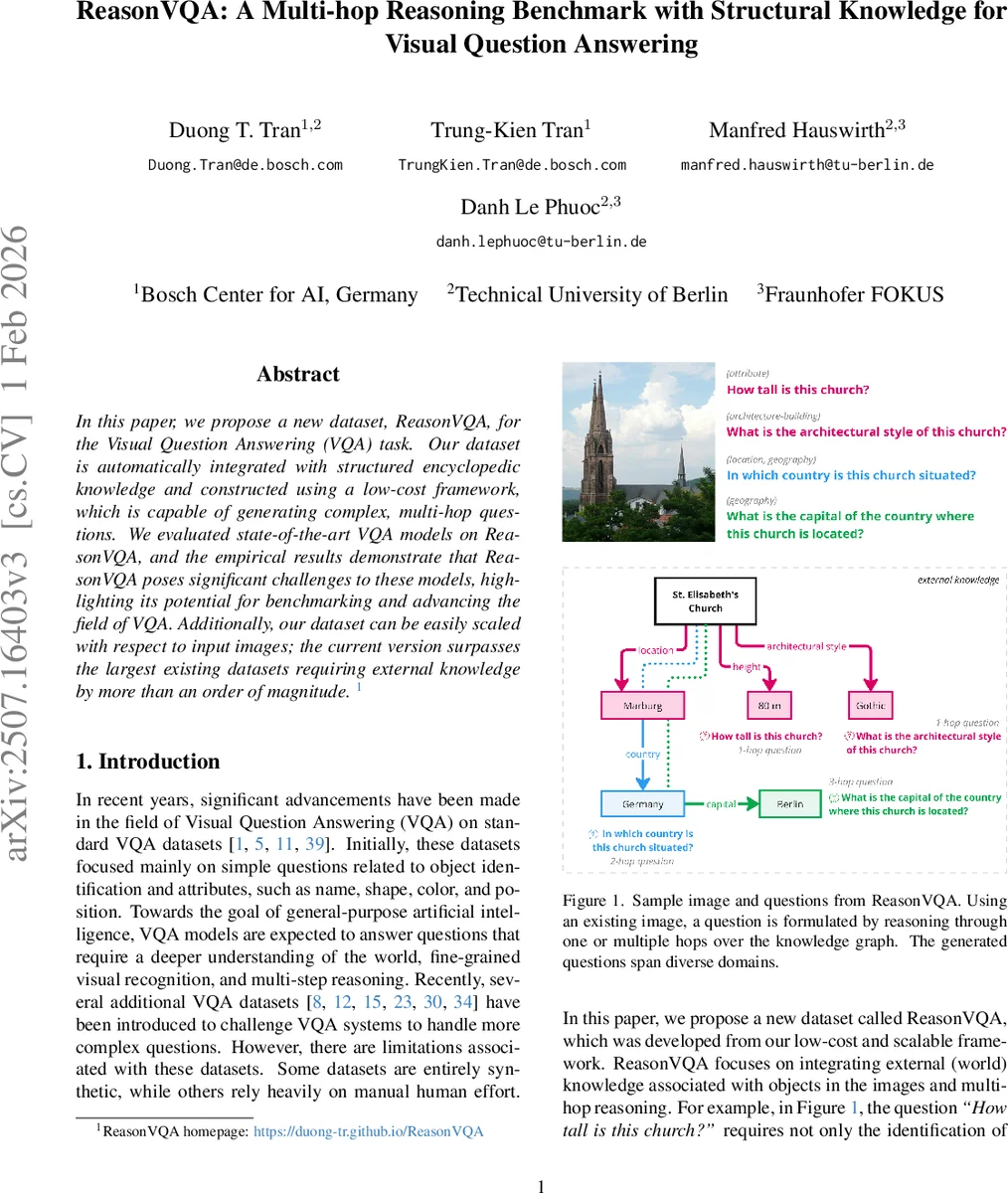

In this paper, we propose a new dataset, ReasonVQA, for the Visual Question Answering (VQA) task. Our dataset is automatically integrated with structured encyclopedic knowledge and constructed using a low-cost framework, which is capable of generating complex, multi-hop questions. We evaluated state-of-the-art VQA models on ReasonVQA, and the empirical results demonstrate that ReasonVQA poses significant challenges to these models, highlighting its potential for benchmarking and advancing the field of VQA. Additionally, our dataset can be easily scaled with respect to input images; the current version surpasses the largest existing datasets requiring external knowledge by more than an order of magnitude.

💡 Research Summary

ReasonVQA introduces a large‑scale visual question answering benchmark that explicitly requires external encyclopedic knowledge and multi‑hop reasoning. The dataset is built automatically through a three‑stage pipeline: (1) external knowledge integration, (2) question generation, and (3) dataset construction with answer‑distribution balancing. In the integration stage, objects annotated in Visual Genome (VG) and Google Landmark Dataset v2 (GLDv2) are linked to Wikidata entities using WordNet synsets, NLTK, and SPARQL queries. This yields structured facts such as “designer”, “height”, “date of birth”, etc., for each visual object.

Question generation relies on manually crafted templates that are filled with entity class names and property values. One‑hop questions are formed by inserting a single property into a template (e.g., “Who designed this skyscraper?”). Multi‑hop questions are created by nesting sub‑clauses derived from additional relations, enabling 2‑hop and 3‑hop queries (e.g., “What is the capital of the country where this church is located?”). For each correct answer, three distractors are generated according to answer type (fixed set, date, numeric, literal) to produce challenging multiple‑choice items.

To mitigate answer bias, the authors adopt a head‑tail smoothing procedure inspired by GQA: for each property group, the most frequent answers are progressively removed together with their associated questions, while preserving a minimum‑maximum ratio between consecutive answer frequencies. Images are also balanced so that no single image dominates the question count. The final split maintains identical answer distributions across training (70 %) and test (30 %) sets by categorizing images according to their two most frequent answers.

The latest version of ReasonVQA contains 598 K images and 4.2 M questions, making it an order of magnitude larger than existing external‑knowledge VQA datasets such as OK‑VQA (14 K) or KVQA (≈100 K). Questions are distributed as roughly 45 % one‑hop, 35 % two‑hop, and 20 % three‑hop, covering 20 knowledge domains (person, architecture, history, science, etc.). A balanced subset (ReasonVQA‑B) is also released for rapid benchmarking.

State‑of‑the‑art multimodal models—including LXMERT, ViLT, OFA, and the vision‑language version of Flamingo—were evaluated, as well as prompting approaches using GPT‑4. All models struggled, achieving at best ~30 % overall accuracy, with performance dropping below 15 % on three‑hop questions. Error analysis indicates that while visual‑language alignment is partially successful, the retrieval and integration of external facts remain major bottlenecks.

Key contributions are: (1) a scalable, low‑cost automatic generation pipeline that can be extended to new image sources and knowledge bases; (2) systematic answer‑distribution balancing to reduce dataset bias; (3) explicit multi‑hop question design that quantifies reasoning depth. The authors suggest future work on dynamic knowledge‑graph traversal within models, retrieval‑augmented generation pipelines, and cross‑domain transfer learning to improve external‑knowledge utilization. In sum, ReasonVQA provides a realistic, challenging benchmark that exposes current multimodal models’ limitations and offers a fertile ground for advancing VQA research toward genuine reasoning over visual and world knowledge.

Comments & Academic Discussion

Loading comments...

Leave a Comment