DP-Fusion: Token-Level Differentially Private Inference for Large Language Models

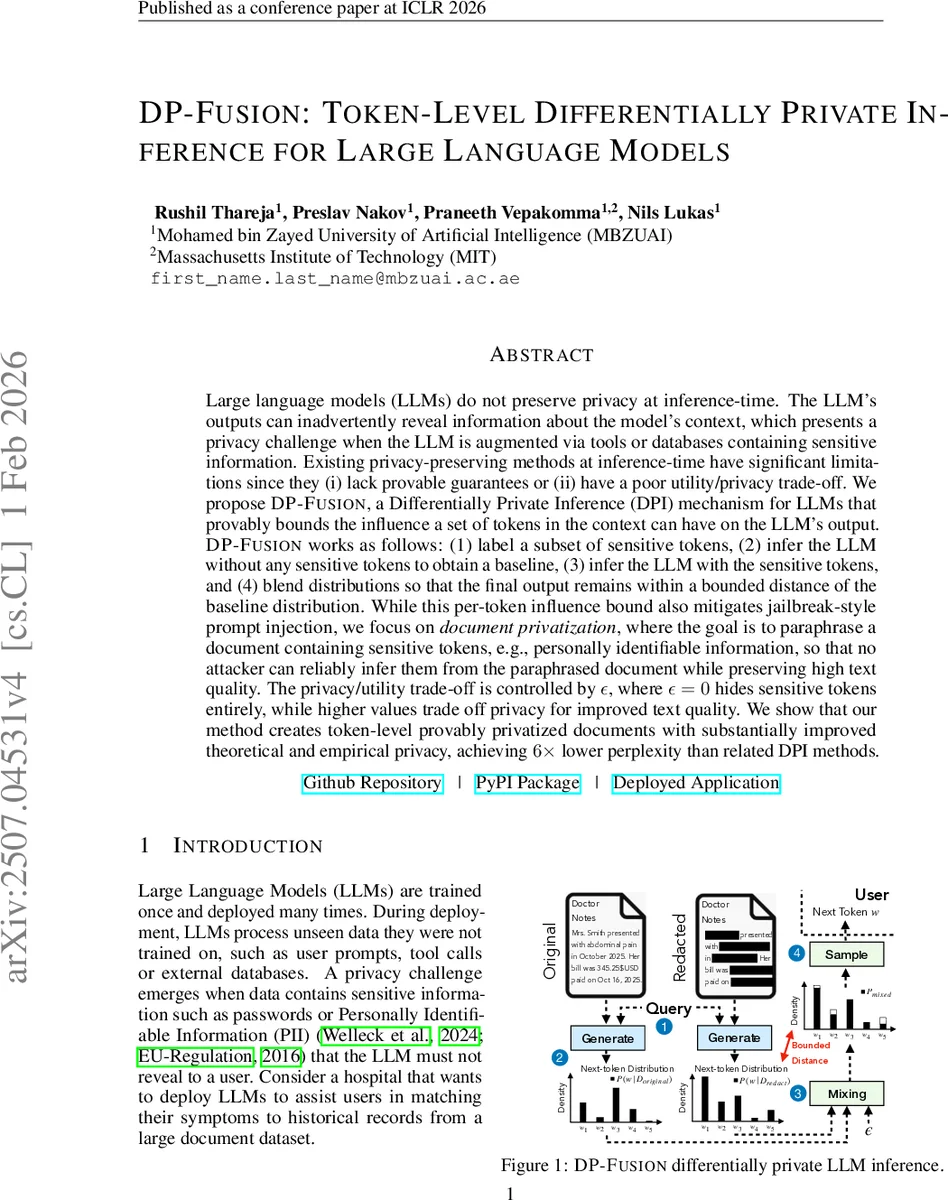

Large language models (LLMs) do not preserve privacy at inference-time. The LLM’s outputs can inadvertently reveal information about the model’s context, which presents a privacy challenge when the LLM is augmented via tools or databases containing sensitive information. Existing privacy-preserving methods at inference-time have significant limitations since they (i) lack provable guarantees or (ii) have a poor utility/privacy trade-off. We propose DP-Fusion, a Differentially Private Inference (DPI) mechanism for LLMs that provably bounds the influence a set of tokens in the context can have on the LLM’s output. DP-Fusion works as follows: (1) label a subset of sensitive tokens, (2) infer the LLM without any sensitive tokens to obtain a baseline, (3) infer the LLM with the sensitive tokens, and (4) blend distributions so that the final output remains within a bounded distance of the baseline distribution. While this per-token influence bound also mitigates jailbreak-style prompt injection, we focus on \emph{document privatization}, where the goal is to paraphrase a document containing sensitive tokens, e.g., personally identifiable information, so that no attacker can reliably infer them from the paraphrased document while preserving high text quality. The privacy/utility trade-off is controlled by $ε$, where $ε=0$ hides sensitive tokens entirely, while higher values trade off privacy for improved text quality. We show that our method creates token-level provably privatized documents with substantially improved theoretical and empirical privacy, achieving $6\times$ lower perplexity than related DPI methods.

💡 Research Summary

DP‑Fusion addresses a critical gap in the privacy landscape of large language models (LLMs) by providing a provably differentially private inference (DPI) mechanism that operates at the token level. Existing inference‑time privacy techniques fall into two broad categories: (a) data‑centric approaches that scrub or replace sensitive tokens, and (b) model‑centric approaches that add noise to logits or clip them. Both suffer from either a lack of formal guarantees or a severe utility loss, especially when the goal is to paraphrase entire documents while preserving readability and meaning.

The core idea of DP‑Fusion is to bound the influence of any set of sensitive tokens on the model’s output distribution by a user‑specified privacy budget ε. The method proceeds in four steps: (1) a local tagger (e.g., NER or a domain‑specific classifier) marks all tokens that belong to predefined privacy groups (names, dates, IDs, etc.). (2) The LLM is run twice on the same prompt: once on the original document containing the sensitive tokens, and once on a redacted version where those tokens are removed. (3) For each generation step the next‑token probability distributions from the two runs, (P_{\text{orig}}) and (P_{\text{redact}}), are linearly mixed to produce a final distribution (P_{\text{mix}} = \lambda P_{\text{orig}} + (1-\lambda) P_{\text{redact}}). (4) The mixing coefficient λ is chosen so that the statistical distance (total variation or KL‑divergence) between (P_{\text{mix}}) and the baseline (P_{\text{redact}}) does not exceed ε. In practice λ is found via a simple bisection search, guaranteeing that the resulting mechanism satisfies (ε,δ)‑DP for the chosen δ (often set to zero).

By anchoring the mixture to a completely redacted baseline, DP‑Fusion avoids the “noise‑only” approach of DP‑Decoding, which dilutes the entire language model signal, and the “logit‑clipping” approach of DP‑Prompt, which provides only weak guarantees when the attacker knows the model internals. Instead, the redacted baseline preserves the surrounding context, while the mixing step injects just enough information from the original distribution to keep the text fluent. The theoretical analysis shows that for any adjacent inputs differing in a single sensitive token, the output distributions differ by at most ε, which directly bounds an adversary’s advantage in token‑recovery games.

The authors formalize an adaptive gray‑box attacker model: the attacker knows the DP‑Fusion algorithm, the exact LLM weights, and can observe the privatized output as well as the redacted document (i.e., everything except the targeted privacy group). The attacker’s goal is to recover the missing tokens. The paper defines a Token‑Recovery Game and derives the attacker’s advantage as the difference between the success probability given the privatized output and the baseline success probability (trivial leakage). Under DP‑Fusion, this advantage is provably limited by ε, regardless of the attacker’s computational power.

Empirically, DP‑Fusion is evaluated on several realistic datasets: synthetic medical notes, legal transcripts, and news articles. Baselines include (i) pure scrubbing, (ii) prompt‑engineered paraphrasing, (iii) DP‑Decoding (uniform mixing), and (iv) DP‑Prompt (logit clipping). Across all settings, DP‑Fusion achieves dramatically lower perplexity—up to six times lower than the best prior DPI method—while maintaining comparable or better human‑rated fluency and factual consistency. In privacy attacks, when ε is set to 0.1, the attacker’s token‑recovery accuracy drops to near random guessing (≈20% for a candidate set of size five), confirming the theoretical bound. The framework also supports group‑wise ε values, allowing fine‑grained protection (e.g., stricter ε for names than for dates).

From a systems perspective, DP‑Fusion adds only modest overhead: two forward passes per generation step and a lightweight linear interpolation. The authors demonstrate that with batched inference on a single GPU the latency increase is under 15 ms per token, making the approach viable for real‑time applications.

In summary, DP‑Fusion delivers a practical, provably private inference mechanism for LLMs that balances rigorous differential privacy guarantees with high‑quality text generation. By leveraging a redacted baseline and controlled distribution mixing, it mitigates the privacy risks of token leakage while preserving the utility needed for downstream tasks such as document summarization, question answering, or conversational agents. This work represents a significant step toward deploying LLM‑powered services that comply with strict privacy regulations (e.g., GDPR, HIPAA) without sacrificing user experience.

Comments & Academic Discussion

Loading comments...

Leave a Comment