Weakly-supervised Contrastive Learning with Quantity Prompts for Moving Infrared Small Target Detection

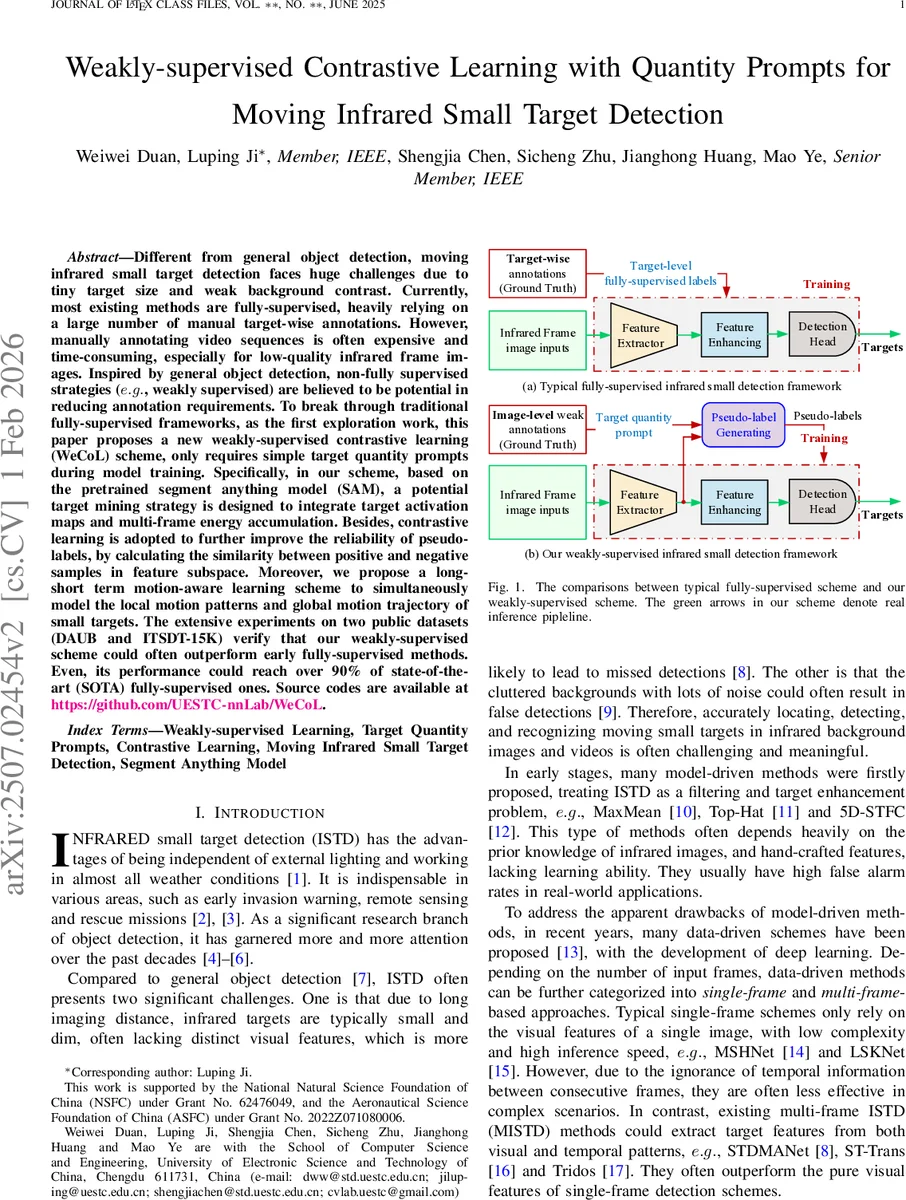

Unlike general object detection, moving infrared small target detection faces huge challenges due to tiny target size and weak background contrast. Currently, most existing methods are fully supervised and rely heavily on a large number of manual target-wise annotations. However, manually annotating video sequences is often expensive and time-consuming, especially for low-quality infrared frames. Inspired by general object detection, non-fully supervised strategies (e.g., weakly supervised ones) are believed to have potential for reducing annotation requirements. To break away from traditional fully-supervised frameworks, this paper presents a first exploration: a new weakly-supervised contrastive learning (WeCoL) scheme that requires only simple target quantity prompts during model training. Specifically, based on the pretrained Segment Anything Model (SAM), a potential target mining strategy is designed to integrate target activation maps and multi-frame energy accumulation. In addition, contrastive learning is adopted to further improve the reliability of pseudo-labels by calculating the similarity between positive and negative samples in a feature subspace. Moreover, we propose a long-short term motion-aware learning scheme to simultaneously model the local motion patterns and global motion trajectory of small targets. Extensive experiments on two public datasets (DAUB and ITSDT-15K) verify that our weakly-supervised scheme can often outperform early fully-supervised methods. Its performance can even reach over 90% of that of state-of-the-art (SOTA) fully-supervised ones.

💡 Research Summary

The paper addresses moving infrared small target detection (MISTD), a challenging problem in which targets occupy only a few pixels and show weak contrast against cluttered backgrounds. Traditional deep‑learning approaches for this task rely heavily on fully‑supervised training with abundant target‑wise annotations, a requirement that is prohibitively expensive for infrared video data. To overcome this bottleneck, the authors propose a novel weakly‑supervised framework called WeCoL (Weakly‑supervised Contrastive Learning with Quantity Prompts).

WeCoL’s core idea is to replace detailed bounding‑box annotations with a much cheaper form of supervision: the number of targets (quantity prompt) present in each key frame. This scalar information is used to guide the generation and refinement of pseudo‑labels, which subsequently supervise the detector. The framework consists of three tightly integrated modules:

Potential Target Mining (PTM).

- A pretrained Segment‑Anything‑Model (SAM) is employed as a powerful zero‑shot segmenter.

- Two complementary cues are extracted from the video: (a) an activation map M produced by a pretrained InfMAE model, highlighting regions likely to contain infrared targets; and (b) a multi‑frame energy response E obtained by applying high‑pass filters and a Laplacian operator across consecutive frames, which accumulates motion‑induced energy.

- M and E are fused, and peak points P are detected. The known target count K for the key frame is used as a soft constraint to keep only the top‑K peaks. These peaks serve as adaptive point prompts for SAM, which generates initial segmentation masks (pseudo‑labels) G for the key frame.
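The quantity-constrained peak selection in PTM can be sketched as follows. This is a minimal illustration, not the authors' implementation: the summary does not say how M and E are fused, so the weighted sum with a hypothetical `alpha` parameter, the min-max normalization, and the square neighbourhood used for peak detection are all assumptions. The function name `top_k_peaks` is likewise made up for this example.

```python
import numpy as np

def top_k_peaks(activation, energy, k, alpha=0.5, radius=1):
    """Fuse two cue maps and return up to k peak coordinates as (row, col).

    The weighted-sum fusion and the `alpha` / `radius` parameters are
    assumptions made for illustration only.
    """
    def normalize(x):
        x = x.astype(np.float64)
        span = x.max() - x.min()
        return (x - x.min()) / span if span > 0 else np.zeros_like(x)

    fused = alpha * normalize(activation) + (1.0 - alpha) * normalize(energy)

    # A pixel counts as a peak if it equals the maximum of its
    # (2*radius+1) x (2*radius+1) neighbourhood and is strictly positive.
    h, w = fused.shape
    padded = np.pad(fused, radius, mode="constant", constant_values=-np.inf)
    neighbourhood_max = np.full_like(fused, -np.inf)
    for dy in range(2 * radius + 1):
        for dx in range(2 * radius + 1):
            window = padded[dy:dy + h, dx:dx + w]
            neighbourhood_max = np.maximum(neighbourhood_max, window)
    is_peak = (fused == neighbourhood_max) & (fused > 0)

    # Quantity prompt: keep only the k strongest peaks.
    rows, cols = np.nonzero(is_peak)
    order = np.argsort(-fused[rows, cols], kind="stable")[:k]
    return [(int(rows[i]), int(cols[i])) for i in order]
```

In a full pipeline, the returned coordinates would be handed to SAM as point prompts for the key frame; that step is omitted here because it depends on the segmentation model's own interface.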

Pseudo‑label Contrastive Learning (PCL).

- Inspired by Multiple‑Instance Learning (MIL) used in weakly‑supervised object detection, a MIL classifier produces a confidence score S for each candidate region.

- Candidates are split into positive set Q and negative set H based on their scores and the quantity prompt K (top‑K highest‑scoring proposals become positives).

- A contrastive loss is defined in the feature space: a positive‑positive term L_pos that minimizes cosine distance among positives, and a positive‑negative term L_neg that maximizes distance between positives and negatives. The total loss combines the detection loss with λ‑weighted contrastive terms. This process filters noisy proposals out of the initial pseudo‑labels and yields refined pseudo‑labels G_n, which act as ground truth for detector training.
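The count-guided split and the two contrastive terms can be illustrated with a short NumPy sketch. The exact loss formulas are not given in this summary, so the forms below are assumptions: L_pos is taken as the mean cosine distance over distinct positive pairs, L_neg as the mean clamped cosine similarity between positives and negatives, and the total as `det_loss + lam * (l_pos + l_neg)`. All function names and the `lam` default are hypothetical.

```python
import numpy as np

def split_by_quantity(scores, k):
    """Top-k scoring candidates become positives (Q), the rest negatives (H)."""
    order = np.argsort(-np.asarray(scores), kind="stable")
    return order[:k], order[k:]

def cosine_similarity_matrix(a, b, eps=1e-12):
    a = a / (np.linalg.norm(a, axis=1, keepdims=True) + eps)
    b = b / (np.linalg.norm(b, axis=1, keepdims=True) + eps)
    return a @ b.T

def contrastive_terms(features, scores, k):
    """Return (l_pos, l_neg) under the assumed loss forms described above."""
    pos_idx, neg_idx = split_by_quantity(scores, k)
    pos, neg = features[pos_idx], features[neg_idx]

    # Positive-positive: pull positives together (low cosine distance).
    if len(pos) > 1:
        sim_pp = cosine_similarity_matrix(pos, pos)
        off_diag = ~np.eye(len(pos), dtype=bool)
        l_pos = float(np.mean(1.0 - sim_pp[off_diag]))
    else:
        l_pos = 0.0

    # Positive-negative: push positives away from negatives by
    # penalising any remaining positive similarity.
    if len(pos) > 0 and len(neg) > 0:
        sim_pn = cosine_similarity_matrix(pos, neg)
        l_neg = float(np.mean(np.maximum(sim_pn, 0.0)))
    else:
        l_neg = 0.0
    return l_pos, l_neg

def total_loss(det_loss, l_pos, l_neg, lam=0.1):
    """Assumed combination: detection loss plus lambda-weighted contrastive terms."""
    return det_loss + lam * (l_pos + l_neg)
```

Both terms are zero when the positives share one direction in feature space and are orthogonal to every negative, which is the configuration the refinement step is meant to encourage.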

Long‑short Term Motion‑aware Learning (LTM).

- Small infrared targets exhibit subtle, rapid motions that are difficult to capture with single‑frame features. LTM explicitly models both short‑term local motion (using three‑frame differencing, discrete cosine transform, and convolution‑residual blocks) and long‑term global motion (using temporal attention across the whole frame sequence).

- The two streams are fused at the feature level, providing complementary information that enhances robustness against background clutter and illumination variations.
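To make the short-term cue concrete, here is a minimal sketch of classical three-frame differencing. It is not the paper's LTM module: the DCT, the convolution-residual blocks, and the temporal attention stream are left out. Taking the pixel-wise minimum of the two absolute differences is one common continuous variant, and is an assumption here. The function name is hypothetical.

```python
import numpy as np

def three_frame_difference(prev_frame, cur_frame, next_frame):
    """Highlight pixels that changed in BOTH consecutive frame pairs.

    The pixel-wise minimum of the two absolute differences keeps the
    target's location in the middle frame and suppresses the "ghost"
    responses it leaves at its previous and next positions.
    """
    prev_f, cur_f, next_f = (
        f.astype(np.float64) for f in (prev_frame, cur_frame, next_frame)
    )
    d_backward = np.abs(cur_f - prev_f)
    d_forward = np.abs(next_f - cur_f)
    return np.minimum(d_backward, d_forward)

def make_frame(target_rc, size=5, background=10.0, intensity=50.0):
    """Toy frame: flat background with a single bright pixel."""
    frame = np.full((size, size), background)
    frame[target_rc] = intensity
    return frame

# A one-pixel target moving diagonally across a static background.
response = three_frame_difference(
    make_frame((1, 1)), make_frame((2, 2)), make_frame((3, 3))
)
```

In this toy sequence, only the target's position in the middle frame, pixel (2, 2), has a non-zero response, because it is the only pixel that differs from both neighbouring frames.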

The overall training pipeline proceeds as follows: multi‑frame features are extracted with CSPDarkNet, PTM generates initial pseudo‑labels, PCL refines them, and LTM learns motion‑aware representations supervised by the refined labels. During inference, only the trained LTM detector is used; SAM and the contrastive refinement stages are omitted, keeping the runtime efficient (≈0.25 s per frame on an RTX 3090).

Extensive experiments were conducted on two public benchmarks: DAUB and ITSDT‑15K. WeCoL achieved PR‑AUC scores of 0.87 and 0.84 respectively, which correspond to over 90 % of the performance of the best fully‑supervised state‑of‑the‑art methods (e.g., ST‑Trans, Tridos). Notably, in low‑contrast, high‑noise scenarios, WeCoL reduced false‑positive rates by more than 30 % compared with fully supervised baselines. Ablation studies confirmed the importance of each component: removing PTM dropped performance to 0.73 PR‑AUC, omitting PCL to 0.78, and discarding LTM to 0.81.

The authors also discuss limitations. The framework assumes that the target count K is known; inaccuracies in K can degrade performance, suggesting a need for automatic count estimation in future work. SAM, while powerful, is originally trained on RGB images, so its adaptation to infrared data may not be optimal; fine‑tuning SAM on infrared modalities could further improve results. Finally, the current system focuses on point‑like small targets; extending it to handle elongated or diffuse infrared signatures would broaden its applicability.

In summary, the paper makes four major contributions: (1) introducing a weakly‑supervised contrastive learning paradigm for infrared small‑target detection that relies only on quantity prompts; (2) designing a SAM‑driven potential target mining strategy that fuses activation maps and multi‑frame energy accumulation; (3) proposing a pseudo‑label contrastive learning mechanism guided by target count to obtain reliable supervision; and (4) developing a long‑short term motion‑aware module that jointly captures local and global motion cues. The results demonstrate that high‑quality detection can be achieved with dramatically reduced annotation effort, opening a promising direction for practical infrared surveillance and remote‑sensing systems.
