Bridging GANs and Bayesian Neural Networks via Partial Stochasticity

Generative Adversarial Networks (GANs) are popular and successful generative models. Despite their success, optimization is notoriously challenging. In this work, we explain the success and limitations of GANs by casting them as Bayesian neural networks with partial stochasticity. This interpretation allows us to establish conditions of universal approximation and to rewrite the adversarial-style optimization of several variants of GANs as the optimization of a proxy for the likelihood obtained by marginalizing out the stochastic variables. Following this interpretation, the need for regularization becomes apparent, and we propose to adopt strategies to smooth the loss landscape and methods to search for solutions with minimum description length, which are associated with flat minima and good generalization. Results obtained on a wide range of experiments indicate that these strategies lead to performance improvements and pave the way to a deeper understanding of GANs.

💡 Research Summary

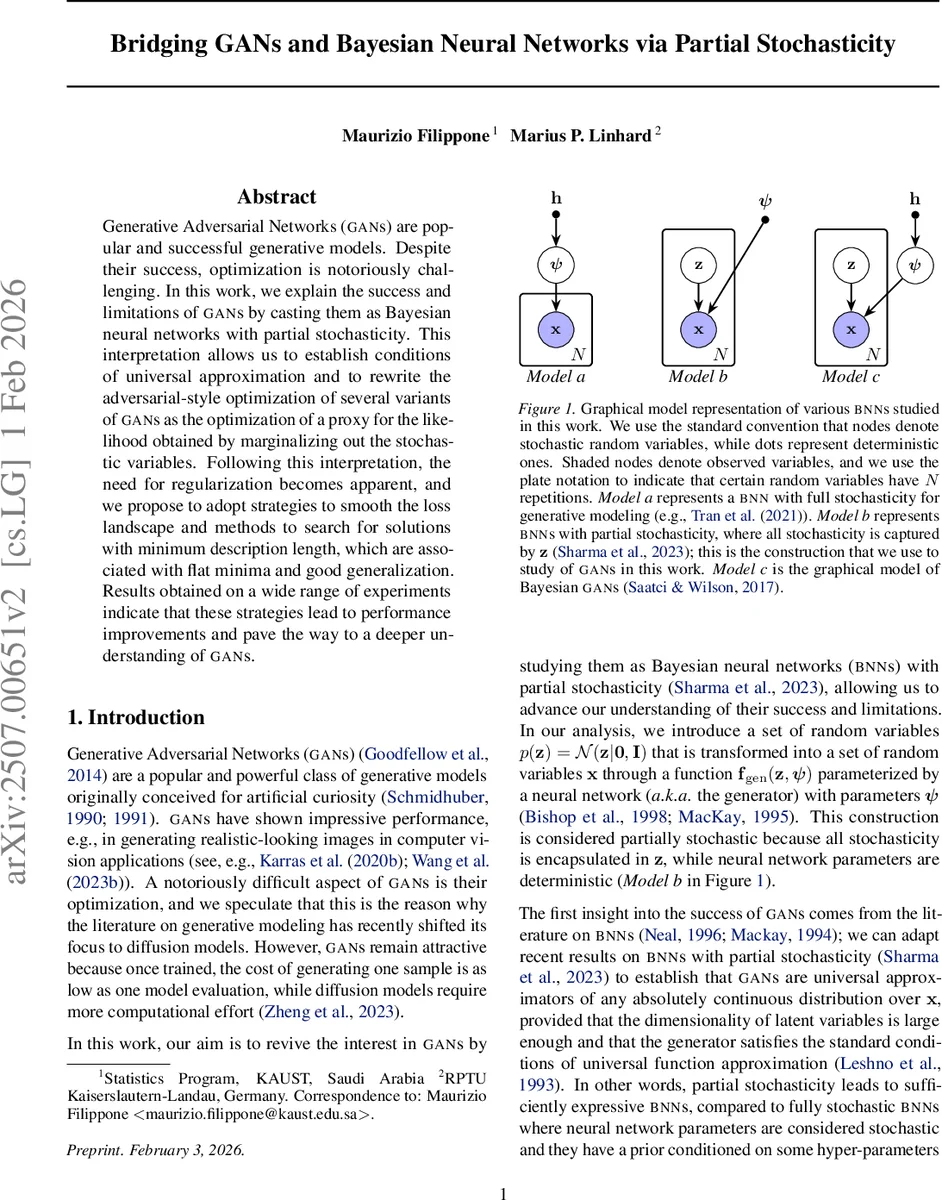

This paper offers a fresh probabilistic perspective on Generative Adversarial Networks (GANs) by casting them as Bayesian Neural Networks (BNNs) with partial stochasticity: the latent variables z are random, while the network parameters ψ are deterministic and subject to optimization. This formulation corresponds to “Model b” in the authors’ graphical taxonomy and contrasts with fully stochastic BNNs where both weights and latent variables are random. By leveraging recent results on partially stochastic BNNs, the authors prove that GANs are universal approximators of any absolutely continuous distribution over the data space, provided the latent dimensionality P is at least as large as the data dimensionality D and the generator satisfies standard universal approximation conditions.

The core technical contribution is the reinterpretation of the GAN training objective as a sample‑based proxy for the intractable marginal likelihood p(X|ψ)=∫p(X|Z,ψ)p(Z)dZ. By integrating out z, the marginal likelihood can be expressed as a KL divergence between the true data distribution π(x) and the model distribution p(x|ψ). Since p(x|ψ) lacks a closed‑form density, direct KL minimization is infeasible. The authors show that the various divergences used in popular GAN variants—Jensen‑Shannon (original GAN), f‑divergences (f‑GAN), 1‑Wasserstein distance (W‑GAN), and Maximum Mean Discrepancy (MMD‑GAN)—are precisely tractable surrogates for this KL, each requiring a discriminator (or kernel function) to estimate the divergence from samples. In this view, the discriminator is not an intrinsic component of the latent‑variable model but an auxiliary tool for estimating a statistical distance between π and p.

A critical insight is that, because GAN training essentially performs a maximum‑likelihood‑like estimation, there is no built‑in mechanism to prevent over‑fitting. The paper demonstrates this with a synthetic 2‑D Gaussian experiment: when the latent dimension P<D the model cannot capture the data distribution; when P>D the model can, but overly complex generators converge to sharp minima (large top Hessian eigenvalues), whereas a generator with just enough capacity finds a flat minimum and yields better generalization.

To address over‑fitting and the instability of sharp minima, the authors propose three complementary regularization strategies:

- Likelihood Relaxation – Replace the Dirac delta likelihood (which makes the loss landscape degenerate) with a small‑variance Gaussian, smoothing the objective.

- Gradient Regularization – Penalize the norm of gradients of both generator and discriminator (or Jacobian regularization), encouraging flatter regions of the loss surface.

- Approximate Bayesian Inference – Apply Monte‑Carlo Dropout (MCD) to the generator and discriminator, interpreting dropout as variational inference. This introduces a posterior over ψ, providing an implicit ensemble effect that regularizes the model.

Extensive experiments across image synthesis benchmarks (including high‑resolution datasets) show that each of these techniques, individually and especially in combination, improves standard GAN metrics such as FID, Inception Score, and Precision‑Recall. Moreover, models trained with flat‑minimum search and MCD exhibit greater training stability, reduced mode collapse, and faster convergence.

In summary, the paper makes four major contributions:

- Derivation of GAN objectives from first‑principles – showing they are proxies for a marginalized likelihood.

- Theoretical guarantees – establishing universal approximation conditions for GANs viewed as partially stochastic BNNs.

- A unified understanding of regularization – linking likelihood relaxation, gradient penalties, and Bayesian dropout to flat minima and minimum description length.

- Empirical validation – demonstrating that these insights translate into tangible performance gains on a wide range of generative tasks.

By bridging GANs and Bayesian neural networks through partial stochasticity, the work not only clarifies why GANs succeed and where they fail, but also provides a principled roadmap for designing more robust, generalizable generative models.

Comments & Academic Discussion

Loading comments...

Leave a Comment