Conflicts in Texts: Data, Implications and Challenges

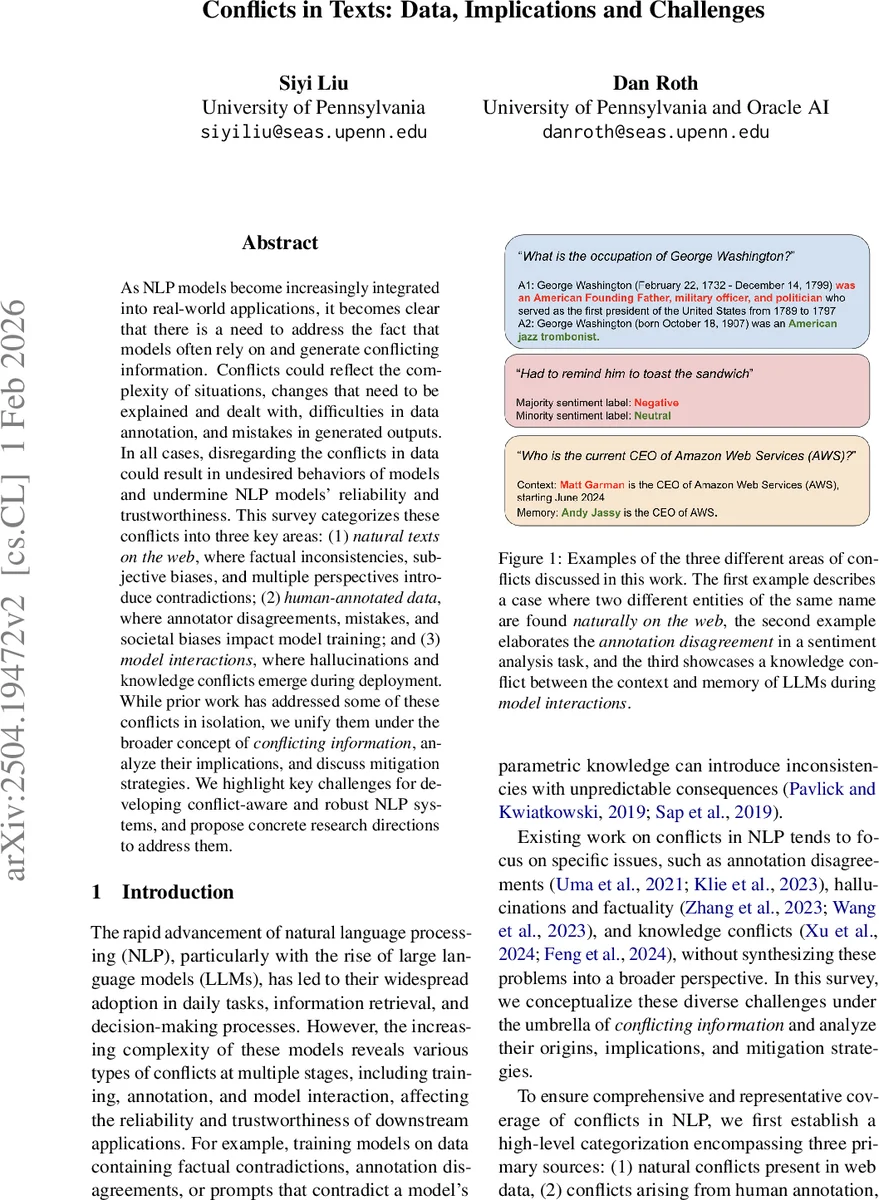

As NLP models become increasingly integrated into real-world applications, it becomes clear that there is a need to address the fact that models often rely on and generate conflicting information. Conflicts could reflect the complexity of situations, changes that need to be explained and dealt with, difficulties in data annotation, and mistakes in generated outputs. In all cases, disregarding the conflicts in data could result in undesired behaviors of models and undermine NLP models’ reliability and trustworthiness. This survey categorizes these conflicts into three key areas: (1) natural texts on the web, where factual inconsistencies, subjective biases, and multiple perspectives introduce contradictions; (2) human-annotated data, where annotator disagreements, mistakes, and societal biases impact model training; and (3) model interactions, where hallucinations and knowledge conflicts emerge during deployment. While prior work has addressed some of these conflicts in isolation, we unify them under the broader concept of conflicting information, analyze their implications, and discuss mitigation strategies. We highlight key challenges and future directions for developing conflict-aware NLP systems that can reason over and reconcile conflicting information more effectively.

💡 Research Summary

The paper “Conflicts in Texts: Data, Implications and Challenges” provides a comprehensive survey of the various forms of conflict that arise in natural language processing (NLP) pipelines and their impact on model reliability, fairness, and trustworthiness. The authors organize conflicts into three high‑level sources: (1) natural texts on the web, (2) human‑annotated data, and (3) model interactions. For each source they identify sub‑categories, trace representative works, discuss the practical implications, and summarize mitigation strategies.

1. Conflicts in Natural Web Texts

These conflicts stem from the inherent messiness of publicly available text. The authors distinguish factual conflicts (ambiguities, contradictory evidence, temporal or geographic dependence) and opinion‑related conflicts (multiple perspectives, framing bias). They cite datasets such as AmbigQA (which quantifies question ambiguity), SituatedQA (temporal/geographic context), WhoQA (entity‑name disambiguation), Con‑traDoc (internal contradictions in long documents), and PERSPECTRUM (multi‑viewpoint discovery). Empirical findings show that large language models (LLMs) often fail to detect or correctly answer ambiguous or contradictory queries, suffering from confirmation bias that favors internally memorized knowledge over external evidence. The paper reviews mitigation approaches including uncertainty estimation with selective abstention, “disambiguate‑then‑answer” pipelines, time‑aware models, and fine‑tuning with human‑written explanations.

2. Conflicts in Human‑Annotated Data

Annotation disagreements are pervasive across both subjective tasks (sentiment analysis, hate‑speech detection) and seemingly objective tasks (natural language inference). The survey highlights that majority‑vote labeling can mask high‑disagreement examples, leading models to over‑confidently predict incorrect labels. Moreover, societal biases (race, gender, geography) embedded in annotations propagate unfair behavior in downstream models. Mitigation strategies discussed include multi‑label learning, modeling annotator uncertainty, fairness‑aware loss functions, and improving annotation guidelines to capture the nuance of human disagreement.

3. Conflicts in Model Interactions

When models are deployed, two major conflict types emerge: knowledge conflicts (between parametric memory and external context) and hallucinations (factual or contextual). Studies such as Longpre et al. (2021) demonstrate that LLMs often rely on memorized facts even when contradictory evidence is presented, leading to confirmation bias. Hallucinations degrade the performance of retrieval‑augmented generation (RAG) pipelines and can produce misinformation. The authors summarize countermeasures like separating parametric and contextual knowledge, retrieval‑augmentation, hallucination detection classifiers, and knowledge‑graph verification.

Taxonomy and Methodology

Figure 2 in the paper presents a taxonomy that maps each conflict type to its origins, implications, and mitigation techniques. The authors describe a systematic literature‑crawling method (seed papers, citation chaining, targeted database searches) to ensure coverage. Tables 1‑3 compile datasets, model architectures, and evaluation metrics for each conflict category.

Implications

Ignoring conflicts leads to degraded model accuracy, over‑confidence, cultural bias, and reduced user trust. For example, factual conflicts can cause a QA system to return contradictory answers, while opinion conflicts can result in biased summarization. Annotation conflicts can embed systemic inequities, and model‑interaction conflicts can generate harmful misinformation.

Future Directions

The survey outlines several research avenues: (a) automatic detection and quantification of conflicts across corpora; (b) conflict‑aware training objectives that explicitly model uncertainty and disagreement; (c) multi‑objective optimization balancing factual correctness, perspective diversity, and fairness; (d) human‑in‑the‑loop mechanisms where users can flag or resolve conflicts; and (e) expanding evaluation to multilingual and culturally diverse settings to mitigate Western‑centric bias.

In summary, the paper unifies disparate strands of conflict‑related research under a single framework, providing a valuable roadmap for building conflict‑aware NLP systems that can reason about, reconcile, and ultimately reduce the adverse effects of contradictory information in data, annotations, and model behavior.

Comments & Academic Discussion

Loading comments...

Leave a Comment