DenseFormer: Learning Dense Depth Map from Sparse Depth and Image via Conditional Diffusion Model

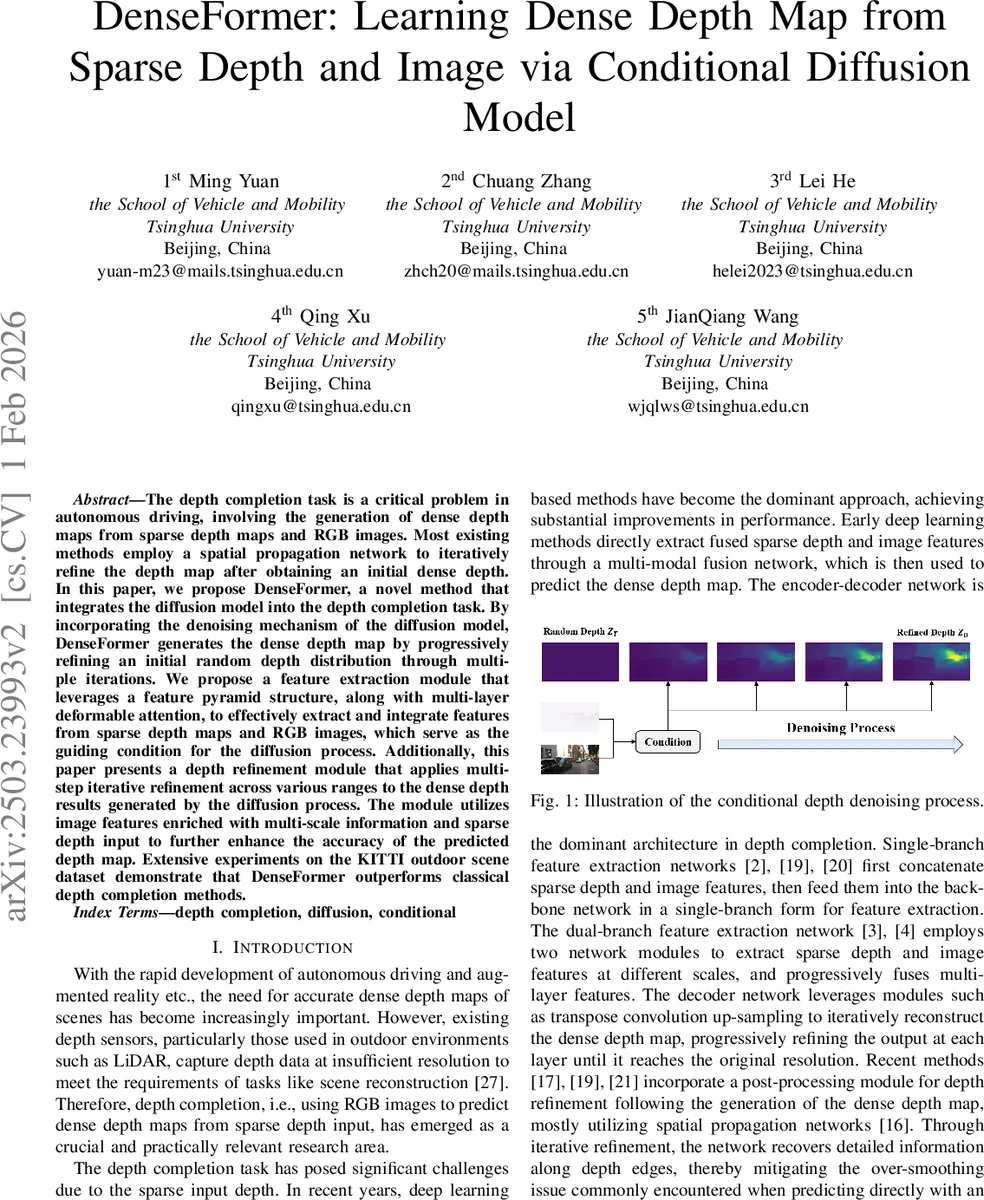

The depth completion task is a critical problem in autonomous driving, involving the generation of dense depth maps from sparse depth maps and RGB images. Most existing methods employ a spatial propagation network to iteratively refine the depth map after obtaining an initial dense depth. In this paper, we propose DenseFormer, a novel method that integrates the diffusion model into the depth completion task. By incorporating the denoising mechanism of the diffusion model, DenseFormer generates the dense depth map by progressively refining an initial random depth distribution through multiple iterations. We propose a feature extraction module that leverages a feature pyramid structure, along with multi-layer deformable attention, to effectively extract and integrate features from sparse depth maps and RGB images, which serve as the guiding condition for the diffusion process. Additionally, this paper presents a depth refinement module that applies multi-step iterative refinement across various ranges to the dense depth results generated by the diffusion process. The module utilizes image features enriched with multi-scale information and sparse depth input to further enhance the accuracy of the predicted depth map. Extensive experiments on the KITTI outdoor scene dataset demonstrate that DenseFormer outperforms classical depth completion methods.

💡 Research Summary

DenseFormer introduces a novel conditional diffusion framework for the depth completion problem, where sparse LiDAR measurements and an RGB image are used to generate a dense depth map. Unlike conventional pipelines that first predict an initial dense depth with an encoder‑decoder network and then refine it using spatial propagation networks (SPNs), DenseFormer treats depth completion as a generative denoising process. The method consists of three main components.

-

Guidance Feature Extraction – A ResNet backbone extracts multi‑scale visual features from the RGB image, while a Feature Pyramid Network (FPN) processes the sparse depth map at several resolutions. To fuse these modalities efficiently, a deformable attention mechanism is employed. Deformable attention learns dynamic sampling offsets, allowing the network to focus on salient regions without the quadratic cost of standard self‑attention, which is crucial for high‑resolution inputs. The fused multi‑scale features constitute the conditional guidance (cond) for the diffusion model.

-

Diffusion Process Module – The core generative engine follows the denoising diffusion probabilistic model (DDPM) paradigm, but conditioned on the guidance features. Starting from a random depth distribution (z_T), the network (\mu_\theta(z_t, t, cond)) predicts the previous timestep’s depth (z_{t-1}) using a lightweight U‑Net architecture. DDIM (Deterministic Denoising Diffusion Implicit Models) inference is adopted to accelerate sampling while preserving quality. Each denoising step integrates multi‑scale conditional information, progressively shaping the random initialization into a plausible dense depth map.

-

Depth Refinement Module – After the diffusion stage, a post‑processing block refines the output using a spatial propagation network that is also guided by the deformable‑attention‑enhanced features and the original sparse depth. Multi‑range neighbor sets and learned affinity weights are used to update each pixel, improving edge sharpness and reducing over‑smoothing.

Extensive experiments on the KITTI benchmark demonstrate that DenseFormer outperforms state‑of‑the‑art depth completion methods across all standard metrics (RMSE, MAE, iRMSE). Ablation studies confirm the contribution of each design choice: the pyramid‑based depth encoder, deformable attention fusion, the number of diffusion steps, and the refinement network all provide measurable gains. Moreover, the architecture remains computationally efficient, making it suitable for real‑time autonomous driving scenarios.

In summary, DenseFormer pioneers the application of conditional diffusion models to outdoor depth completion, showing that a generative denoising perspective can effectively handle the sparsity and noise inherent in LiDAR data while leveraging rich visual cues. By unifying diffusion‑based generation with traditional propagation‑based refinement, the paper offers a compelling new direction for high‑quality, real‑time depth reconstruction in autonomous systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment