Vectra: A New Metric, Dataset, and Model for Visual Quality Assessment in E-Commerce In-Image Machine Translation

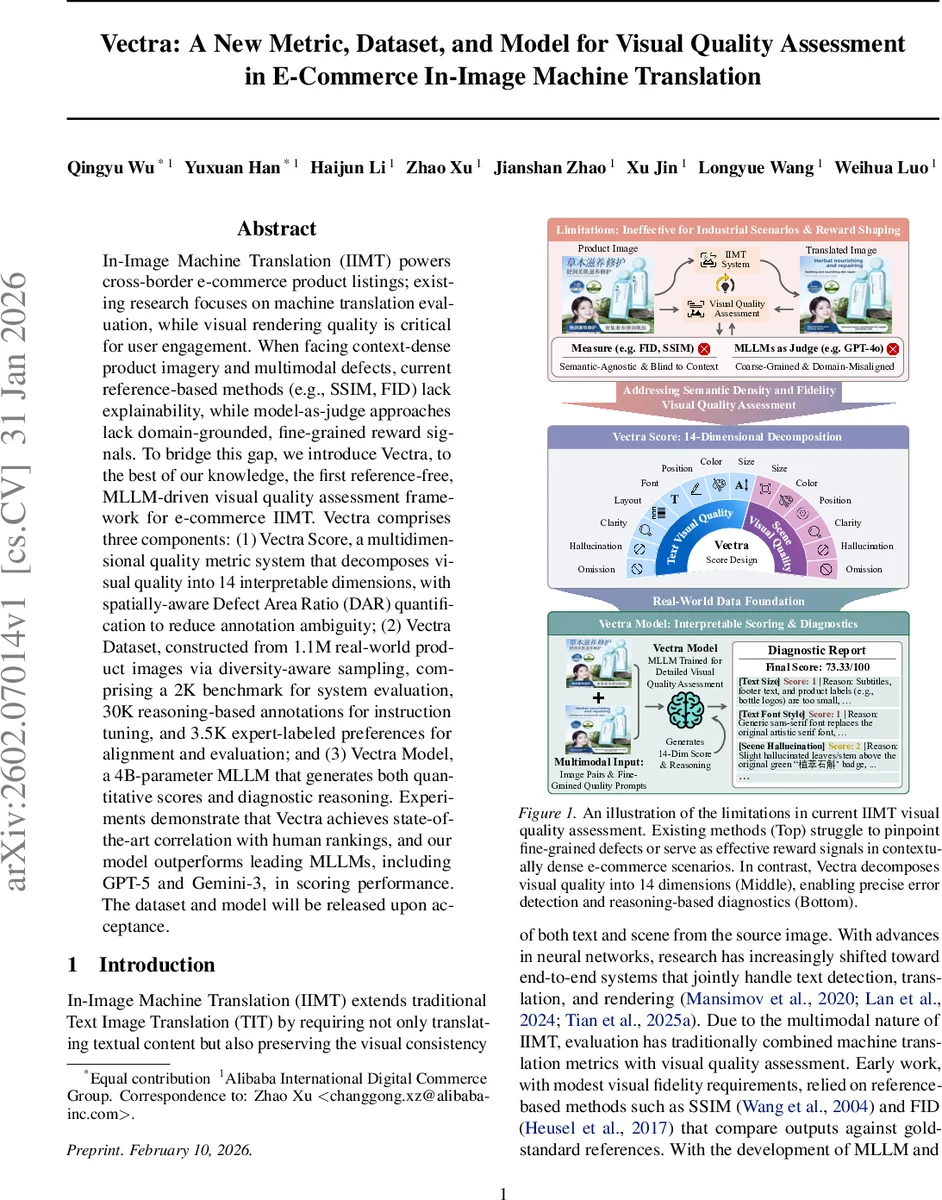

In-Image Machine Translation (IIMT) powers cross-border e-commerce product listings; existing research focuses on machine translation evaluation, while visual rendering quality is critical for user engagement. When facing context-dense product imagery and multimodal defects, current reference-based methods (e.g., SSIM, FID) lack explainability, while model-as-judge approaches lack domain-grounded, fine-grained reward signals. To bridge this gap, we introduce Vectra, to the best of our knowledge, the first reference-free, MLLM-driven visual quality assessment framework for e-commerce IIMT. Vectra comprises three components: (1) Vectra Score, a multidimensional quality metric system that decomposes visual quality into 14 interpretable dimensions, with spatially-aware Defect Area Ratio (DAR) quantification to reduce annotation ambiguity; (2) Vectra Dataset, constructed from 1.1M real-world product images via diversity-aware sampling, comprising a 2K benchmark for system evaluation, 30K reasoning-based annotations for instruction tuning, and 3.5K expert-labeled preferences for alignment and evaluation; and (3) Vectra Model, a 4B-parameter MLLM that generates both quantitative scores and diagnostic reasoning. Experiments demonstrate that Vectra achieves state-of-the-art correlation with human rankings, and our model outperforms leading MLLMs, including GPT-5 and Gemini-3, in scoring performance. The dataset and model will be released upon acceptance.

💡 Research Summary

The paper addresses the critical yet under‑explored problem of visual quality assessment for In‑Image Machine Translation (IIMT) in cross‑border e‑commerce. While prior work has focused almost exclusively on textual translation metrics and reference‑based image similarity measures such as SSIM or FID, these approaches fail to capture fine‑grained visual defects that arise in dense product imagery—issues like font style mismatches, layout distortions, color shifts, or hallucinated graphical elements. Moreover, recent model‑as‑judge methods that rely on large multimodal language models (MLLMs) provide limited domain‑specific feedback and lack interpretability. To fill this gap, the authors introduce Vectra, a comprehensive, reference‑free framework consisting of three tightly integrated components: (1) Vectra Score, a multidimensional metric that decomposes visual quality into 14 interpretable dimensions, (2) Vectra Dataset, a large‑scale, diversity‑aware collection of real‑world e‑commerce product images, and (3) Vectra Model, a 4‑billion‑parameter MLLM fine‑tuned to output both quantitative scores and natural‑language diagnostic reasoning.

Vectra Score

The metric separates quality into “Accuracy” (e.g., text omission, hallucination) and “Style” (e.g., font size, color, layout) categories, each containing eight and six sub‑dimensions respectively. Each dimension receives an ordinal score from 1 (poor) to 3 (excellent). To quantify spatial severity, the authors propose Defect Area Ratio (DAR), which measures the proportion of defective pixels relative to the total target region, computed separately for textual and non‑textual areas. Empirical calibration with 5 e‑commerce experts identifies a threshold τ = 0.3: DAR ≤ 0.3 is considered “fair” (score 2), while DAR > 0.3 is “poor” (score 1). The overall Vectra Score is obtained by min‑max normalizing the mean accuracy and style scores to

Comments & Academic Discussion

Loading comments...

Leave a Comment