Towards Multiscale Graph-based Protein Learning with Geometric Secondary Structural Motifs

Graph neural networks (GNNs) have emerged as powerful tools for learning protein structures by capturing spatial relationships at the residue level. However, existing GNN-based methods often face challenges in learning multiscale representations and modeling long-range dependencies efficiently. In this work, we propose an efficient multiscale graph-based learning framework tailored to proteins. Our proposed framework contains two crucial components: (1) It constructs a hierarchical graph representation comprising a collection of fine-grained subgraphs, each corresponding to a secondary structure motif (e.g., $α$-helices, $β$-strands, loops), and a single coarse-grained graph that connects these motifs based on their spatial arrangement and relative orientation. (2) It employs two GNNs for feature learning: the first operates within individual secondary motifs to capture local interactions, and the second models higher-level structural relationships across motifs. Our modular framework allows a flexible choice of GNN in each stage. Theoretically, we show that our hierarchical framework preserves the desired maximal expressiveness, ensuring no loss of critical structural information. Empirically, we demonstrate that integrating baseline GNNs into our multiscale framework remarkably improves prediction accuracy and reduces computational cost across various benchmarks.

💡 Research Summary

The paper introduces a novel multiscale graph‑based framework for learning protein structures that explicitly uses secondary‑structure motifs (α‑helices, β‑strands, loops) as intermediate building blocks. The authors first run the widely adopted DSSP algorithm on the protein's 3D structure. DSSP assigns each residue a secondary‑structure token from its backbone hydrogen‑bonding pattern, and consecutive residues with the same token are grouped into a “motif”. For each motif, a fine‑grained subgraph is constructed in which residues are nodes and edges encode short‑range spatial or chemical relationships. A local frame g_i is computed for each residue, and the relative orientation between frames, g_i^T g_j, is stored as an edge attribute. Because the frames transform together with the structure, this attribute is invariant to global rotations and reflections. All motifs are then abstracted into a single coarse‑grained graph: each motif becomes a node, and edges capture the spatial arrangement and relative orientation of motifs using the same frame‑based features. This hierarchical representation is provably sparse (total edge count O(N) for N residues) and preserves geometric fidelity.
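The segmentation and frame construction described above can be sketched as follows. This is a minimal NumPy illustration under stated assumptions, not the authors' implementation. The 3‑state reduction of DSSP's 8‑state alphabet, the backbone Gram-Schmidt frames, the 8 Å CA cutoff for intra‑motif edges, and all function names (`segment_motifs`, `local_frames`, `intra_motif_edges`, `relative_orientations`) are hypothetical choices. The sketch checks that g_i^T g_j is unchanged by a rigid rotation plus translation. Because the third frame axis here is a cross product, these particular frames handle proper rotations only. Covering reflections as well would need a reflection‑aware frame construction.

```python
import numpy as np

# Assumed 3-state reduction of DSSP's 8-state alphabet (illustrative only):
# H, G, I -> helix "H"; E, B -> strand "E"; every other code -> loop "L".
DSSP_TO_SS = {"H": "H", "G": "H", "I": "H", "E": "E", "B": "E"}


def segment_motifs(dssp: str) -> list[tuple[str, int, int]]:
    """Group consecutive residues sharing a token into (token, start, end) motifs, end exclusive."""
    tokens = [DSSP_TO_SS.get(ch, "L") for ch in dssp]
    motifs, start = [], 0
    for i in range(1, len(tokens) + 1):
        if i == len(tokens) or tokens[i] != tokens[start]:
            motifs.append((tokens[start], start, i))
            start = i
    return motifs


def local_frames(n: np.ndarray, ca: np.ndarray, c: np.ndarray) -> np.ndarray:
    """Per-residue orthonormal frames, shape (L, 3, 3) with axes as columns.

    Gram-Schmidt on (C - CA, N - CA); the third axis is their cross product, so the
    frames follow proper rotations of the structure (a reflection would flip e3).
    """
    e1 = c - ca
    e1 = e1 / np.linalg.norm(e1, axis=-1, keepdims=True)
    u = n - ca
    e2 = u - np.sum(u * e1, axis=-1, keepdims=True) * e1
    e2 = e2 / np.linalg.norm(e2, axis=-1, keepdims=True)
    e3 = np.cross(e1, e2)
    return np.stack([e1, e2, e3], axis=-1)


def intra_motif_edges(ca: np.ndarray, motifs, cutoff: float = 8.0) -> np.ndarray:
    """Directed edges between residues of the same motif whose CA atoms lie within `cutoff`."""
    edges = [
        (i, j)
        for _, s, e in motifs
        for i in range(s, e)
        for j in range(s, e)
        if i != j and np.linalg.norm(ca[i] - ca[j]) < cutoff
    ]
    return np.array(edges, dtype=int).reshape(-1, 2)


def relative_orientations(frames: np.ndarray, edges: np.ndarray) -> np.ndarray:
    """Edge attribute g_i^T g_j (a 3x3 matrix) for every edge (i, j)."""
    gi, gj = frames[edges[:, 0]], frames[edges[:, 1]]
    return np.swapaxes(gi, -1, -2) @ gj


# Synthetic backbone (random coordinates, not a real protein) and a made-up DSSP string.
rng = np.random.default_rng(0)
ca = np.cumsum(rng.normal(scale=2.0, size=(12, 3)), axis=0)
n_atoms = ca + rng.normal(size=(12, 3))
c_atoms = ca + rng.normal(size=(12, 3))
motifs = segment_motifs("LLHHHHHLLEEE")
edges = intra_motif_edges(ca, motifs)
rel = relative_orientations(local_frames(n_atoms, ca, c_atoms), edges)

# Apply a random proper rotation plus a translation; g_i^T g_j should not change.
q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
q = q if np.linalg.det(q) > 0 else -q


def move(x: np.ndarray) -> np.ndarray:
    return x @ q.T + np.array([5.0, -3.0, 1.0])


rel_moved = relative_orientations(local_frames(move(n_atoms), move(ca), move(c_atoms)), edges)
print(motifs)  # [('L', 0, 2), ('H', 2, 7), ('L', 7, 9), ('E', 9, 12)]
print(np.allclose(rel, rel_moved))  # True
```

Translation drops out because the frames are built from difference vectors, and the rotation cancels in g_i^T Q^T Q g_j.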

Learning proceeds in two stages. Stage 1 applies an off‑the‑shelf graph neural network (e.g., GVP, EGNN, SE(3)‑Transformer) independently to each motif subgraph, producing a motif‑level embedding that aggregates local residue interactions. Stage 2 feeds these embeddings into a second GNN that operates on the coarse‑grained motif graph, thereby modeling long‑range dependencies and the overall fold. The authors prove that, if both GNNs are maximally expressive (i.e., their UPDATE, AGGREGATE, and READOUT functions are injective), the two‑stage pipeline is as expressive as a maximally expressive GNN applied to the original SCHull graph of the protein point cloud. In other words, no structural information is lost when moving from the fine‑grained to the coarse‑grained level.
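A minimal sketch of the two‑stage data flow follows. It is not the authors' code, and it rests on several assumptions:

- Plain sum‑aggregation message passing with random weights stands in for GVP, EGNN, or an SE(3)‑Transformer.
- Residue embeddings are sum‑pooled into motif embeddings.
- Coarse‑graph edges connect motifs whose CA centroids lie within a hypothetical 12 Å cutoff. The paper's motif edges also carry relative‑orientation features, which are omitted here.

All function names are illustrative.

```python
import numpy as np


def mp_layer(h, edges, w_self, w_nbr):
    """Sum-aggregation message passing: h_i <- relu(h_i W_s + (sum of h_j over edges j->i) W_n)."""
    agg = np.zeros_like(h)
    if len(edges):
        np.add.at(agg, edges[:, 1], h[edges[:, 0]])
    return np.maximum(h @ w_self + agg @ w_nbr, 0.0)


def stage1_motif_embeddings(x, res_edges, motif_id, layers):
    """Stage 1: GNN on the residue graph (edges never cross motifs), then sum-pool per motif."""
    h = x
    for w_s, w_n in layers:
        h = mp_layer(h, res_edges, w_s, w_n)
    z = np.zeros((int(motif_id.max()) + 1, h.shape[1]))
    np.add.at(z, motif_id, h)
    return z


def motif_graph_edges(ca, motif_id, cutoff=12.0):
    """Coarse-graph edges between motifs whose CA centroids lie within `cutoff` (assumed value)."""
    n_m = int(motif_id.max()) + 1
    cent = np.stack([ca[motif_id == m].mean(axis=0) for m in range(n_m)])
    dist = np.linalg.norm(cent[:, None, :] - cent[None, :, :], axis=-1)
    src, dst = np.nonzero((dist < cutoff) & ~np.eye(n_m, dtype=bool))
    return np.stack([src, dst], axis=1)


def stage2_readout(z, motif_edges, layers):
    """Stage 2: second GNN on the motif graph, then a sum readout to one protein embedding."""
    for w_s, w_n in layers:
        z = mp_layer(z, motif_edges, w_s, w_n)
    return z.sum(axis=0)


def init_layers(rng, d, n_layers):
    """Random (W_self, W_nbr) pairs; placeholders for trained weights."""
    return [(rng.normal(size=(d, d)) / np.sqrt(d), rng.normal(size=(d, d)) / np.sqrt(d))
            for _ in range(n_layers)]


rng = np.random.default_rng(0)
d = 8
motif_id = np.array([0, 0, 1, 1, 1, 1, 1, 2, 2, 3, 3, 3])  # residue -> motif index
ca = np.cumsum(rng.normal(scale=2.0, size=(12, 3)), axis=0)  # synthetic coordinates
x = rng.normal(size=(12, d))  # placeholder residue features
# Residue edges: sequence neighbours inside the same motif (a simplifying assumption).
fwd = np.array([(i, i + 1) for i in range(11) if motif_id[i] == motif_id[i + 1]])
res_edges = np.concatenate([fwd, fwd[:, ::-1]])
stage1, stage2 = init_layers(rng, d, 2), init_layers(rng, d, 2)

z = stage1_motif_embeddings(x, res_edges, motif_id, stage1)
out = stage2_readout(z, motif_graph_edges(ca, motif_id), stage2)
print(z.shape, out.shape)  # (4, 8) (8,)

# Locality check: perturbing motif 0's residues leaves the other motif embeddings unchanged.
x_pert = x.copy()
x_pert[motif_id == 0] += 1.0
z_pert = stage1_motif_embeddings(x_pert, res_edges, motif_id, stage1)
print(np.allclose(z_pert[1:], z[1:]))  # True
```

Because no residue edge crosses a motif boundary, the residue graph is block‑diagonal. Stage 1 can therefore process every motif in one batched pass, and only Stage 2 sees inter‑motif structure. Sum pooling is used rather than mean or max because those discard multiset size information. Note that this untrained sketch does not itself guarantee the injectivity that the expressiveness result assumes.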

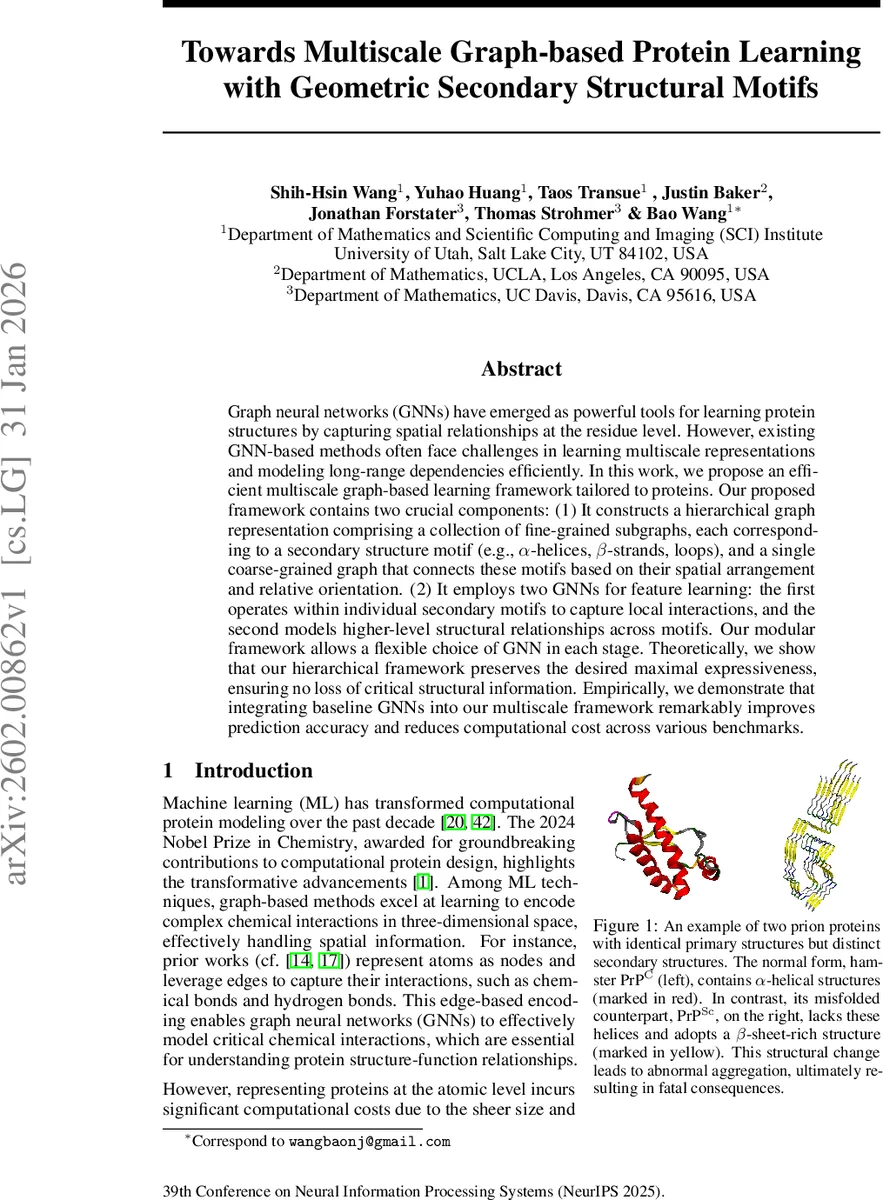

Empirical evaluation spans several protein‑related benchmarks, including protein‑protein interaction prediction, enzyme commission (EC) number classification, and mutation effect estimation. Plugging existing residue‑level GNNs into the proposed hierarchical pipeline yields consistent accuracy improvements of 2–5 percentage points while reducing the number of graph nodes and edges by roughly 30–45%. Consequently, GPU memory consumption and inference time drop by a factor of 1.5–2. A particularly illustrative case study involves the prion protein. Its normal cellular form (PrP^C) and its pathogenic misfolded form (PrP^Sc) share identical primary sequences but differ in secondary‑structure composition. The two‑stage model successfully distinguishes these conformations, highlighting its ability to capture biologically relevant long‑range structural changes that single‑scale models miss.

Overall, the work makes three key contributions: (1) a biologically grounded, geometry‑aware hierarchical graph representation that combines DSSP‑based secondary‑structure segmentation with provable sparsity; (2) a theoretically justified two‑stage GNN architecture that preserves maximal expressiveness across scales; and (3) extensive experimental evidence that the framework improves both predictive performance and computational efficiency across diverse protein learning tasks. Together, these position the proposed approach as a practical and theoretically sound pathway toward scalable, high‑fidelity protein representation learning.
