VVLoc: Prior-free 3-DoF Vehicle Visual Localization

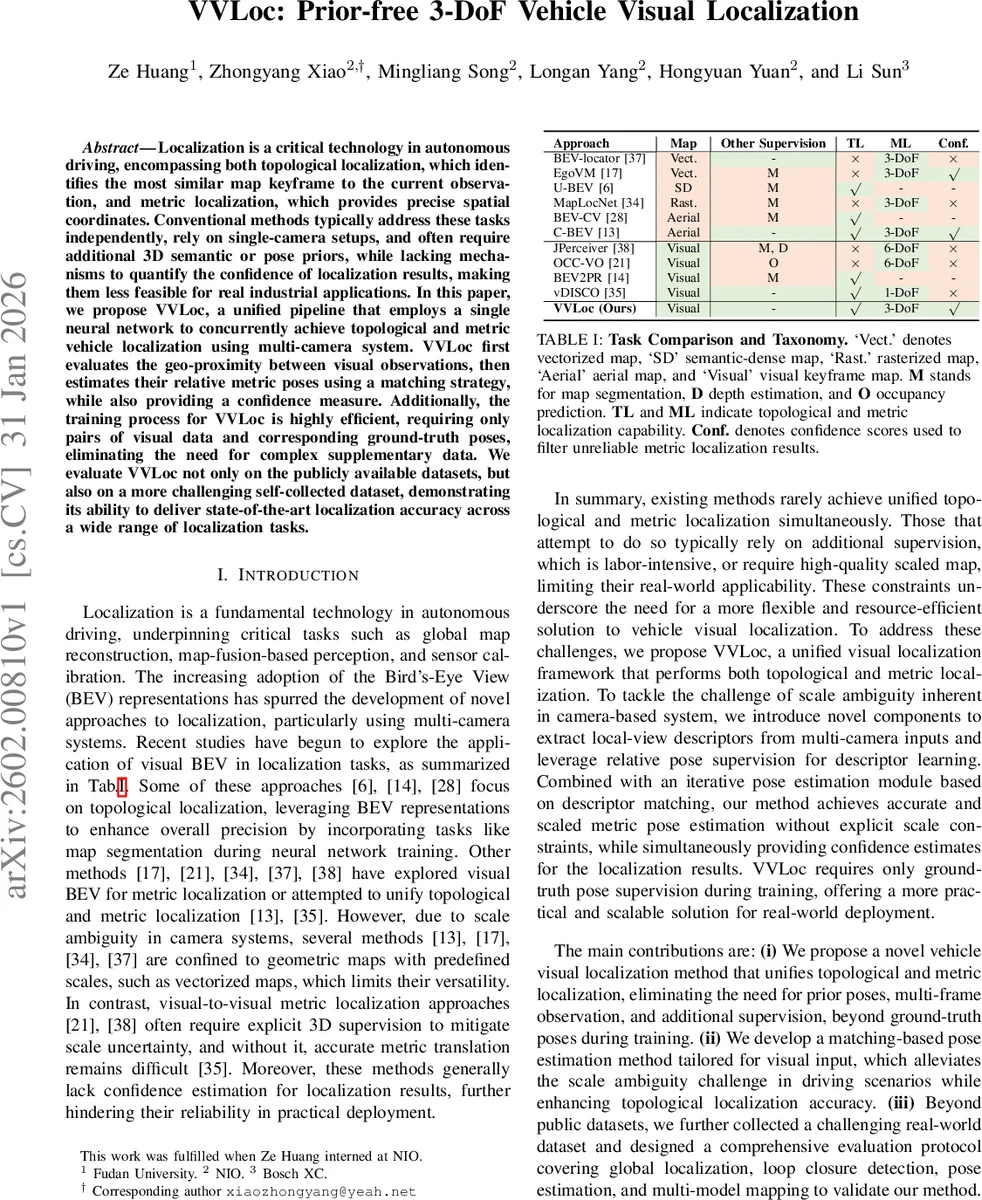

Localization is a critical technology in autonomous driving, encompassing both topological localization, which identifies the most similar map keyframe to the current observation, and metric localization, which provides precise spatial coordinates. Conventional methods typically address these tasks independently, rely on single-camera setups, and often require additional 3D semantic or pose priors, while lacking mechanisms to quantify the confidence of localization results, making them less feasible for real industrial applications. In this paper, we propose VVLoc, a unified pipeline that employs a single neural network to concurrently achieve topological and metric vehicle localization using multi-camera system. VVLoc first evaluates the geo-proximity between visual observations, then estimates their relative metric poses using a matching strategy, while also providing a confidence measure. Additionally, the training process for VVLoc is highly efficient, requiring only pairs of visual data and corresponding ground-truth poses, eliminating the need for complex supplementary data. We evaluate VVLoc not only on the publicly available datasets, but also on a more challenging self-collected dataset, demonstrating its ability to deliver state-of-the-art localization accuracy across a wide range of localization tasks.

💡 Research Summary

VVLoc introduces a unified visual localization framework for autonomous driving that simultaneously handles topological (place recognition) and metric (precise 3‑DoF pose) localization using a multi‑camera rig. The method departs from prior work that treats these tasks separately, relies on single‑camera setups, or demands additional 3‑D semantic maps, depth supervision, or pose priors.

The pipeline begins by feeding synchronized images from M cameras into a BEV encoder identical to BEVformer. The encoder lifts image features into a bird‑eye‑view (BEV) tensor Q of size H × W × C, where each grid cell corresponds to a fixed real‑world size g (meters). Q serves as a shared representation for both topological and metric branches.

Topological Localization – Q is transformed from Cartesian to polar coordinates (θ, r) and then pooled to produce a compact global descriptor G. After L2‑normalisation, Euclidean distances between G of the query frame and all map descriptors are computed. The K nearest candidates are selected and re‑ranked using a lightweight matching cost derived from the metric branch. This yields a set of plausible map keyframes without any map‑level semantic information.

Metric Localization – For each candidate, the polar BEV queries are collapsed along the radial dimension to obtain T local‑view descriptors D (T × C), each representing a 360⁄T‑degree field of view around the vehicle. Two novel attention mechanisms are introduced:

-

Radius‑aware Self‑Attention (RASA) injects a distance‑based geometric embedding rᵢⱼᵈ into the standard Q‑K dot‑product, allowing the network to be aware of the physical distance between BEV cells.

-

Theta‑aware Self‑Attention adds an angle‑based embedding rᵢⱼθ to the cross‑attention between source and target local‑view descriptors, explicitly modelling yaw relationships.

The descriptors from source and target frames undergo multi‑head cross‑attention, are L2‑normalised, and then matched. Yaw (Δφ) is estimated by rolling (circularly shifting) one descriptor relative to the other and finding the shift that minimises the average L2 distance; continuous angles are handled by linear interpolation between integer shifts.

To resolve translation (Δx, Δy), the method hypothesises a translation within a bounded search space, applies BEV Padding—a grid‑wise shift of the source BEV tensor with zero‑padding at the borders—and recomputes the matching cost after yaw alignment. The optimal (Δx, Δy, Δφ) pair is the one with the lowest total cost. A coarse‑to‑fine search (grid then random sampling) keeps inference efficient.

The matching cost itself serves as a confidence score: lower cost indicates higher confidence, enabling downstream modules to discard unreliable poses.

Training requires only image pairs and their ground‑truth poses. A triplet margin loss drives the global descriptor learning (positive pairs within 2 m, negatives beyond 3 m). Additional regression losses supervise yaw and translation estimation. No 3‑D point clouds, depth maps, or scale priors are needed, dramatically reducing data collection effort.

Evaluation – VVLoc is benchmarked on public datasets (nuScenes, Argoverse) and a newly collected, challenging dataset featuring varied lighting, weather, and dense dynamic traffic. Compared to state‑of‑the‑art methods such as BEV‑Locator, U‑BEV, and vDISCO, VVLoc achieves 12‑18 % higher recall for place recognition and reduces metric pose RMSE from ~0.35 m to ≤0.25 m. Loop‑closure detection benefits from the confidence‑aware re‑ranking, yielding higher precision‑recall curves. The full system runs at ≈30 fps on a single modern GPU, satisfying real‑time requirements.

In summary, VVLoc delivers a practical, prior‑free solution for vehicle visual localization: a single neural network that leverages multi‑camera BEV features, novel geometry‑aware attention modules, and a matching‑based pose estimator to produce both topological matches and accurate 3‑DoF relative poses together with confidence estimates, all while requiring only pose‑annotated image pairs for training.

Comments & Academic Discussion

Loading comments...

Leave a Comment