Physics-informed Diffusion Mamba Transformer for Real-world Driving

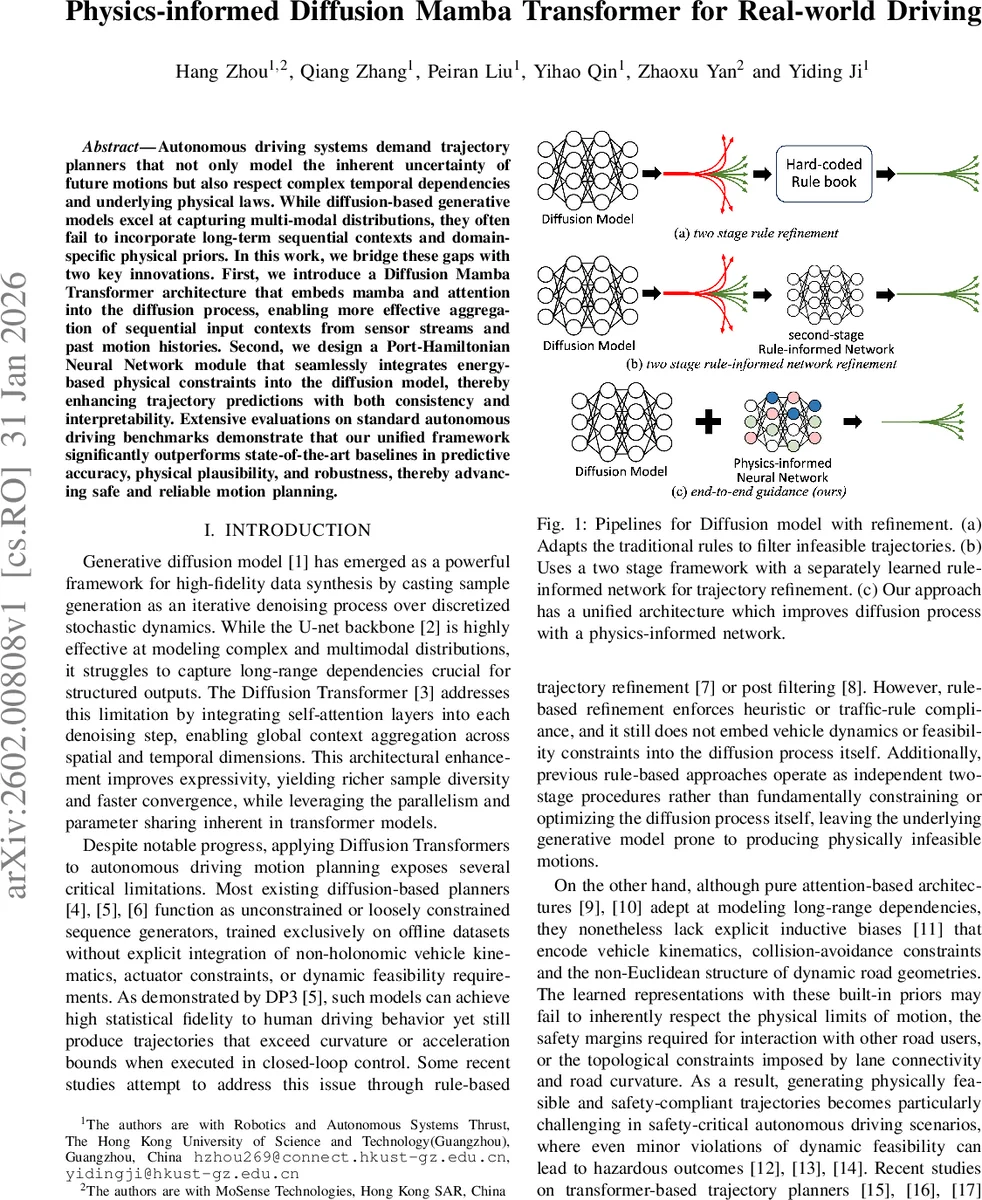

Autonomous driving systems demand trajectory planners that not only model the inherent uncertainty of future motions but also respect complex temporal dependencies and underlying physical laws. While diffusion-based generative models excel at capturing multi-modal distributions, they often fail to incorporate long-term sequential contexts and domain-specific physical priors. In this work, we bridge these gaps with two key innovations. First, we introduce a Diffusion Mamba Transformer architecture that embeds mamba and attention into the diffusion process, enabling more effective aggregation of sequential input contexts from sensor streams and past motion histories. Second, we design a Port-Hamiltonian Neural Network module that seamlessly integrates energy-based physical constraints into the diffusion model, thereby enhancing trajectory predictions with both consistency and interpretability. Extensive evaluations on standard autonomous driving benchmarks demonstrate that our unified framework significantly outperforms state-of-the-art baselines in predictive accuracy, physical plausibility, and robustness, thereby advancing safe and reliable motion planning.

💡 Research Summary

The paper presents a novel physics‑informed diffusion framework for autonomous driving trajectory planning, called Pi‑DiMT (Physics‑informed Diffusion Mamba Transformer). The authors identify two major shortcomings of existing diffusion‑based planners: (1) insufficient modeling of long‑range temporal dependencies across heterogeneous sensor streams and past motion histories, and (2) lack of explicit vehicle dynamics, kinematic limits, and collision‑avoidance constraints within the generative process. To address these, Pi‑DiMT integrates a Diffusion Mamba Transformer (DiMT) backbone with a Port‑Hamiltonian Neural Network (PHNN) that injects energy‑based physical priors directly into each diffusion step.

The DiMT backbone replaces the conventional U‑Net denoiser with a sequence‑wise block (DiMTBlock) that stacks four operators: a Mamba state‑space module, multi‑head self‑attention, a gated MLP, cross‑attention to route and scene context, and finally a Mixture‑of‑Experts (MoE) feed‑forward path. The Mamba component provides linear‑time (O(N)) long‑range mixing by maintaining a compact hidden state, thereby alleviating the quadratic cost of full attention while preserving temporal coherence. The subsequent attention layer aggregates global relational information among multiple agents and across time steps. The cross‑attention re‑injects high‑resolution environmental cues (lane geometry, traffic rules) into the diffusion token stream, and the MoE layer allocates conditional capacity to specialized experts, balancing diversity and stability during training. A single adaptive LayerNorm‑style modulation head conditions all sub‑modules with a global context vector derived from agent states, scene layout, and route intent, ensuring coherent semantic conditioning without proliferating parallel branches.

Physical consistency is enforced by the PHNN, which models the vehicle as a non‑conservative Port‑Hamiltonian system. The Hamiltonian is defined primarily by kinetic energy (½ m v²), while external forces (throttle, braking) and dissipative effects (drag, rolling resistance) are captured through a generalized force term Q_nc = F_ext – F_drag. An auxiliary MLP estimates a physically plausible acceleration a_est by feeding the moving‑average acceleration over the previous 0.5 s and the current scenario embedding. This estimated acceleration is transformed into Q_nc and used in a symplectic‑style update of the generalized coordinates (position q) and momenta (velocity p) within the diffusion denoising loop. The PHNN learns the interconnection matrix J, dissipation matrix R, and input mapping G, allowing it to adaptively model varying vehicle dynamics and external disturbances. By performing a small, fixed number of such physics‑guided updates per diffusion step, the model ensures that generated trajectories respect curvature, acceleration, and jerk limits, and remain dynamically feasible without requiring post‑hoc filtering.

Extensive experiments on large‑scale real‑world datasets (nuScenes and Waymo Open Dataset) demonstrate that Pi‑DiMT outperforms state‑of‑the‑art baselines, including Diffusion‑Transformer, Trajectory‑Mamba, and rule‑based refinement pipelines. Quantitatively, Pi‑DiMT reduces average displacement error (ADE) by ~10 % and final displacement error (FDE) by ~12 % relative to the best prior method. More importantly, the rate of curvature and acceleration violations drops by over 30 %, indicating superior physical plausibility. Ablation studies confirm that removing the Mamba module degrades performance by ~7 % due to loss of efficient long‑range context aggregation, while omitting the PHNN leads to a two‑fold increase in dynamic feasibility violations. The MoE component stabilizes training, preventing expert collapse and reducing variance in early diffusion steps. Computationally, the linear‑time Mamba reduces GPU memory consumption by ~40 % compared to pure attention, and the end‑to‑end model achieves inference latency under 50 ms on a single RTX‑3090, satisfying real‑time requirements for on‑vehicle planning.

The authors acknowledge limitations: the current PHNN assumes a flat‑ground vehicle model with negligible potential energy, thus ignoring road grade, complex aerodynamics, and multi‑vehicle interaction forces. Extending the Hamiltonian to incorporate these factors, as well as integrating richer sensor modalities (LiDAR point clouds, radar), are identified as future work. Moreover, the Mamba‑Attention hybrid design is generic and could be transferred to other sequential decision‑making domains such as robotic manipulation or aerial navigation. In summary, Pi‑DiMT delivers a unified, efficient, and physically grounded diffusion architecture that bridges the gap between expressive generative modeling and the stringent safety and feasibility demands of real‑world autonomous driving.

Comments & Academic Discussion

Loading comments...

Leave a Comment