Any3D-VLA: Enhancing VLA Robustness via Diverse Point Clouds

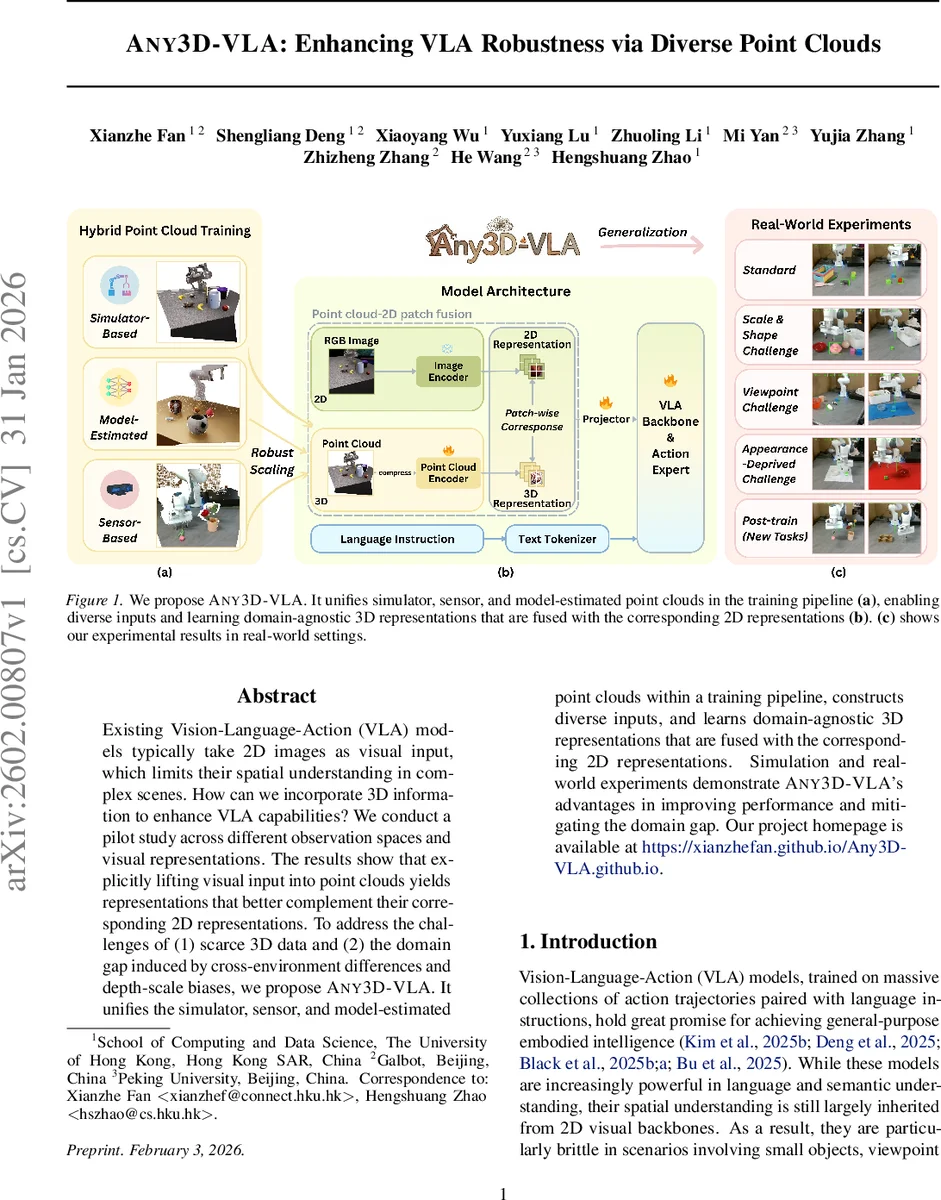

Existing Vision-Language-Action (VLA) models typically take 2D images as visual input, which limits their spatial understanding in complex scenes. How can we incorporate 3D information to enhance VLA capabilities? We conduct a pilot study across different observation spaces and visual representations. The results show that explicitly lifting visual input into point clouds yields representations that better complement their corresponding 2D representations. To address the challenges of (1) scarce 3D data and (2) the domain gap induced by cross-environment differences and depth-scale biases, we propose Any3D-VLA. It unifies the simulator, sensor, and model-estimated point clouds within a training pipeline, constructs diverse inputs, and learns domain-agnostic 3D representations that are fused with the corresponding 2D representations. Simulation and real-world experiments demonstrate Any3D-VLA’s advantages in improving performance and mitigating the domain gap. Our project homepage is available at https://xianzhefan.github.io/Any3D-VLA.github.io.

💡 Research Summary

Any3D‑VLA tackles a fundamental limitation of current Vision‑Language‑Action (VLA) systems: their reliance on 2‑D images for visual input, which hampers fine‑grained spatial reasoning in cluttered, occluded, or viewpoint‑varying environments. The authors first conduct a systematic pilot study comparing five observation‑space designs—2D‑only, implicit‑depth, implicit‑3D (using a spatial foundation model), RGB‑D image‑plane, and point‑cloud‑2D‑patch fusion. The study reveals that merely adding depth as an extra image channel or using reconstruction‑based priors (e.g., VGGT) yields modest gains, whereas explicitly lifting RGB‑D data into a point‑cloud representation and fusing it with 2‑D patch tokens delivers the most substantial improvement, especially in single‑trial success rates.

Building on this insight, Any3D‑VLA proposes a unified training pipeline that incorporates three distinct sources of point clouds: (1) high‑quality metric depth from a simulator, (2) noisy depth from consumer‑grade sensors (e.g., RealSense D435), and (3) depth estimated by state‑of‑the‑art monocular depth models (UniDepthV2, Depth Anything 3). All depth maps are back‑projected using camera intrinsics to obtain 3‑D points, which are then spatially compressed via a grid‑sampling scheme similar to Sonata, reducing computational load while preserving geometric fidelity.

The compressed point cloud is processed by a pre‑trained point‑cloud encoder (Concerto), producing per‑point embeddings that are aligned with 2‑D patch embeddings from a DINOv2 + SigLIP image encoder. The two modalities are concatenated along the channel dimension and projected into the language model’s embedding space. This “point‑cloud‑2D‑patch fusion” module is a plug‑in that can be attached to any existing VLA backbone without altering its core architecture.

To address the scarcity of 3‑D data, the authors synthesize a large‑scale RGB‑D pre‑training dataset in Isaac Sim. The dataset covers 290 object categories, 10,680 instances, and 95 distinct test scenes, with randomized lighting, materials, backgrounds, and camera extrinsics. Both ground‑truth depth and model‑estimated depth are stored, enabling the hybrid training strategy that exposes the model to diverse depth quality and scale distributions.

Experiments span simulation, real‑world robot trials, and standard benchmarks (LIBERO, CALVIN). In simulation, Any3D‑VLA achieves a 61.1 % single‑trial success rate, outperforming the best baseline (56.8 %). In zero‑shot real‑world tests, it reaches 62.5 % overall accuracy—an improvement of 29.2 % over the strongest prior method—demonstrating robustness to sensor noise and depth‑scale bias. Fine‑tuning with a small amount of real demonstration data pushes overall accuracy to 93.3 %. Across all settings, the model shows strong sim‑to‑real generalization, maintaining performance even when depth inputs are degraded.

In summary, Any3D‑VLA introduces a practical, modular approach to inject precise 3‑D geometric cues into VLA systems. By unifying simulator, sensor, and model‑estimated point clouds, employing a large‑scale RGB‑D pre‑training corpus, and fusing native 3‑D structure with 2‑D visual tokens, it substantially improves spatial reasoning, reduces domain gaps, and paves the way for more reliable embodied AI in real‑world manipulation tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment