Latent Shadows: The Gaussian-Discrete Duality in Masked Diffusion

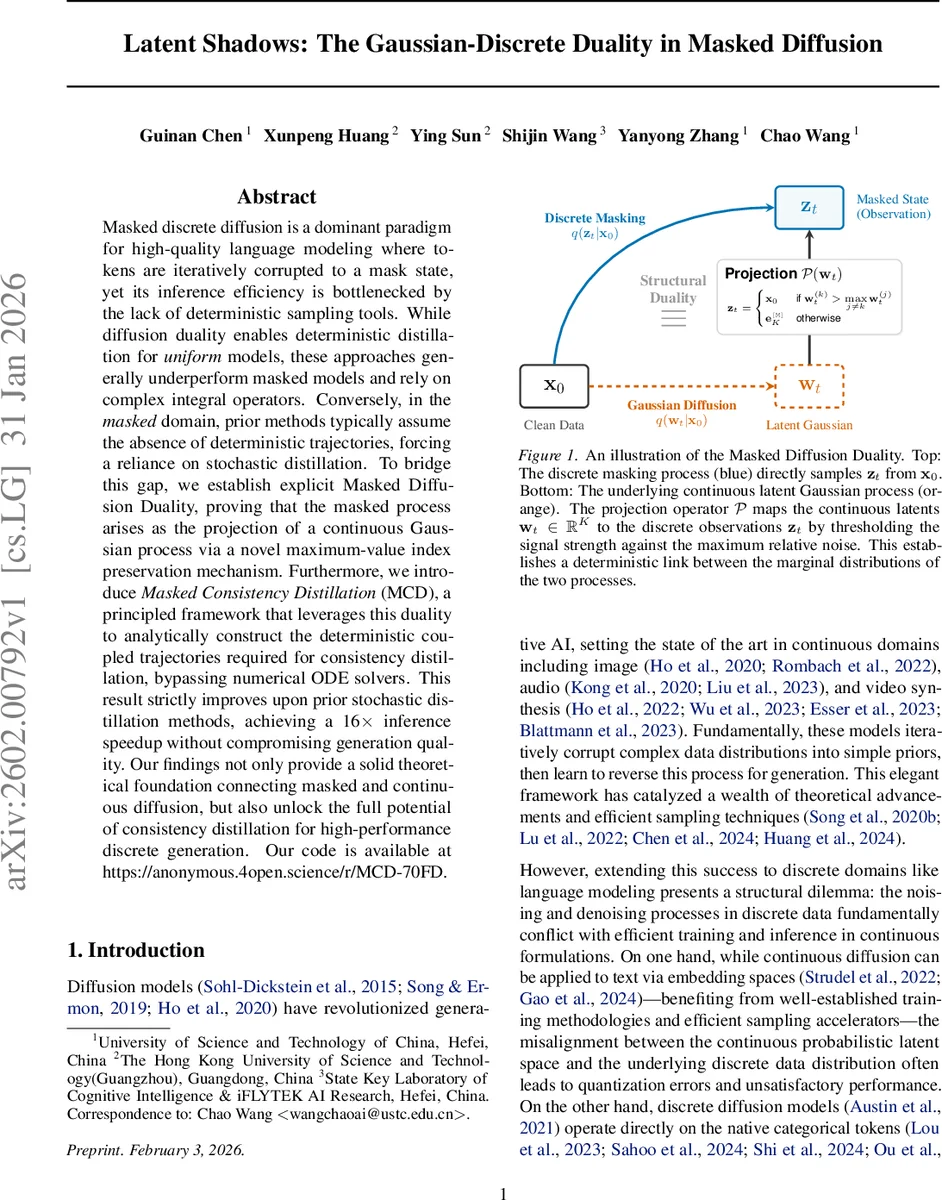

Masked discrete diffusion is a dominant paradigm for high-quality language modeling where tokens are iteratively corrupted to a mask state, yet its inference efficiency is bottlenecked by the lack of deterministic sampling tools. While diffusion duality enables deterministic distillation for uniform models, these approaches generally underperform masked models and rely on complex integral operators. Conversely, in the masked domain, prior methods typically assume the absence of deterministic trajectories, forcing a reliance on stochastic distillation. To bridge this gap, we establish explicit Masked Diffusion Duality, proving that the masked process arises as the projection of a continuous Gaussian process via a novel maximum-value index preservation mechanism. Furthermore, we introduce Masked Consistency Distillation (MCD), a principled framework that leverages this duality to analytically construct the deterministic coupled trajectories required for consistency distillation, bypassing numerical ODE solvers. This result strictly improves upon prior stochastic distillation methods, achieving a 16$\times$ inference speedup without compromising generation quality. Our findings not only provide a solid theoretical foundation connecting masked and continuous diffusion, but also unlock the full potential of consistency distillation for high-performance discrete generation. Our code is available at https://anonymous.4open.science/r/MCD-70FD.

💡 Research Summary

The paper tackles the long‑standing efficiency gap between masked discrete diffusion models, which dominate modern language modeling, and continuous diffusion models that benefit from deterministic sampling and consistency distillation. While continuous diffusion enjoys a probability‑flow ODE that enables few‑step generation, masked diffusion is inherently a stochastic absorbing‑state process, preventing the construction of deterministic teacher‑student trajectory pairs required for consistency distillation.

To bridge this gap, the authors introduce Masked Diffusion Duality, a rigorous theoretical framework that shows the masked process is not an independent stochastic jump process but the deterministic projection of a latent continuous Gaussian diffusion. They define a latent variable wₜ ∈ ℝᴷ as a VP‑scaled mixture of the clean one‑hot token x₀ and a fixed Gaussian noise vector ε: wₜ = α̃ₜ x₀ + σ̃ₜ ε. A novel projection operator P maps wₜ to the discrete observation zₜ by preserving the original token only when its coordinate dominates all others; otherwise the state collapses to the mask token. By calibrating the product α̃ₜσ̃ₜ to the inverse CDF of the noise‑difference distribution, they prove (Lemma 3.1) that the marginal probability P(zₜ = x₀) exactly matches the prescribed masking schedule γₜ. This establishes static duality between the continuous and discrete marginals.

Beyond static equivalence, the authors demonstrate dynamic duality: because the continuous trajectory follows a deterministic DDIM path (wₜ = α̃ₜ x₀ + σ̃ₜ ε), the projected discrete sequence {zₜ} is also deterministic, fully determined by the pair (x₀, ε). Proposition 3.2 shows that any two time points s < t produce discrete states that are merely different “views” of the same underlying noise, guaranteeing they lie on a single consistent trajectory. This insight resolves the structural gap that previously forced stochastic approximations.

However, using the full K‑dimensional ε is computationally burdensome. The authors uncover a crucial simplification: the projection condition depends only on the scalar margin u = εₖ − max_{j≠k} εⱼ, i.e., the difference between the signal coordinate and the strongest competing noise coordinate. By locking this scalar trajectory (Scalar Trajectory Locking), they can keep u fixed across all time steps, effectively preserving the entire high‑dimensional noise without storing it. This reduces the problem to a simple scalar threshold, eliminating the need for numerical ODE solvers.

Leveraging this deterministic coupling, they propose Masked Consistency Distillation (MCD). A teacher model (the EMA of the student) generates a less‑noisy state zₛ from a current state zₜ by analytically applying the DDIM update using the locked scalar u. The student is trained to predict the same clean token from both (zₜ, t) and (zₛ, s) via the standard consistency loss L_CD = E

Comments & Academic Discussion

Loading comments...

Leave a Comment